Mahathir Monjur

mmAnomaly: Leveraging Visual Context for Robust Anomaly Detection in the Non-Visual World with mmWave Radar

Apr 01, 2026

Abstract:mmWave radar enables human sensing in non-visual scenarios (e.g., through clothing or certain types of walls) where traditional cameras fail due to occlusion or privacy limitations. However, robust anomaly detection with mmWave remains challenging, as signal reflections are influenced by material properties, clutter, and multipath interference, producing complex, non-Gaussian distortions. Existing methods lack contextual awareness and misclassify benign signal variations as anomalies. We present mmAnomaly, a multi-modal anomaly detection framework that combines mmWave radar with RGBD input to incorporate visual context. Our system extracts semantic cues, such as scene geometry and material properties, using a fast ResNet-based classifier, and uses a conditional latent diffusion model to synthesize the expected mmWave spectrum for the given visual context. A dual-input comparison module then identifies spatial deviations between real and generated spectra to localize anomalies. We evaluate mmAnomaly on two multi-modal datasets across three applications: concealed weapon localization, through-wall intruder localization, and through-wall fall localization. The system achieves up to 94% F1 score and sub-meter localization error, demonstrating robust generalization across clothing, occlusions, and cluttered environments. These results establish mmAnomaly as an accurate and interpretable framework for context-aware anomaly detection in mmWave sensing.
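The idea of comparing a measured spectrum against an expected one can be illustrated with a very small sketch. This is not the paper's dual-input comparison module, which is a learned component; it substitutes a plain thresholded residual. The function name `localize_anomaly`, the 2D grid layout, and the `threshold` parameter are all assumptions made for illustration.

```python
from typing import List, Optional, Tuple

# Assumed layout: a 2D grid of magnitudes, e.g. range bins x angle bins.
Spectrum = List[List[float]]


def localize_anomaly(
    measured: Spectrum, expected: Spectrum, threshold: float
) -> Optional[Tuple[int, int]]:
    """Return (row, col) of the largest absolute deviation above threshold.

    Returns None when no cell deviates by more than `threshold`.
    Hypothetical stand-in for a learned comparison module.
    """
    same_rows = len(measured) == len(expected)
    if not same_rows or any(len(m) != len(e) for m, e in zip(measured, expected)):
        raise ValueError("spectra must have the same shape")

    best_pos: Optional[Tuple[int, int]] = None
    best_dev = threshold
    for r, (m_row, e_row) in enumerate(zip(measured, expected)):
        for c, (m, e) in enumerate(zip(m_row, e_row)):
            dev = abs(m - e)
            if dev > best_dev:  # keep only the strongest deviation
                best_dev = dev
                best_pos = (r, c)
    return best_pos
```

In this sketch the expected spectrum would come from whatever generative model is conditioned on the visual context; the comparison step only needs the two grids to share a shape.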

mmWEAVER: Environment-Specific mmWave Signal Synthesis from a Photo and Activity Description

Dec 10, 2025

Abstract:Realistic signal generation and dataset augmentation are essential for advancing mmWave radar applications such as activity recognition and pose estimation, which rely heavily on diverse, environment-specific signal datasets. However, mmWave signals are inherently complex, sparse, and high-dimensional, making physical simulation computationally expensive. This paper presents mmWeaver, a novel framework that synthesizes realistic, environment-specific complex mmWave signals by modeling them as continuous functions using Implicit Neural Representations (INRs), achieving up to 49-fold compression. mmWeaver incorporates hypernetworks that dynamically generate INR parameters based on environmental context (extracted from RGB-D images) and human motion features (derived from text-to-pose generation via MotionGPT), enabling efficient and adaptive signal synthesis. By conditioning on these semantic and geometric priors, mmWeaver generates diverse I/Q signals at multiple resolutions, preserving phase information critical for downstream tasks such as point cloud estimation and activity classification. Extensive experiments show that mmWeaver achieves a complex SSIM of 0.88 and a PSNR of 35 dB, outperforming existing methods in signal realism while improving activity recognition accuracy by up to 7% and reducing human pose estimation error by up to 15%, all while operating 6-35 times faster than simulation-based approaches.
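To make the INR-plus-hypernetwork idea concrete, here is a toy sketch under strong assumptions. It is not mmWeaver's architecture: the INR is a single sine layer mapping a time coordinate to a complex I/Q value, and the hypernetwork is a plain linear map from a context vector to the INR parameters. The names `hypernetwork` and `inr`, and the parameter packing (frequency, phase, real amplitude, imaginary amplitude), are hypothetical.

```python
import math
from typing import List, Sequence


def hypernetwork(
    context: Sequence[float], weights: Sequence[Sequence[float]]
) -> List[float]:
    """Linear map from a context vector to a flat INR parameter vector.

    Assumption: a real system would use a deep network here.
    """
    return [sum(w * c for w, c in zip(row, context)) for row in weights]


def inr(t: float, params: Sequence[float]) -> complex:
    """Evaluate a one-layer sine INR at continuous coordinate t.

    `params` is read in groups of four:
    (frequency, phase, real amplitude, imaginary amplitude).
    """
    out = 0j
    for k in range(0, len(params), 4):
        freq, phase, re_amp, im_amp = params[k:k + 4]
        out += complex(re_amp, im_amp) * math.sin(freq * t + phase)
    return out


# Because the signal is a function of t, it can be sampled at any resolution.
params = hypernetwork([1.0, 0.0], [[2.0, 0.0], [0.0, 0.0], [1.0, 0.0], [0.5, 0.0]])
coarse = [inr(i / 4, params) for i in range(4)]
fine = [inr(i / 16, params) for i in range(16)]
```

The point of the sketch is the division of labour: the context decides the parameters, and the parameters define a continuous complex-valued signal that keeps both amplitude and phase.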

SpeechQualityLLM: LLM-Based Multimodal Assessment of Speech Quality

Dec 09, 2025

Abstract:Objective speech quality assessment is central to telephony, VoIP, and streaming systems, where large volumes of degraded audio must be monitored and optimized at scale. Classical metrics such as PESQ and POLQA approximate human mean opinion scores (MOS) but require carefully controlled conditions and expensive listening tests, while learning-based models such as NISQA regress MOS and multiple perceptual dimensions from waveforms or spectrograms, achieving high correlation with subjective ratings yet remaining rigid: they do not support interactive, natural-language queries and do not natively provide textual rationales. In this work, we introduce SpeechQualityLLM, a multimodal speech quality question-answering (QA) system that couples an audio encoder with a language model and is trained on the NISQA corpus using template-based question-answer pairs covering overall MOS and four perceptual dimensions (noisiness, coloration, discontinuity, and loudness) in both single-ended (degraded only) and double-ended (degraded plus clean reference) setups. Instead of directly regressing scores, our system is supervised to generate textual answers from which numeric predictions are parsed and evaluated with standard regression and ranking metrics; on held-out NISQA clips, the double-ended model attains a MOS mean absolute error (MAE) of 0.41 with Pearson correlation of 0.86, with competitive performance on dimension-wise tasks. Beyond these quantitative gains, it offers a flexible natural-language interface in which the language model acts as an audio quality expert: practitioners can query arbitrary aspects of degradations, prompt the model to emulate different listener profiles to capture human variability and produce diverse but plausible judgments rather than a single deterministic score, and thereby reduce reliance on large-scale crowdsourced tests and their monetary cost.
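The evaluation step described above, parsing a numeric score out of a generated answer and then computing MAE and Pearson correlation, might look roughly like the following. The answer format, the regular expression, and the function names are assumptions; the paper's actual templates and parser are not given in the abstract.

```python
import math
import re
from typing import Optional, Sequence

# Assumption: the first number in the answer is the predicted score.
_NUMBER = re.compile(r"[-+]?\d+(?:\.\d+)?")


def parse_score(answer: str) -> Optional[float]:
    """Return the first number found in a textual answer, or None."""
    match = _NUMBER.search(answer)
    return float(match.group()) if match else None


def mean_absolute_error(pred: Sequence[float], target: Sequence[float]) -> float:
    """Average absolute difference between predictions and targets."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (std_x * std_y)
```

Taking the first number is fragile: an answer that restates the rating scale before the score would be parsed incorrectly, so a real parser would need to be tied to the answer templates used in training.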

Data Distribution Dynamics in Real-World WiFi-Based Patient Activity Monitoring for Home Healthcare

Feb 03, 2024

Abstract:This paper examines the application of WiFi signals for real-world monitoring of daily activities in home healthcare scenarios. While the state of the art in WiFi-based activity recognition is promising in lab environments, challenges arise in real-world settings due to environmental, subject, and system configuration variables, affecting accuracy and adaptability. The research involved deploying systems in various settings and analyzing data shifts. It aims to guide realistic development of robust, context-aware WiFi sensing systems for elderly care. The findings suggest a shift in WiFi-based activity sensing, bridging the gap between academic research and practical applications, enhancing life quality through technology.

SoundSieve: Seconds-Long Audio Event Recognition on Intermittently-Powered Systems

May 25, 2023

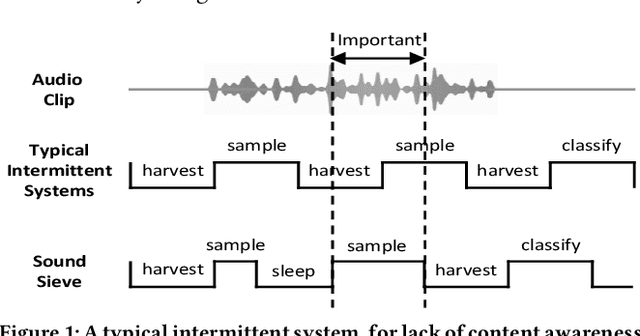

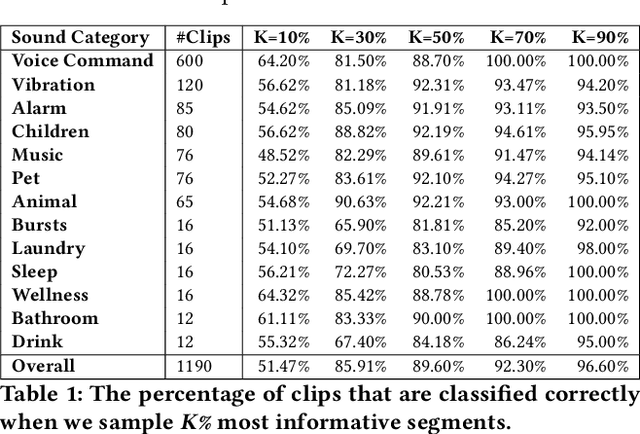

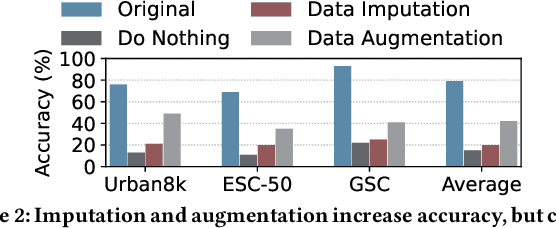

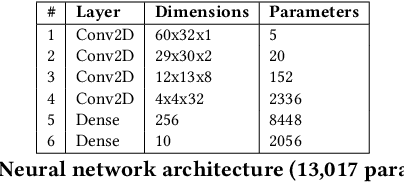

Abstract:A fundamental problem of every intermittently-powered sensing system is that signals acquired by these systems over a longer period of time are also intermittent. As a consequence, these systems fail to capture parts of a longer-duration event that spans multiple charge-discharge cycles of the capacitor that stores the harvested energy. From an application's perspective, this is viewed as sporadic bursts of missing values in the input data -- which may not be recoverable using statistical interpolation or imputation methods. In this paper, we study this problem in the light of an intermittent audio classification system and design an end-to-end system -- SoundSieve -- that is capable of accurately classifying audio events that span multiple on-off cycles of the intermittent system. SoundSieve employs an offline audio analyzer that learns to identify and predict important segments of an audio clip that must be sampled to ensure accurate classification of the audio. At runtime, SoundSieve employs a lightweight, energy- and content-aware audio sampler that decides when the system should wake up to capture the next chunk of audio; and a lightweight, intermittence-aware audio classifier that performs imputation and on-device inference. Through extensive evaluations using popular audio datasets as well as real systems, we demonstrate that SoundSieve yields 5%--30% more accurate inference results than the state-of-the-art.

CarFi: Rider Localization Using Wi-Fi CSI

Dec 21, 2022

Abstract:With the rise of ride-hailing services, people increasingly rely on shared mobility drivers (e.g., Uber, Lyft) for pick-up and transportation. However, drivers and riders have difficulty finding each other in urban areas as GPS signals get blocked by skyscrapers, in crowded environments (e.g., in stadiums, airports, and bars), at night, and in bad weather. This wastes their time, creates a bad user experience, and causes more CO2 emissions due to idle driving. In this work, we explore the potential of Wi-Fi to help drivers determine the street side of the riders. Our proposed system, CarFi, uses Wi-Fi CSI from two antennas placed inside a moving vehicle and a data-driven technique to determine the street side of the rider. By collecting real-world data in realistic and challenging settings, with the signal blocked by other people and parked cars, we see that CarFi is 95.44% accurate in rider-side determination in both line of sight (LoS) and non-line of sight (nLoS) conditions, and can run on an embedded GPU in real-time.
