Lucija Gosak

Chapter 7 Review of Data-Driven Generative AI Models for Knowledge Extraction from Scientific Literature in Healthcare

Nov 18, 2024

Abstract: This review examines the development of abstractive NLP-based text summarization approaches and compares them to existing techniques for extractive summarization. A brief history of text summarization from the 1950s to the introduction of pre-trained language models such as Bidirectional Encoder Representations from Transformers (BERT) and Generative Pre-trained Transformers (GPT) is presented. In total, 60 studies were identified in PubMed and Web of Science, of which 29 were excluded and 24 were read and evaluated for eligibility, resulting in the use of seven studies for further analysis. This chapter also includes a section with examples, including a comparison between GPT-3 and state-of-the-art GPT-4 solutions in scientific text summarization. Natural language processing has not yet reached its full potential in the generation of brief textual summaries. As there are acknowledged concerns that must be addressed, we can expect a gradual introduction of such models in practice.

Petal-X: Human-Centered Visual Explanations to Improve Cardiovascular Risk Communication

Jun 26, 2024

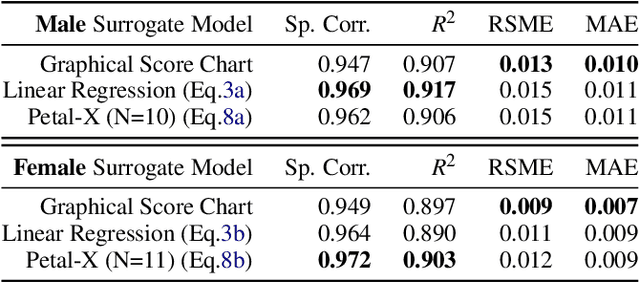

Abstract: Cardiovascular diseases (CVDs), the leading cause of death worldwide, can be prevented in most cases through behavioral interventions. Therefore, effective communication of CVD risk and projected risk reduction by risk factor modification plays a crucial role in reducing CVD risk at the individual level. However, despite interest in refining risk estimation with improved prediction models such as SCORE2, the guidelines for presenting these risk estimations in clinical practice have remained essentially unchanged in the last few years, with graphical score charts (GSCs) continuing to be one of the prevalent systems. This work describes the design and implementation of Petal-X, a novel tool to support clinician-patient shared decision-making by explaining the CVD risk contributions of different factors and facilitating what-if analysis. Petal-X relies on a novel visualization, Petal Product Plots, and a tailor-made global surrogate model of SCORE2, whose fidelity is comparable to that of the GSCs used in clinical practice. We evaluated Petal-X against GSCs in a controlled experiment with 88 healthcare students, all but one with experience with chronic patients. The results show that Petal-X outperforms GSCs in critical tasks, such as comparing the contribution to the patient's 10-year CVD risk of each modifiable risk factor, without a significant loss of perceived transparency, trust, or intent to use. Our study provides an innovative approach to the visualization and explanation of risk in clinical practice that, due to its model-agnostic nature, could continue to support next-generation artificial intelligence risk assessment models.

EXMOS: Explanatory Model Steering Through Multifaceted Explanations and Data Configurations

Feb 01, 2024

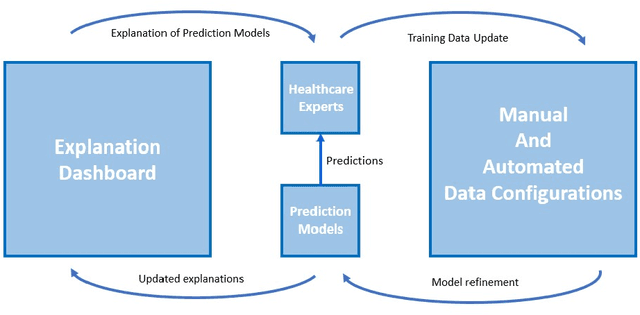

Abstract: Explanations in interactive machine-learning systems facilitate debugging and improving prediction models. However, the effectiveness of various global model-centric and data-centric explanations in aiding domain experts to detect and resolve potential data issues for model improvement remains unexplored. This research investigates the influence of data-centric and model-centric global explanations in systems that support healthcare experts in optimising models through automated and manual data configurations. We conducted quantitative (n=70) and qualitative (n=30) studies with healthcare experts to explore the impact of different explanations on trust, understandability and model improvement. Our results reveal the insufficiency of global model-centric explanations for guiding users during data configuration. Although data-centric explanations enhanced understanding of post-configuration system changes, a hybrid fusion of both explanation types demonstrated the highest effectiveness. Based on our study results, we also present design implications for effective explanation-driven interactive machine-learning systems.

* This is a pre-print version for early release only. Please see the published conference version from ACM CHI 2024 for the latest version of the paper.

Lessons Learned from EXMOS User Studies: A Technical Report Summarizing Key Takeaways from User Studies Conducted to Evaluate The EXMOS Platform

Oct 03, 2023

Abstract: In the realm of interactive machine-learning systems, the provision of explanations serves as a vital aid in the processes of debugging and enhancing prediction models. However, the extent to which various global model-centric and data-centric explanations can effectively assist domain experts in detecting and resolving potential data-related issues for the purpose of model improvement has remained largely unexplored. In this technical report, we summarise the key findings of our two user studies. Our research involved a comprehensive examination of the impact of global explanations rooted in both data-centric and model-centric perspectives within systems designed to support healthcare experts in optimising machine learning models through both automated and manual data configurations. To empirically investigate these dynamics, we conducted two user studies, comprising quantitative analysis involving a sample size of 70 healthcare experts and qualitative assessments involving 30 healthcare experts. These studies were aimed at illuminating the influence of different explanation types on three key dimensions: trust, understandability, and model improvement. Results show that global model-centric explanations alone are insufficient for effectively guiding users during the intricate process of data configuration. In contrast, data-centric explanations exhibited their potential by enhancing the understanding of system changes that occur post-configuration. However, a combination of both showed the highest level of efficacy for fostering trust, improving understandability, and facilitating model enhancement among healthcare experts. We also present essential implications for developing interactive machine-learning systems driven by explanations. These insights can guide the creation of more effective systems that empower domain experts to harness the full potential of machine learning.

Improving Primary Healthcare Workflow Using Extreme Summarization of Scientific Literature Based on Generative AI

Jul 24, 2023

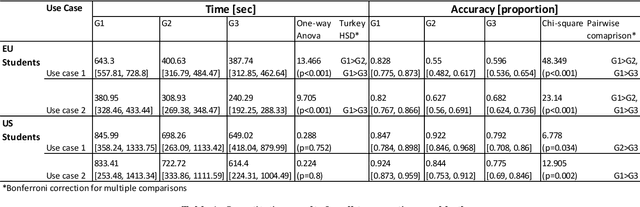

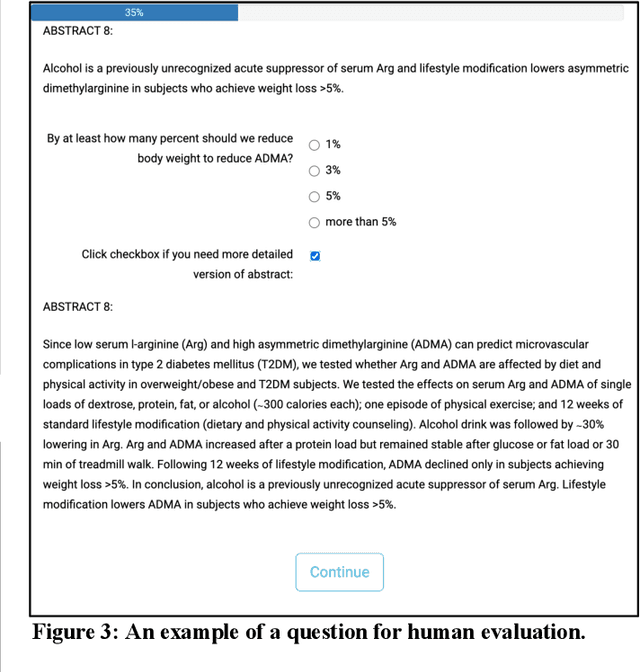

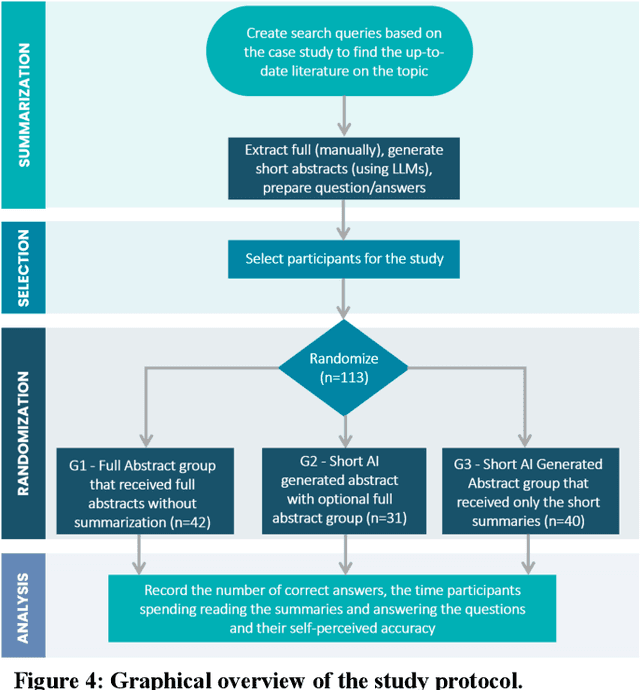

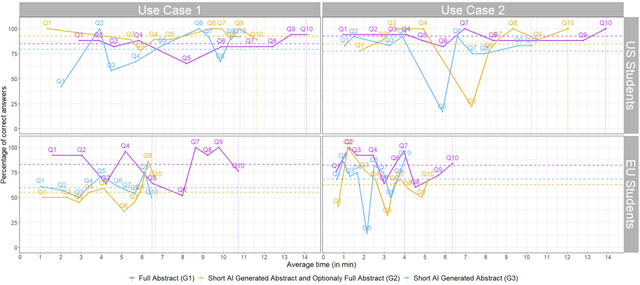

Abstract: Primary care professionals struggle to keep up to date with the latest scientific literature critical in guiding evidence-based practice related to their daily work. To help address this problem, we employed generative artificial intelligence techniques based on large-scale language models to summarize abstracts of scientific papers. Our objective is to investigate the potential of generative artificial intelligence in diminishing the cognitive load experienced by practitioners, thus exploring its ability to alleviate mental effort and burden. The study participants were provided with two use cases related to preventive care and behavior change, simulating a search for new scientific literature. The study included 113 university students from Slovenia and the United States randomized into three distinct study groups. The first group was assigned to the full abstracts. The second group was assigned to the short abstracts generated by AI. The third group had the option to select a full abstract in addition to the AI-generated short summary. Each use case study included ten retrieved abstracts. Our research demonstrates that the use of generative AI for literature review is efficient and effective. The time needed to answer questions related to the content of abstracts was significantly lower in groups two and three compared to the first group using full abstracts. The results, however, also show significantly lower accuracy in extracted knowledge in cases where the full abstract was not available. Such a disruptive technology could significantly reduce the time required for healthcare professionals to keep up with the most recent scientific literature; nevertheless, further developments are needed to help them comprehend the knowledge accurately.
