Lipai Huang

R2RAG-Flood: A reasoning-reinforced training-free retrieval augmentation generation framework for flood damage nowcasting

Feb 10, 2026Abstract:R2RAG-Flood is a reasoning-reinforced, training-free retrieval-augmented generation framework for post-storm property damage nowcasting. Building on an existing supervised tabular predictor, the framework constructs a reasoning-centric knowledge base composed of labeled tabular records, where each sample includes structured predictors, a compact natural language text-mode summary, and a model-generated reasoning trajectory. During inference, R2RAG-Flood issues context-augmented prompts that retrieve and condition on relevant reasoning trajectories from nearby geospatial neighbors and canonical class prototypes, enabling the large language model backbone to emulate and adapt prior reasoning rather than learn new task-specific parameters. Predictions follow a two-stage procedure that first determines property damage occurrence and then refines severity within a three-level Property Damage Extent categorization, with a conditional downgrade step to correct over-predicted severity. In a case study of Harris County, Texas at the 12-digit Hydrologic Unit Code scale, the supervised tabular baseline trained directly on structured predictors achieves 0.714 overall accuracy and 0.859 damage class accuracy for medium and high damage classes. Across seven large language model backbones, R2RAG-Flood attains 0.613 to 0.668 overall accuracy and 0.757 to 0.896 damage class accuracy, approaching the supervised baseline while additionally producing a structured rationale for each prediction. Using a severity-per-cost efficiency metric derived from API pricing and GPU instance costs, lightweight R2RAG-Flood variants demonstrate substantially higher efficiency than both the supervised tabular baseline and larger language models, while requiring no task-specific training or fine-tuning.

DisastIR: A Comprehensive Information Retrieval Benchmark for Disaster Management

May 20, 2025

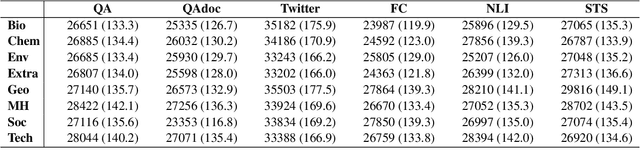

Abstract:Effective disaster management requires timely access to accurate and contextually relevant information. Existing Information Retrieval (IR) benchmarks, however, focus primarily on general or specialized domains, such as medicine or finance, neglecting the unique linguistic complexity and diverse information needs encountered in disaster management scenarios. To bridge this gap, we introduce DisastIR, the first comprehensive IR evaluation benchmark specifically tailored for disaster management. DisastIR comprises 9,600 diverse user queries and more than 1.3 million labeled query-passage pairs, covering 48 distinct retrieval tasks derived from six search intents and eight general disaster categories that include 301 specific event types. Our evaluations of 30 state-of-the-art retrieval models demonstrate significant performance variances across tasks, with no single model excelling universally. Furthermore, comparative analyses reveal significant performance gaps between general-domain and disaster management-specific tasks, highlighting the necessity of disaster management-specific benchmarks for guiding IR model selection to support effective decision-making in disaster management scenarios. All source codes and DisastIR are available at https://github.com/KaiYin97/Disaster_IR.

High-Resolution Flood Probability Mapping Using Generative Machine Learning with Large-Scale Synthetic Precipitation and Inundation Data

Sep 20, 2024

Abstract:High-resolution flood probability maps are essential for addressing the limitations of existing flood risk assessment approaches but are often limited by the availability of historical event data. Also, producing simulated data needed for creating probabilistic flood maps using physics-based models involves significant computation and time effort inhibiting the feasibility. To address this gap, this study introduces Flood-Precip GAN (Flood-Precipitation Generative Adversarial Network), a novel methodology that leverages generative machine learning to simulate large-scale synthetic inundation data to produce probabilistic flood maps. With a focus on Harris County, Texas, Flood-Precip GAN begins with training a cell-wise depth estimator using a limited number of physics-based model-generated precipitation-flood events. This model, which emphasizes precipitation-based features, outperforms universal models. Subsequently, a Generative Adversarial Network (GAN) with constraints is employed to conditionally generate synthetic precipitation records. Strategic thresholds are established to filter these records, ensuring close alignment with true precipitation patterns. For each cell, synthetic events are smoothed using a K-nearest neighbors algorithm and processed through the depth estimator to derive synthetic depth distributions. By iterating this procedure and after generating 10,000 synthetic precipitation-flood events, we construct flood probability maps in various formats, considering different inundation depths. Validation through similarity and correlation metrics confirms the fidelity of the synthetic depth distributions relative to true data. Flood-Precip GAN provides a scalable solution for generating synthetic flood depth data needed to create high-resolution flood probability maps, significantly enhancing flood preparedness and mitigation efforts.

FloodDamageCast: Building Flood Damage Nowcasting with Machine Learning and Data Augmentation

May 23, 2024

Abstract:Near-real time estimation of damage to buildings and infrastructure, referred to as damage nowcasting in this study, is crucial for empowering emergency responders to make informed decisions regarding evacuation orders and infrastructure repair priorities during disaster response and recovery. Here, we introduce FloodDamageCast, a machine learning framework tailored for property flood damage nowcasting. The framework leverages heterogeneous data to predict residential flood damage at a resolution of 500 meters by 500 meters within Harris County, Texas, during the 2017 Hurricane Harvey. To deal with data imbalance, FloodDamageCast incorporates a generative adversarial networks-based data augmentation coupled with an efficient machine learning model. The results demonstrate the model's ability to identify high-damage spatial areas that would be overlooked by baseline models. Insights gleaned from flood damage nowcasting can assist emergency responders to more efficiently identify repair needs, allocate resources, and streamline on-the-ground inspections, thereby saving both time and effort.

MaxFloodCast: Ensemble Machine Learning Model for Predicting Peak Inundation Depth And Decoding Influencing Features

Aug 11, 2023Abstract:Timely, accurate, and reliable information is essential for decision-makers, emergency managers, and infrastructure operators during flood events. This study demonstrates a proposed machine learning model, MaxFloodCast, trained on physics-based hydrodynamic simulations in Harris County, offers efficient and interpretable flood inundation depth predictions. Achieving an average R-squared of 0.949 and a Root Mean Square Error of 0.61 ft on unseen data, it proves reliable in forecasting peak flood inundation depths. Validated against Hurricane Harvey and Storm Imelda, MaxFloodCast shows the potential in supporting near-time floodplain management and emergency operations. The model's interpretability aids decision-makers in offering critical information to inform flood mitigation strategies, to prioritize areas with critical facilities and to examine how rainfall in other watersheds influences flood exposure in one area. The MaxFloodCast model enables accurate and interpretable inundation depth predictions while significantly reducing computational time, thereby supporting emergency response efforts and flood risk management more effectively.

FairMobi-Net: A Fairness-aware Deep Learning Model for Urban Mobility Flow Generation

Jul 20, 2023

Abstract:Generating realistic human flows across regions is essential for our understanding of urban structures and population activity patterns, enabling important applications in the fields of urban planning and management. However, a notable shortcoming of most existing mobility generation methodologies is neglect of prediction fairness, which can result in underestimation of mobility flows across regions with vulnerable population groups, potentially resulting in inequitable resource distribution and infrastructure development. To overcome this limitation, our study presents a novel, fairness-aware deep learning model, FairMobi-Net, for inter-region human flow prediction. The FairMobi-Net model uniquely incorporates fairness loss into the loss function and employs a hybrid approach, merging binary classification and numerical regression techniques for human flow prediction. We validate the FairMobi-Net model using comprehensive human mobility datasets from four U.S. cities, predicting human flow at the census-tract level. Our findings reveal that the FairMobi-Net model outperforms state-of-the-art models (such as the DeepGravity model) in producing more accurate and equitable human flow predictions across a variety of region pairs, regardless of regional income differences. The model maintains a high degree of accuracy consistently across diverse regions, addressing the previous fairness concern. Further analysis of feature importance elucidates the impact of physical distances and road network structures on human flows across regions. With fairness as its touchstone, the model and results provide researchers and practitioners across the fields of urban sciences, transportation engineering, and computing with an effective tool for accurate generation of human mobility flows across regions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge