Cheng-Chun Lee

ML4EJ: Decoding the Role of Urban Features in Shaping Environmental Injustice Using Interpretable Machine Learning

Oct 03, 2023

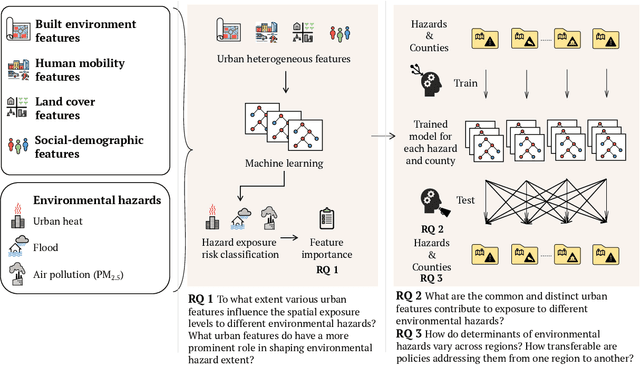

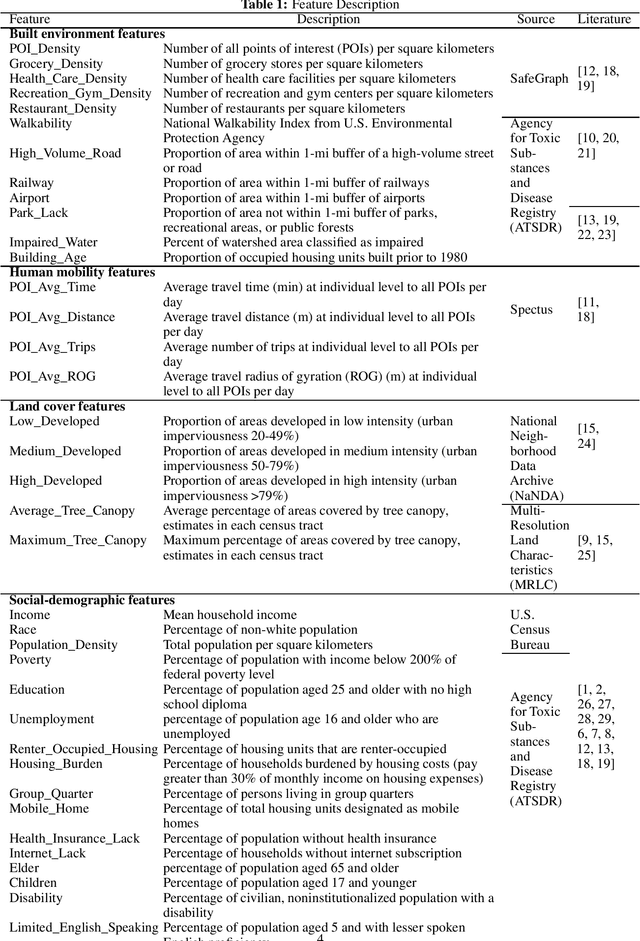

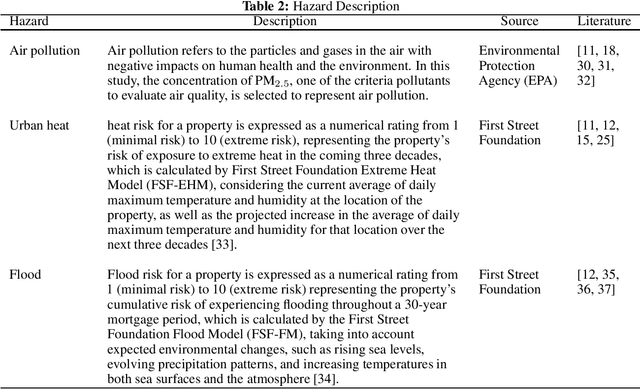

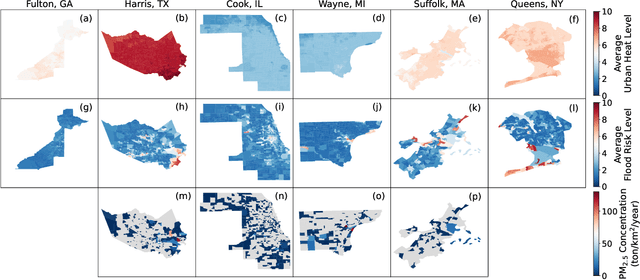

Abstract: Understanding the key factors shaping environmental hazard exposures and their associated environmental injustice issues is vital for formulating equitable policy measures. Traditional perspectives on environmental injustice have primarily focused on the socioeconomic dimensions, often overlooking the influence of heterogeneous urban characteristics. This limited view may obstruct a comprehensive understanding of the complex nature of environmental justice and its relationship with urban design features. To address this gap, this study creates an interpretable machine learning model to examine the effects of various urban features and their non-linear interactions on the exposure disparities of three primary hazards: air pollution, urban heat, and flooding. The analysis trains and tests models with data from six metropolitan counties in the United States using Random Forest and XGBoost. Model performance is used to measure the extent to which variations of urban features shape disparities in environmental hazard levels. In addition, the analysis of feature importance reveals features related to social-demographic characteristics as the most prominent urban features that shape hazard extent. Features related to infrastructure distribution and land cover are relatively important for urban heat and air pollution exposure, respectively. Moreover, we evaluate the models' transferability across different regions and hazards. The results highlight limited transferability, underscoring the intricate differences among hazards and regions and the way in which urban features shape hazard exposures. The insights gleaned from this study offer fresh perspectives on the relationship among urban features and their interplay with environmental hazard exposure disparities, informing the development of more integrated urban design policies to enhance social equity and address environmental injustice issues.

MaxFloodCast: Ensemble Machine Learning Model for Predicting Peak Inundation Depth And Decoding Influencing Features

Aug 11, 2023

Abstract: Timely, accurate, and reliable information is essential for decision-makers, emergency managers, and infrastructure operators during flood events. This study demonstrates that a proposed machine learning model, MaxFloodCast, trained on physics-based hydrodynamic simulations in Harris County, offers efficient and interpretable flood inundation depth predictions. Achieving an average R-squared of 0.949 and a Root Mean Square Error of 0.61 ft on unseen data, it proves reliable in forecasting peak flood inundation depths. Validated against Hurricane Harvey and Storm Imelda, MaxFloodCast shows potential for supporting near-time floodplain management and emergency operations. The model's interpretability aids decision-makers by offering critical information to inform flood mitigation strategies, prioritize areas with critical facilities, and examine how rainfall in other watersheds influences flood exposure in one area. The MaxFloodCast model enables accurate and interpretable inundation depth predictions while significantly reducing computational time, thereby supporting emergency response efforts and flood risk management more effectively.
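For readers unfamiliar with the two accuracy metrics quoted in this abstract, the following is a minimal sketch of how R-squared and Root Mean Square Error are conventionally computed. The function names and the depth values are hypothetical and chosen only for illustration; they are not taken from the MaxFloodCast study.

```python
import math


def rmse(observed, predicted):
    """Root Mean Square Error, in the same units as the inputs (e.g. ft)."""
    squared_errors = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_observed = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_observed) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical peak inundation depths in feet (illustrative values only).
simulated_depths = [1.0, 2.0, 3.0, 4.0]
predicted_depths = [1.5, 2.0, 2.5, 4.0]

example_rmse = rmse(simulated_depths, predicted_depths)
example_r2 = r_squared(simulated_depths, predicted_depths)
print(f"RMSE = {example_rmse:.3f} ft, R-squared = {example_r2:.3f}")
```

An R-squared close to 1 means the predictions explain most of the variance in the reference depths, while RMSE reports the typical error magnitude in the original units.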

ELEV-VISION: Automated Lowest Floor Elevation Estimation from Segmenting Street View Images

Jun 05, 2023

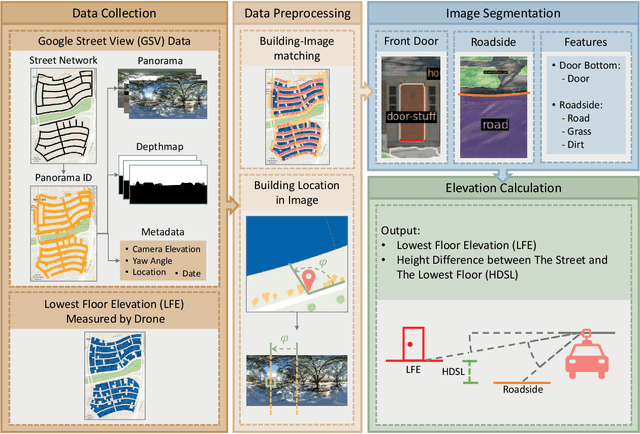

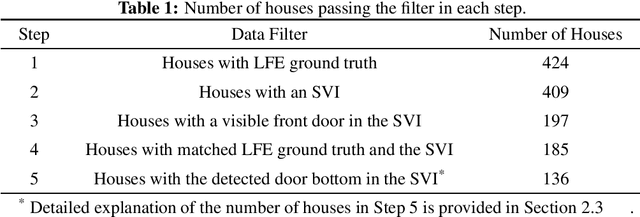

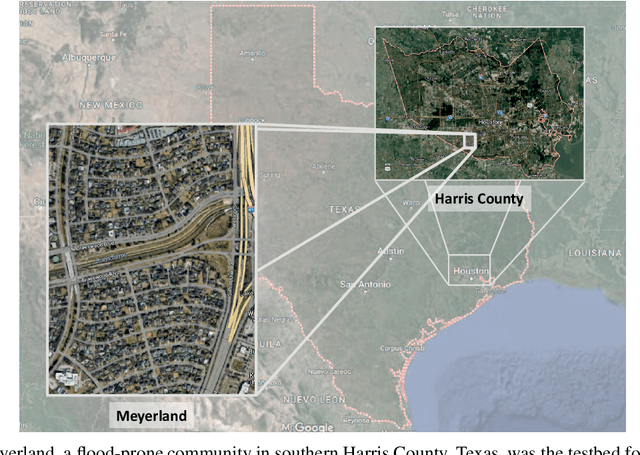

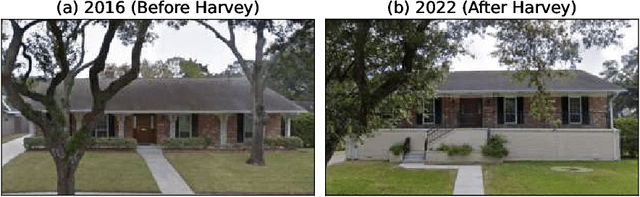

Abstract: We propose an automated lowest floor elevation (LFE) estimation algorithm based on computer vision techniques to leverage the latent information in street view images. Flood depth-damage models use a combination of LFE and flood depth for determining flood risk and extent of damage to properties. We used image segmentation for detecting door bottoms and roadside edges from Google Street View images. The characteristic of equirectangular projection with constant spacing representation of horizontal and vertical angles allows extraction of the pitch angle from the camera to the door bottom. The depth from the camera to the door bottom was obtained from the depthmap paired with the Google Street View image. LFEs were calculated from the pitch angle and the depth. The testbed for application of the proposed method is Meyerland (Harris County, Texas). The results show that the proposed method achieved a mean absolute error of 0.190 m (1.18%) in estimating LFE. The height difference between the street and the lowest floor (HDSL) was estimated to provide information for flood damage estimation. The proposed automatic LFE estimation algorithm using Street View images and image segmentation provides a rapid and cost-effective method for LFE estimation compared with surveys using total station theodolite and unmanned aerial systems. By obtaining more accurate and up-to-date LFE data using the proposed method, city planners, emergency planners and insurance companies could make a more precise estimation of flood damage.
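The geometry described in this abstract can be sketched roughly as follows. This is an illustrative approximation and not the authors' implementation: it assumes the panorama spans +90 to -90 degrees of pitch linearly from the top row to the bottom row, that the depth value is the straight-line distance from the camera to the detected point, and that the camera elevation is known. The function names and all numeric values are hypothetical.

```python
import math


def pitch_from_row(row, image_height):
    """Pitch angle (radians) of a pixel row in an equirectangular panorama.

    Assumes row 0 maps to +90 degrees and row image_height to -90 degrees,
    so equal row spacing corresponds to equal angular spacing.
    """
    return (0.5 - row / image_height) * math.pi


def point_elevation(camera_elevation_m, depth_m, pitch_rad):
    """Elevation of a point seen at a given pitch and straight-line depth.

    A negative pitch (below the horizon) yields a point lower than the camera.
    """
    return camera_elevation_m + depth_m * math.sin(pitch_rad)


# Hypothetical inputs: image size, detected pixel rows, depths, camera elevation.
IMAGE_HEIGHT = 1800
CAMERA_ELEVATION_M = 17.0

door_pitch = pitch_from_row(1000, IMAGE_HEIGHT)   # door bottom row
road_pitch = pitch_from_row(1100, IMAGE_HEIGHT)   # roadside edge row

lfe = point_elevation(CAMERA_ELEVATION_M, 8.0, door_pitch)
street = point_elevation(CAMERA_ELEVATION_M, 6.0, road_pitch)
hdsl = lfe - street  # height difference between street and lowest floor

print(f"LFE = {lfe:.3f} m, street = {street:.3f} m, HDSL = {hdsl:.3f} m")
```

Under these assumptions, the constant angular spacing of the projection is what lets a segmentation mask's pixel row be converted directly into a pitch angle without any camera calibration step.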

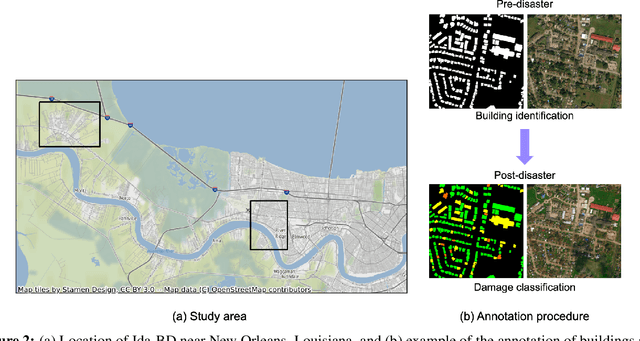

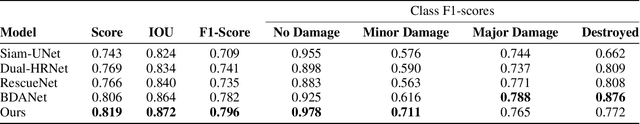

DAHiTrA: Damage Assessment Using a Novel Hierarchical Transformer Architecture

Aug 03, 2022

Abstract: This paper presents DAHiTrA, a novel deep-learning model with hierarchical transformers to classify building damage based on satellite images in the aftermath of hurricanes. An automated building damage assessment provides critical information for decision making and resource allocation for rapid emergency response. Satellite imagery provides real-time, high-coverage information and offers opportunities to inform large-scale post-disaster building damage assessment. In addition, deep-learning methods have been shown to be promising in classifying building damage. In this work, a novel transformer-based network is proposed for assessing building damage. This network leverages hierarchical spatial features of multiple resolutions and captures temporal difference in the feature domain after applying a transformer encoder on the spatial features. The proposed network achieves state-of-the-art performance when tested on a large-scale disaster damage dataset (xBD) for building localization and damage classification, as well as on the LEVIR-CD dataset for change detection tasks. In addition, we introduce a new high-resolution satellite imagery dataset, Ida-BD (related to Hurricane Ida in Louisiana in 2021), for domain adaptation to further evaluate the capability of the model to be applied to newly damaged areas with scarce data. The domain adaptation results indicate that the proposed model can be adapted to a new event with only limited fine-tuning. Hence, the proposed model advances the current state of the art through better performance and domain adaptation. Also, Ida-BD provides a higher-resolution annotated dataset for future studies in this field.
