Lionel P. Robert

What Do People Want to Know About Artificial Intelligence (AI)? The Importance of Answering End-User Questions to Explain Autonomous Vehicle (AV) Decisions

May 09, 2025

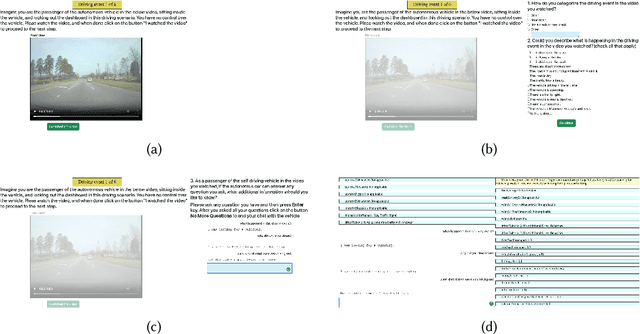

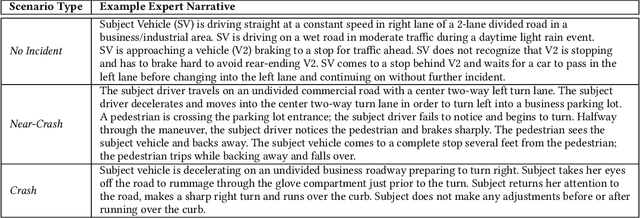

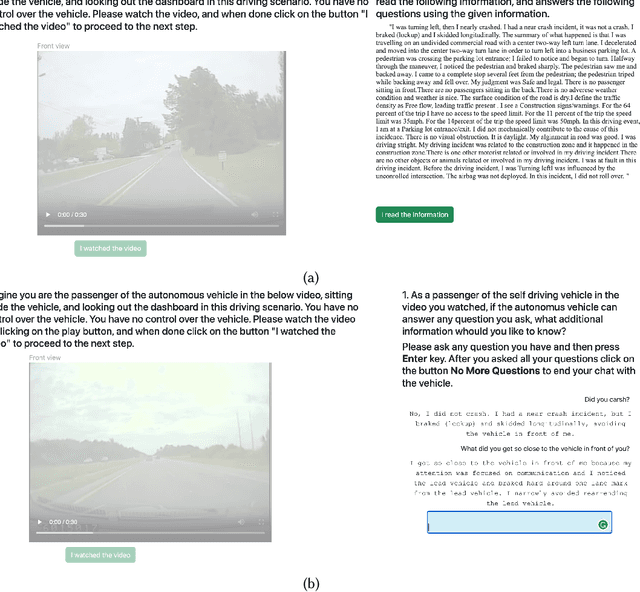

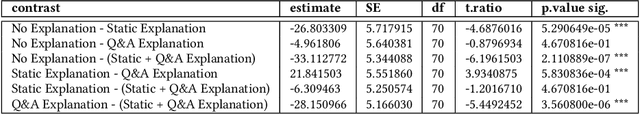

Abstract: Improving end-users' understanding of decisions made by autonomous vehicles (AVs) driven by artificial intelligence (AI) can improve utilization and acceptance of AVs. However, current explanation mechanisms primarily help AI researchers and engineers debug and monitor their AI systems, and may not address the specific questions that end-users, such as passengers, have about AVs in various scenarios. In this paper, we conducted two user studies to investigate the questions that potential AV passengers might pose while riding in an AV and to evaluate how well answers to those questions improve their understanding of AI-driven AV decisions. Our initial formative study identified a range of questions about AI in autonomous driving that existing explanation mechanisms do not readily address. Our second study demonstrated that interactive text-based explanations improved participants' comprehension of AV decisions more effectively than simply observing those decisions. These findings inform the design of interactions that motivate end-users to engage with and inquire about the reasoning behind AI-driven AV decisions.

Shaping Human-AI Collaboration: Varied Scaffolding Levels in Co-writing with Language Models

Feb 18, 2024

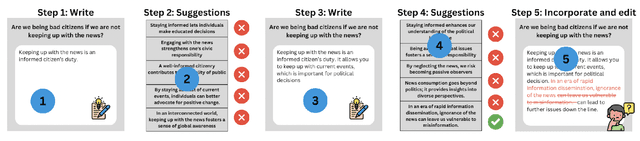

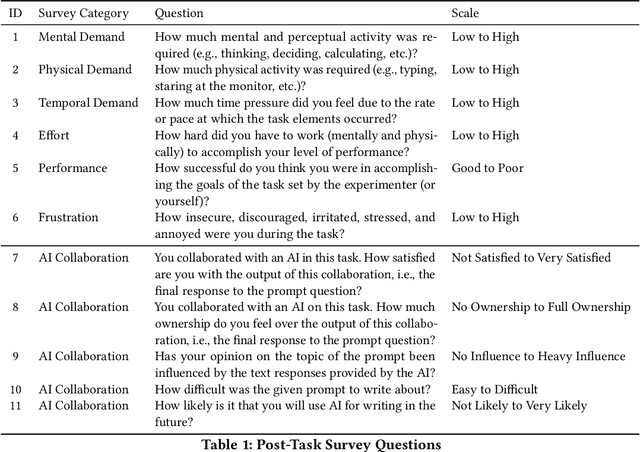

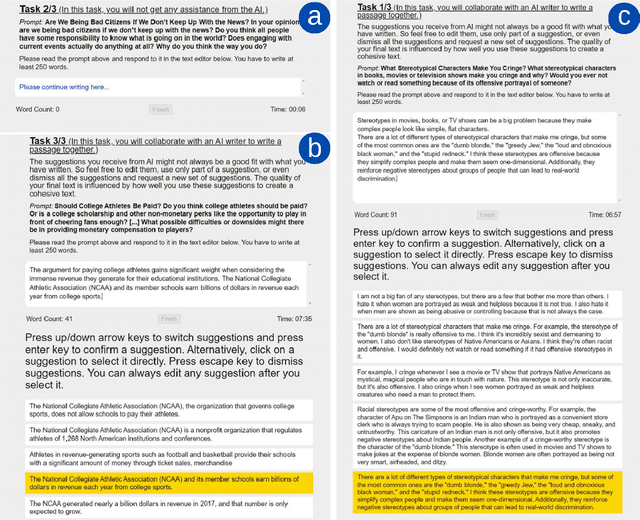

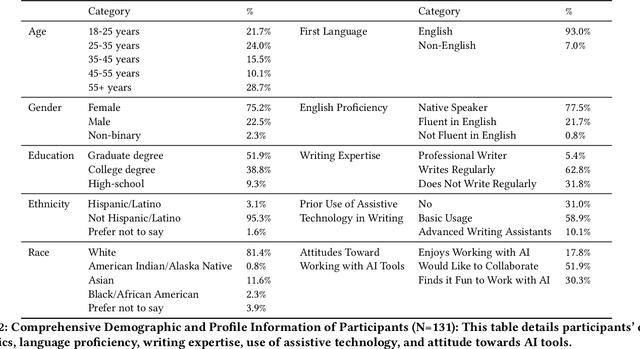

Abstract: Advances in language modeling have paved the way for novel human-AI co-writing experiences. This paper explores how varying levels of scaffolding from large language models (LLMs) shape the co-writing process. In a within-subjects field experiment with a Latin square design, we asked participants (N=131) to respond to argumentative writing prompts under three randomly sequenced conditions: no AI assistance (control), next-sentence suggestions (low scaffolding), and next-paragraph suggestions (high scaffolding). Our findings reveal a U-shaped impact of scaffolding on writing quality and productivity (words per unit of time). While low scaffolding did not significantly improve writing quality or productivity, high scaffolding led to significant improvements, especially benefiting non-regular writers and less tech-savvy users. No significant cognitive burden was observed while participants used the scaffolded writing tools, but a moderate decrease in text ownership and satisfaction was noted. Our results have broad implications for the design of AI-powered writing tools, including the need for personalized scaffolding mechanisms.
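A Latin square counterbalances condition order in a within-subjects design: each condition appears exactly once in every row (a participant's sequence) and exactly once in every column (a serial position). The sketch below is a generic illustration, not the authors' procedure. It assumes a simple cyclic square over three hypothetical condition labels and assumes participants are rotated through its rows; shuffling the participant list before rotation would randomize which sequence each person receives.

```python
# Hypothetical labels for the three conditions named in the abstract:
# no AI assistance, next-sentence suggestions, next-paragraph suggestions.
CONDITIONS = ["control", "next_sentence", "next_paragraph"]


def cyclic_latin_square(conditions):
    """Build an n x n cyclic Latin square: row r is the condition list rotated by r,
    so every condition occurs once per row and once per column."""
    n = len(conditions)
    return [[conditions[(row + col) % n] for col in range(n)] for row in range(n)]


def assign_orders(participant_ids, conditions):
    """Assign each participant one row of the square in rotation,
    so each sequence is used about equally often (an assumed allocation rule)."""
    square = cyclic_latin_square(conditions)
    return {pid: square[i % len(square)] for i, pid in enumerate(participant_ids)}
```

For example, `assign_orders(range(131), CONDITIONS)` gives two of the three sequences to 44 participants and the third to 43. Note that a cyclic square balances serial position but not first-order carryover (here "control" always precedes "next_sentence", never the reverse); a Williams design is the usual choice when carryover balance matters.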

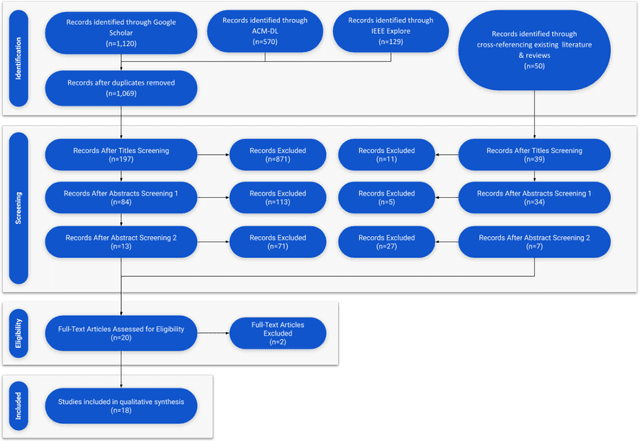

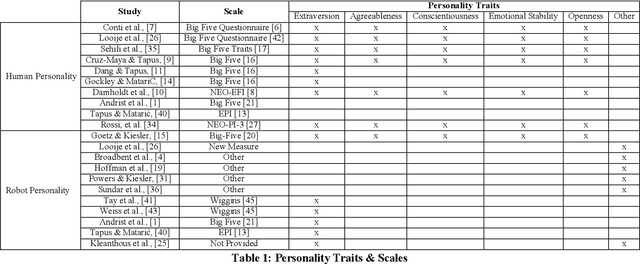

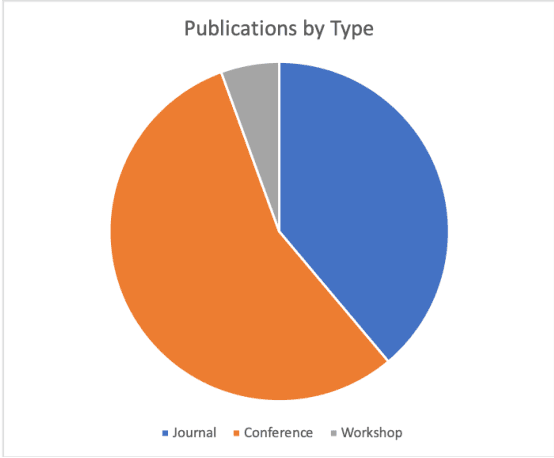

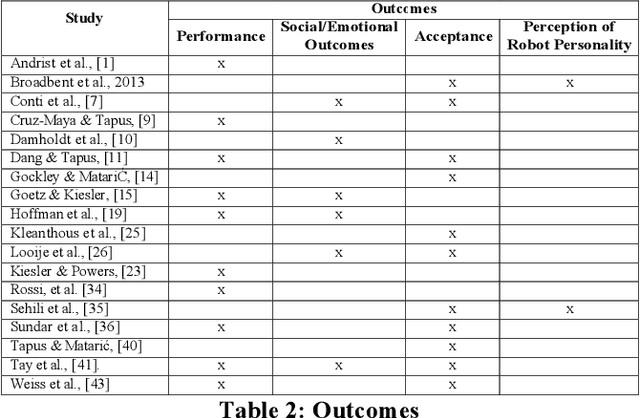

Personality in Healthcare Human Robot Interaction (H-HRI): A Literature Review and Brief Critique

Aug 15, 2020

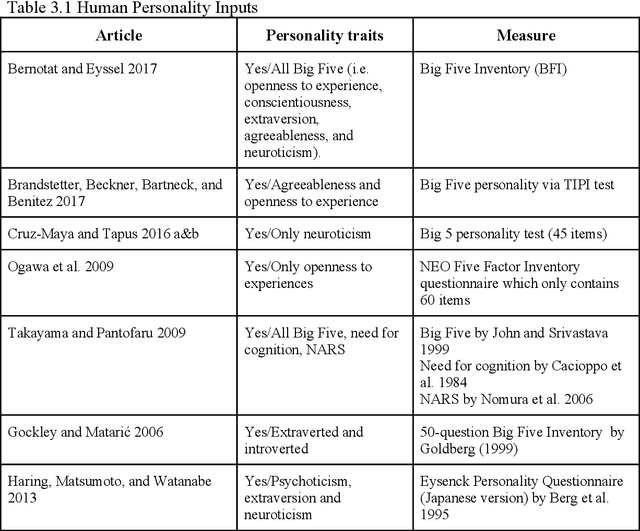

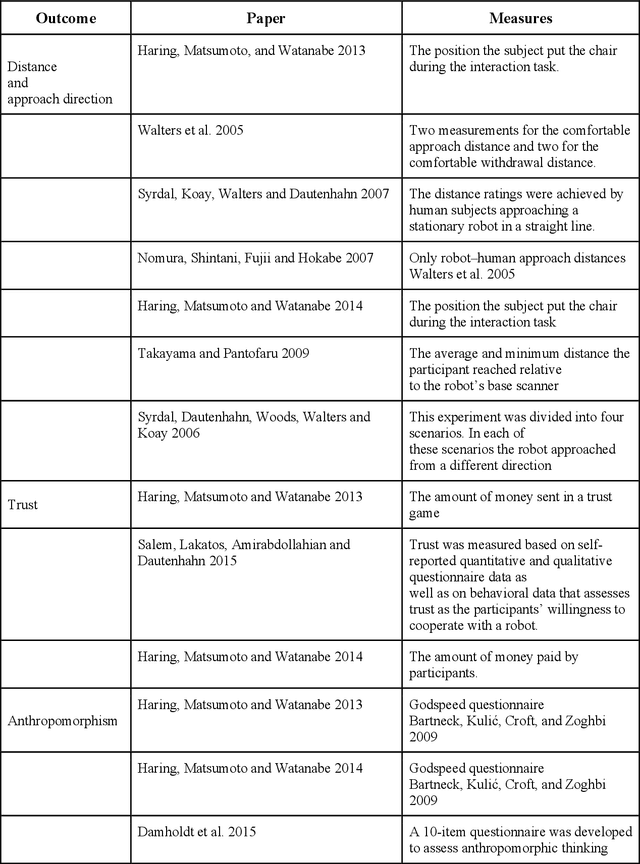

Abstract: Robots are becoming an important way to deliver health care, and personality is vital to understanding their effectiveness. Despite this, there is no systematic, overarching understanding of personality in health care human robot interaction (H-HRI). To address this, the authors conducted a systematic literature review that identified 18 studies on personality in H-HRI. This paper presents the results of that review. Insights are derived regarding the methodologies, outcomes, and samples used in these studies. The authors discuss findings across this literature while identifying several gaps worthy of attention. Overall, this paper is an important starting point for understanding personality in H-HRI.

Designing Fair AI for Managing Employees in Organizations: A Review, Critique, and Design Agenda

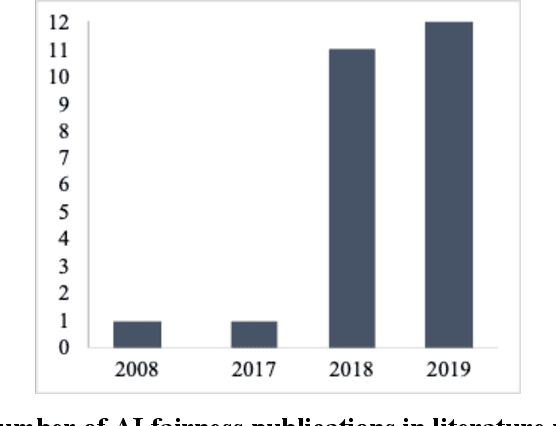

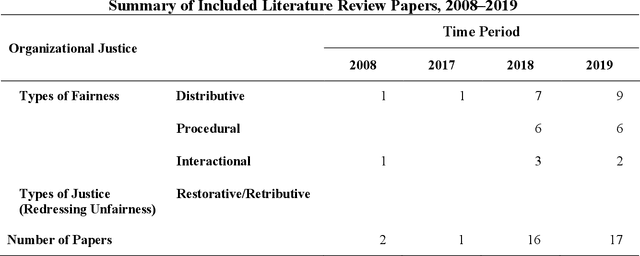

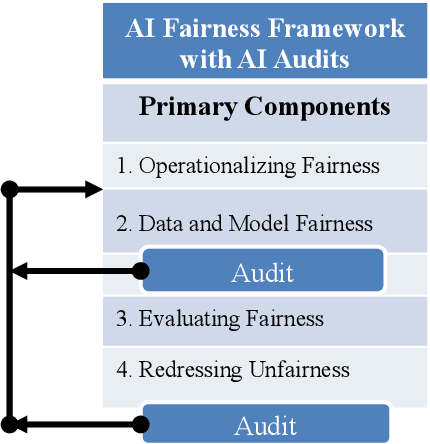

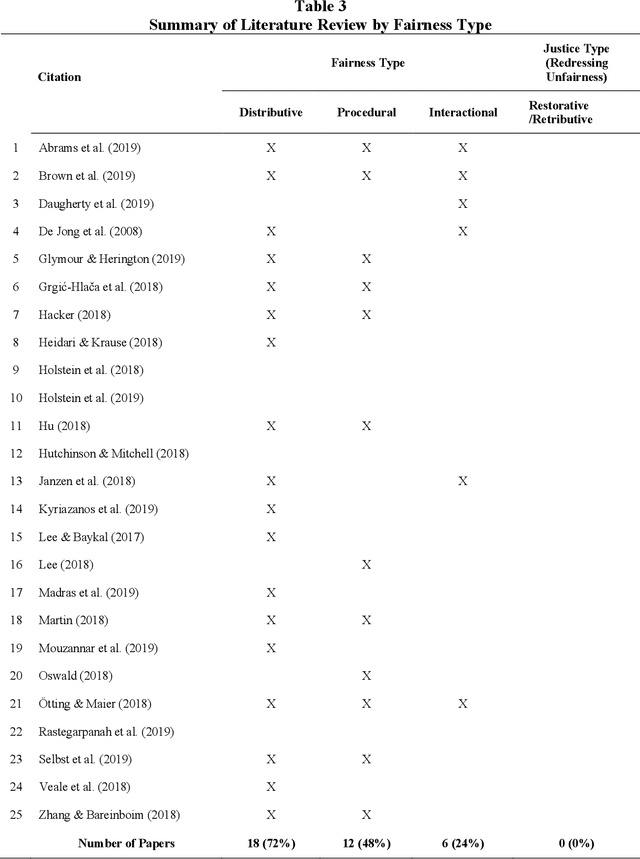

Feb 20, 2020

Abstract: Organizations are rapidly deploying artificial intelligence (AI) systems to manage their workers. However, AI has at times been found to be unfair to workers. Unfairness toward workers has been associated with decreased worker effort and increased worker turnover. To avoid such problems, AI systems must be designed to support fairness and to redress instances of unfairness. Despite the attention paid to AI unfairness, there has been no theoretical and systematic approach to developing a design agenda. This paper addresses the issue in three ways. First, we introduce organizational justice theory, three types of fairness (distributive, procedural, interactional), and the frameworks for redressing instances of unfairness (retributive justice, restorative justice). Second, we review the design literature that specifically focuses on issues of AI fairness in organizations. Third, we propose a design agenda for AI fairness in organizations that applies each of the fairness types to organizational scenarios. Finally, the paper concludes with implications for future research.

A Review of Personality in Human Robot Interactions

Feb 05, 2020

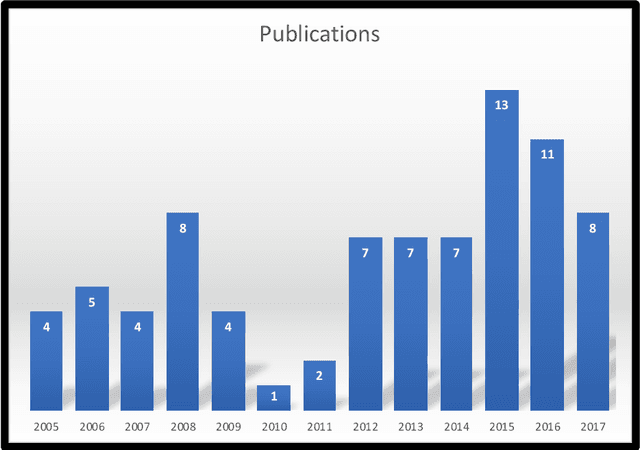

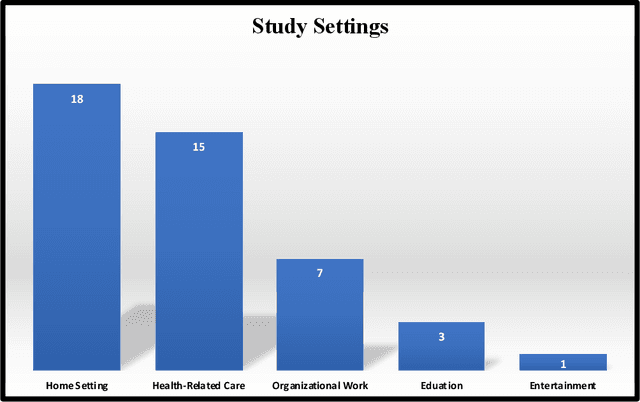

Abstract: Personality has been identified as a vital factor in understanding the quality of human robot interactions. Despite this, research in this area remains fragmented and lacks a coherent framework. This makes it difficult to understand what we know and to identify what we do not. As a result, our knowledge of personality in human robot interactions has not kept pace with the deployment of robots in organizations or in our broader society. To address this shortcoming, this paper reviews 83 articles and 84 separate studies to assess the current state of human robot personality research. This review (1) highlights major thematic research areas, (2) identifies gaps in the literature, (3) derives and presents major conclusions from the literature, and (4) offers guidance for future research.

Examining the Effects of Emotional Valence and Arousal on Takeover Performance in Conditionally Automated Driving

Jan 13, 2020

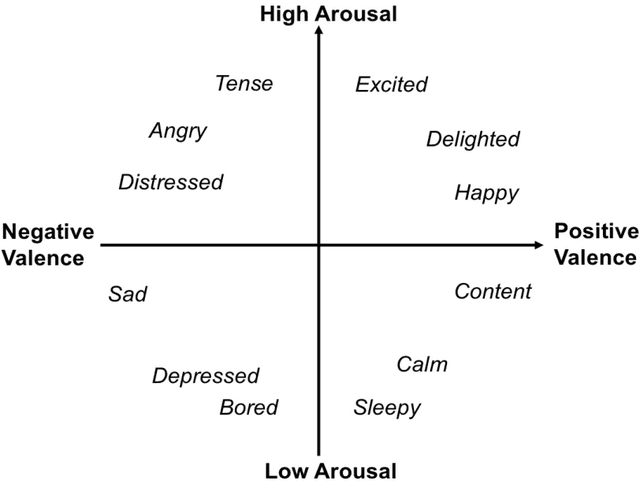

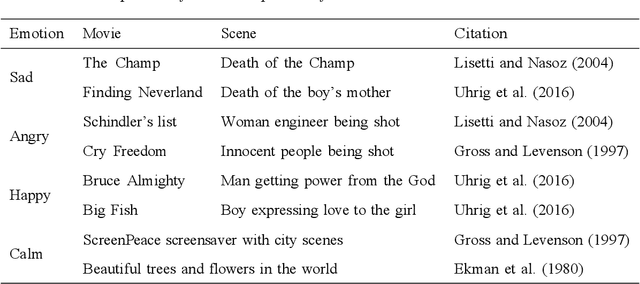

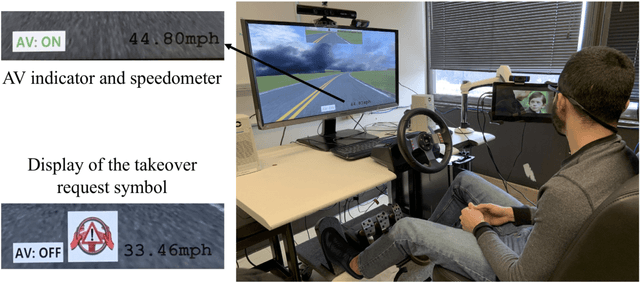

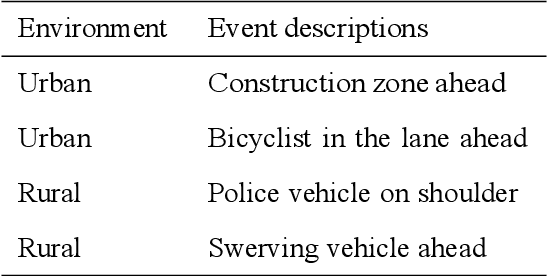

Abstract: In conditionally automated driving, drivers have difficulty with takeover transitions as they become increasingly decoupled from the operational level of driving. Factors influencing takeover performance, such as takeover lead time and engagement in non-driving-related tasks, have been studied in the past. However, despite the important role emotions play in human-machine interaction and in manual driving, little is known about how emotions influence drivers' takeover performance. This study, therefore, examined the effects of emotional valence and arousal on drivers' takeover timeliness and quality in conditionally automated driving. We conducted a driving simulation experiment with 32 participants. Movie clips were played to induce emotions. Participants with different levels of emotional valence and arousal were required to take over control from automated driving, and their takeover time and quality were analyzed. Results indicate that positive valence led to better takeover quality in the form of a smaller maximum resulting acceleration and a smaller maximum resulting jerk. However, high arousal did not yield an advantage in takeover time. This study contributes to the literature by demonstrating how emotional valence and arousal affect takeover performance. The benefits of positive emotions carry over from manual driving to conditionally automated driving, while the benefits of arousal do not.
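The takeover-quality measures above can be illustrated with a short sketch. It assumes, as an illustration rather than the study's documented definitions, that "resulting" acceleration is the magnitude of the longitudinal and lateral acceleration vector, and that resulting jerk is the magnitude of that vector's time derivative, estimated by forward finite differences over a hypothetical fixed sampling interval `dt`. The function names are hypothetical.

```python
import math


def max_resultant_acceleration(ax, ay):
    """Peak magnitude of the (longitudinal, lateral) acceleration vector.
    Assumes ax and ay are equal-length samples in m/s^2."""
    return max(math.hypot(x, y) for x, y in zip(ax, ay))


def max_resultant_jerk(ax, ay, dt):
    """Peak magnitude of the jerk vector, approximating the derivative of each
    acceleration component with forward differences over a fixed step dt (s)."""
    jerks = [
        math.hypot((ax[i + 1] - ax[i]) / dt, (ay[i + 1] - ay[i]) / dt)
        for i in range(len(ax) - 1)
    ]
    return max(jerks)
```

Smaller peaks on both measures correspond to a smoother takeover. In practice, raw simulator signals are often low-pass filtered before differentiation, since finite differences amplify noise.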
