Kostas Marias

Synthetic Data Blueprint (SDB): A modular framework for the statistical, structural, and graph-based evaluation of synthetic tabular data

Dec 16, 2025

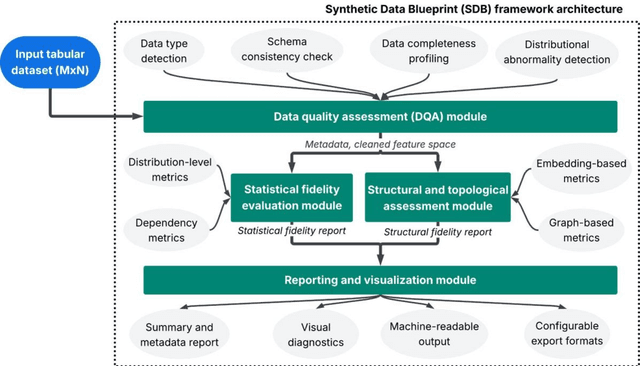

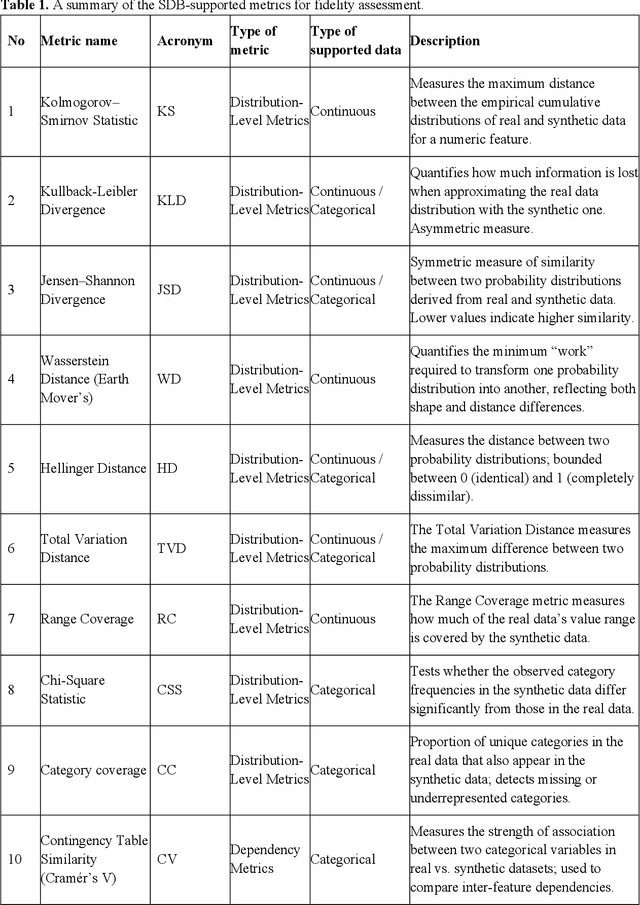

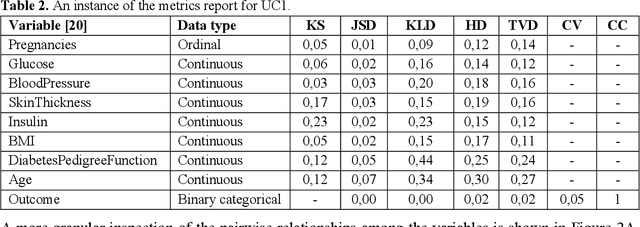

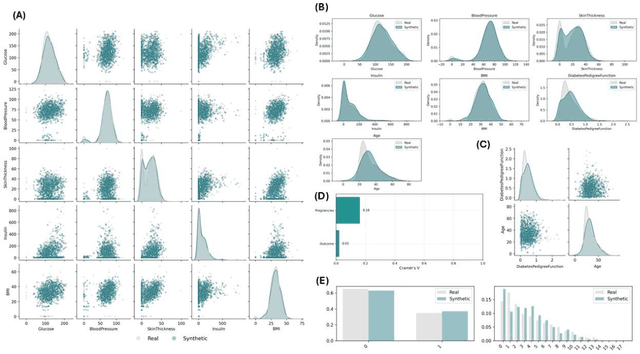

Abstract:In the rapidly evolving era of Artificial Intelligence (AI), synthetic data are widely used to accelerate innovation while preserving privacy and enabling broader data accessibility. However, the evaluation of synthetic data remains fragmented across heterogeneous metrics, ad-hoc scripts, and incomplete reporting practices. To address this gap, we introduce Synthetic Data Blueprint (SDB), a modular Pythonic based library to quantitatively and visually assess the fidelity of synthetic tabular data. SDB supports: (i) automated feature-type detection, (ii) distributional and dependency-level fidelity metrics, (iii) graph- and embedding-based structure preservation scores, and (iv) a rich suite of data visualization schemas. To demonstrate the breadth, robustness, and domain-agnostic applicability of the SDB, we evaluated the framework across three real-world use cases that differ substantially in scale, feature composition, statistical complexity, and downstream analytical requirements. These include: (i) healthcare diagnostics, (ii) socioeconomic and financial modelling, and (iii) cybersecurity and network traffic analysis. These use cases reveal how SDB can address diverse data fidelity assessment challenges, varying from mixed-type clinical variables to high-cardinality categorical attributes and high-dimensional telemetry signals, while at the same time offering a consistent, transparent, and reproducible benchmarking across heterogeneous domains.

MAMA-MIA: A Large-Scale Multi-Center Breast Cancer DCE-MRI Benchmark Dataset with Expert Segmentations

Jun 19, 2024Abstract:Current research in breast cancer Magnetic Resonance Imaging (MRI), especially with Artificial Intelligence (AI), faces challenges due to the lack of expert segmentations. To address this, we introduce the MAMA-MIA dataset, comprising 1506 multi-center dynamic contrast-enhanced MRI cases with expert segmentations of primary tumors and non-mass enhancement areas. These cases were sourced from four publicly available collections in The Cancer Imaging Archive (TCIA). Initially, we trained a deep learning model to automatically segment the cases, generating preliminary segmentations that significantly reduced expert segmentation time. Sixteen experts, averaging 9 years of experience in breast cancer, then corrected these segmentations, resulting in the final expert segmentations. Additionally, two radiologists conducted a visual inspection of the automatic segmentations to support future quality control studies. Alongside the expert segmentations, we provide 49 harmonized demographic and clinical variables and the pretrained weights of the well-known nnUNet architecture trained using the DCE-MRI full-images and expert segmentations. This dataset aims to accelerate the development and benchmarking of deep learning models and foster innovation in breast cancer diagnostics and treatment planning.

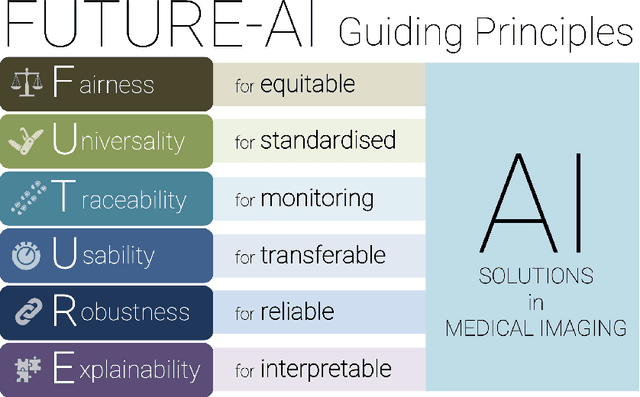

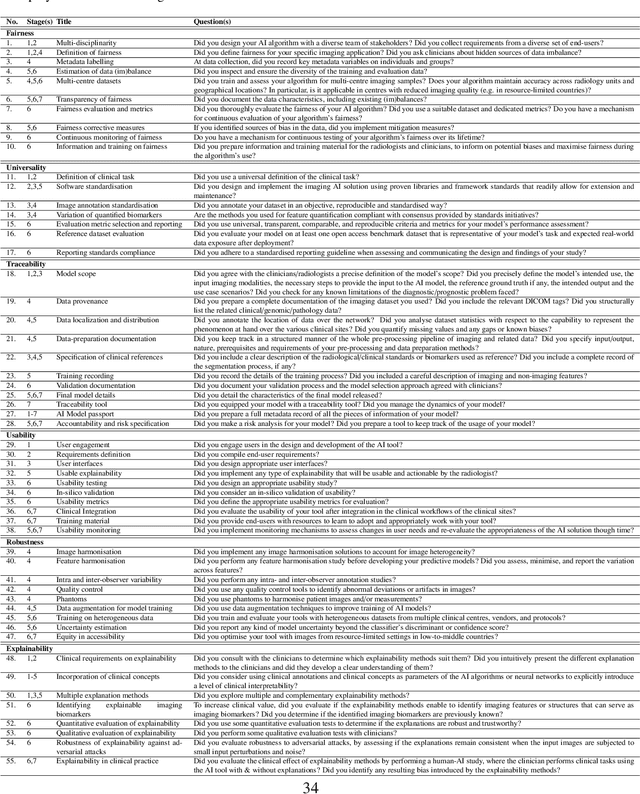

FUTURE-AI: Guiding Principles and Consensus Recommendations for Trustworthy Artificial Intelligence in Medical Imaging

Sep 29, 2021

Abstract:The recent advancements in artificial intelligence (AI) combined with the extensive amount of data generated by today's clinical systems, has led to the development of imaging AI solutions across the whole value chain of medical imaging, including image reconstruction, medical image segmentation, image-based diagnosis and treatment planning. Notwithstanding the successes and future potential of AI in medical imaging, many stakeholders are concerned of the potential risks and ethical implications of imaging AI solutions, which are perceived as complex, opaque, and difficult to comprehend, utilise, and trust in critical clinical applications. Despite these concerns and risks, there are currently no concrete guidelines and best practices for guiding future AI developments in medical imaging towards increased trust, safety and adoption. To bridge this gap, this paper introduces a careful selection of guiding principles drawn from the accumulated experiences, consensus, and best practices from five large European projects on AI in Health Imaging. These guiding principles are named FUTURE-AI and its building blocks consist of (i) Fairness, (ii) Universality, (iii) Traceability, (iv) Usability, (v) Robustness and (vi) Explainability. In a step-by-step approach, these guidelines are further translated into a framework of concrete recommendations for specifying, developing, evaluating, and deploying technically, clinically and ethically trustworthy AI solutions into clinical practice.

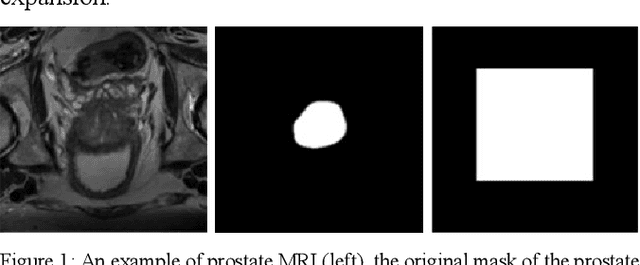

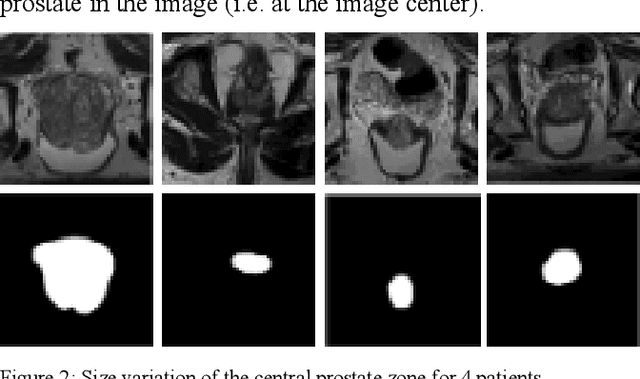

A new smart-cropping pipeline for prostate segmentation using deep learning networks

Jul 07, 2021

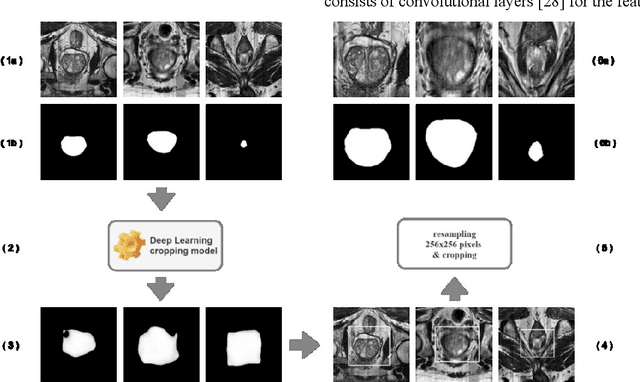

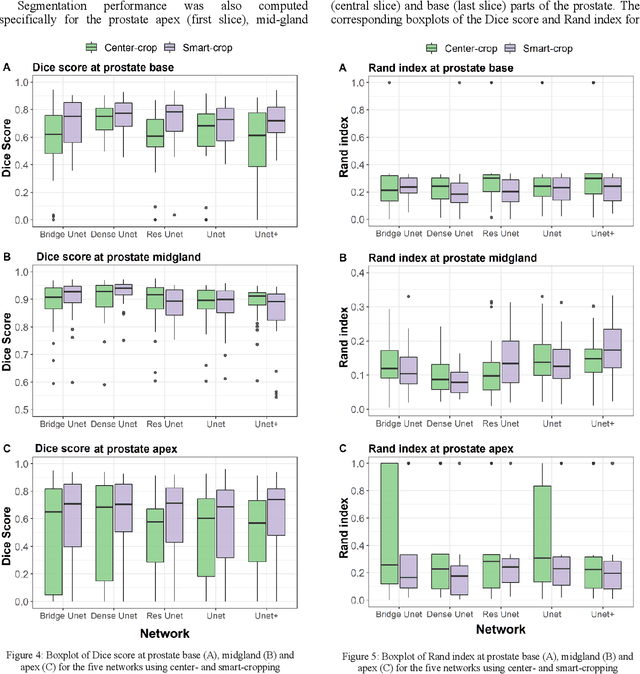

Abstract:Prostate segmentation from magnetic resonance imaging (MRI) is a challenging task. In recent years, several network architectures have been proposed to automate this process and alleviate the burden of manual annotation. Although the performance of these models has achieved promising results, there is still room for improvement before these models can be used safely and effectively in clinical practice. One of the major challenges in prostate MR image segmentation is the presence of class imbalance in the image labels where the background pixels dominate over the prostate. In the present work we propose a DL-based pipeline for cropping the region around the prostate from MRI images to produce a more balanced distribution of the foreground pixels (prostate) and the background pixels and improve segmentation accuracy. The effect of DL-cropping for improving the segmentation performance compared to standard center-cropping is assessed using five popular DL networks for prostate segmentation, namely U-net, U-net+, Res Unet++, Bridge U-net and Dense U-net. The proposed smart-cropping outperformed the standard center cropping in terms of segmentation accuracy for all the evaluated prostate segmentation networks. In terms of Dice score, the highest improvement was achieved for the U-net+ and ResU-net++ architectures corresponding to 8.9% and 8%, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge