Kedi Xu

Reasoning about the Unseen for Efficient Outdoor Object Navigation

Sep 18, 2023

Abstract: Robots should exist anywhere humans do: indoors, outdoors, and even in unmapped environments. In contrast, recent advances in Object Goal Navigation (OGN) have focused on indoor environments, leveraging spatial and semantic cues that do not generalize outdoors. While these contributions provide valuable insights into indoor scenarios, real-world robotic applications often extend to outdoor settings. As we transition to the vast and complex terrains of outdoor environments, new challenges emerge. Unlike the structured layouts found indoors, outdoor environments lack clear spatial delineations and are riddled with inherent semantic ambiguities. Despite this, humans navigate with ease because we can reason about the unseen. We introduce a new task, OUTDOOR; a new mechanism for Large Language Models (LLMs) to accurately hallucinate possible futures; and a new computationally aware success metric for pushing research forward in this more complex domain. Additionally, we show impressive results on both a simulated drone and a physical quadruped in outdoor environments. Our agent has no premapping, and our formalism outperforms naive LLM-based approaches.

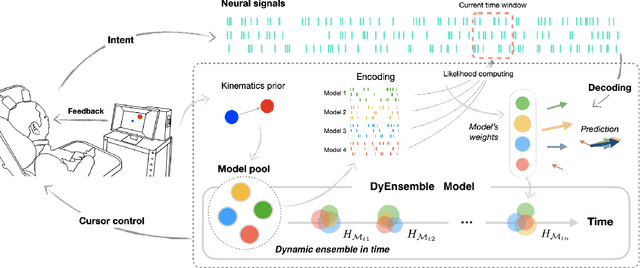

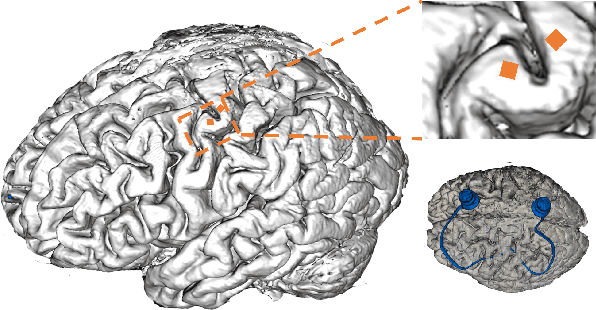

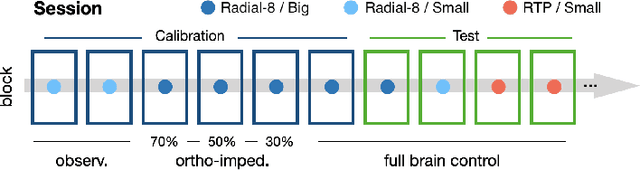

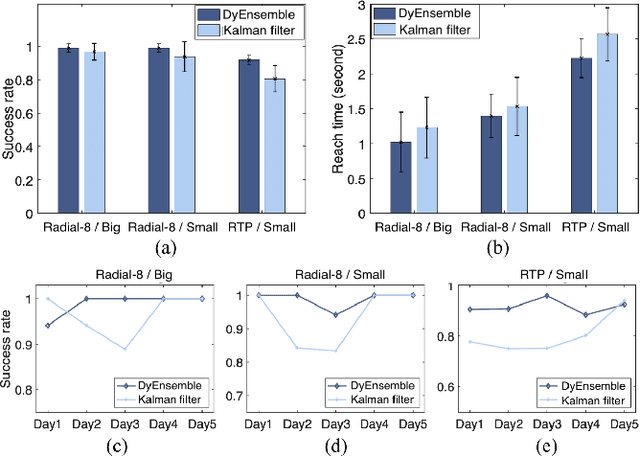

Dynamic Ensemble Bayesian Filter for Robust Control of a Human Brain-machine Interface

Apr 22, 2022

Abstract: Objective: Brain-machine interfaces (BMIs) aim to provide direct brain control of devices such as prostheses and computer cursors, and have demonstrated great potential for mobility restoration. One major limitation of current BMIs is unstable performance in online control caused by the variability of neural signals, which seriously hinders the clinical availability of BMIs. Method: To deal with neural variability in online BMI control, we propose a dynamic ensemble Bayesian filter (DyEnsemble). DyEnsemble extends Bayesian filters with a dynamic measurement model whose parameters adapt over time as the neural signals change. This is achieved by learning a pool of candidate functions and dynamically weighting and assembling them according to the neural signals. In this way, DyEnsemble copes with variability in signals and improves the robustness of online control. Results: Online BMI experiments with a human participant demonstrate that, compared with the velocity Kalman filter, DyEnsemble significantly improves control accuracy (increasing the success rate by 13.9% and reducing the reach time by 13.5% in the random target pursuit task) and robustness (performing more stably across experiment days). Conclusion: Our results demonstrate the superiority of DyEnsemble in online BMI control. Significance: DyEnsemble provides a novel and flexible framework for robust neural decoding, which is beneficial to different neural decoding applications.
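
The idea of weighting a pool of candidate measurement functions by how well each explains the incoming signal can be illustrated with a minimal sketch. This is not the authors' implementation: the scalar state, the Gaussian noise model, the three linear candidates, and the forgetting exponent `alpha` are all assumptions chosen for illustration, and the state `x` is treated as known here, whereas a real decoder would have to estimate it.

```python
import math


def gaussian_pdf(y, mean, sigma):
    """Density of a scalar Gaussian N(mean, sigma^2) evaluated at y."""
    z = (y - mean) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def update_weights(weights, candidates, x, y, sigma=1.0, alpha=0.9):
    """Re-weight candidate measurement functions after one observation.

    weights    : current weights, one per candidate (sum to 1)
    candidates : hypothetical measurement functions h(x) -> predicted signal
    x, y       : state (assumed known in this sketch) and observed signal
    alpha      : assumed forgetting exponent in (0, 1]; values below 1
                 flatten the old weights so the ensemble can re-adapt
    """
    priors = [w ** alpha for w in weights]
    scores = [p * gaussian_pdf(y, h(x), sigma) for p, h in zip(priors, candidates)]
    total = sum(scores)
    if total == 0.0:
        # No candidate explains the observation; fall back to uniform weights.
        return [1.0 / len(weights)] * len(weights)
    return [s / total for s in scores]


def ensemble_prediction(weights, candidates, x):
    """Weighted average of the candidates' predicted signals."""
    return sum(w * h(x) for w, h in zip(weights, candidates))


# Three hypothetical candidate measurement functions.
candidates = [
    lambda x: 2.0 * x,
    lambda x: -1.0 * x,
    lambda x: 0.5 * x + 1.0,
]
weights = [1.0 / 3.0] * 3

# Synthetic (state, signal) pairs generated close to the first candidate.
for x, y in [(1.0, 2.1), (2.0, 3.9), (0.5, 1.0)]:
    weights = update_weights(weights, candidates, x, y)

prediction = ensemble_prediction(weights, candidates, 1.0)
```

After the three synthetic observations, most of the weight sits on the first candidate, so the ensemble prediction is dominated by it. If the signal later drifted toward another candidate, the `alpha` exponent would let the weights shift instead of staying locked to the earlier winner.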
