Jong-Chan Kim

Demand Layering for Real-Time DNN Inference with Minimized Memory Usage

Oct 08, 2022

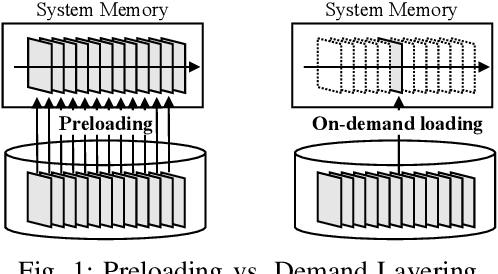

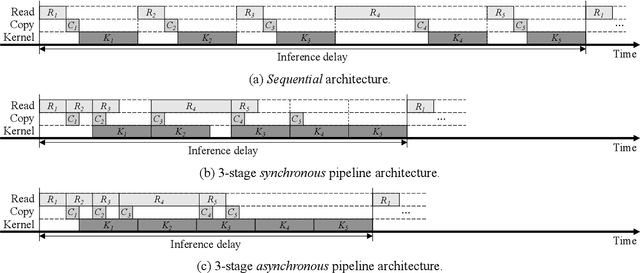

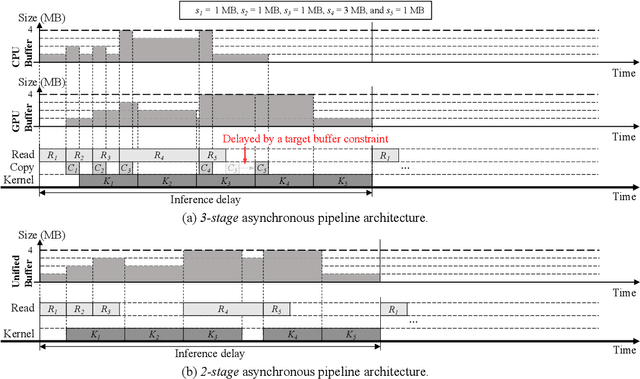

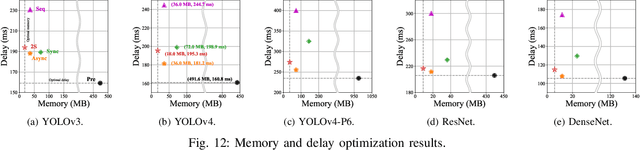

Abstract: When executing a deep neural network (DNN), its model parameters are loaded into GPU memory before execution, incurring a significant GPU memory burden. There are studies that reduce GPU memory usage by exploiting CPU memory as a swap device. However, this approach is not applicable in most embedded systems with integrated GPUs where CPU and GPU share a common memory. In this regard, we present Demand Layering, which employs a fast solid-state drive (SSD) as a co-running partner of a GPU and exploits the layer-by-layer execution of DNNs. In our approach, a DNN is loaded and executed in a layer-by-layer manner, minimizing the memory usage to the order of a single layer. Also, we developed a pipeline architecture that hides most additional delays caused by the interleaved parameter loadings alongside layer executions. Our implementation shows a 96.5% memory reduction with just 14.8% delay overhead on average for representative DNNs. Furthermore, by exploiting the memory-delay tradeoff, near-zero delay overhead (under 1 ms) can be achieved with a slightly increased memory usage (still an 88.4% reduction), showing the great potential of Demand Layering.
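The idea of overlapping parameter loading with layer execution can be illustrated with a minimal Python sketch. This is not the authors' implementation: `load_layer`, `execute_layer`, `run_pipelined`, and the `max_resident` parameter are hypothetical names, and plain Python threads stand in for the SSD reads and GPU kernels that the abstract describes. The sketch only shows how a bounded number of parameter buffers lets the next layer load while the current one runs.

```python
import queue
import threading


def load_layer(index):
    # Hypothetical stand-in for reading one layer's parameters from storage.
    return [float(index)] * 4


def execute_layer(params, activation):
    # Hypothetical stand-in for running one layer on the accelerator.
    return activation + sum(params)


def run_pipelined(num_layers, x, max_resident=2):
    # At most `max_resident` layers' parameters are held in memory at once.
    slots = threading.Semaphore(max_resident)
    ready = queue.Queue()

    def loader():
        for i in range(num_layers):
            slots.acquire()            # wait for a free parameter buffer
            ready.put(load_layer(i))   # load layer i while earlier layers execute

    worker = threading.Thread(target=loader, daemon=True)
    worker.start()
    for _ in range(num_layers):
        params = ready.get()           # blocks until the next layer is loaded
        x = execute_layer(params, x)
        slots.release()                # buffer is free again after execution
    worker.join()
    return x


if __name__ == "__main__":
    print(run_pipelined(3, 0.0))
```

In this sketch, raising `max_resident` lets the loader run further ahead at the cost of more resident parameters, which loosely mirrors the memory-delay tradeoff mentioned in the abstract.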

Cyclops: Open Platform for Scale Truck Platooning

Mar 03, 2022

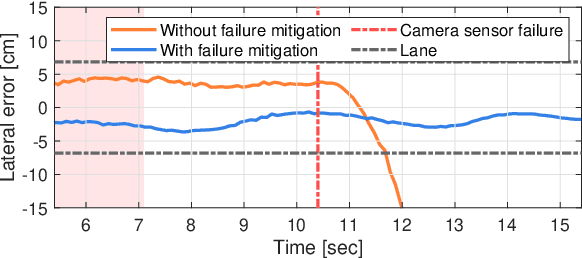

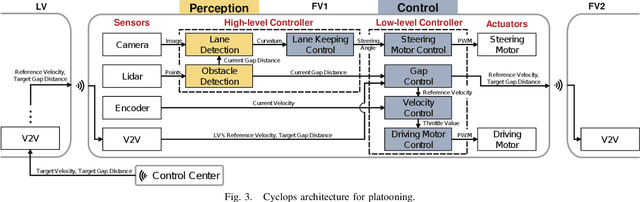

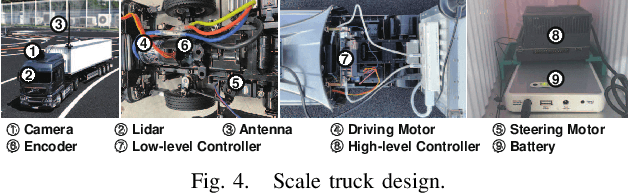

Abstract: Cyclops, introduced in this paper, is an open research platform for anyone who wants to validate novel ideas and approaches in the area of self-driving heavy-duty vehicle platooning. The platform consists of multiple 1/14 scale semi-trailer trucks, a scale proving ground, and associated computing, communication, and control modules that enable self-driving on the proving ground. The perception system of each vehicle is composed of a lidar-based object tracking system and a lane detection/control system. The former maintains the gap to the leading vehicle, and the latter keeps the vehicle within the lane by steering control. The lane detection system is optimized for truck platooning, where the field of view of the front-facing camera is severely limited due to the small gap to the leading vehicle. This platform is particularly well suited to validating mitigation strategies for safety-critical situations. Indeed, a simplex structure is adopted in the embedded module for testing various fail-safe operations. We illustrate a scenario where the camera sensor in the perception system fails but the vehicle continues to operate at a reduced capacity until it comes to a graceful stop. Details of Cyclops, including 3D CAD designs and algorithm source code, are released for those who want to build similar testbeds.

Energy-Efficient Adaptive System Reconfiguration for Dynamic Deadlines in Autonomous Driving

Jun 03, 2021

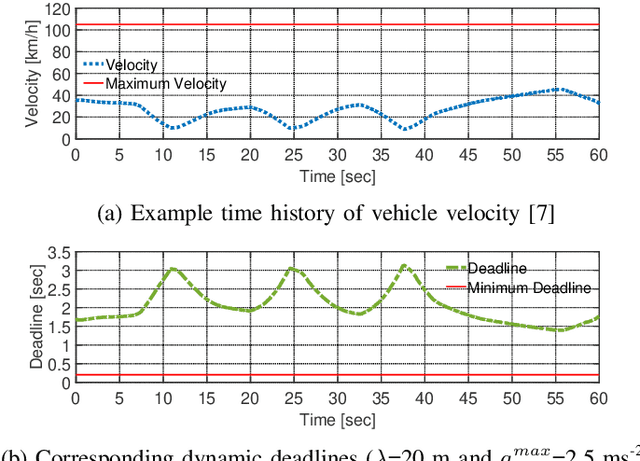

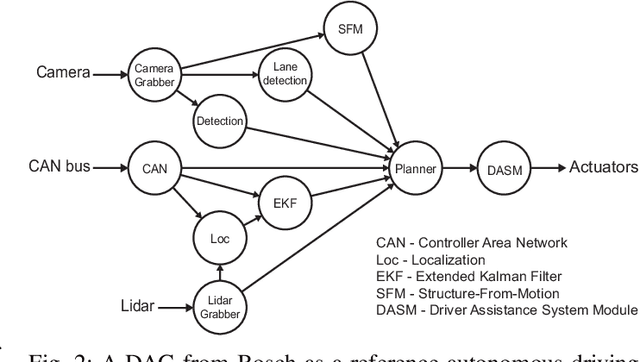

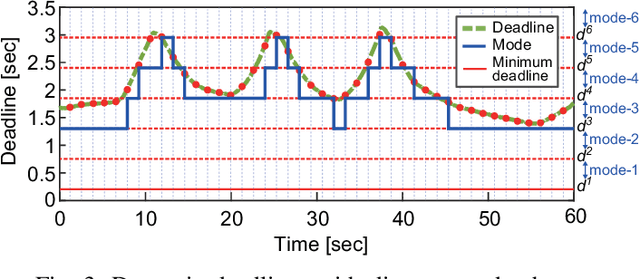

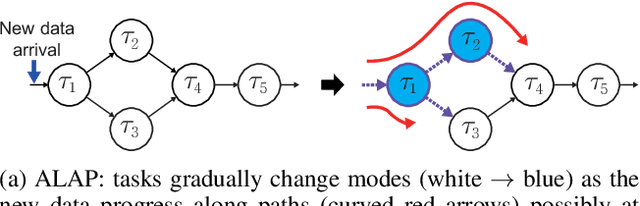

Abstract: The increasing computing demands of autonomous driving applications make energy optimizations critical for reducing battery capacity and vehicle weight. Current energy optimization methods typically target traditional real-time systems with static deadlines, resulting in conservative energy savings that are unable to exploit additional energy optimizations due to dynamic deadlines arising from the vehicle's change in velocity and driving context. We present an adaptive system optimization and reconfiguration approach that dynamically adapts the scheduling parameters and processor speeds to satisfy dynamic deadlines while consuming as little energy as possible. Our experimental results with an autonomous driving task set from Bosch and real-world driving data show energy reductions up to 46.4% on average in typical dynamic driving scenarios compared with traditional static energy optimization methods, demonstrating great potential for dynamic energy optimization gains by exploiting dynamic deadlines.
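As a rough illustration of why a relaxed deadline can save energy, the sketch below picks the slowest processor frequency that still meets the current deadline. It is not the method from the paper: the function name `pick_frequency`, the frequency list, and the assumptions that execution time scales inversely with frequency and that a lower frequency consumes less energy are all simplifications introduced here.

```python
def pick_frequency(wcet_at_max_ms, deadline_ms, freqs_mhz):
    """Return the lowest frequency that meets the deadline, or None.

    Assumption (not from the source): execution time scales inversely
    with clock frequency, and lower frequencies use less energy.
    """
    f_max = max(freqs_mhz)
    for f in sorted(freqs_mhz):
        # Scale the worst-case execution time measured at f_max to frequency f.
        scaled_ms = wcet_at_max_ms * f_max / f
        if scaled_ms <= deadline_ms:
            return f
    return None  # no available frequency can meet this deadline


if __name__ == "__main__":
    freqs = [500, 1000, 2000]
    # A longer deadline (e.g. at low vehicle speed) allows a slower clock.
    for deadline in (12.0, 25.0, 50.0):
        print(deadline, pick_frequency(10.0, deadline, freqs))
```

With a static worst-case deadline the processor would always run at the frequency required for the tightest case; re-evaluating the choice as the deadline changes is the kind of opportunity the abstract refers to.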

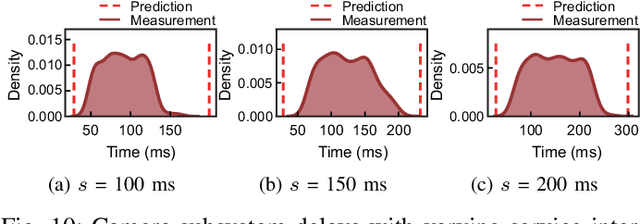

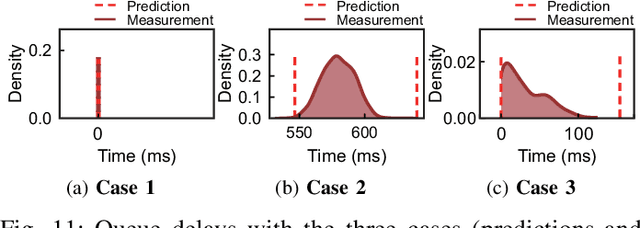

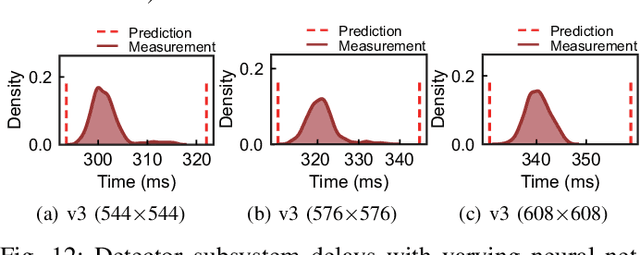

R-TOD: Real-Time Object Detector with Minimized End-to-End Delay for Autonomous Driving

Oct 23, 2020

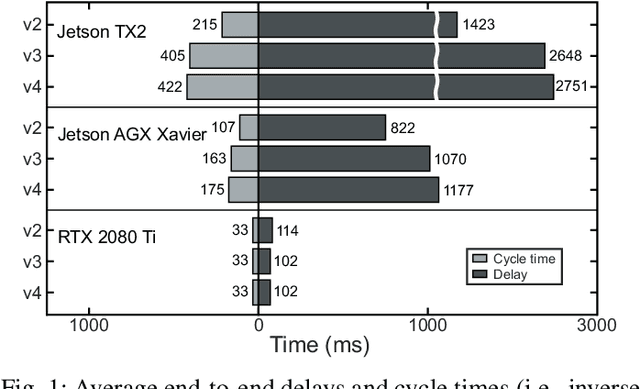

Abstract: For realizing safe autonomous driving, the end-to-end delays of real-time object detection systems should be thoroughly analyzed and minimized. However, despite the recent development of neural networks with minimized inference delays, surprisingly little attention has been paid to their end-to-end delays from an object's appearance until its detection is reported. With this motivation, this paper aims to provide a more comprehensive understanding of the end-to-end delay, through which precise best- and worst-case delay predictions are formulated, and three optimization methods are implemented: (i) on-demand capture, (ii) zero-slack pipeline, and (iii) contention-free pipeline. Our experimental results show a 76% reduction in the end-to-end delay of Darknet YOLO (You Only Look Once) v3 (from 1070 ms to 261 ms), thereby demonstrating the great potential of exploiting the end-to-end delay analysis for autonomous driving. Furthermore, as we only modify the system architecture and do not change the neural network architecture itself, our approach incurs no penalty on the detection accuracy.
