Jingyi Hou

Unveiling the Impact of Multimodal Features on Chinese Spelling Correction: From Analysis to Design

Apr 10, 2025

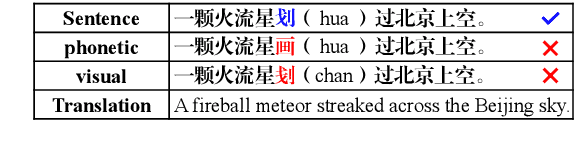

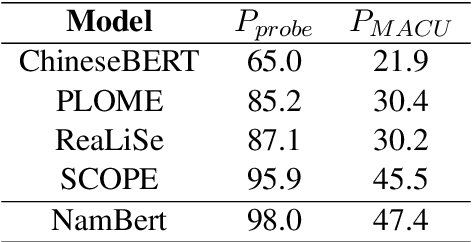

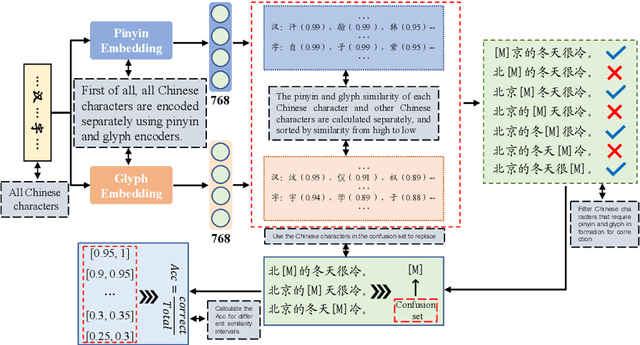

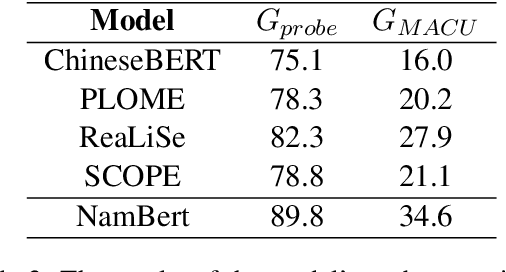

Abstract: The Chinese Spelling Correction (CSC) task focuses on detecting and correcting spelling errors in sentences. Current research primarily explores two approaches: traditional multimodal pre-trained models and large language models (LLMs). However, LLMs face limitations in CSC, particularly over-correction, making them suboptimal for this task. While existing studies have investigated the use of phonetic and graphemic information in multimodal CSC models, effectively leveraging these features to enhance correction performance remains a challenge. To address this, we propose the Multimodal Analysis for Character Usage (MACU) experiment, identifying potential improvements for multimodal correction. Based on empirical findings, we introduce NamBert, a novel multimodal model for Chinese spelling correction. Experiments on benchmark datasets demonstrate NamBert's superiority over SOTA methods. We also conduct a comprehensive comparison between NamBert and LLMs, systematically evaluating their strengths and limitations in CSC. Our code and model are available at https://github.com/iioSnail/NamBert.

Discovering Predictable Latent Factors for Time Series Forecasting

Mar 18, 2023

Abstract: Modern time series forecasting methods, such as Transformer and its variants, have shown strong ability in sequential data modeling. To achieve high performance, they usually rely on redundant or unexplainable structures to model complex relations between variables and tune the parameters with large-scale data. Many real-world data mining tasks, however, lack sufficient variables for relation reasoning, and therefore these methods may not properly handle such forecasting problems. With insufficient data, time series appear to be affected by many exogenous variables, and thus, the modeling becomes unstable and unpredictable. To tackle this critical issue, in this paper, we develop a novel algorithmic framework for inferring the intrinsic latent factors implied by the observable time series. The inferred factors are used to form multiple independent and predictable signal components that enable not only sparse relation reasoning for long-term efficiency but also reconstructing the future temporal data for accurate prediction. To achieve this, we introduce three characteristics, i.e., predictability, sufficiency, and identifiability, and model these characteristics via the powerful deep latent dynamics models to infer the predictable signal components. Empirical results on multiple real datasets show the efficiency of our method for different kinds of time series forecasting. The statistical analysis validates the predictability of the learned latent factors.

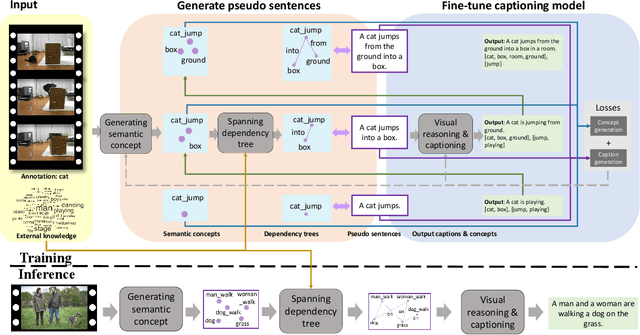

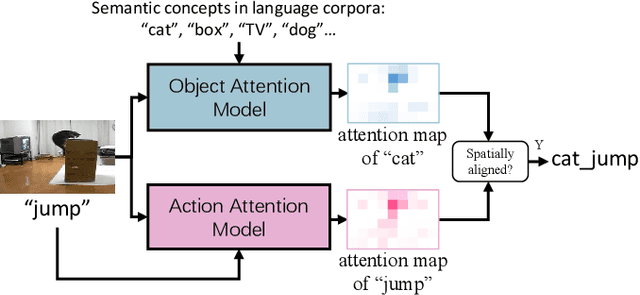

Video Captioning Using Weak Annotation

Sep 02, 2020

Abstract: Video captioning has shown impressive progress in recent years. One key reason for the performance improvements made by existing methods lies in massive paired video-sentence data, but collecting such strong annotation, i.e., high-quality sentences, is time-consuming and laborious. In fact, there now exist a large number of videos with weak annotation that only contains semantic concepts such as actions and objects. In this paper, we investigate using weak annotation instead of strong annotation to train a video captioning model. To this end, we propose a progressive visual reasoning method that progressively generates fine sentences from weak annotations by inferring more semantic concepts and their dependency relationships for video captioning. To model concept relationships, we use dependency trees that are spanned by exploiting external knowledge from large sentence corpora. Through traversing the dependency trees, the sentences are generated to train the captioning model. Accordingly, we develop an iterative refinement algorithm that refines sentences via spanning dependency trees and fine-tunes the captioning model using the refined sentences in an alternating training manner. Experimental results demonstrate that our method using weak annotation is very competitive with the state-of-the-art methods using strong annotation.

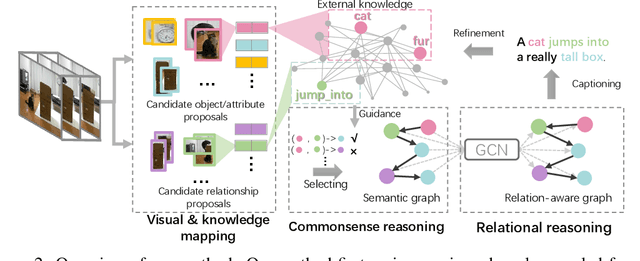

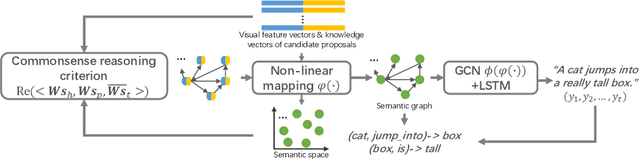

Relational Reasoning using Prior Knowledge for Visual Captioning

Jun 04, 2019

Abstract: Exploiting relationships among objects has achieved remarkable progress in interpreting images or videos by natural language. Most existing methods resort to first detecting objects and their relationships, and then generating textual descriptions, which heavily depends on pre-trained detectors and leads to a performance drop when facing problems of heavy occlusion, tiny-size objects and long-tail distributions in object detection. In addition, the separate procedure of detecting and captioning results in semantic inconsistency between the pre-defined object/relation categories and the target lexical words. We exploit prior human commonsense knowledge to reason about relationships between objects without any pre-trained detectors and to reach semantic coherency within one image or video in captioning. The prior knowledge (e.g., in the form of a knowledge graph) provides commonsense semantic correlation and constraint between objects that are not explicit in the image and video, serving as useful guidance to build a semantic graph for sentence generation. Particularly, we present a joint reasoning method that incorporates 1) commonsense reasoning for embedding image or video regions into semantic space to build a semantic graph and 2) relational reasoning for encoding the semantic graph to generate sentences. Extensive experiments on the MS-COCO image captioning benchmark and the MSVD video captioning benchmark validate the superiority of our method in leveraging prior commonsense knowledge to enhance relational reasoning for visual captioning.
