Jingjing Xiao

ADMMiRNN: Training RNN with Stable Convergence via An Efficient ADMM Approach

Jun 17, 2020

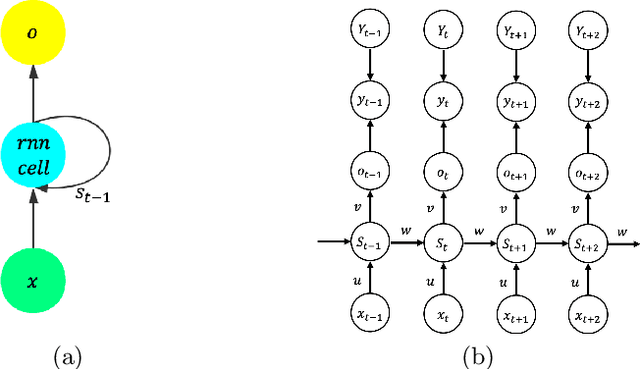

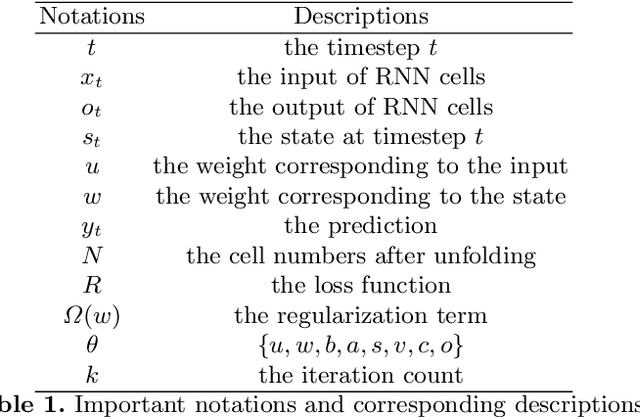

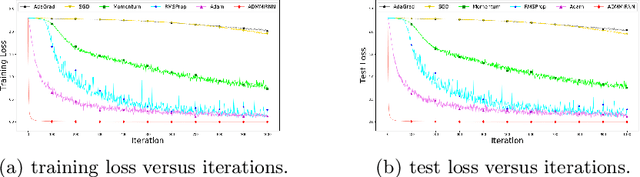

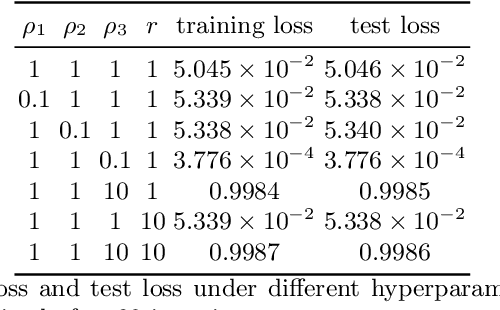

Abstract: It is hard to train a Recurrent Neural Network (RNN) with stable convergence while avoiding vanishing and exploding gradients, because the weights in the recurrent unit are repeated from iteration to iteration. Moreover, an RNN is sensitive to the initialization of its weights and biases, which makes the training phase difficult. With its gradient-free nature and immunity to poor conditions, the Alternating Direction Method of Multipliers (ADMM) has become a promising algorithm for training neural networks beyond traditional stochastic gradient algorithms. However, ADMM cannot be applied to train an RNN directly, since the state in the recurrent unit is updated repeatedly over timesteps. Therefore, this work builds a new framework named ADMMiRNN upon the unfolded form of the RNN to address the above challenges simultaneously, and provides novel update rules and a theoretical convergence analysis. We explicitly specify the key update rules in the iterations of ADMMiRNN, using deliberately constructed approximation techniques and solutions to each subproblem instead of vanilla ADMM. Numerical experiments are conducted on MNIST and text classification tasks, where ADMMiRNN achieves convergent results and outperforms the compared baselines. Furthermore, ADMMiRNN trains RNNs more stably than stochastic gradient algorithms, without vanishing or exploding gradients. Source code is available at https://github.com/TonyTangYu/ADMMiRNN.
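
To make the "gradient-free" remark concrete, the following is a minimal sketch of generic scaled-form ADMM on a toy scalar problem, minimizing 0.5 * (x - a)^2 + lam * |z| subject to x = z. It is not the ADMMiRNN update rule from the paper; the problem, the function names `soft_threshold` and `admm_scalar_lasso`, and the parameter values are assumptions chosen only to show how ADMM alternates closed-form subproblem solutions with a dual update rather than taking gradient steps.

```python
def soft_threshold(v, k):
    """Proximal operator of k * |z|: shrink v toward zero by k."""
    if v > k:
        return v - k
    if v < -k:
        return v + k
    return 0.0


def admm_scalar_lasso(a, lam, rho=1.0, iters=200):
    """Toy ADMM (hypothetical example, not the paper's algorithm).

    Solves: minimize 0.5 * (x - a)**2 + lam * |z|  subject to  x = z.
    """
    x = z = u = 0.0  # primal variables x, z and scaled dual variable u
    for _ in range(iters):
        # x-update: closed-form minimizer of the quadratic subproblem
        x = (a + rho * (z - u)) / (1.0 + rho)
        # z-update: closed-form proximal step for the absolute-value term
        z = soft_threshold(x + u, lam / rho)
        # dual update: accumulate the constraint violation x - z
        u += x - z
    return x, z


x, z = admm_scalar_lasso(a=3.0, lam=1.0)
print(x, z)
```

For this toy problem the exact solution is `soft_threshold(a, lam)`, so with `a=3.0` and `lam=1.0` both returned values should approach 2.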

Semantic tracking: Single-target tracking with inter-supervised convolutional networks

Nov 19, 2016

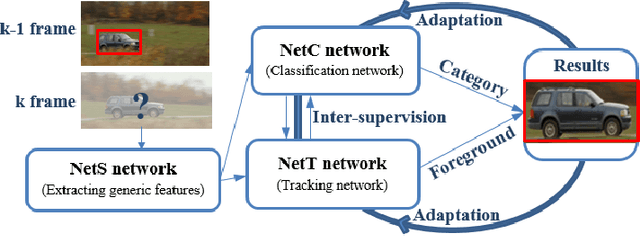

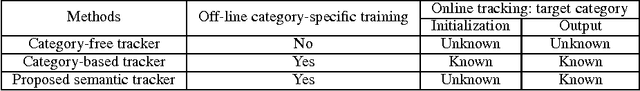

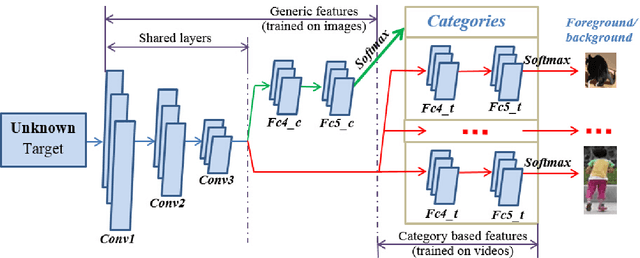

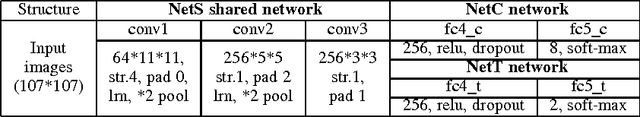

Abstract: This article presents a semantic tracker which simultaneously tracks a single target and recognises its category. In general, it is hard to design a tracking model suitable for all object categories, e.g., a rigid tracker for a car is not suitable for a deformable gymnast. Category-based trackers usually achieve superior tracking performance for objects of their specific category, but are difficult to generalise. Therefore, we propose a novel unified robust tracking framework which explicitly encodes both generic features and category-based features. The tracker consists of a shared convolutional network (NetS), which feeds into two parallel networks, NetC for classification and NetT for tracking. NetS is pre-trained on ImageNet to serve as a generic feature extractor across the different object categories for NetC and NetT. NetC utilises those features within fully connected layers to classify the object category. NetT has multiple branches, corresponding to multiple categories, to distinguish the tracked object from the background. Since each branch in NetT is trained on the videos of a specific category or group of similar categories, NetT encodes category-based features for tracking. During online tracking, NetC and NetT jointly determine the target regions with the right category and foreground labels for target estimation. To improve robustness and precision, NetC and NetT inter-supervise each other and trigger network adaptation when their outputs are ambiguous for the same image regions (i.e., when the category label contradicts the foreground/background classification). We have compared the performance of our tracker to other state-of-the-art trackers on a large-scale tracking benchmark (100 sequences). The results demonstrate the effectiveness of our proposed tracker, which outperformed 38 other state-of-the-art tracking algorithms.
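
The inter-supervision rule described in the abstract can be sketched as a small decision step over candidate regions. This is an illustrative sketch only: the `Candidate` record, its field names, and the function `split_candidates` are hypothetical, and the network outputs are assumed to have already been reduced to a category label (NetC) and a foreground flag (the matching NetT branch).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Candidate:
    """One candidate image region with assumed, already-computed outputs."""
    box: Tuple[int, int, int, int]  # (x, y, width, height)
    netc_category: int              # category label predicted by NetC
    nett_foreground: bool           # foreground decision from the NetT branch


def split_candidates(
    candidates: List[Candidate], target_category: int
) -> Tuple[List[Candidate], List[Candidate]]:
    """Separate regions both networks accept from contradictory ones."""
    agreed, ambiguous = [], []
    for c in candidates:
        category_ok = c.netc_category == target_category
        if category_ok and c.nett_foreground:
            # Right category and foreground: usable for target estimation.
            agreed.append(c)
        elif category_ok != c.nett_foreground:
            # Category label contradicts the foreground/background decision.
            ambiguous.append(c)
        # Wrong category and background: consistent, simply ignored.
    return agreed, ambiguous


candidates = [
    Candidate((10, 10, 40, 80), netc_category=2, nett_foreground=True),
    Candidate((12, 11, 40, 80), netc_category=2, nett_foreground=False),
    Candidate((90, 30, 40, 80), netc_category=5, nett_foreground=False),
]
agreed, ambiguous = split_candidates(candidates, target_category=2)
needs_adaptation = bool(ambiguous)  # would trigger network adaptation
```

In this sketch a non-empty `ambiguous` list stands in for the condition under which the abstract says network adaptation is triggered; how the adaptation itself is performed is not specified here.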
