Jethro Tan

Dielectric Tensor Prediction for Inorganic Materials Using Latent Information from Preferred Potential

May 15, 2024

Abstract:Dielectrics are materials with widespread applications in flash memory, central processing units, photovoltaics, capacitors, etc. However, the availability of public dielectric data remains limited, hindering research and development efforts. Previously, machine learning models focused on predicting dielectric constants as scalars, overlooking the importance of dielectric tensors in understanding material properties under directional electric fields for material design and simulation. This study demonstrates the value of common equivariant structural embedding features derived from a universal neural network potential in enhancing the prediction of dielectric properties. To integrate channel information from various-rank latent features while preserving the desired SE(3) equivariance for the second-rank dielectric tensors, we design an equivariant readout decoder to predict the total, electronic, and ionic dielectric tensors individually, and compare our model with the state-of-the-art models. Finally, we evaluate our model by conducting virtual screening on thermodynamically stable structure candidates in Materials Project. The material Ba\textsubscript{2}SmTaO\textsubscript{6}, with a large band gap ($E_g=3.36 \mathrm{eV}$) and a high dielectric constant ($\epsilon=93.81$), is successfully identified out of the 14k candidate set. The results show that our methods give good accuracy in predicting dielectric tensors of inorganic materials, emphasizing their potential in contributing to the discovery of novel dielectrics.
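As a small illustration of what equivariance means for a second-rank tensor, the sketch below rotates a dielectric tensor via $\epsilon' = R \epsilon R^{T}$ and reduces it to a scalar by averaging the diagonal. It is not the paper's model: the tensor values are made up, the helper names are hypothetical, and the trace average is only one common way to summarize a tensor as a single constant (the abstract does not say which definition the authors use).

```python
import math

def matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transpose(a):
    """Transpose a 3x3 matrix."""
    return [[a[j][i] for j in range(3)] for i in range(3)]

def rotation_x(theta):
    """Rotation matrix for an angle theta (radians) about the x axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rotate_tensor(eps, rot):
    """Transform a second-rank tensor under a rotation: R * eps * R^T."""
    return matmul(matmul(rot, eps), transpose(rot))

def scalar_dielectric(eps):
    """Assumed scalar summary: the mean of the diagonal (trace / 3)."""
    return (eps[0][0] + eps[1][1] + eps[2][2]) / 3.0

# Hypothetical anisotropic tensor, not taken from the paper.
eps = [[10.0, 0.0, 0.0],
       [0.0, 10.0, 0.0],
       [0.0, 0.0, 25.0]]

# A 90-degree rotation about x swaps the y and z components.
rotated = rotate_tensor(eps, rotation_x(math.pi / 2))
```

The individual components change with the crystal orientation, while the trace average does not. An equivariant predictor is expected to reproduce the first behavior: rotating the input structure should rotate the predicted tensor in the same way.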

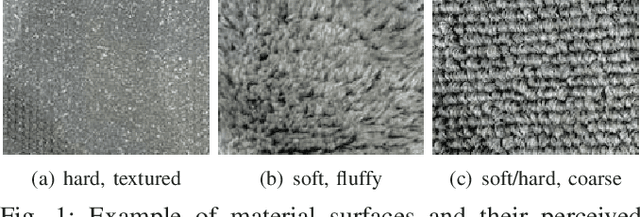

Deep Visuo-Tactile Learning: Estimation of Tactile Properties from Images

Oct 03, 2018

Abstract:Estimation of tactile properties from vision, such as slipperiness or roughness, is important to effectively interact with the environment. These tactile properties help us decide which actions we should choose and how to perform them. E.g., we can drive slower if we see that we have bad traction or grasp tighter if an item looks slippery. We believe that this ability also helps robots to enhance their understanding of the environment, and thus enables them to tailor their actions to the situation at hand. We therefore propose a model to estimate the degree of tactile properties from visual perception alone (e.g., the level of slipperiness or roughness). Our method extends an encoder-decoder network, in which the latent variables are visual and tactile features. In contrast to previous works, our method does not require manual labeling, but only RGB images and the corresponding tactile sensor data. All our data is collected with a webcam and uSkin tactile sensor mounted on the end-effector of a Sawyer robot, which strokes the surfaces of 25 different materials. We show that our model generalizes to materials not included in the training data by evaluating the feature space, indicating that it has learned to associate important tactile properties with images.

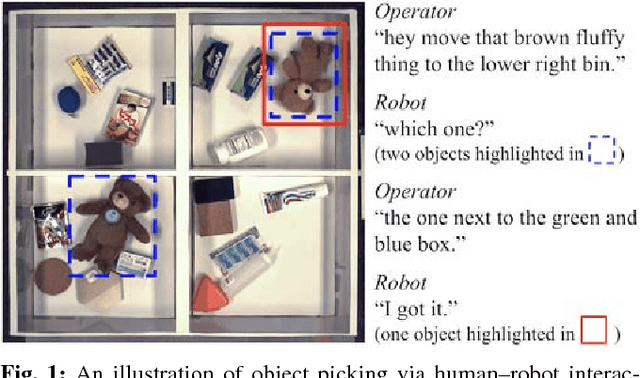

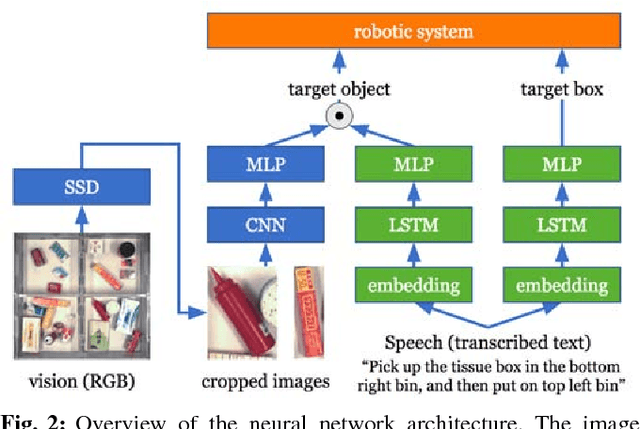

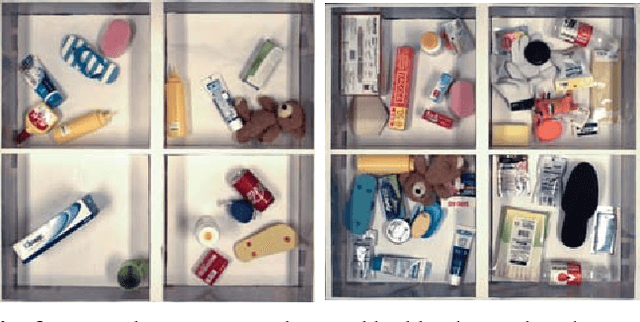

Interactively Picking Real-World Objects with Unconstrained Spoken Language Instructions

Mar 28, 2018

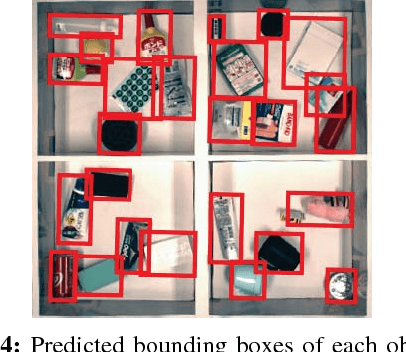

Abstract:Comprehension of spoken natural language is an essential component for robots to communicate with humans effectively. However, handling unconstrained spoken instructions is challenging due to (1) complex structures including a wide variety of expressions used in spoken language and (2) inherent ambiguity in interpretation of human instructions. In this paper, we propose the first comprehensive system that can handle unconstrained spoken language and is able to effectively resolve ambiguity in spoken instructions. Specifically, we integrate deep-learning-based object detection together with natural language processing technologies to handle unconstrained spoken instructions, and propose a method for robots to resolve instruction ambiguity through dialogue. Through our experiments in both a simulated environment and on a physical industrial robot arm, we demonstrate the ability of our system to understand natural instructions from human operators effectively, and how higher success rates of the object picking task can be achieved through an interactive clarification process.

Map-based Multi-Policy Reinforcement Learning: Enhancing Adaptability of Robots by Deep Reinforcement Learning

Oct 18, 2017

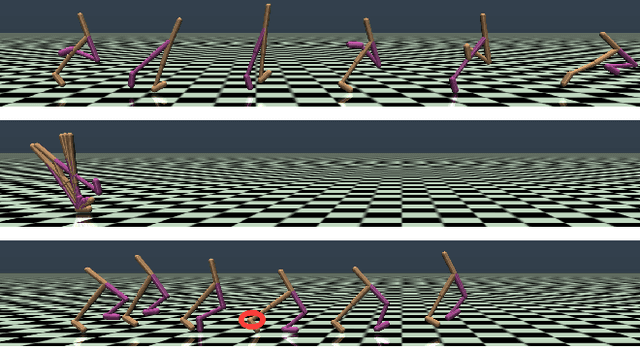

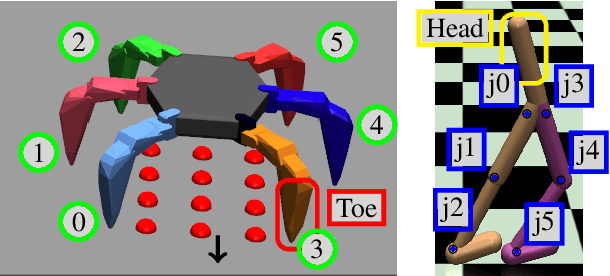

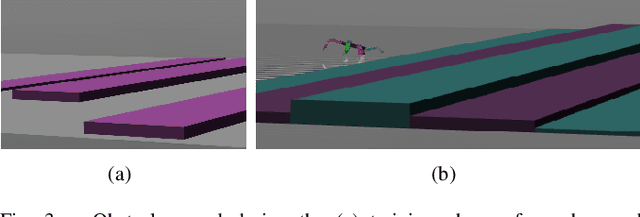

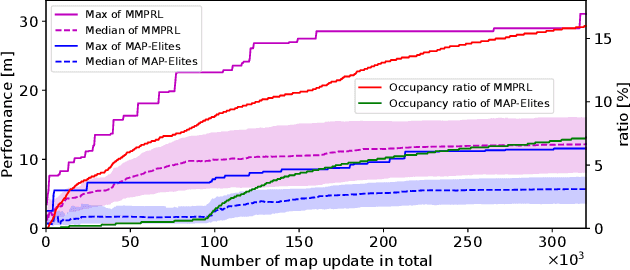

Abstract:In order for robots to perform mission-critical tasks, it is essential that they are able to quickly adapt to changes in their environment as well as to injuries and/or other bodily changes. Deep reinforcement learning has been shown to be successful in training robot control policies for operation in complex environments. However, existing methods typically employ only a single policy. This can limit the adaptability since a large environmental modification might require a completely different behavior compared to the learning environment. To solve this problem, we propose Map-based Multi-Policy Reinforcement Learning (MMPRL), which aims to search and store multiple policies that encode different behavioral features while maximizing the expected reward in advance of the environment change. Thanks to these policies, which are stored in a multi-dimensional discrete map according to their behavioral features, adaptation can be performed within reasonable time without retraining the robot. An appropriate pre-trained policy from the map can be recalled using Bayesian optimization. Our experiments show that MMPRL enables robots to quickly adapt to large changes without requiring any prior knowledge on the type of injuries that could occur. A highlight of the learned behaviors can be found here: https://youtu.be/QwInbilXNOE .
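The idea of storing policies in a multi-dimensional discrete map keyed by behavioral features can be sketched as below. This is a minimal illustration under stated assumptions, not the MMPRL implementation: the class `PolicyMap`, its methods, the normalization of behavioral features to [0, 1], and the rule of keeping the highest-reward policy per cell are all hypothetical choices, and the Bayesian-optimization recall step is omitted.

```python
from typing import Any, Dict, Sequence, Tuple

Cell = Tuple[int, ...]

class PolicyMap:
    """Hypothetical discrete map: one stored policy per behavior cell."""

    def __init__(self, bins_per_dim: int) -> None:
        self.bins_per_dim = bins_per_dim
        # Maps a cell index to (reward, policy) for the best policy seen there.
        self.cells: Dict[Cell, Tuple[float, Any]] = {}

    def cell_of(self, behavior: Sequence[float]) -> Cell:
        """Discretize behavioral features, assumed normalized to [0, 1]."""
        last = self.bins_per_dim - 1
        return tuple(
            min(last, max(0, int(b * self.bins_per_dim))) for b in behavior
        )

    def add(self, behavior: Sequence[float], reward: float, policy: Any) -> bool:
        """Store the policy if its cell is empty or it beats the stored reward."""
        cell = self.cell_of(behavior)
        best = self.cells.get(cell)
        if best is None or reward > best[0]:
            self.cells[cell] = (reward, policy)
            return True
        return False

# Usage with placeholder policies (strings stand in for trained networks).
policy_map = PolicyMap(bins_per_dim=5)
first = policy_map.add([0.10, 0.90], reward=1.0, policy="policy_a")
worse = policy_map.add([0.15, 0.95], reward=0.5, policy="policy_b")
better = policy_map.add([0.15, 0.95], reward=2.0, policy="policy_c")
```

Because each cell holds a behaviorally distinct policy, a robot facing a changed environment could search over the stored cells rather than retrain; the abstract states that this search is done with Bayesian optimization.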

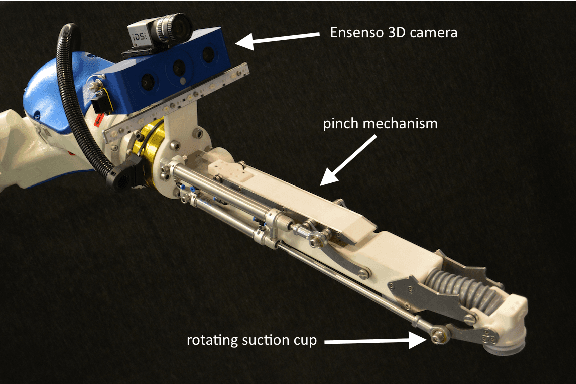

Team Delft's Robot Winner of the Amazon Picking Challenge 2016

Oct 18, 2016

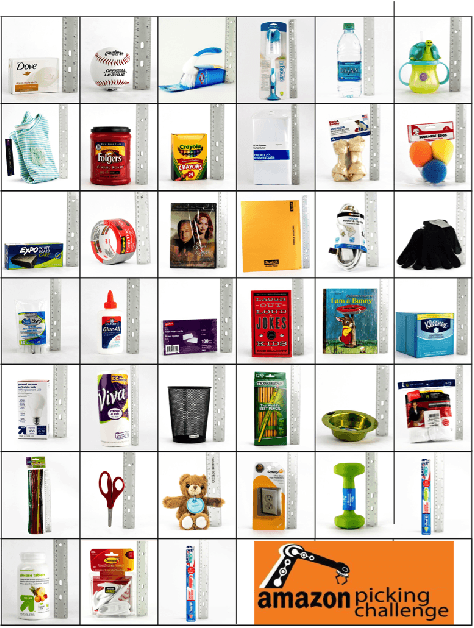

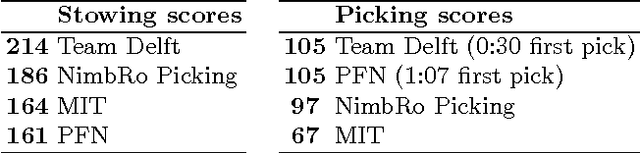

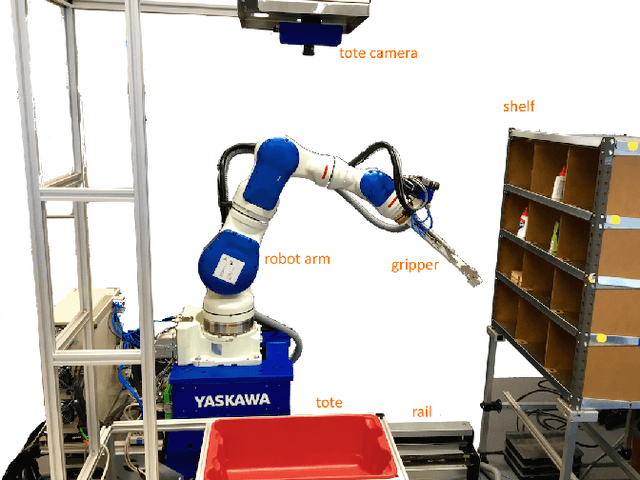

Abstract:This paper describes Team Delft's robot, which won the Amazon Picking Challenge 2016, including both the Picking and the Stowing competitions. The goal of the challenge is to automate pick and place operations in unstructured environments, specifically the shelves in an Amazon warehouse. Team Delft's robot is based on an industrial robot arm, 3D cameras and a customized gripper. The robot's software uses ROS to integrate off-the-shelf components and modules developed specifically for the competition, implementing Deep Learning and other AI techniques for object recognition and pose estimation, grasp planning and motion planning. This paper describes the main components in the system, and discusses its performance and results at the Amazon Picking Challenge 2016 finals.
