Jeremiah Greer

Can a Single Model Master Both Multi-turn Conversations and Tool Use? CALM: A Unified Conversational Agentic Language Model

Feb 12, 2025

Abstract: Large Language Models (LLMs) with API-calling capabilities have enabled the building of effective Language Agents (LA), while also revolutionizing the conventional task-oriented dialogue (TOD) paradigm. However, current approaches face a critical dilemma: TOD systems are often trained on a limited set of target APIs, requiring new data to maintain their quality when interfacing with new services, while LAs are not trained to maintain user intent over multi-turn conversations. Because both robust multi-turn management and advanced function calling are crucial for effective conversational agents, we evaluate these skills on three popular benchmarks: MultiWOZ 2.4 (TOD), BFCL V3 (LA), and API-Bank (LA). Our analyses reveal that specialized approaches excel in one domain but underperform in the other. To bridge this chasm, we introduce CALM (Conversational Agentic Language Model), a unified approach that integrates both conversational and agentic capabilities. We created CALM-IT, a carefully constructed multi-task dataset that interleaves multi-turn ReAct reasoning with complex API usage. Using CALM-IT, we train three models, CALM 8B, CALM 70B, and CALM 405B, which outperform top domain-specific models, including GPT-4o, across all three benchmarks.

NaRLE: Natural Language Models using Reinforcement Learning with Emotion Feedback

Oct 05, 2021

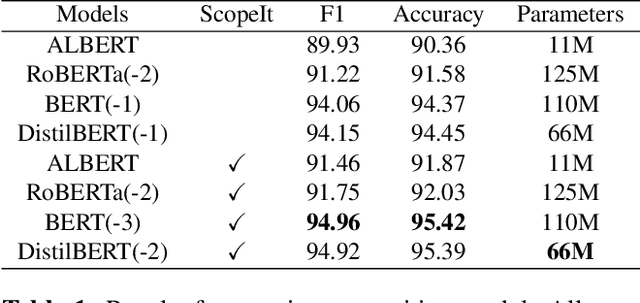

Abstract: Current research in dialogue systems focuses on conversational assistants that handle short conversations in either task-oriented or open-domain settings. In this paper, we focus on improving task-based conversational assistants online, primarily those working on document-type conversations (e.g., emails) whose contents may or may not be completely related to the assistant's task. We propose "NARLE", a deep reinforcement learning (RL) framework for improving the natural language understanding (NLU) component of dialogue systems online without the need to collect human labels for customer data. The proposed solution associates user emotion with the assistant's action and uses that signal to improve NLU models via policy gradients. For two intent classification problems, we empirically show that using reinforcement learning to fine-tune pre-trained supervised learning models improves performance by up to 43%. Furthermore, we demonstrate the robustness of the method to partial and noisy implicit feedback.
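The idea of turning user emotion into a policy-gradient signal for an intent classifier can be illustrated with a minimal REINFORCE sketch. This is not the NaRLE implementation. The linear softmax classifier (`IntentPolicy`), the `EMOTION_REWARD` mapping from a detected emotion to a scalar reward, and the `simulated_emotion` user are all hypothetical stand-ins chosen only to show the mechanics of the update.

```python
import math
import random

# Hypothetical mapping from a detected user emotion to a scalar reward.
EMOTION_REWARD = {"positive": 1.0, "neutral": 0.0, "negative": -1.0}


def softmax(logits):
    # Subtract the max logit for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class IntentPolicy:
    """Hypothetical linear softmax intent classifier treated as a stochastic policy."""

    def __init__(self, n_features, n_intents):
        self.w = [[0.0] * n_features for _ in range(n_intents)]

    def probs(self, x):
        logits = [sum(wi * xi for wi, xi in zip(row, x)) for row in self.w]
        return softmax(logits)

    def act(self, x, rng):
        # Sample an intent (the assistant's action) from the current policy.
        p = self.probs(x)
        return rng.choices(range(len(p)), weights=p)[0], p

    def reinforce_update(self, x, action, p, reward, lr=0.1):
        # REINFORCE: the gradient of log pi(action | x) w.r.t. the logits is
        # onehot(action) - p; scale it by the reward and the learning rate.
        for k, row in enumerate(self.w):
            coef = lr * reward * ((1.0 if k == action else 0.0) - p[k])
            for j in range(len(row)):
                row[j] += coef * x[j]


def simulated_emotion(action, true_intent):
    # Hypothetical user: reacts positively only when the intent is correct.
    return "positive" if action == true_intent else "negative"


rng = random.Random(0)
policy = IntentPolicy(n_features=2, n_intents=3)
x, true_intent = [1.0, 0.5], 1
for _ in range(200):
    a, p = policy.act(x, rng)
    reward = EMOTION_REWARD[simulated_emotion(a, true_intent)]
    policy.reinforce_update(x, a, p, reward)

print(policy.probs(x))
```

Because a negative emotion yields a negative reward, the update moves probability mass away from the chosen intent, while a positive emotion moves it toward that intent. In a real system an emotion classifier would supply the signal, and that feedback may be partial or noisy.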