Jasabanta Patro

Multimodal Fact Checking with Unified Visual, Textual, and Contextual Representations

Aug 07, 2025Abstract:The growing rate of multimodal misinformation, where claims are supported by both text and images, poses significant challenges to fact-checking systems that rely primarily on textual evidence. In this work, we have proposed a unified framework for fine-grained multimodal fact verification called "MultiCheck", designed to reason over structured textual and visual signals. Our architecture combines dedicated encoders for text and images with a fusion module that captures cross-modal relationships using element-wise interactions. A classification head then predicts the veracity of a claim, supported by a contrastive learning objective that encourages semantic alignment between claim-evidence pairs in a shared latent space. We evaluate our approach on the Factify 2 dataset, achieving a weighted F1 score of 0.84, substantially outperforming the baseline. These results highlight the effectiveness of explicit multimodal reasoning and demonstrate the potential of our approach for scalable and interpretable fact-checking in complex, real-world scenarios.

On VLMs for Diverse Tasks in Multimodal Meme Classification

May 27, 2025Abstract:In this paper, we present a comprehensive and systematic analysis of vision-language models (VLMs) for disparate meme classification tasks. We introduced a novel approach that generates a VLM-based understanding of meme images and fine-tunes the LLMs on textual understanding of the embedded meme text for improving the performance. Our contributions are threefold: (1) Benchmarking VLMs with diverse prompting strategies purposely to each sub-task; (2) Evaluating LoRA fine-tuning across all VLM components to assess performance gains; and (3) Proposing a novel approach where detailed meme interpretations generated by VLMs are used to train smaller language models (LLMs), significantly improving classification. The strategy of combining VLMs with LLMs improved the baseline performance by 8.34%, 3.52% and 26.24% for sarcasm, offensive and sentiment classification, respectively. Our results reveal the strengths and limitations of VLMs and present a novel strategy for meme understanding.

Improving the fact-checking performance of language models by relying on their entailment ability

May 21, 2025Abstract:Automated fact-checking is a crucial task in this digital age. To verify a claim, current approaches majorly follow one of two strategies i.e. (i) relying on embedded knowledge of language models, and (ii) fine-tuning them with evidence pieces. While the former can make systems to hallucinate, the later have not been very successful till date. The primary reason behind this is that fact verification is a complex process. Language models have to parse through multiple pieces of evidence before making a prediction. Further, the evidence pieces often contradict each other. This makes the reasoning process even more complex. We proposed a simple yet effective approach where we relied on entailment and the generative ability of language models to produce ''supporting'' and ''refuting'' justifications (for the truthfulness of a claim). We trained language models based on these justifications and achieved superior results. Apart from that, we did a systematic comparison of different prompting and fine-tuning strategies, as it is currently lacking in the literature. Some of our observations are: (i) training language models with raw evidence sentences registered an improvement up to 8.20% in macro-F1, over the best performing baseline for the RAW-FC dataset, (ii) similarly, training language models with prompted claim-evidence understanding (TBE-2) registered an improvement (with a margin up to 16.39%) over the baselines for the same dataset, (iii) training language models with entailed justifications (TBE-3) outperformed the baselines by a huge margin (up to 28.57% and 44.26% for LIAR-RAW and RAW-FC, respectively). We have shared our code repository to reproduce the results.

Revealing the impact of synthetic native samples and multi-tasking strategies in Hindi-English code-mixed humour and sarcasm detection

Dec 17, 2024

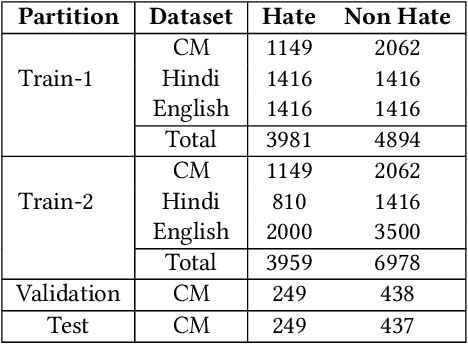

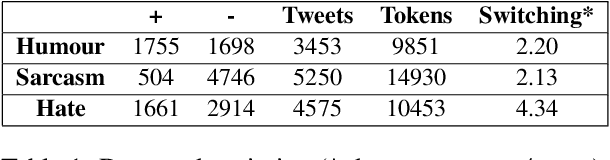

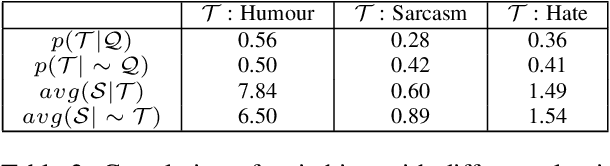

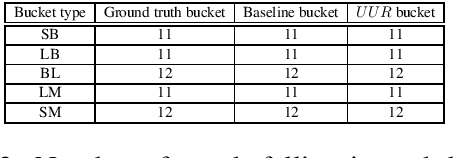

Abstract:In this paper, we reported our experiments with various strategies to improve code-mixed humour and sarcasm detection. We did all of our experiments for Hindi-English code-mixed scenario, as we have the linguistic expertise for the same. We experimented with three approaches, namely (i) native sample mixing, (ii) multi-task learning (MTL), and (iii) prompting very large multilingual language models (VMLMs). In native sample mixing, we added monolingual task samples in code-mixed training sets. In MTL learning, we relied on native and code-mixed samples of a semantically related task (hate detection in our case). Finally, in our third approach, we evaluated the efficacy of VMLMs via few-shot context prompting. Some interesting findings we got are (i) adding native samples improved humor (raising the F1-score up to 6.76%) and sarcasm (raising the F1-score up to 8.64%) detection, (ii) training MLMs in an MTL framework boosted performance for both humour (raising the F1-score up to 10.67%) and sarcasm (increment up to 12.35% in F1-score) detection, and (iii) prompting VMLMs couldn't outperform the other approaches. Finally, our ablation studies and error analysis discovered the cases where our model is yet to improve. We provided our code for reproducibility.

Improving code-mixed hate detection by native sample mixing: A case study for Hindi-English code-mixed scenario

May 31, 2024

Abstract:Hate detection has long been a challenging task for the NLP community. The task becomes complex in a code-mixed environment because the models must understand the context and the hate expressed through language alteration. Compared to the monolingual setup, we see very less work on code-mixed hate as large-scale annotated hate corpora are unavailable to make the study. To overcome this bottleneck, we propose using native language hate samples. We hypothesise that in the era of multilingual language models (MLMs), hate in code-mixed settings can be detected by majorly relying on the native language samples. Even though the NLP literature reports the effectiveness of MLMs on hate detection in many cross-lingual settings, their extensive evaluation in a code-mixed scenario is yet to be done. This paper attempts to fill this gap through rigorous empirical experiments. We considered the Hindi-English code-mixed setup as a case study as we have the linguistic expertise for the same. Some of the interesting observations we got are: (i) adding native hate samples in the code-mixed training set, even in small quantity, improved the performance of MLMs for code-mixed hate detection, (ii) MLMs trained with native samples alone observed to be detecting code-mixed hate to a large extent, (iii) The visualisation of attention scores revealed that, when native samples were included in training, MLMs could better focus on the hate emitting words in the code-mixed context, and (iv) finally, when hate is subjective or sarcastic, naively mixing native samples doesn't help much to detect code-mixed hate. We will release the data and code repository to reproduce the reported results.

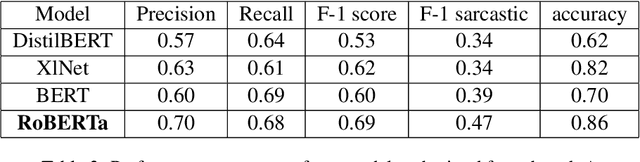

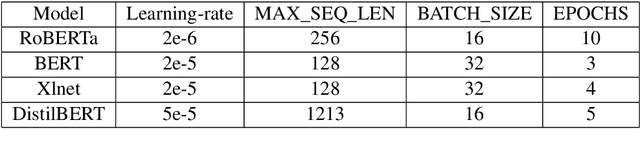

IISERB Brains at SemEval 2022 Task 6: A Deep-learning Framework to Identify Intended Sarcasm in English

Mar 04, 2022

Abstract:This paper describes the system architectures and the models submitted by our team "IISERBBrains" to SemEval 2022 Task 6 competition. We contested for all three sub-tasks floated for the English dataset. On the leader-board, wegot19th rank out of43 teams for sub-taskA, the 8th rank out of22 teams for sub-task B,and13th rank out of 16 teams for sub-taskC. Apart from the submitted results and models, we also report the other models and results that we obtained through our experiments after organizers published the gold labels of their evaluation data

Code-switching patterns can be an effective route to improve performance of downstream NLP applications: A case study of humour, sarcasm and hate speech detection

May 05, 2020

Abstract:In this paper we demonstrate how code-switching patterns can be utilised to improve various downstream NLP applications. In particular, we encode different switching features to improve humour, sarcasm and hate speech detection tasks. We believe that this simple linguistic observation can also be potentially helpful in improving other similar NLP applications.

All that is English may be Hindi: Enhancing language identification through automatic ranking of likeliness of word borrowing in social media

Jul 29, 2017

Abstract:In this paper, we present a set of computational methods to identify the likeliness of a word being borrowed, based on the signals from social media. In terms of Spearman correlation coefficient values, our methods perform more than two times better (nearly 0.62) in predicting the borrowing likeliness compared to the best performing baseline (nearly 0.26) reported in literature. Based on this likeliness estimate we asked annotators to re-annotate the language tags of foreign words in predominantly native contexts. In 88 percent of cases the annotators felt that the foreign language tag should be replaced by native language tag, thus indicating a huge scope for improvement of automatic language identification systems.

Is this word borrowed? An automatic approach to quantify the likeliness of borrowing in social media

Mar 15, 2017

Abstract:Code-mixing or code-switching are the effortless phenomena of natural switching between two or more languages in a single conversation. Use of a foreign word in a language; however, does not necessarily mean that the speaker is code-switching because often languages borrow lexical items from other languages. If a word is borrowed, it becomes a part of the lexicon of a language; whereas, during code-switching, the speaker is aware that the conversation involves foreign words or phrases. Identifying whether a foreign word used by a bilingual speaker is due to borrowing or code-switching is a fundamental importance to theories of multilingualism, and an essential prerequisite towards the development of language and speech technologies for multilingual communities. In this paper, we present a series of novel computational methods to identify the borrowed likeliness of a word, based on the social media signals. We first propose context based clustering method to sample a set of candidate words from the social media data.Next, we propose three novel and similar metrics based on the usage of these words by the users in different tweets; these metrics were used to score and rank the candidate words indicating their borrowed likeliness. We compare these rankings with a ground truth ranking constructed through a human judgment experiment. The Spearman's rank correlation between the two rankings (nearly 0.62 for all the three metric variants) is more than double the value (0.26) of the most competitive existing baseline reported in the literature. Some other striking observations are, (i) the correlation is higher for the ground truth data elicited from the younger participants (age less than 30) than that from the older participants, and (ii )those participants who use mixed-language for tweeting the least, provide the best signals of borrowing.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge