Jamal Bentahar

AtlasPatch: An Efficient and Scalable Tool for Whole Slide Image Preprocessing in Computational Pathology

Feb 03, 2026

Abstract:Whole-slide image (WSI) preprocessing, typically comprising tissue detection followed by patch extraction, is foundational to AI-driven computational pathology workflows. This remains a major computational bottleneck as existing tools either rely on inaccurate heuristic thresholding for tissue detection, or adopt AI-based approaches trained on limited-diversity data that operate at the patch level, incurring substantial computational complexity. We present AtlasPatch, an efficient and scalable slide preprocessing framework for accurate tissue detection and high-throughput patch extraction with minimal computational overhead. AtlasPatch's tissue detection module is trained on a heterogeneous and semi-manually annotated dataset of ~30,000 WSI thumbnails, using efficient fine-tuning of the Segment-Anything model. The tool extrapolates tissue masks from thumbnails to full-resolution slides to extract patch coordinates at user-specified magnifications, with options to stream patches directly into common image encoders for embedding or store patch images, all efficiently parallelized across CPUs and GPUs. We assess AtlasPatch across segmentation precision, computational complexity, and downstream multiple-instance learning, matching state-of-the-art performance while operating at a fraction of their computational cost. AtlasPatch is open-source and available at https://github.com/AtlasAnalyticsLab/AtlasPatch.

Comprehensive Review of Reinforcement Learning for Medical Ultrasound Imaging

Mar 19, 2025

Abstract:Medical Ultrasound (US) imaging has seen increasing demand over the past years, becoming one of the most preferred imaging modalities in clinical practice due to its affordability, portability, and real-time capabilities. However, it faces several challenges that limit its applicability, such as operator dependency, variability in interpretation, and limited resolution, which are amplified by the low availability of trained experts. This calls for autonomous systems that can reduce the dependency on humans for increased efficiency and throughput. Reinforcement Learning (RL) is a rapidly advancing field under Artificial Intelligence (AI) that allows the development of autonomous and intelligent agents that are capable of executing complex tasks through rewarded interactions with their environments. Existing surveys on advancements in the US scanning domain predominantly focus on partially autonomous solutions leveraging AI for scanning guidance, organ identification, plane recognition, and diagnosis. However, none of these surveys explore the intersection between the stages of the US process and the recent advancements in RL solutions. To bridge this gap, this review proposes a comprehensive taxonomy that integrates the stages of the US process with the RL development pipeline. This taxonomy not only highlights recent RL advancements in the US domain but also identifies unresolved challenges crucial for achieving fully autonomous US systems. This work aims to offer a thorough review of current research efforts, highlighting the potential of RL in building autonomous US solutions while identifying limitations and opportunities for further advancements in this field.

CACTUS: An Open Dataset and Framework for Automated Cardiac Assessment and Classification of Ultrasound Images Using Deep Transfer Learning

Mar 07, 2025

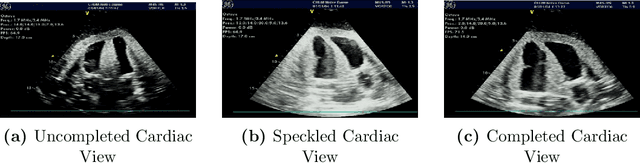

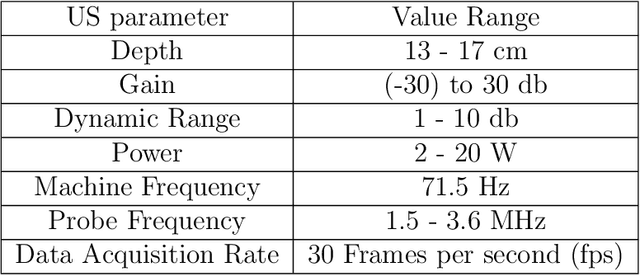

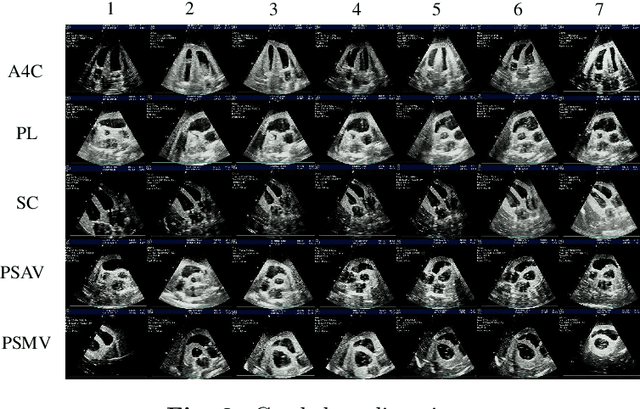

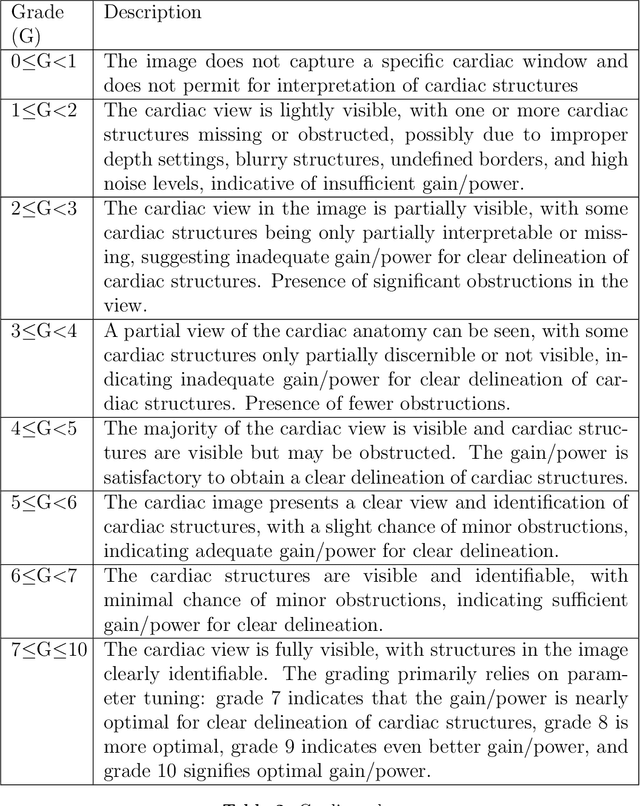

Abstract:Cardiac ultrasound (US) scanning is a commonly used technique in cardiology to diagnose the health of the heart and its proper functioning. Therefore, it is necessary to consider ways to automate these tasks and assist medical professionals in classifying and assessing cardiac US images. Machine learning (ML) techniques are regarded as a prominent solution due to their success in numerous applications aimed at enhancing the medical field, including addressing the shortage of echography technicians. However, the limited availability of medical data presents a significant barrier to applying ML in cardiology, particularly regarding US images of the heart. This paper addresses this challenge by introducing the first open graded dataset for Cardiac Assessment and ClassificaTion of UltraSound (CACTUS), which is available online. This dataset contains images obtained from scanning a CAE Blue Phantom and representing various heart views and different quality levels, exceeding the conventional cardiac views typically found in the literature. Additionally, the paper introduces a Deep Learning (DL) framework consisting of two main components. The first component classifies cardiac US images based on the heart view using a Convolutional Neural Network (CNN). The second component uses Transfer Learning (TL) to fine-tune the knowledge from the first component and create a model for grading and assessing cardiac images. The framework demonstrates high performance in both classification and grading, achieving up to 99.43% accuracy and as low as 0.3067 error, respectively. To showcase its robustness, the framework is further fine-tuned using new images representing additional cardiac views and compared to several other state-of-the-art architectures. The framework's outcomes and performance in handling real-time scans were also assessed using a questionnaire answered by cardiac experts.

Blockchain-assisted Demonstration Cloning for Multi-Agent Deep Reinforcement Learning

Jan 19, 2025

Abstract:Multi-Agent Deep Reinforcement Learning (MDRL) is a promising research area in which agents learn complex behaviors in cooperative or competitive environments. However, MDRL comes with several challenges that hinder its usability, including sample efficiency, curse of dimensionality, and environment exploration. Recent works proposing Federated Reinforcement Learning (FRL) to tackle these issues suffer from problems related to model restrictions and maliciousness. Other proposals using reward shaping require considerable engineering and could lead to local optima. In this paper, we propose a novel Blockchain-assisted Multi-Expert Demonstration Cloning (MEDC) framework for MDRL. The proposed method utilizes expert demonstrations in guiding the learning of new MDRL agents, by suggesting exploration actions in the environment. A model sharing framework on Blockchain is designed to allow users to share their trained models, which can be allocated as expert models to requesting users to aid in training MDRL systems. A Consortium Blockchain is adopted to enable traceable and autonomous execution without the need for a single trusted entity. Smart Contracts are designed to manage user and model allocation, and the models are shared using IPFS. The proposed framework is tested on several applications, and is benchmarked against existing methods in FRL, Reward Shaping, and Imitation Learning-assisted RL. The results show that the proposed framework outperforms these methods in terms of learning speed and resiliency to faulty and malicious models.

Adaptive Target Localization under Uncertainty using Multi-Agent Deep Reinforcement Learning with Knowledge Transfer

Jan 19, 2025

Abstract:Target localization is a critical task in sensitive applications, where multiple sensing agents communicate and collaborate to identify the target location based on sensor readings. Existing approaches investigated the use of Multi-Agent Deep Reinforcement Learning (MADRL) to tackle target localization. Nevertheless, these methods do not consider practical uncertainties, like false alarms when the target does not exist or when it is unreachable due to environmental complexities. To address these drawbacks, this work proposes a novel MADRL-based method for target localization in uncertain environments. The proposed MADRL method employs Proximal Policy Optimization to optimize the decision-making of sensing agents, which is represented in the form of an actor-critic structure using Convolutional Neural Networks. The observations of the agents are designed in an optimized manner to capture essential information in the environment, and a team-based reward function is proposed to produce cooperative agents. The MADRL method covers three action dimensionalities that control the agents' mobility to search the area for the target, detect its existence, and determine its reachability. Using the concept of Transfer Learning, a Deep Learning model builds on the knowledge from the MADRL model to accurately estimate the target location if it is unreachable, resulting in shared representations between the models for faster learning and lower computational complexity. Collectively, the final combined model is capable of searching for the target, determining its existence and reachability, and estimating its location accurately. The proposed method is tested using a radioactive target localization environment and benchmarked against existing methods, showing its efficacy.

Machine Learning Innovations in CPR: A Comprehensive Survey on Enhanced Resuscitation Techniques

Nov 03, 2024

Abstract:This survey paper explores the transformative role of Machine Learning (ML) and Artificial Intelligence (AI) in Cardiopulmonary Resuscitation (CPR). It examines the evolution from traditional CPR methods to innovative ML-driven approaches, highlighting the impact of predictive modeling, AI-enhanced devices, and real-time data analysis in improving resuscitation outcomes. The paper provides a comprehensive overview, classification, and critical analysis of current applications, challenges, and future directions in this emerging field.

On-Demand Model and Client Deployment in Federated Learning with Deep Reinforcement Learning

May 12, 2024

Abstract:In Federated Learning (FL), the limited accessibility of data from diverse locations and user types poses a significant challenge due to restricted user participation. Expanding client access and diversifying data enhance models by incorporating diverse perspectives, thereby enhancing adaptability. However, challenges arise in dynamic and mobile environments where certain devices may become inaccessible as FL clients, impacting data availability and client selection methods. To address this, we propose an On-Demand solution, deploying new clients using Docker Containers on-the-fly. Our On-Demand solution, employing Deep Reinforcement Learning (DRL), targets client availability and selection, while considering data shifts and container deployment complexities. It employs an autonomous end-to-end solution for handling model deployment and client selection. The DRL strategy uses a Markov Decision Process (MDP) framework, with a Master Learner and a Joiner Learner. The designed cost functions represent the complexity of the dynamic client deployment and selection. Simulated tests show that our architecture can easily adjust to changes in the environment and respond to On-Demand requests. This underscores its ability to improve client availability, capability, accuracy, and learning efficiency, surpassing heuristic and tabular reinforcement learning solutions.

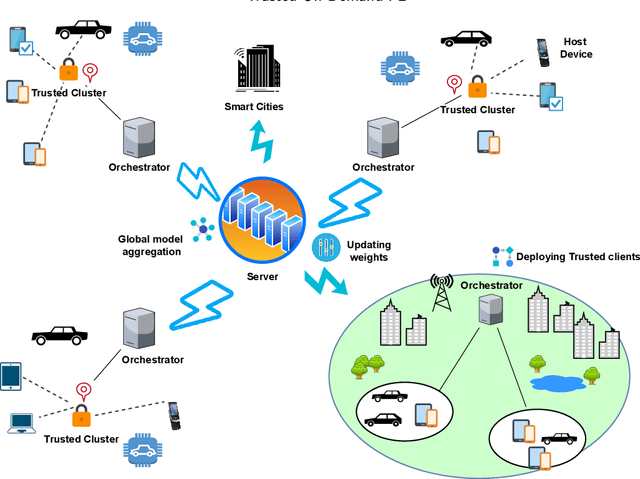

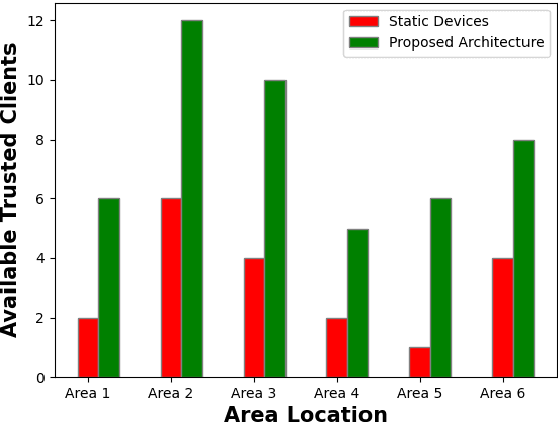

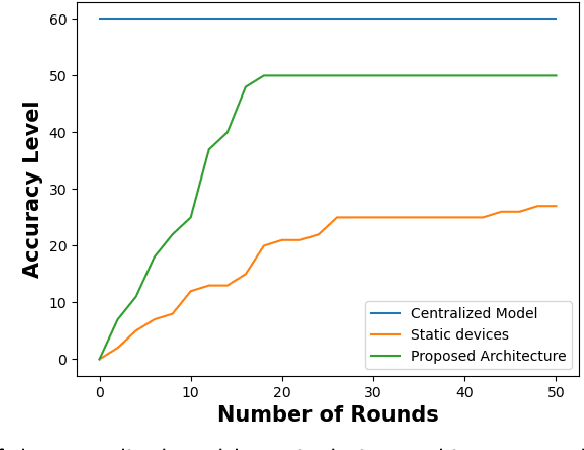

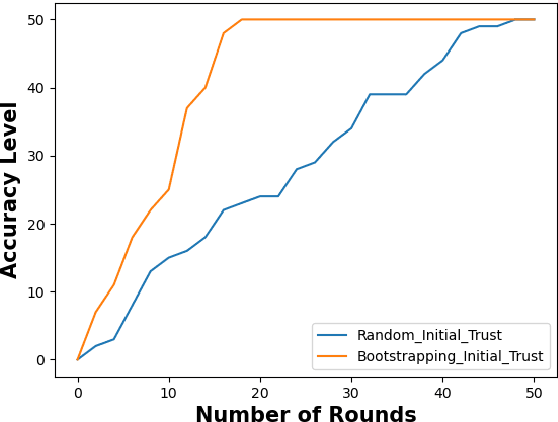

Trust Driven On-Demand Scheme for Client Deployment in Federated Learning

May 01, 2024

Abstract:Containerization technology plays a crucial role in Federated Learning (FL) setups, expanding the pool of potential clients and ensuring the availability of specific subsets for each learning iteration. However, doubts arise about the trustworthiness of devices deployed as clients in FL scenarios, especially when container deployment processes are involved. Addressing these challenges is important, particularly in managing potentially malicious clients capable of disrupting the learning process or compromising the entire model. In our research, we are motivated to integrate a trust element into the client selection and model deployment processes within our system architecture. This is a feature lacking in the initial client selection and deployment mechanism of the On-Demand architecture. We introduce a trust mechanism, named "Trusted-On-Demand-FL", which establishes a relationship of trust between the server and the pool of eligible clients. Utilizing Docker in our deployment strategy enables us to monitor and validate participant actions effectively, ensuring strict adherence to agreed-upon protocols while strengthening defenses against unauthorized data access or tampering. Our simulations rely on a continuous user behavior dataset, deploying an optimization model powered by a genetic algorithm to efficiently select clients for participation. By assigning trust values to individual clients and dynamically adjusting these values, combined with penalizing malicious clients through decreased trust scores, our proposed framework identifies and isolates harmful clients. This approach not only reduces disruptions to regular rounds but also minimizes instances of round dismissal, consequently enhancing both system stability and security.

Enhancing IoT Intelligence: A Transformer-based Reinforcement Learning Methodology

Apr 05, 2024

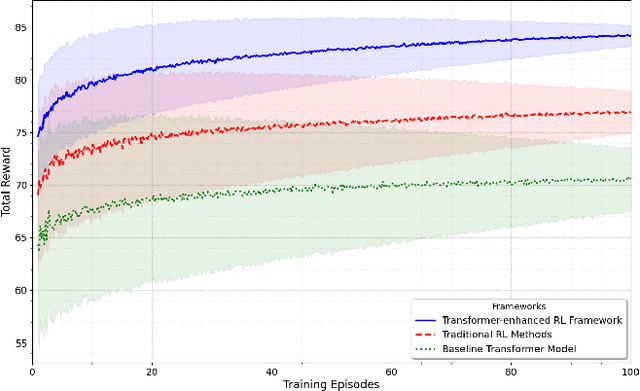

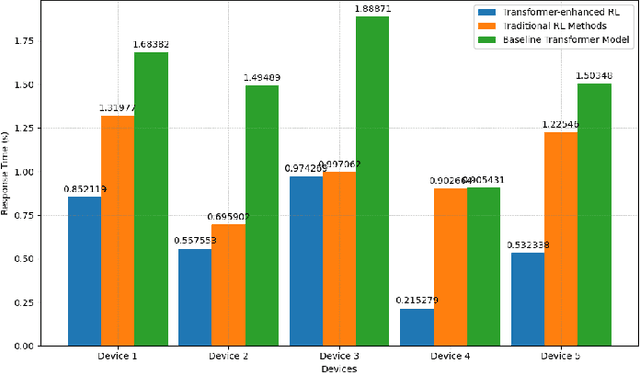

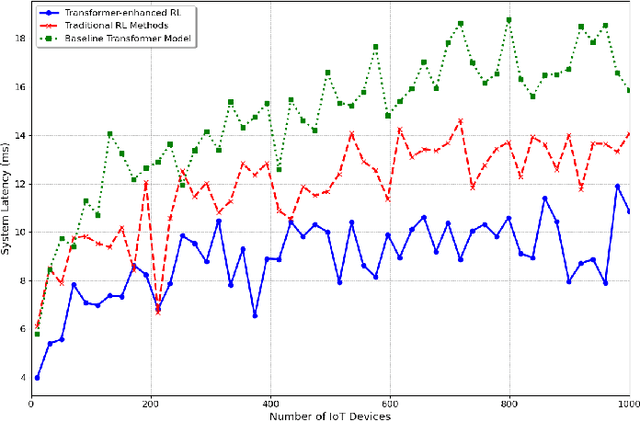

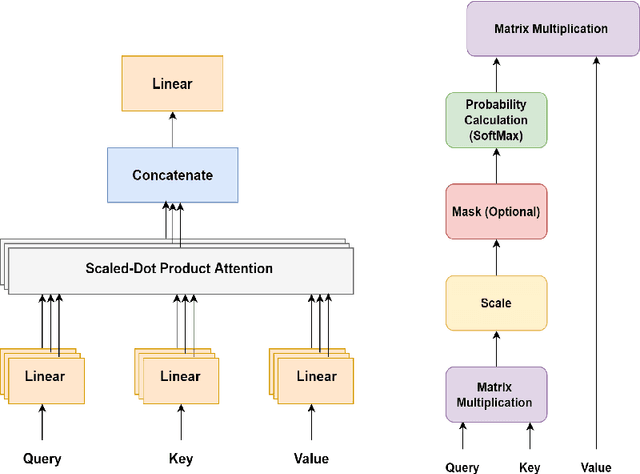

Abstract:The proliferation of the Internet of Things (IoT) has led to an explosion of data generated by interconnected devices, presenting both opportunities and challenges for intelligent decision-making in complex environments. Traditional Reinforcement Learning (RL) approaches often struggle to fully harness this data due to their limited ability to process and interpret the intricate patterns and dependencies inherent in IoT applications. This paper introduces a novel framework that integrates transformer architectures with Proximal Policy Optimization (PPO) to address these challenges. By leveraging the self-attention mechanism of transformers, our approach enhances RL agents' capacity for understanding and acting within dynamic IoT environments, leading to improved decision-making processes. We demonstrate the effectiveness of our method across various IoT scenarios, from smart home automation to industrial control systems, showing marked improvements in decision-making efficiency and adaptability. Our contributions include a detailed exploration of the transformer's role in processing heterogeneous IoT data, a comprehensive evaluation of the framework's performance in diverse environments, and a benchmark against traditional RL methods. The results indicate significant advancements in enabling RL agents to navigate the complexities of IoT ecosystems, highlighting the potential of our approach to revolutionize intelligent automation and decision-making in the IoT landscape.

A Comprehensive Survey on Applications of Transformers for Deep Learning Tasks

Jun 11, 2023

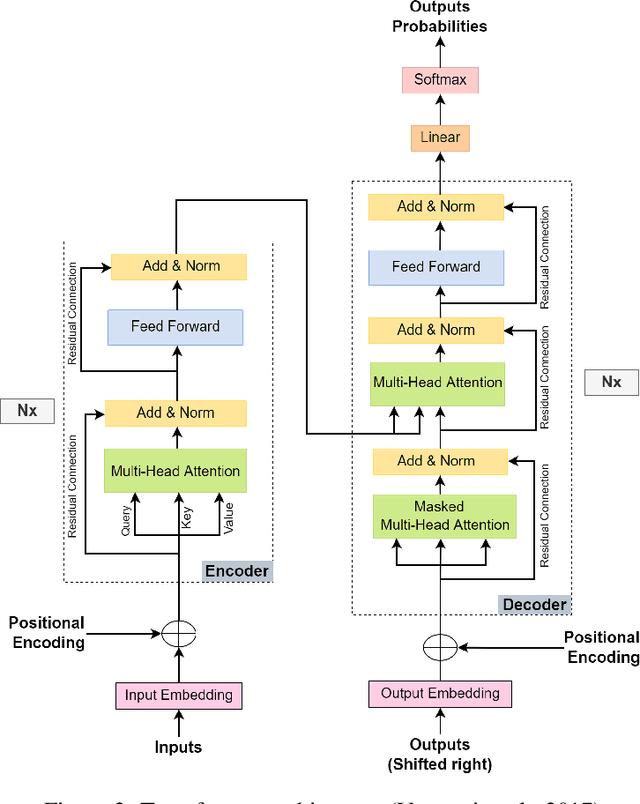

Abstract:Transformer is a deep neural network that employs a self-attention mechanism to comprehend the contextual relationships within sequential data. Unlike conventional neural networks or updated versions of Recurrent Neural Networks (RNNs) such as Long Short-Term Memory (LSTM), transformer models excel in handling long dependencies between input sequence elements and enable parallel processing. As a result, transformer-based models have attracted substantial interest among researchers in the field of artificial intelligence. This can be attributed to their immense potential and remarkable achievements, not only in Natural Language Processing (NLP) tasks but also in a wide range of domains, including computer vision, audio and speech processing, healthcare, and the Internet of Things (IoT). Although several survey papers have been published highlighting the transformer's contributions in specific fields, architectural differences, or performance evaluations, there is still a significant absence of a comprehensive survey paper encompassing its major applications across various domains. Therefore, we undertook the task of filling this gap by conducting an extensive survey of proposed transformer models from 2017 to 2022. Our survey encompasses the identification of the top five application domains for transformer-based models, namely: NLP, Computer Vision, Multi-Modality, Audio and Speech Processing, and Signal Processing. We analyze the impact of highly influential transformer-based models in these domains and subsequently classify them based on their respective tasks using a proposed taxonomy. Our aim is to shed light on the existing potential and future possibilities of transformers for enthusiastic researchers, thus contributing to the broader understanding of this groundbreaking technology.
