Jadie Adams

Steerable Pluralism: Pluralistic Alignment via Few-Shot Comparative Regression

Aug 11, 2025

Abstract:Large language models (LLMs) are currently aligned using techniques such as reinforcement learning from human feedback (RLHF). However, these methods use scalar rewards that can only reflect user preferences on average. Pluralistic alignment instead seeks to capture diverse user preferences across a set of attributes, moving beyond just helpfulness and harmlessness. Toward this end, we propose a steerable pluralistic model based on few-shot comparative regression that can adapt to individual user preferences. Our approach leverages in-context learning and reasoning, grounded in a set of fine-grained attributes, to compare response options and make aligned choices. To evaluate our algorithm, we also propose two new steerable pluralistic benchmarks by adapting the Moral Integrity Corpus (MIC) and the HelpSteer2 datasets, demonstrating the applicability of our approach to value-aligned decision-making and reward modeling, respectively. Our few-shot comparative regression approach is interpretable and compatible with different attributes and LLMs, while outperforming multiple baseline and state-of-the-art methods. Our work provides new insights and research directions in pluralistic alignment, enabling a more fair and representative use of LLMs and advancing the state-of-the-art in ethical AI.

Personalized Attacks of Social Engineering in Multi-turn Conversations -- LLM Agents for Simulation and Detection

Mar 18, 2025

Abstract:The rapid advancement of conversational agents, particularly chatbots powered by Large Language Models (LLMs), poses a significant risk of social engineering (SE) attacks on social media platforms. SE detection in multi-turn, chat-based interactions is considerably more complex than single-instance detection due to the dynamic nature of these conversations. A critical factor in mitigating this threat is understanding the mechanisms through which SE attacks operate, specifically how attackers exploit vulnerabilities and how victims' personality traits contribute to their susceptibility. In this work, we propose an LLM-agentic framework, SE-VSim, to simulate SE attack mechanisms by generating multi-turn conversations. We model victim agents with varying personality traits to assess how psychological profiles influence susceptibility to manipulation. Using a dataset of over 1000 simulated conversations, we examine attack scenarios in which adversaries, posing as recruiters, funding agencies, and journalists, attempt to extract sensitive information. Based on this analysis, we present a proof of concept, SE-OmniGuard, to offer personalized protection to users by leveraging prior knowledge of the victim's personality, evaluating attack strategies, and monitoring information exchanges in conversations to identify potential SE attempts.

Point2SSM++: Self-Supervised Learning of Anatomical Shape Models from Point Clouds

May 15, 2024

Abstract:Correspondence-based statistical shape modeling (SSM) stands as a powerful technology for morphometric analysis in clinical research. SSM facilitates population-level characterization and quantification of anatomical shapes such as bones and organs, aiding in pathology and disease diagnostics and treatment planning. Despite its potential, SSM remains under-utilized in medical research due to the significant overhead associated with automatic construction methods, which demand complete, aligned shape surface representations. Additionally, optimization-based techniques rely on bias-inducing assumptions or templates and have prolonged inference times as the entire cohort is simultaneously optimized. To overcome these challenges, we introduce Point2SSM++, a principled, self-supervised deep learning approach that directly learns correspondence points from point cloud representations of anatomical shapes. Point2SSM++ is robust to misaligned and inconsistent input, providing SSM that accurately samples individual shape surfaces while effectively capturing population-level statistics. Additionally, we present principled extensions of Point2SSM++ to adapt it for dynamic spatiotemporal and multi-anatomy use cases, demonstrating the broad versatility of the Point2SSM++ framework. Through extensive validation across diverse anatomies, evaluation metrics, and clinically relevant downstream tasks, we demonstrate Point2SSM++'s superiority over existing state-of-the-art deep learning models and traditional approaches. Point2SSM++ substantially enhances the feasibility of SSM generation and significantly broadens its array of potential clinical applications.
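As a rough illustration of why correspondence points make population-level statistics possible, the sketch below computes a mean shape and a per-point spread for a toy cohort. It is not taken from the Point2SSM++ paper: the function names `mean_shape` and `per_point_variance` and the coordinate values are hypothetical, and the shapes are assumed to be already aligned, with point `i` marking the same anatomical location on every shape.

```python
from statistics import fmean


def mean_shape(shapes):
    """Average each correspondence point across the cohort (hypothetical helper)."""
    n_points = len(shapes[0])
    return [
        tuple(fmean(shape[i][axis] for shape in shapes) for axis in range(3))
        for i in range(n_points)
    ]


def per_point_variance(shapes):
    """Mean squared distance of each correspondence point from its cohort mean."""
    mean = mean_shape(shapes)
    return [
        fmean(
            sum((shape[i][axis] - mean[i][axis]) ** 2 for axis in range(3))
            for shape in shapes
        )
        for i in range(len(mean))
    ]


# Toy cohort with made-up values: three shapes, two corresponding 3D points each.
# Point 0 is identical on every shape; point 1 moves along the x axis.
cohort = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0)],
]

if __name__ == "__main__":
    print(mean_shape(cohort))
    print(per_point_variance(cohort))
```

Because index `i` refers to the same location on every shape, the statistics can be taken point by point; without correspondence, averaging raw point clouds in this way would not be meaningful.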

Weakly Supervised Bayesian Shape Modeling from Unsegmented Medical Images

May 15, 2024

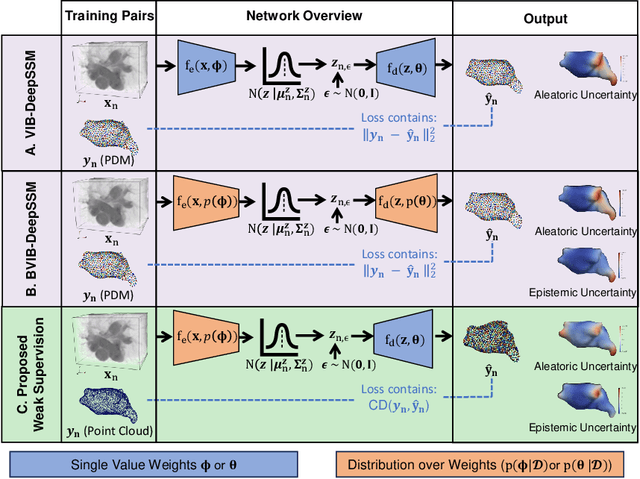

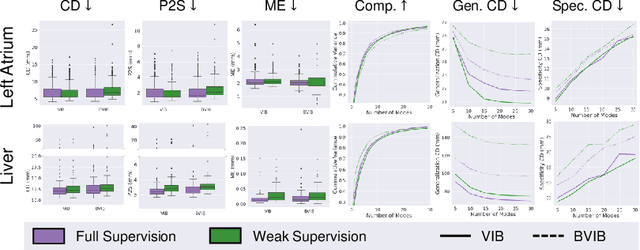

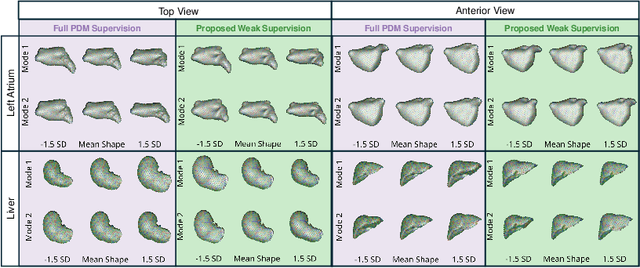

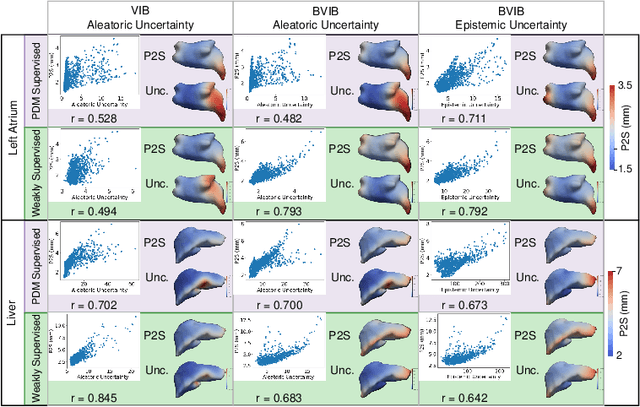

Abstract:Anatomical shape analysis plays a pivotal role in clinical research and hypothesis testing, where the relationship between form and function is paramount. Correspondence-based statistical shape modeling (SSM) facilitates population-level morphometrics but requires a cumbersome, potentially bias-inducing construction pipeline. Recent advancements in deep learning have streamlined this process in inference by providing SSM prediction directly from unsegmented medical images. However, the proposed approaches are fully supervised and require utilizing a traditional SSM construction pipeline to create training data, thus inheriting the associated burdens and limitations. To address these challenges, we introduce a weakly supervised deep learning approach to predict SSM from images using point cloud supervision. Specifically, we propose reducing the supervision associated with the state-of-the-art fully Bayesian variational information bottleneck DeepSSM (BVIB-DeepSSM) model. BVIB-DeepSSM is an effective, principled framework for predicting probabilistic anatomical shapes from images with quantification of both aleatoric and epistemic uncertainties. Whereas the original BVIB-DeepSSM method requires strong supervision in the form of ground truth correspondence points, the proposed approach utilizes weak supervision via point cloud surface representations, which are more readily obtainable. Furthermore, the proposed approach learns correspondence in a completely data-driven manner without prior assumptions about the expected variability in shape cohort. Our experiments demonstrate that this approach yields similar accuracy and uncertainty estimation to the fully supervised scenario while substantially enhancing the feasibility of model training for SSM construction.

SCorP: Statistics-Informed Dense Correspondence Prediction Directly from Unsegmented Medical Images

Apr 27, 2024

Abstract:Statistical shape modeling (SSM) is a powerful computational framework for quantifying and analyzing the geometric variability of anatomical structures, facilitating advancements in medical research, diagnostics, and treatment planning. Traditional methods for shape modeling from imaging data demand significant manual and computational resources. Additionally, these methods necessitate repeating the entire modeling pipeline to derive shape descriptors (e.g., surface-based point correspondences) for new data. While deep learning approaches have shown promise in streamlining the construction of SSMs on new data, they still rely on traditional techniques to supervise the training of the deep networks. Moreover, the predominant linearity assumption of traditional approaches restricts their efficacy, a limitation also inherited by deep learning models trained using optimized/established correspondences. Consequently, representing complex anatomies becomes challenging. To address these limitations, we introduce SCorP, a novel framework capable of predicting surface-based correspondences directly from unsegmented images. By leveraging the shape prior learned directly from surface meshes in an unsupervised manner, the proposed model eliminates the need for an optimized shape model for training supervision. The strong shape prior acts as a teacher and regularizes the feature learning of the student network to guide it in learning image-based features that are predictive of surface correspondences. The proposed model streamlines the training and inference phases by removing the supervision for the correspondence prediction task while alleviating the linearity assumption.

Estimation and Analysis of Slice Propagation Uncertainty in 3D Anatomy Segmentation

Mar 18, 2024

Abstract:Supervised methods for 3D anatomy segmentation demonstrate superior performance but are often limited by the availability of annotated data. This limitation has led to a growing interest in self-supervised approaches in tandem with the abundance of available un-annotated data. Slice propagation has emerged as a self-supervised approach that leverages slice registration as a self-supervised task to achieve full anatomy segmentation with minimal supervision. This approach significantly reduces the need for domain expertise, time, and the cost associated with building fully annotated datasets required for training segmentation networks. However, this shift toward reduced supervision via deterministic networks raises concerns about the trustworthiness and reliability of predictions, especially when compared with more accurate supervised approaches. To address this concern, we propose the integration of calibrated uncertainty quantification (UQ) into slice propagation methods, providing insights into the model's predictive reliability and confidence levels. Incorporating uncertainty measures enhances user confidence in self-supervised approaches, thereby improving their practical applicability. We conducted experiments on three datasets for 3D abdominal segmentation using five UQ methods. The results illustrate that incorporating UQ improves not only model trustworthiness, but also segmentation accuracy. Furthermore, our analysis reveals various failure modes of slice propagation methods that might not be immediately apparent to end-users. This study opens up new research avenues to improve the accuracy and trustworthiness of slice propagation methods.

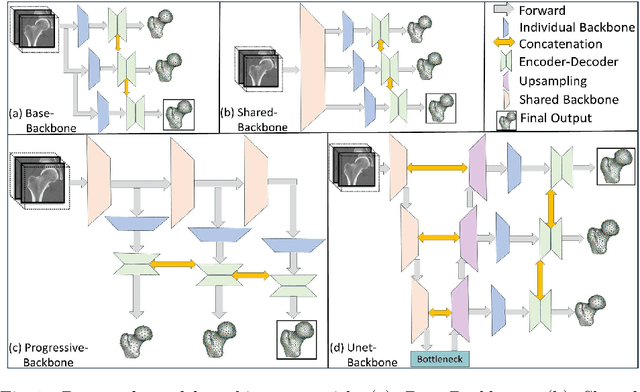

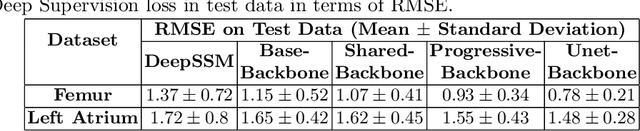

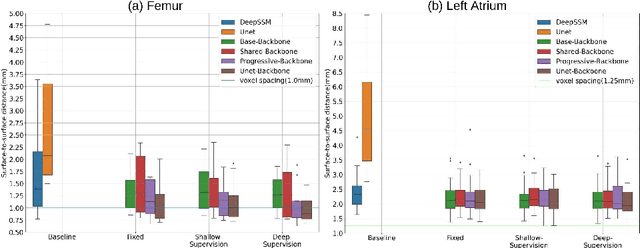

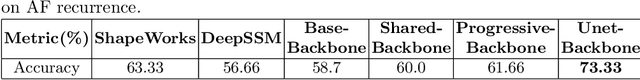

Progressive DeepSSM: Training Methodology for Image-To-Shape Deep Models

Oct 02, 2023

Abstract:Statistical shape modeling (SSM) is an enabling quantitative tool to study anatomical shapes in various medical applications. However, directly using 3D images in these applications still has a long way to go. Recent deep learning methods have paved the way for reducing the substantial preprocessing steps to construct SSMs directly from unsegmented images. Nevertheless, the performance of these models is not up to the mark. Inspired by multiscale/multiresolution learning, we propose a new training strategy, progressive DeepSSM, to train image-to-shape deep learning models. The training is performed in multiple scales, and each scale utilizes the output from the previous scale. This strategy enables the model to learn coarse shape features in the first scales and gradually learn detailed fine shape features in the later scales. We leverage shape priors via segmentation-guided multi-task learning and employ deep supervision loss to ensure learning at each scale. Experiments show the superiority of models trained by the proposed strategy from both quantitative and qualitative perspectives. This training methodology can be adopted by existing deep learning methods for inferring statistical representations of anatomies from medical images to improve model accuracy and training stability.

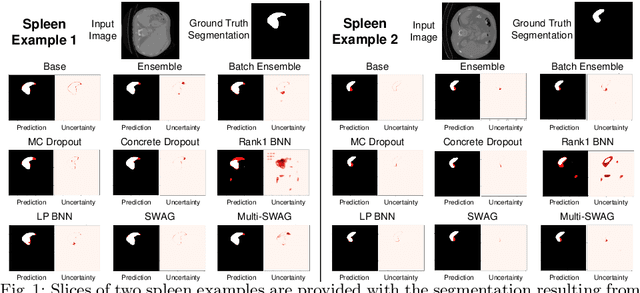

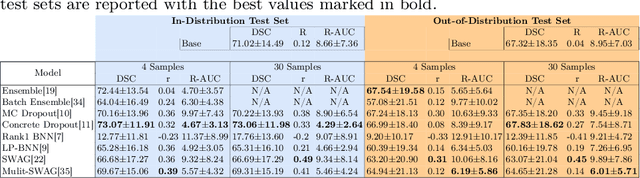

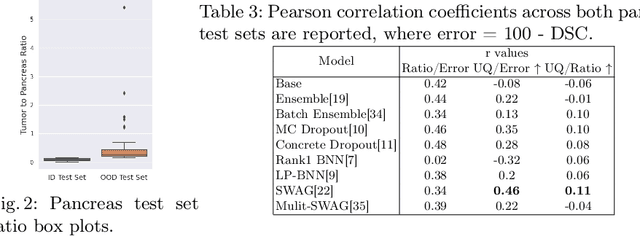

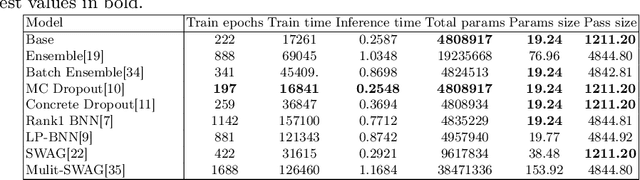

Benchmarking Scalable Epistemic Uncertainty Quantification in Organ Segmentation

Aug 15, 2023

Abstract:Deep learning based methods for automatic organ segmentation have shown promise in aiding diagnosis and treatment planning. However, quantifying and understanding the uncertainty associated with model predictions is crucial in critical clinical applications. While many techniques have been proposed for epistemic or model-based uncertainty estimation, it is unclear which method is preferred in the medical image analysis setting. This paper presents a comprehensive benchmarking study that evaluates epistemic uncertainty quantification methods in organ segmentation in terms of accuracy, uncertainty calibration, and scalability. We provide a comprehensive discussion of the strengths, weaknesses, and out-of-distribution detection capabilities of each method as well as recommendations for future improvements. These findings contribute to the development of reliable and robust models that yield accurate segmentations while effectively quantifying epistemic uncertainty.

Point2SSM: Learning Morphological Variations of Anatomies from Point Cloud

May 23, 2023

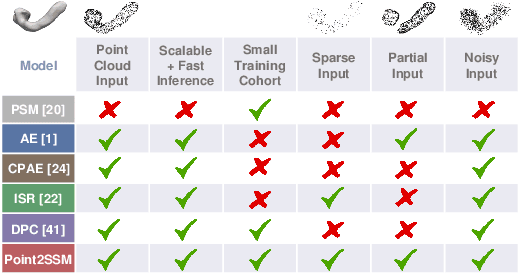

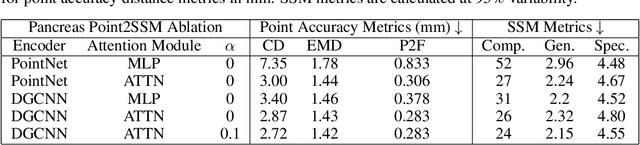

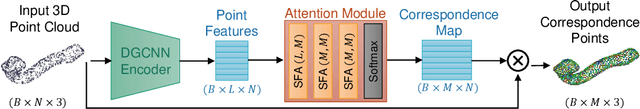

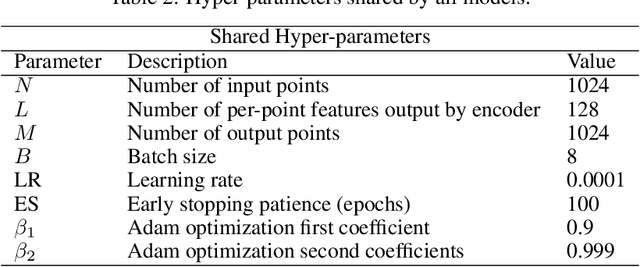

Abstract:We introduce Point2SSM, a novel unsupervised learning approach that can accurately construct correspondence-based statistical shape models (SSMs) of anatomy directly from point clouds. SSMs are crucial in clinical research for analyzing the population-level morphological variation in bones and organs. However, traditional methods for creating SSMs have limitations that hinder their widespread adoption, such as the need for noise-free surface meshes or binary volumes, reliance on assumptions or predefined templates, and simultaneous optimization of the entire cohort leading to lengthy inference times given new data. Point2SSM overcomes these barriers by providing a data-driven solution that infers SSMs directly from raw point clouds, reducing inference burdens and increasing applicability as point clouds are more easily acquired. Deep learning on 3D point clouds has seen recent success in unsupervised representation learning, point-to-point matching, and shape correspondence; however, their application to constructing SSMs of anatomies is largely unexplored. In this work, we benchmark state-of-the-art point cloud deep networks on the task of SSM and demonstrate that they are not robust to the challenges of anatomical SSM, such as noisy, sparse, or incomplete input and significantly limited training data. Point2SSM addresses these challenges via an attention-based module that provides correspondence mappings from learned point features. We demonstrate that the proposed method significantly outperforms existing networks in terms of both accurate surface sampling and correspondence, better capturing population-level statistics.

Fully Bayesian VIB-DeepSSM

May 09, 2023

Abstract:Statistical shape modeling (SSM) enables population-based quantitative analysis of anatomical shapes, informing clinical diagnosis. Deep learning approaches predict correspondence-based SSM directly from unsegmented 3D images but require calibrated uncertainty quantification, motivating Bayesian formulations. Variational information bottleneck DeepSSM (VIB-DeepSSM) is an effective, principled framework for predicting probabilistic shapes of anatomy from images with aleatoric uncertainty quantification. However, VIB is only half-Bayesian and lacks epistemic uncertainty inference. We derive a fully Bayesian VIB formulation from both the probably approximately correct (PAC)-Bayes and variational inference perspectives. We demonstrate the efficacy of two scalable approaches for Bayesian VIB with epistemic uncertainty: concrete dropout and batch ensemble. Additionally, we introduce a novel combination of the two that further enhances uncertainty calibration via multimodal marginalization. Experiments on synthetic shapes and left atrium data demonstrate that the fully Bayesian VIB network predicts SSM from images with improved uncertainty reasoning without sacrificing accuracy.
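The distinction between aleatoric and epistemic uncertainty can be made concrete with a small sketch. It assumes an ensemble whose members each predict a mean and a variance for a single scalar output, and it splits the total predictive variance with the law of total variance for an equally weighted mixture. This is a generic illustration, not the formulation derived in the paper; the function name `decompose_uncertainty` and the numbers are hypothetical.

```python
from statistics import fmean, pvariance


def decompose_uncertainty(means, variances):
    """Split predictive variance for an equally weighted ensemble (illustrative).

    aleatoric: average of the variances each member predicts (data noise).
    epistemic: spread of the members' predicted means (model disagreement).
    """
    aleatoric = fmean(variances)
    epistemic = pvariance(means)
    return aleatoric, epistemic, aleatoric + epistemic


# Made-up predictions from three hypothetical ensemble members.
member_means = [1.0, 2.0, 3.0]
member_variances = [0.5, 0.5, 0.5]

if __name__ == "__main__":
    aleatoric, epistemic, total = decompose_uncertainty(member_means, member_variances)
    print(f"aleatoric={aleatoric:.3f} epistemic={epistemic:.3f} total={total:.3f}")
```

A model that outputs only one mean and variance per input has no spread across members, so the epistemic term is zero by construction. That is the gap a fully Bayesian treatment aims to close.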
