J. Kevin O'Regan

A computational model of infant sensorimotor exploration in the mobile paradigm

Apr 24, 2025

Abstract: We present a computational model of the mechanisms that may determine infants' behavior in the "mobile paradigm". This paradigm has been used in developmental psychology to explore how infants learn the sensory effects of their actions. In this paradigm, a mobile (an articulated and movable object hanging above an infant's crib) is connected to one of the infant's limbs, prompting the infant to preferentially move that "connected" limb. This ability to detect a "sensorimotor contingency" is considered to be a foundational cognitive ability in development. To understand how infants learn sensorimotor contingencies, we built a model that attempts to replicate infant behavior. Our model incorporates a neural network, action-outcome prediction, exploration, motor noise, preferred activity level, and biologically-inspired motor control. We find that simulations with our model replicate the classic findings in the literature showing preferential movement of the connected limb. An interesting observation is that the model sometimes exhibits a burst of movement after the mobile is disconnected, casting light on a similar occasional finding in infants. In addition to these general findings, the simulations also replicate data from two recent more detailed studies using a connection with the mobile that was either gradual or all-or-none. A series of ablation studies further shows that the inclusion of mechanisms of action-outcome prediction, exploration, motor noise, and biologically-inspired motor control was essential for the model to correctly replicate infant behavior. This suggests that these components are also involved in infants' sensorimotor learning.

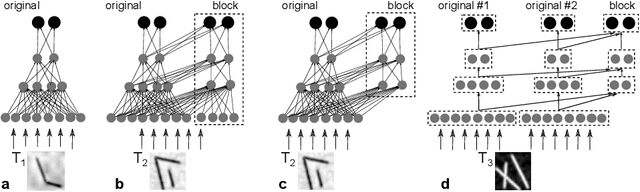

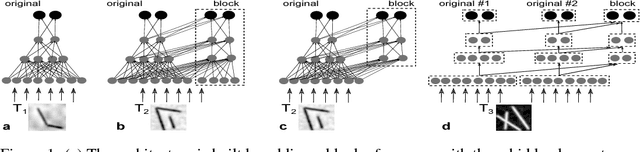

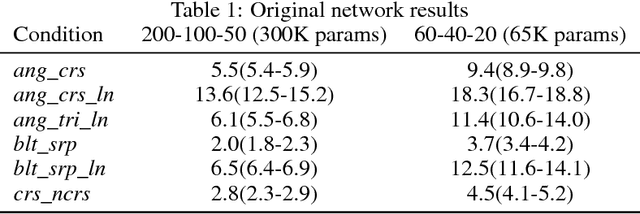

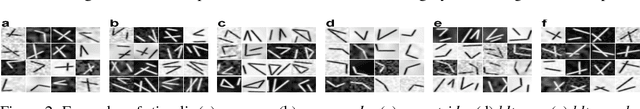

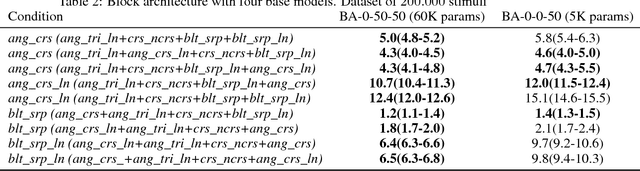

Knowledge transfer in deep block-modular neural networks

Jul 24, 2019

Abstract: Although deep neural networks (DNNs) have demonstrated impressive results during the last decade, they remain highly specialized tools, which are trained -- often from scratch -- to solve each particular task. The human brain, in contrast, significantly re-uses existing capacities when learning to solve new tasks. In the current study we explore a block-modular architecture for DNNs, which allows parts of the existing network to be re-used to solve a new task without a decrease in performance when solving the original task. We show that networks with such architectures can outperform networks trained from scratch, or perform comparably, while having to learn nearly 10 times fewer weights than the networks trained from scratch.

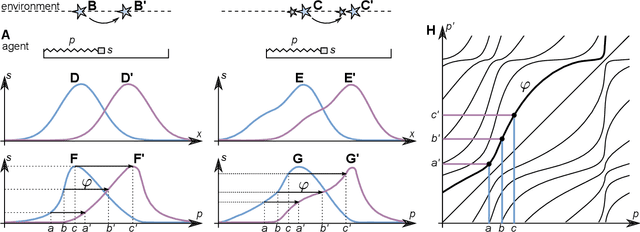

Learning abstract perceptual notions: the example of space

Jul 24, 2019

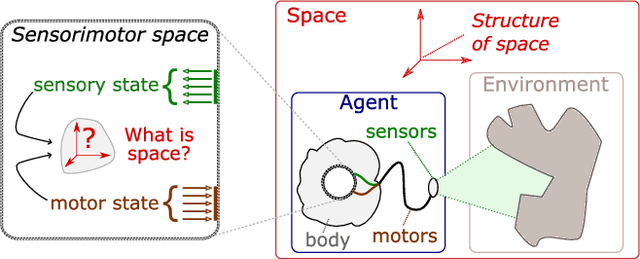

Abstract: Humans are extremely swift learners. We are able to grasp highly abstract notions, whether they come from art perception or pure mathematics. Current machine learning techniques demonstrate astonishing results in extracting patterns in information. Yet the abstract notions we possess are more than just statistical patterns in the incoming information. Sensorimotor theory suggests that they represent functions, laws, describing how the information can be transformed, or, in other words, they represent the statistics of sensorimotor changes rather than sensory inputs themselves. The aim of our work is to suggest a way for machine learning and sensorimotor theory to benefit from each other so as to pave the way toward new horizons in learning. We show in this study that a highly abstract notion, that of space, can be seen as a collection of laws of transformations of sensory information and that these laws could in theory be learned by a naive agent. As an illustration we run a one-dimensional simulation in which an agent extracts spatial knowledge in the form of internalized ("sensible") rigid displacements. The agent uses them to encode its own displacements in a way which is isometrically related to external space. Though the algorithm allowing acquisition of rigid displacements is designed *ad hoc*, we believe it can stimulate the development of unsupervised learning techniques leading to similar results.

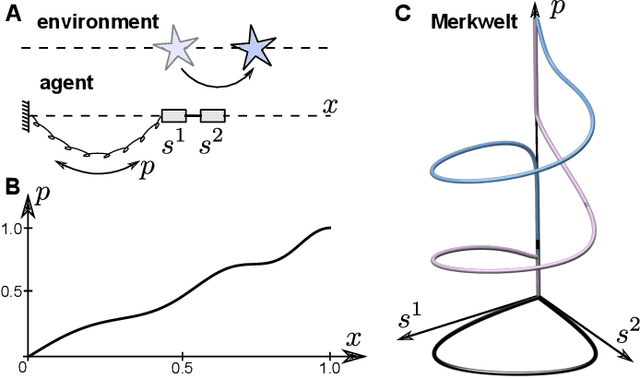

Learning agent's spatial configuration from sensorimotor invariants

Oct 03, 2018

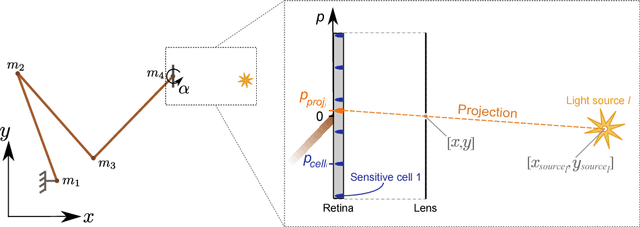

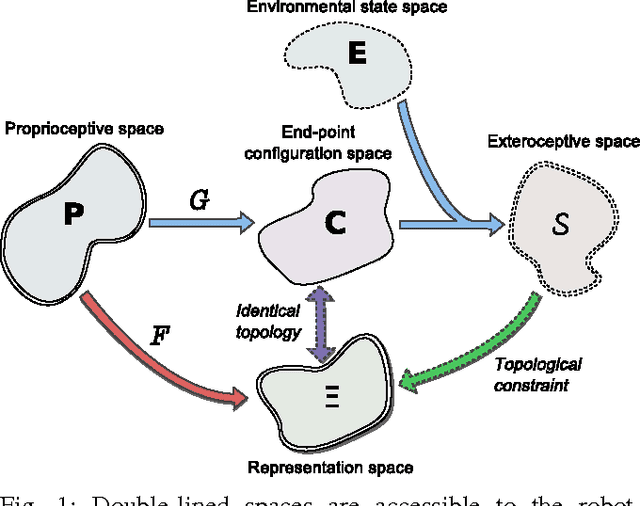

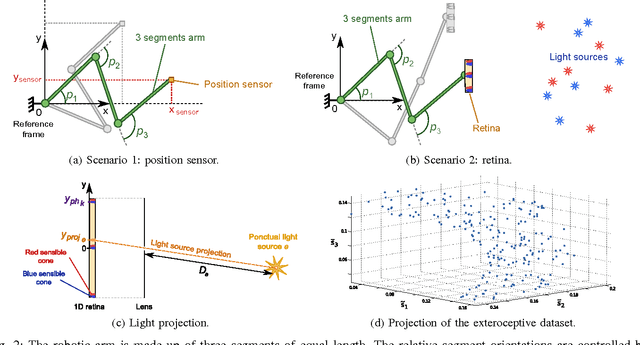

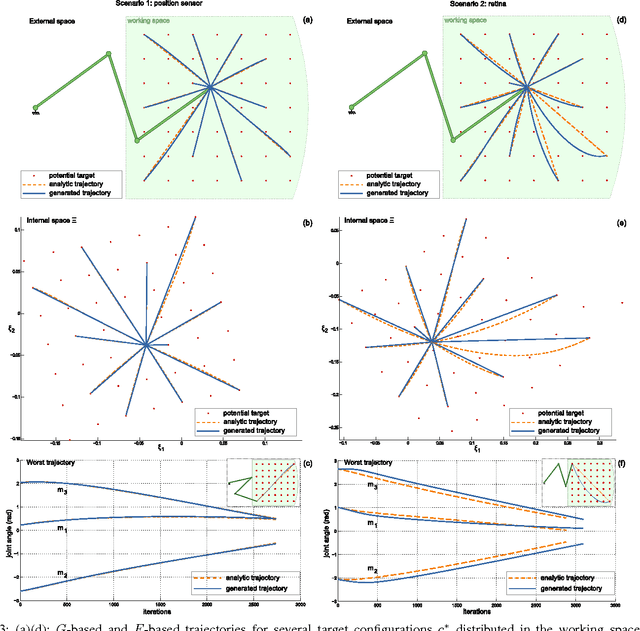

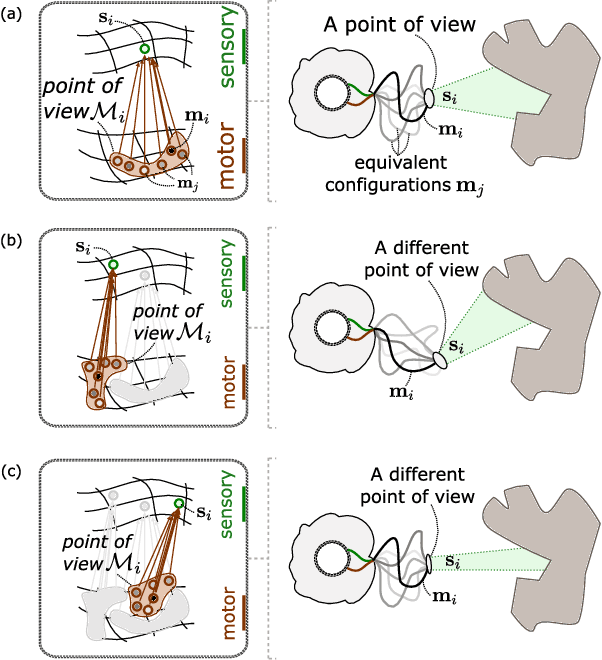

Abstract: The design of robotic systems is largely dictated by our purely human intuition about how we perceive the world. This intuition has been proven incorrect with regard to a number of critical issues, such as visual change blindness. In order to develop truly autonomous robots, we must step away from this intuition and let robotic agents develop their own way of perceiving. The robot should start from scratch and gradually develop perceptual notions, under no prior assumptions, exclusively by looking into its sensorimotor experience and identifying repetitive patterns and invariants. One of the most fundamental perceptual notions, space, cannot be an exception to this requirement. In this paper we look into the prerequisites for the emergence of simplified spatial notions on the basis of a robot's sensorimotor flow. We show that the notion of space as environment-independent cannot be deduced solely from exteroceptive information, which is highly variable and is mainly determined by the contents of the environment. The environment-independent definition of space can be approached by looking into the functions that link the motor commands to changes in exteroceptive inputs. In a sufficiently rich environment, the kernels of these functions correspond uniquely to the spatial configuration of the agent's exteroceptors. We simulate a redundant robotic arm with a retina installed at its end-point and show how this agent can learn the configuration space of its retina. The resulting manifold has the topology of the Cartesian product of a plane and a circle, and corresponds to the planar position and orientation of the retina.

* 26 pages, 5 images, published in Robotics and Autonomous Systems

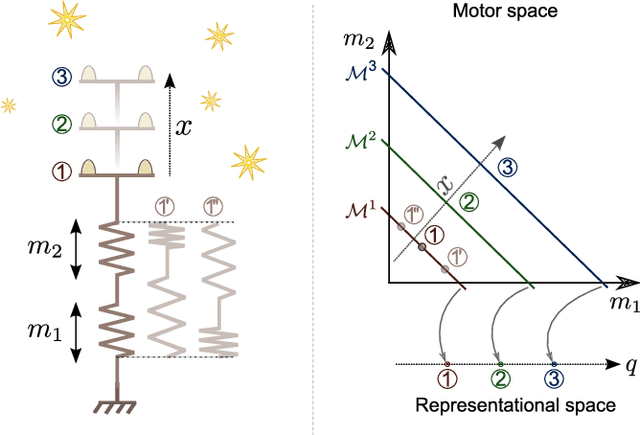

Learning an internal representation of the end-effector configuration space

Oct 03, 2018

Abstract: Current machine learning techniques proposed to automatically discover a robot's kinematics usually rely on a priori information about the robot's structure, sensor properties or end-effector position. This paper proposes a method to estimate a certain aspect of the forward kinematics model with no such information. An internal representation of the end-effector configuration is generated from unstructured proprioceptive and exteroceptive data flow under very limited assumptions. A mapping from the proprioceptive space to this representational space can then be used to control the robot.

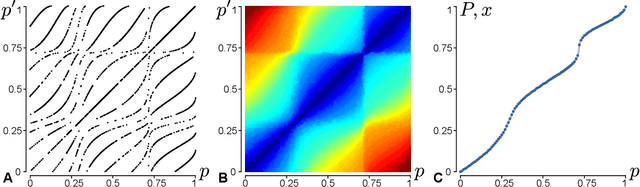

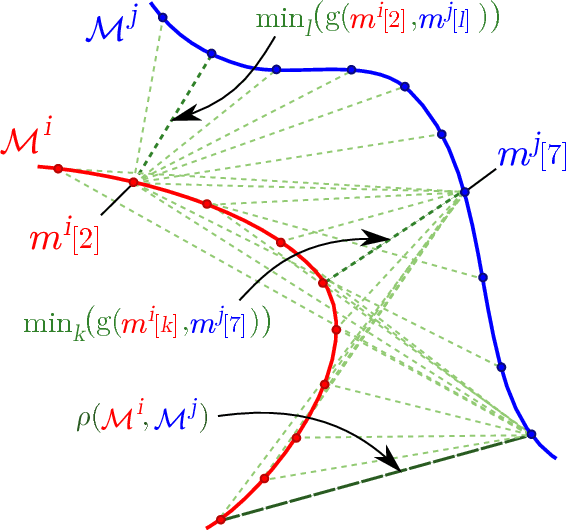

Discovering space - Grounding spatial topology and metric regularity in a naive agent's sensorimotor experience

Oct 03, 2018

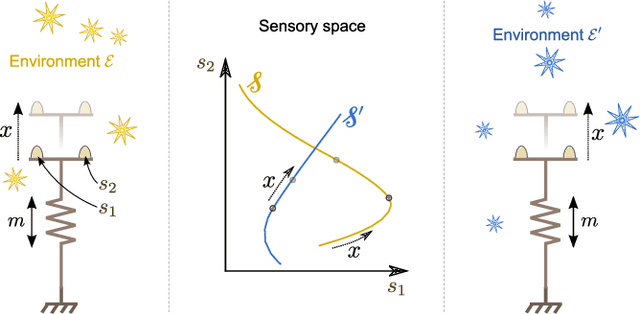

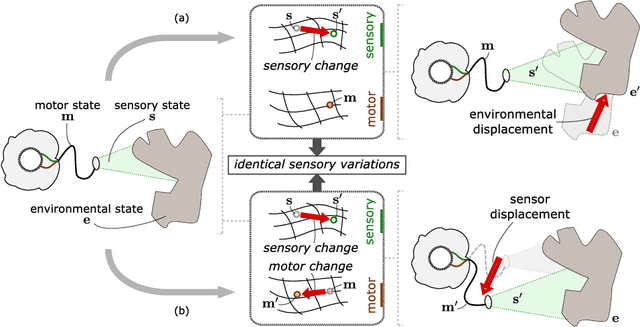

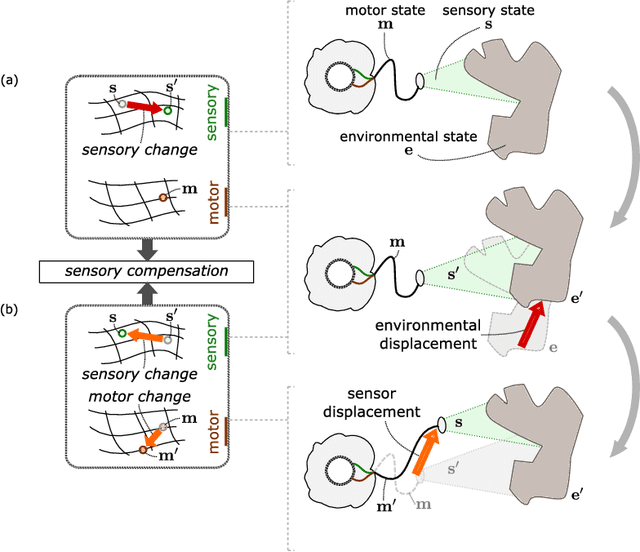

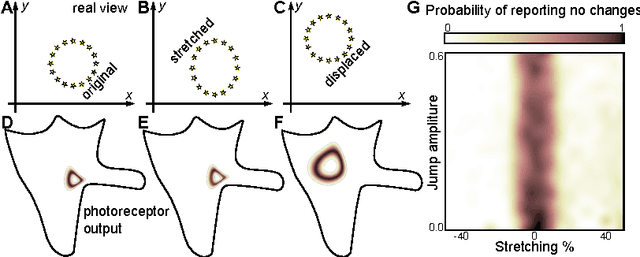

Abstract: In line with the sensorimotor contingency theory, we investigate the problem of the perception of space from a fundamental sensorimotor perspective. Despite its pervasive nature in our perception of the world, the origin of the concept of space remains largely mysterious. For example, in the context of artificial perception, this issue is usually circumvented by having engineers pre-define the spatial structure of the problem the agent has to face. Here we show that the structure of space can be autonomously discovered by a naive agent in the form of sensorimotor regularities that correspond to so-called compensable sensory experiences: these are experiences that can be generated either by the agent or its environment. By detecting such compensable experiences the agent can infer the topological and metric structure of the external space in which its body is moving. We propose a theoretical description of the nature of these regularities and illustrate the approach on a simulated robotic arm that is equipped with an eye-like sensor and interacts with an object. Finally, we show how these regularities can be used to build an internal representation of the sensor's external spatial configuration.

Block Neural Network Avoids Catastrophic Forgetting When Learning Multiple Task

Nov 28, 2017

Abstract: In the present work we propose a deep feed-forward network architecture which can be trained according to a sequential learning paradigm, where tasks of increasing difficulty are learned sequentially, yet avoiding catastrophic forgetting. The proposed architecture can re-use the features learned on previous tasks in a new task when the old tasks and the new one are related. The architecture needs fewer computational resources (neurons and connections) and less data for learning the new task than a network trained from scratch.

Hyper-dimensional computing for a visual question-answering system that is trainable end-to-end

Nov 28, 2017

Abstract: In this work we propose a system for visual question answering. Our architecture is composed of two parts: the first creates the logical knowledge base given the image, and the second evaluates questions against the knowledge base. Unlike previous work, the knowledge base is represented using hyper-dimensional computing. This choice has the advantage that all the operations in the system, namely creating the knowledge base and evaluating the questions against it, are differentiable, thereby making the system easily trainable in an end-to-end fashion.
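To make the idea of a hyper-dimensional knowledge base more concrete, here is a minimal sketch of one common hyper-dimensional scheme. It is an illustration under stated assumptions, not the system from the paper: the bipolar vectors, binding by element-wise multiplication, bundling by addition, the dimension `DIM`, and the role and filler names are all hypothetical choices. It uses plain Python lists, so it shows only how facts could be stored and queried, not the end-to-end training.

```python
import math
import random
from typing import Dict, List

DIM = 2000  # hypothetical dimensionality; larger values give cleaner retrieval


def random_hv(rng: random.Random, dim: int = DIM) -> List[int]:
    """Draw a random bipolar hypervector with entries in {-1, +1}."""
    return [rng.choice((-1, 1)) for _ in range(dim)]


def bind(a: List[int], b: List[int]) -> List[int]:
    """Bind two hypervectors by element-wise multiplication (self-inverse for bipolar vectors)."""
    return [x * y for x, y in zip(a, b)]


def bundle(vectors: List[List[int]]) -> List[int]:
    """Superimpose several hypervectors by element-wise addition."""
    return [sum(column) for column in zip(*vectors)]


def similarity(a: List[int], b: List[int]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def query(kb: List[int], role: List[int], fillers: Dict[str, List[int]]) -> str:
    """Unbind a role from the knowledge base and return the name of the closest filler."""
    probe = bind(kb, role)
    return max(fillers, key=lambda name: similarity(probe, fillers[name]))


# Assumed toy scene: one object described by three role-filler pairs.
rng = random.Random(0)
roles = {name: random_hv(rng) for name in ("color", "shape", "position")}
fillers = {name: random_hv(rng) for name in ("red", "blue", "square", "circle", "left", "right")}
kb = bundle([
    bind(roles["color"], fillers["red"]),
    bind(roles["shape"], fillers["square"]),
    bind(roles["position"], fillers["left"]),
])
print(query(kb, roles["color"], fillers))
```

Because every step here is a multiplication, an addition, or a dot product, the same operations could in principle be expressed in an automatic-differentiation framework, which is consistent with the differentiability that the abstract emphasizes.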

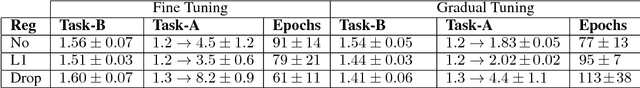

Gradual Tuning: a better way of Fine Tuning the parameters of a Deep Neural Network

Nov 28, 2017

Abstract: In this paper we present an alternative strategy for fine-tuning the parameters of a network. We named the technique Gradual Tuning. Once trained on a first task, the network is fine-tuned on a second task by modifying a progressively larger set of the network's parameters. We test Gradual Tuning on different transfer learning tasks, using networks of different sizes trained with different regularization techniques. The results show that, compared to standard fine-tuning, our approach significantly reduces catastrophic forgetting of the initial task, while still retaining comparable if not better performance on the new task.
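The phrase "a progressively larger set of the network's parameters" can be illustrated with a small scheduling sketch. The abstract does not say in which order parameters are released for training, so the version below assumes, purely for illustration, that layers are unfrozen from the output toward the input in equal steps; the function name `gradual_tuning_schedule` and the layer names are hypothetical.

```python
from typing import List


def gradual_tuning_schedule(layers: List[str], num_stages: int) -> List[List[str]]:
    """Return, for each fine-tuning stage, the layers that are allowed to change.

    Assumption (not stated in the abstract): layers are listed from input to
    output, and the trainable set grows from the output layer backwards.
    """
    if num_stages < 1:
        raise ValueError("num_stages must be at least 1")
    total = len(layers)
    schedule = []
    for stage in range(1, num_stages + 1):
        # Ceiling division: number of layers trainable at this stage.
        count = -(-total * stage // num_stages)
        schedule.append(layers[total - count:])
    return schedule


# Hypothetical four-layer network tuned in four stages.
for stage, trainable in enumerate(gradual_tuning_schedule(["conv1", "conv2", "fc1", "out"], 4), start=1):
    print(stage, trainable)
```

In a real training loop, each stage would fine-tune on the second task with only the listed layers left trainable and all others frozen, so that the earliest features learned on the first task are the last to be modified.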

Space as an invention of biological organisms

Aug 09, 2013

Abstract: The question of the nature of space around us has occupied thinkers since the dawn of humanity, with scientists and philosophers today implicitly assuming that space is something that exists objectively. Here we show that this does not have to be the case: the notion of space could emerge when biological organisms seek an economic representation of their sensorimotor flow. The emergence of spatial notions does not necessitate the existence of real physical space, but only requires the presence of sensorimotor invariants called 'compensable' sensory changes. We show mathematically and then in simulations that naïve agents making no assumptions about the existence of space are able to learn these invariants and to build the abstract notion that physicists call rigid displacement, which is independent of what is being displaced. Rigid displacements may underlie perception of space as an unchanging medium within which objects are described by their relative positions. Our findings suggest that the question of the nature of space, currently exclusive to philosophy and physics, should also be addressed from the standpoint of neuroscience and artificial intelligence.
