Huantao Ren

3D-PointZshotS: Geometry-Aware 3D Point Cloud Zero-Shot Semantic Segmentation Narrowing the Visual-Semantic Gap

Apr 16, 2025Abstract:Existing zero-shot 3D point cloud segmentation methods often struggle with limited transferability from seen classes to unseen classes and from semantic to visual space. To alleviate this, we introduce 3D-PointZshotS, a geometry-aware zero-shot segmentation framework that enhances both feature generation and alignment using latent geometric prototypes (LGPs). Specifically, we integrate LGPs into a generator via a cross-attention mechanism, enriching semantic features with fine-grained geometric details. To further enhance stability and generalization, we introduce a self-consistency loss, which enforces feature robustness against point-wise perturbations. Additionally, we re-represent visual and semantic features in a shared space, bridging the semantic-visual gap and facilitating knowledge transfer to unseen classes. Experiments on three real-world datasets, namely ScanNet, SemanticKITTI, and S3DIS, demonstrate that our method achieves superior performance over four baselines in terms of harmonic mIoU. The code is available at \href{https://github.com/LexieYang/3D-PointZshotS}{Github}.

DG-MVP: 3D Domain Generalization via Multiple Views of Point Clouds for Classification

Apr 16, 2025

Abstract:Deep neural networks have achieved significant success in 3D point cloud classification while relying on large-scale, annotated point cloud datasets, which are labor-intensive to build. Compared to capturing data with LiDAR sensors and then performing annotation, it is relatively easier to sample point clouds from CAD models. Yet, data sampled from CAD models is regular, and does not suffer from occlusion and missing points, which are very common for LiDAR data, creating a large domain shift. Therefore, it is critical to develop methods that can generalize well across different point cloud domains. %In this paper, we focus on the 3D point cloud domain generalization problem. Existing 3D domain generalization methods employ point-based backbones to extract point cloud features. Yet, by analyzing point utilization of point-based methods and observing the geometry of point clouds from different domains, we have found that a large number of point features are discarded by point-based methods through the max-pooling operation. This is a significant waste especially considering the fact that domain generalization is more challenging than supervised learning, and point clouds are already affected by missing points and occlusion to begin with. To address these issues, we propose a novel method for 3D point cloud domain generalization, which can generalize to unseen domains of point clouds. Our proposed method employs multiple 2D projections of a 3D point cloud to alleviate the issue of missing points and involves a simple yet effective convolution-based model to extract features. The experiments, performed on the PointDA-10 and Sim-to-Real benchmarks, demonstrate the effectiveness of our proposed method, which outperforms different baselines, and can transfer well from synthetic domain to real-world domain.

LVP-CLIP:Revisiting CLIP for Continual Learning with Label Vector Pool

Dec 08, 2024

Abstract:Continual learning aims to update a model so that it can sequentially learn new tasks without forgetting previously acquired knowledge. Recent continual learning approaches often leverage the vision-language model CLIP for its high-dimensional feature space and cross-modality feature matching. Traditional CLIP-based classification methods identify the most similar text label for a test image by comparing their embeddings. However, these methods are sensitive to the quality of text phrases and less effective for classes lacking meaningful text labels. In this work, we rethink CLIP-based continual learning and introduce the concept of Label Vector Pool (LVP). LVP replaces text labels with training images as similarity references, eliminating the need for ideal text descriptions. We present three variations of LVP and evaluate their performance on class and domain incremental learning tasks. Leveraging CLIP's high dimensional feature space, LVP learning algorithms are task-order invariant. The new knowledge does not modify the old knowledge, hence, there is minimum forgetting. Different tasks can be learned independently and in parallel with low computational and memory demands. Experimental results show that proposed LVP-based methods outperform the current state-of-the-art baseline by a significant margin of 40.7%.

GaitPoint+: A Gait Recognition Network Incorporating Point Cloud Analysis and Recycling

Apr 16, 2024Abstract:Gait is a behavioral biometric modality that can be used to recognize individuals by the way they walk from a far distance. Most existing gait recognition approaches rely on either silhouettes or skeletons, while their joint use is underexplored. Features from silhouettes and skeletons can provide complementary information for more robust recognition against appearance changes or pose estimation errors. To exploit the benefits of both silhouette and skeleton features, we propose a new gait recognition network, referred to as the GaitPoint+. Our approach models skeleton key points as a 3D point cloud, and employs a computational complexity-conscious 3D point processing approach to extract skeleton features, which are then combined with silhouette features for improved accuracy. Since silhouette- or CNN-based methods already require considerable amount of computational resources, it is preferable that the key point learning module is faster and more lightweight. We present a detailed analysis of the utilization of every human key point after the use of traditional max-pooling, and show that while elbow and ankle points are used most commonly, many useful points are discarded by max-pooling. Thus, we present a method to recycle some of the discarded points by a Recycling Max-Pooling module, during processing of skeleton point clouds, and achieve further performance improvement. We provide a comprehensive set of experimental results showing that (i) incorporating skeleton features obtained by a point-based 3D point cloud processing approach boosts the performance of three different state-of-the-art silhouette- and CNN-based baselines; (ii) recycling the discarded points increases the accuracy further. Ablation studies are also provided to show the effectiveness and contribution of different components of our approach.

Only My Model On My Data: A Privacy Preserving Approach Protecting one Model and Deceiving Unauthorized Black-Box Models

Feb 14, 2024

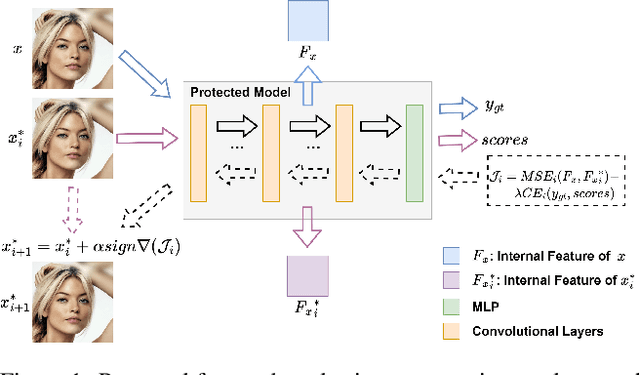

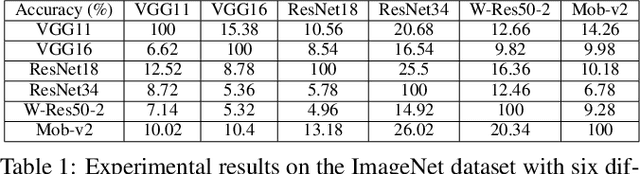

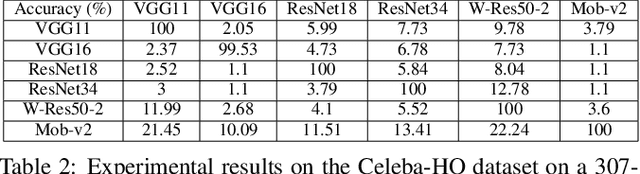

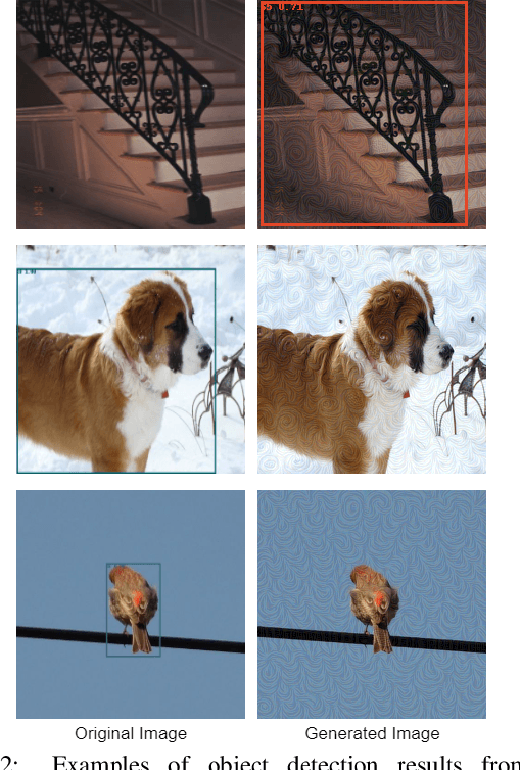

Abstract:Deep neural networks are extensively applied to real-world tasks, such as face recognition and medical image classification, where privacy and data protection are critical. Image data, if not protected, can be exploited to infer personal or contextual information. Existing privacy preservation methods, like encryption, generate perturbed images that are unrecognizable to even humans. Adversarial attack approaches prohibit automated inference even for authorized stakeholders, limiting practical incentives for commercial and widespread adaptation. This pioneering study tackles an unexplored practical privacy preservation use case by generating human-perceivable images that maintain accurate inference by an authorized model while evading other unauthorized black-box models of similar or dissimilar objectives, and addresses the previous research gaps. The datasets employed are ImageNet, for image classification, Celeba-HQ dataset, for identity classification, and AffectNet, for emotion classification. Our results show that the generated images can successfully maintain the accuracy of a protected model and degrade the average accuracy of the unauthorized black-box models to 11.97%, 6.63%, and 55.51% on ImageNet, Celeba-HQ, and AffectNet datasets, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge