Hoi-Jun Yoo

FlashMoE: Reducing SSD I/O Bottlenecks via ML-Based Cache Replacement for Mixture-of-Experts Inference on Edge Devices

Jan 22, 2026Abstract:Recently, Mixture-of-Experts (MoE) models have gained attention for efficiently scaling large language models. Although these models are extremely large, their sparse activation enables inference to be performed by accessing only a fraction of the model at a time. This property opens the possibility of on-device inference of MoE, which was previously considered infeasible for such large models. Consequently, various systems have been proposed to leverage this sparsity and enable efficient MoE inference for edge devices. However, previous MoE inference systems like Fiddler[8] or DAOP[13] rely on DRAM-based offloading and are not suitable for memory constrained on-device environments. As recent MoE models grow to hundreds of gigabytes, RAM-offloading solutions become impractical. To address this, we propose FlashMoE, a system that offloads inactive experts to SSD, enabling efficient MoE inference under limited RAM. FlashMoE incorporates a lightweight ML-based caching strategy that adaptively combines recency and frequency signals to maximize expert reuse, significantly reducing storage I/O. In addition, we built a user-grade desktop platform to demonstrate the practicality of FlashMoE. On this real hardware setup, FlashMoE improves cache hit rate by up to 51% over well-known offloading policies such as LRU and LFU, and achieves up to 2.6x speedup compared to existing MoE inference systems.

SeVeDo: A Heterogeneous Transformer Accelerator for Low-Bit Inference via Hierarchical Group Quantization and SVD-Guided Mixed Precision

Dec 15, 2025Abstract:Low-bit quantization is a promising technique for efficient transformer inference by reducing computational and memory overhead. However, aggressive bitwidth reduction remains challenging due to activation outliers, leading to accuracy degradation. Existing methods, such as outlier-handling and group quantization, achieve high accuracy but incur substantial energy consumption. To address this, we propose SeVeDo, an energy-efficient SVD-based heterogeneous accelerator that structurally separates outlier-sensitive components into a high-precision low-rank path, while the remaining computations are executed in a low-bit residual datapath with group quantization. To further enhance efficiency, Hierarchical Group Quantization (HGQ) combines coarse-grained floating-point scaling with fine-grained shifting, effectively reducing dequantization cost. Also, SVD-guided mixed precision (SVD-MP) statically allocates higher bitwidths to precision-sensitive components identified through low-rank decomposition, thereby minimizing floating-point operation cost. Experimental results show that SeVeDo achieves a peak energy efficiency of 13.8TOPS/W, surpassing conventional designs, with 12.7TOPS/W on ViT-Base and 13.4TOPS/W on Llama2-7B benchmarks.

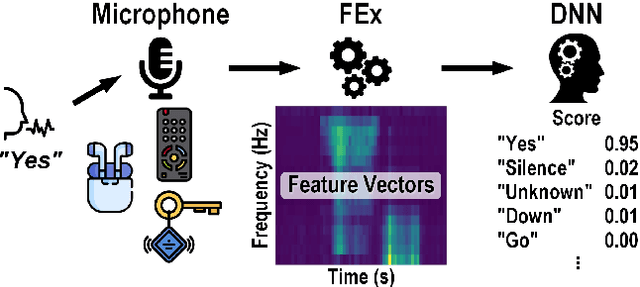

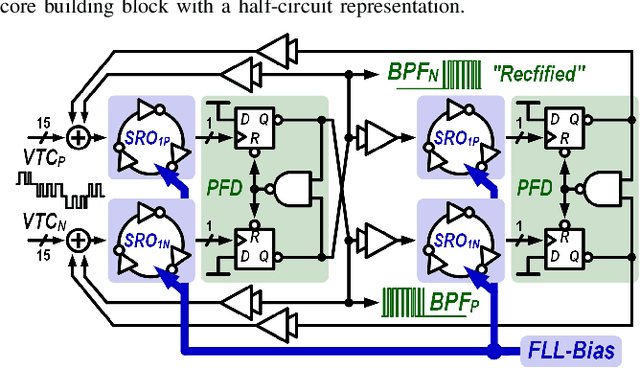

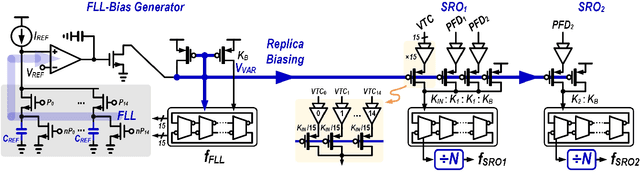

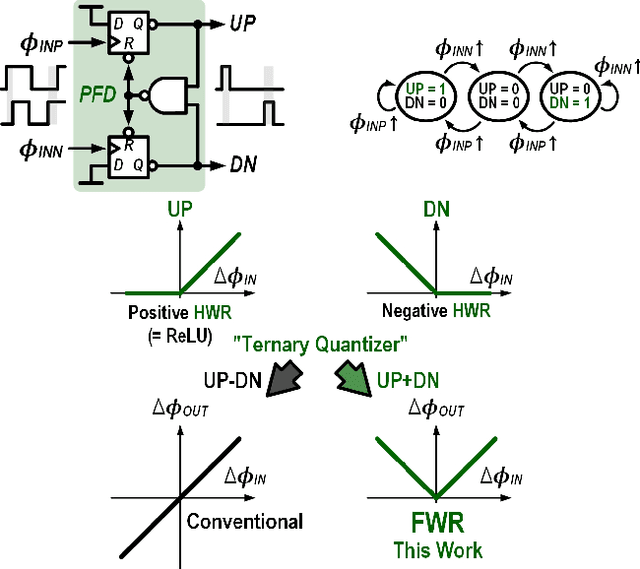

A 23 $μ$W Keyword Spotting IC with Ring-Oscillator-Based Time-Domain Feature Extraction

Aug 01, 2022

Abstract:This article presents the first keyword spotting (KWS) IC which uses a ring-oscillator-based time-domain processing technique for its analog feature extractor (FEx). Its extensive usage of time-encoding schemes allows the analog audio signal to be processed in a fully time-domain manner except for the voltage-to-time conversion stage of the analog front-end. Benefiting from fundamental building blocks based on digital logic gates, it offers a better technology scalability compared to conventional voltage-domain designs. Fabricated in a 65 nm CMOS process, the prototyped KWS IC occupies 2.03mm$^{2}$ and dissipates 23 $\mu$W power consumption including analog FEx and digital neural network classifier. The 16-channel time-domain FEx achieves 54.89 dB dynamic range for 16 ms frame shift size while consuming 9.3 $\mu$W. The measurement result verifies that the proposed IC performs a 12-class KWS task on the Google Speech Command Dataset (GSCD) with >86% accuracy and 12.4 ms latency.

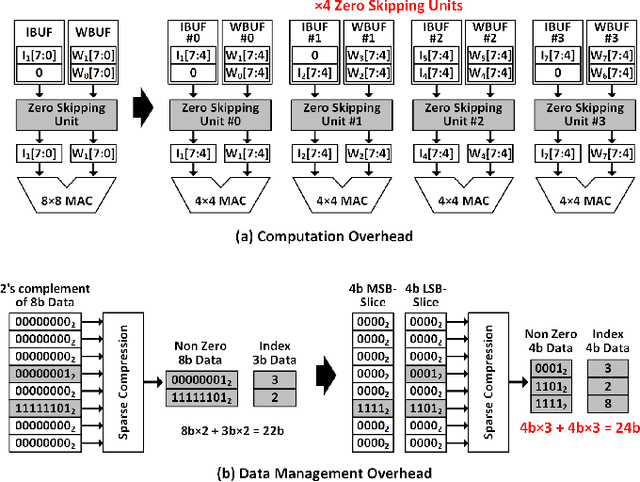

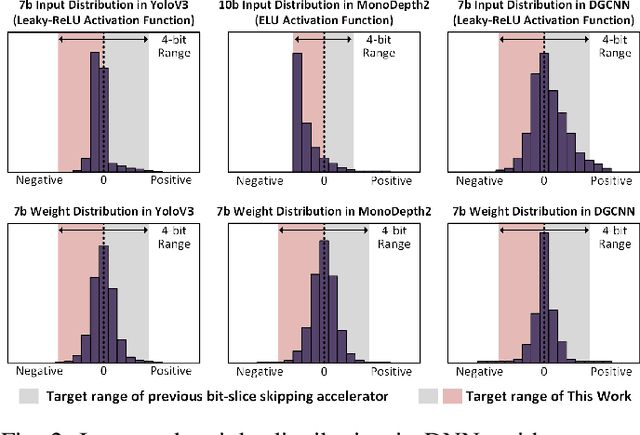

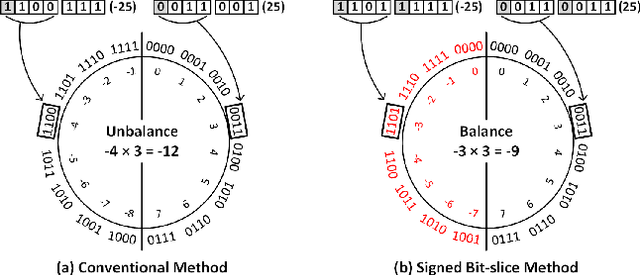

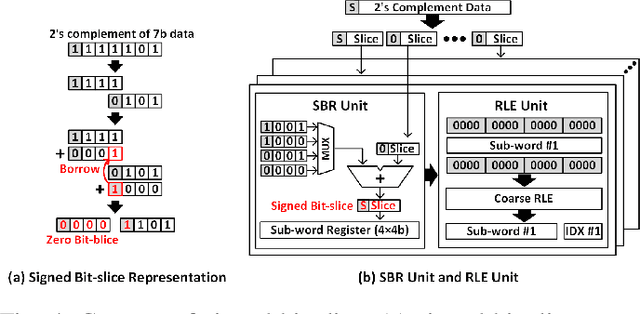

Energy-efficient Dense DNN Acceleration with Signed Bit-slice Architecture

Mar 15, 2022

Abstract:As the number of deep neural networks (DNNs) to be executed on a mobile system-on-chip (SoC) increases, the mobile SoC suffers from the real-time DNN acceleration within its limited hardware resources and power budget. Although the previous mobile neural processing units (NPUs) take advantage of low-bit computing and exploitation of the sparsity, it is incapable of accelerating high-precision and dense DNNs. This paper proposes energy-efficient signed bit-slice architecture which accelerates both high-precision and dense DNNs by exploiting a large number of zero values of signed bit-slices. Proposed signed bit-slice representation (SBR) changes signed $1111_{2}$ bit-slice to $0000_{2}$ by borrowing a $1$ value from its lower order of bit-slice. As a result, it generates a large number of zero bit-slices even in dense DNNs. Moreover, it balances the positive and negative values of 2's complement data, allowing bit-slice based output speculation which pre-computes high order of bit-slices and skips the remaining dense low order of bit-slices. The signed bit-slice architecture compresses and skips the zero input signed bit-slices, and the zero skipping unit also supports the output skipping by masking the speculated inputs as zero. Additionally, the heterogeneous network-on-chip (NoC) benefits the exploitation of data reusability and reduction of transmission bandwidth. The paper introduces a specialized instruction set architecture (ISA) and a hierarchical instruction decoder for the control of the signed bit-slice architecture. Finally, the signed bit-slice architecture outperforms the previous bit-slice accelerator, Bit-fusion, over $\times3.65$ higher area-efficiency, $\times3.88$ higher energy-efficiency, and $\times5.35$ higher throughput.

GST: Group-Sparse Training for Accelerating Deep Reinforcement Learning

Jan 24, 2021

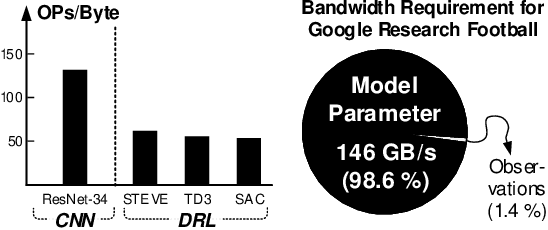

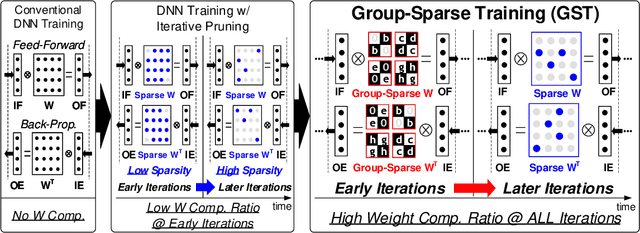

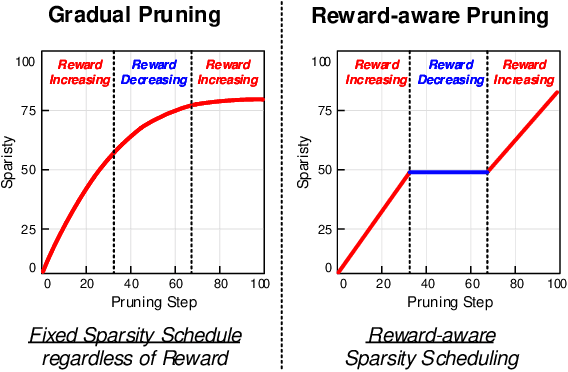

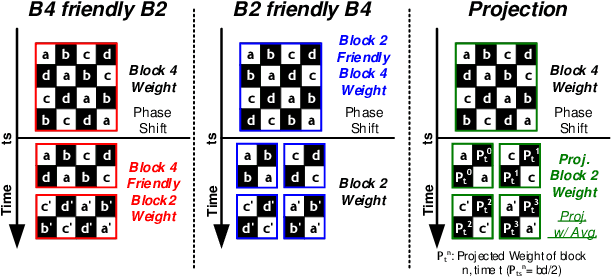

Abstract:Deep reinforcement learning (DRL) has shown remarkable success in sequential decision-making problems but suffers from a long training time to obtain such good performance. Many parallel and distributed DRL training approaches have been proposed to solve this problem, but it is difficult to utilize them on resource-limited devices. In order to accelerate DRL in real-world edge devices, memory bandwidth bottlenecks due to large weight transactions have to be resolved. However, previous iterative pruning not only shows a low compression ratio at the beginning of training but also makes DRL training unstable. To overcome these shortcomings, we propose a novel weight compression method for DRL training acceleration, named group-sparse training (GST). GST selectively utilizes block-circulant compression to maintain a high weight compression ratio during all iterations of DRL training and dynamically adapt target sparsity through reward-aware pruning for stable training. Thanks to the features, GST achieves a 25 \%p $\sim$ 41.5 \%p higher average compression ratio than the iterative pruning method without reward drop in Mujoco Halfcheetah-v2 and Mujoco humanoid-v2 environment with TD3 training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge