GST: Group-Sparse Training for Accelerating Deep Reinforcement Learning

Paper and Code

Jan 24, 2021

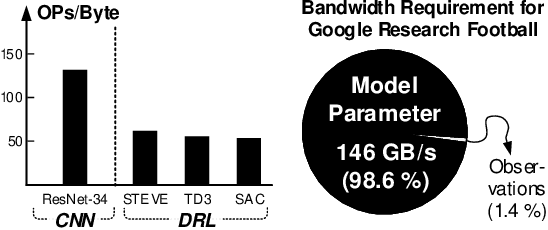

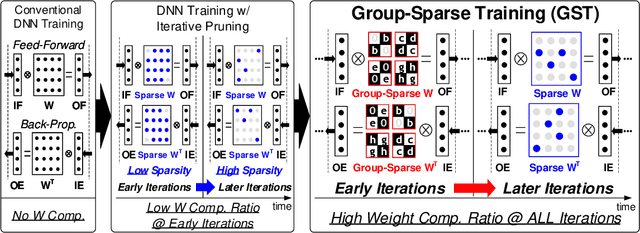

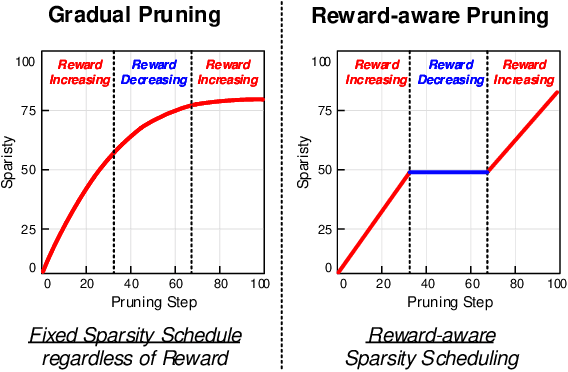

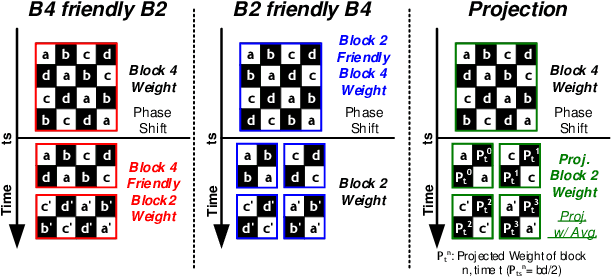

Deep reinforcement learning (DRL) has shown remarkable success in sequential decision-making problems but suffers from a long training time to obtain such good performance. Many parallel and distributed DRL training approaches have been proposed to solve this problem, but it is difficult to utilize them on resource-limited devices. In order to accelerate DRL in real-world edge devices, memory bandwidth bottlenecks due to large weight transactions have to be resolved. However, previous iterative pruning not only shows a low compression ratio at the beginning of training but also makes DRL training unstable. To overcome these shortcomings, we propose a novel weight compression method for DRL training acceleration, named group-sparse training (GST). GST selectively utilizes block-circulant compression to maintain a high weight compression ratio during all iterations of DRL training and dynamically adapt target sparsity through reward-aware pruning for stable training. Thanks to the features, GST achieves a 25 \%p $\sim$ 41.5 \%p higher average compression ratio than the iterative pruning method without reward drop in Mujoco Halfcheetah-v2 and Mujoco humanoid-v2 environment with TD3 training.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge