Hamdi A. Tchelepi

Learning CO$_2$ plume migration in faulted reservoirs with Graph Neural Networks

Jun 16, 2023

Abstract: Deep-learning-based surrogate models provide an efficient complement to numerical simulations for subsurface flow problems such as CO$_2$ geological storage. Accurately capturing the impact of faults on CO$_2$ plume migration remains a challenge for many existing deep learning surrogate models based on Convolutional Neural Networks (CNNs) or Neural Operators. We address this challenge with a graph-based neural model leveraging recent developments in the field of Graph Neural Networks (GNNs). Our model combines graph-based convolutional Long Short-Term Memory (GConvLSTM) with a one-step GNN model, MeshGraphNet (MGN), to operate on complex unstructured meshes and limit temporal error accumulation. We demonstrate that our approach can accurately predict the temporal evolution of gas saturation and pore pressure in a synthetic reservoir with impermeable faults. Our results exhibit better accuracy and reduced temporal error accumulation compared to the standard MGN model. We also show that our algorithm generalizes well to mesh configurations, boundary conditions, and heterogeneous permeability fields not included in the training set. This work highlights the potential of GNN-based methods to accurately and rapidly model subsurface flow with complex faults and fractures.

MeshfreeFlowNet: A Physics-Constrained Deep Continuous Space-Time Super-Resolution Framework

May 01, 2020

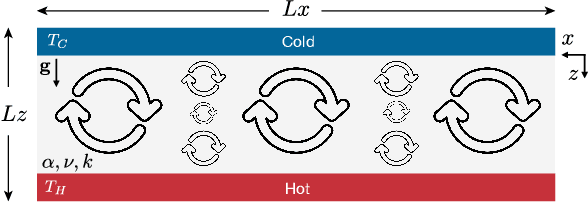

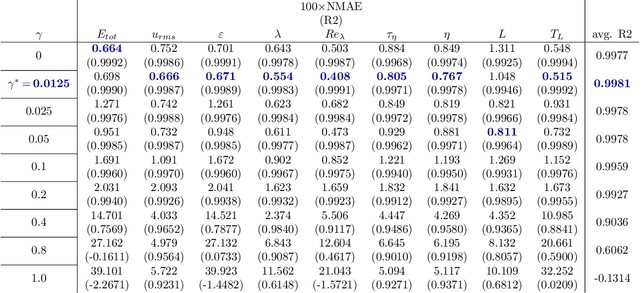

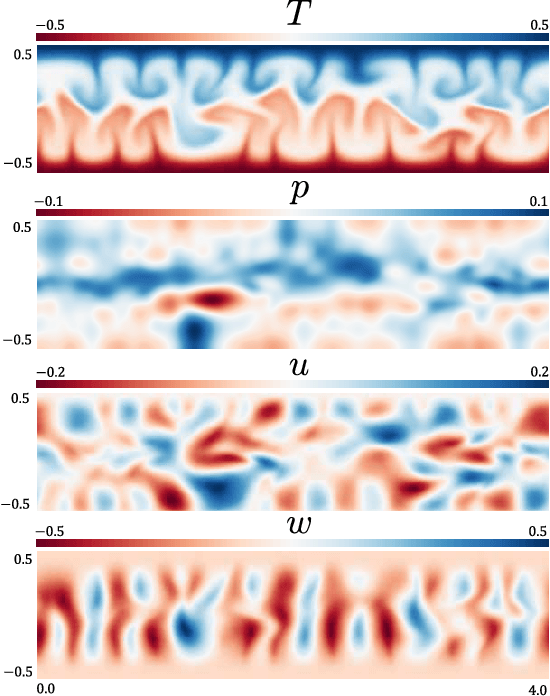

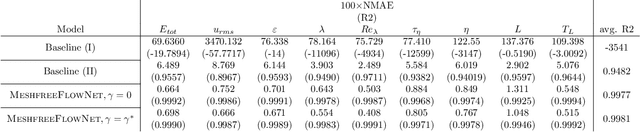

Abstract: We propose MeshfreeFlowNet, a novel deep learning-based super-resolution framework to generate continuous (grid-free) spatio-temporal solutions from low-resolution inputs. While being computationally efficient, MeshfreeFlowNet accurately recovers the fine-scale quantities of interest. MeshfreeFlowNet allows for: (i) the output to be sampled at all spatio-temporal resolutions, (ii) a set of Partial Differential Equation (PDE) constraints to be imposed, and (iii) training on fixed-size inputs on arbitrarily sized spatio-temporal domains owing to its fully convolutional encoder. We empirically study the performance of MeshfreeFlowNet on the task of super-resolution of turbulent flows in the Rayleigh-Bénard convection problem. Across a diverse set of evaluation metrics, we show that MeshfreeFlowNet significantly outperforms existing baselines. Furthermore, we provide a large-scale implementation of MeshfreeFlowNet and show that it efficiently scales across large clusters, achieving 96.80% scaling efficiency on up to 128 GPUs and a training time of less than 4 minutes.
