Guénolé Silvestre

Understanding Task Aggregation for Generalizable Ultrasound Foundation Models

Mar 18, 2026

Abstract:Foundation models promise to unify multiple clinical tasks within a single framework, but recent ultrasound studies report that unified models can underperform task-specific baselines. We hypothesize that this degradation arises not from model capacity limitations, but from task aggregation strategies that ignore interactions between task heterogeneity and available training data scale. In this work, we systematically analyze when heterogeneous ultrasound tasks can be jointly learned without performance loss, establishing practical criteria for task aggregation in unified clinical imaging models. We introduce M2DINO, a multi-organ, multi-task framework built on DINOv3 with task-conditioned Mixture-of-Experts blocks for adaptive capacity allocation. We systematically evaluate 27 ultrasound tasks spanning segmentation, classification, detection, and regression under three paradigms: task-specific, clinically-grouped, and all-task unified training. Our results show that aggregation effectiveness depends strongly on training data scale. While clinically-grouped training can improve performance in data-rich settings, it may induce substantial negative transfer in low-data settings. In contrast, all-task unified training exhibits more consistent performance across clinical groups. We further observe that task sensitivity varies by task type in our experiments: segmentation shows the largest performance drops compared with regression and classification. These findings provide practical guidance for ultrasound foundation models, emphasizing that aggregation strategies should jointly consider training data availability and task characteristics rather than relying on clinical taxonomy alone.

Dual Agreement Consistency Learning with Foundation Models for Semi-Supervised Fetal Heart Ultrasound Segmentation and Diagnosis

Mar 18, 2026

Abstract:Congenital heart disease (CHD) screening from fetal echocardiography requires accurate analysis of multiple standard cardiac views, yet developing reliable artificial intelligence models remains challenging due to limited annotations and variable image quality. In this work, we propose FM-DACL, a semi-supervised Dual Agreement Consistency Learning framework for the FETUS 2026 challenge on fetal heart ultrasound segmentation and diagnosis. The method combines a pretrained ultrasound foundation model (EchoCare) with a convolutional network through heterogeneous co-training and an exponential moving average teacher to better exploit unlabeled data. Experiments on the multi-center challenge dataset show that FM-DACL achieves a Dice score of 59.66 and NSD of 42.82 using heterogeneous backbones, demonstrating the feasibility of the proposed semi-supervised framework. These results suggest that FM-DACL provides a flexible approach for leveraging heterogeneous models in low-annotation fetal cardiac ultrasound analysis. The code is available on https://github.com/13204942/FM-DACL.
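As a rough illustration of the exponential moving average (EMA) teacher mentioned in the abstract above, the sketch below shows the generic EMA weight update used in mean-teacher style training. It is a minimal sketch, not the FM-DACL implementation: the function name `ema_update`, the flat list representation of weights, and the `decay` values are all assumptions made for illustration.

```python
def ema_update(teacher, student, decay=0.99):
    """Generic EMA step: teacher <- decay * teacher + (1 - decay) * student.

    `teacher` and `student` are flat lists of floats standing in for model
    weights (a hypothetical simplification; real models hold tensors).
    """
    return [decay * t + (1.0 - decay) * s for t, s in zip(teacher, student)]


# Toy example: the teacher drifts toward the (fixed) student weights.
teacher = [0.0, 1.0]
student = [1.0, 1.0]
for _ in range(3):
    teacher = ema_update(teacher, student, decay=0.5)
print(teacher)  # [0.875, 1.0]
```

In practice the decay is usually close to 1, so the teacher changes slowly and provides smoother targets on unlabeled images than the student itself.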

Entropy-Guided Agreement-Diversity: A Semi-Supervised Active Learning Framework for Fetal Head Segmentation in Ultrasound

Jan 24, 2026

Abstract:Fetal ultrasound (US) data is often limited due to privacy and regulatory restrictions, posing challenges for training deep learning (DL) models. While semi-supervised learning (SSL) is commonly used for fetal US image analysis, existing SSL methods typically rely on random limited selection, which can lead to suboptimal model performance by overfitting to homogeneous labeled data. To address this, we propose a two-stage Active Learning (AL) sampler, Entropy-Guided Agreement-Diversity (EGAD), for fetal head segmentation. Our method first selects the most uncertain samples using predictive entropy, and then refines the final selection using the agreement-diversity score combining cosine similarity and mutual information. Additionally, our SSL framework employs a consistency learning strategy with feature downsampling to further enhance segmentation performance. In experiments, SSL-EGAD achieves an average Dice score of 94.57% and 96.32% on two public datasets for fetal head segmentation, using 5% and 10% labeled data for training, respectively. Our method outperforms current SSL models and showcases consistent robustness across diverse pregnancy stage data. The code is available on GitHub: https://github.com/13204942/Semi-supervised-EGAD.
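The two-stage idea described in the abstract above (rank by predictive entropy, then refine the shortlist) can be sketched as follows. This is only an illustrative sketch under stated assumptions: the abstract does not give the agreement-diversity formula, so stage 2 here substitutes a simple greedy rule based on cosine similarity alone and omits mutual information. The names `select_samples`, `n_uncertain`, and `n_final`, and the per-sample probability and feature vectors, are hypothetical.

```python
import math


def predictive_entropy(probs):
    """Shannon entropy of one predictive distribution (natural log)."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(x * x for x in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def select_samples(probs, feats, n_uncertain, n_final):
    """Stage 1: shortlist the n_uncertain highest-entropy samples.
    Stage 2 (assumed stand-in, not the EGAD score): greedily add the
    shortlisted sample least similar to those already chosen."""
    ranked = sorted(range(len(probs)),
                    key=lambda i: predictive_entropy(probs[i]),
                    reverse=True)
    candidates = ranked[:n_uncertain]
    if not candidates:
        return []
    chosen = [candidates[0]]  # start from the most uncertain sample
    while len(chosen) < min(n_final, len(candidates)):
        remaining = [i for i in candidates if i not in chosen]
        best = min(remaining,
                   key=lambda i: max(cosine_similarity(feats[i], feats[j])
                                     for j in chosen))
        chosen.append(best)
    return chosen


# Toy data: one class distribution and one feature vector per image.
probs = [[0.5, 0.5], [0.9, 0.1], [0.6, 0.4], [0.55, 0.45]]
feats = [[1.0, 0.0], [1.0, 1.0], [0.0, 1.0], [1.0, 0.1]]
selected = select_samples(probs, feats, n_uncertain=3, n_final=2)
print(selected)  # [0, 2]
```

Sample 3 is nearly as uncertain as sample 0 but has an almost identical feature vector, so the diversity step prefers sample 2 for labeling.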

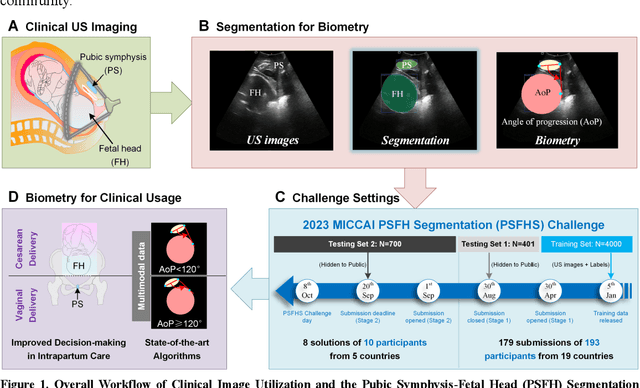

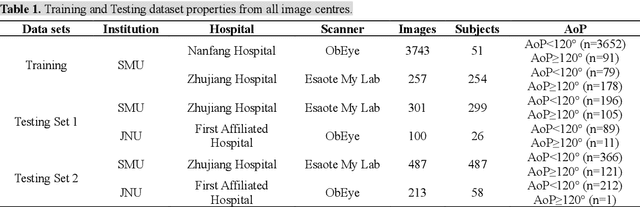

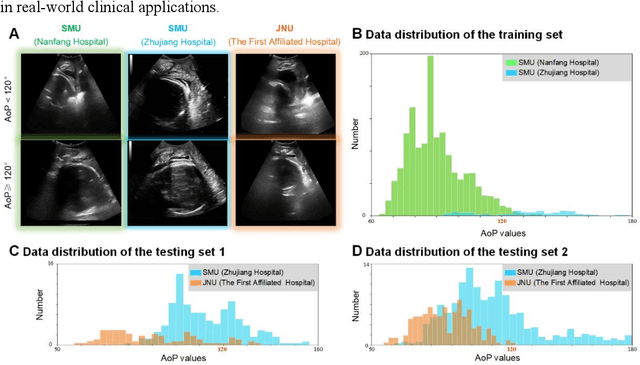

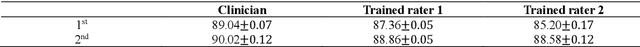

PSFHS Challenge Report: Pubic Symphysis and Fetal Head Segmentation from Intrapartum Ultrasound Images

Sep 17, 2024

Abstract:Segmentation of the fetal and maternal structures, particularly in intrapartum ultrasound imaging as advocated by the International Society of Ultrasound in Obstetrics and Gynecology (ISUOG) for monitoring labor progression, is a crucial first step for quantitative diagnosis and clinical decision-making. This requires specialized analysis by obstetrics professionals, in a task that i) is highly time- and cost-consuming and ii) often yields inconsistent results. The utility of automatic segmentation algorithms for biometry has been proven, though existing results remain suboptimal. To push forward advancements in this area, the Grand Challenge on Pubic Symphysis-Fetal Head Segmentation (PSFHS) was held alongside the 26th International Conference on Medical Image Computing and Computer Assisted Intervention (MICCAI 2023). This challenge aimed to enhance the development of automatic segmentation algorithms at an international scale, providing the largest dataset to date with 5,101 intrapartum ultrasound images collected from two ultrasound machines across three hospitals from two institutions. The scientific community's enthusiastic participation led to the selection of the top 8 out of 179 entries from 193 registrants in the initial phase to proceed to the competition's second stage. These algorithms have elevated the state-of-the-art in automatic PSFHS from intrapartum ultrasound images. A thorough analysis of the results pinpointed ongoing challenges in the field and outlined recommendations for future work. The top solutions and the complete dataset remain publicly available, fostering further advancements in automatic segmentation and biometry for intrapartum ultrasound imaging.

Generative Diffusion Model Bootstraps Zero-shot Classification of Fetal Ultrasound Images In Underrepresented African Populations

Jul 29, 2024

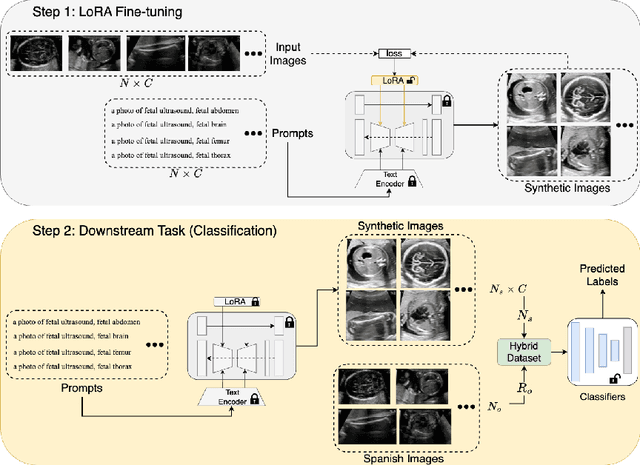

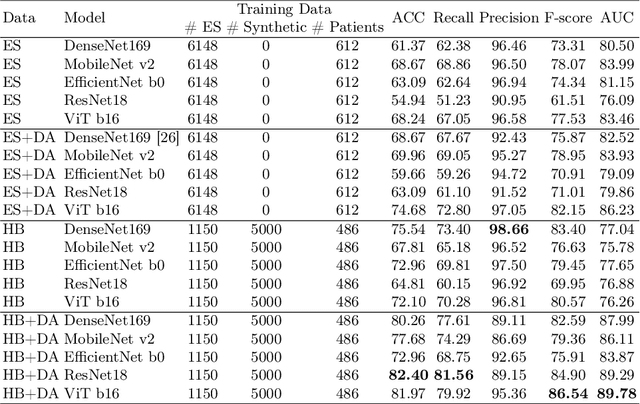

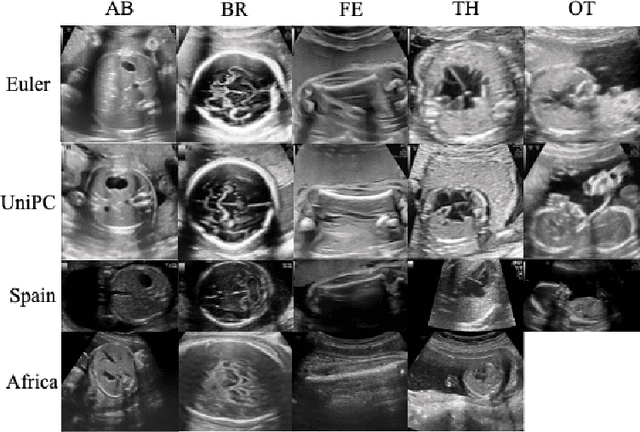

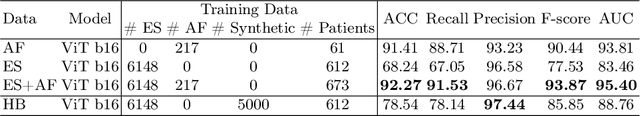

Abstract:Developing robust deep learning models for fetal ultrasound image analysis requires comprehensive, high-quality datasets to effectively learn informative data representations within the domain. However, the scarcity of labelled ultrasound images poses substantial challenges, especially in low-resource settings. To tackle this challenge, we leverage synthetic data to enhance the generalizability of deep learning models. This study proposes a diffusion-based method, Fetal Ultrasound LoRA (FU-LoRA), which involves fine-tuning latent diffusion models using the LoRA technique to generate synthetic fetal ultrasound images. These synthetic images are integrated into a hybrid dataset that combines real-world and synthetic images to improve the performance of zero-shot classifiers in low-resource settings. Our experimental results on fetal ultrasound images from African cohorts demonstrate that FU-LoRA outperforms the baseline method, increasing zero-shot classification accuracy by 13.73%. Furthermore, FU-LoRA achieves the highest accuracy of 82.40%, the highest F-score of 86.54%, and the highest AUC of 89.78%. These results demonstrate that the FU-LoRA method is effective in the zero-shot classification of fetal ultrasound images in low-resource settings. Our code and data are publicly accessible on https://github.com/13204942/FU-LoRA.
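For readers unfamiliar with the LoRA technique referenced in the abstract above, the sketch below shows the standard low-rank update, W' = W + (alpha / r) * B A, on a tiny matrix in plain Python. It illustrates the general technique only: the matrix sizes, `alpha`, and `rank` values are arbitrary assumptions, and nothing here reflects the actual FU-LoRA configuration or the diffusion model it fine-tunes.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def lora_weight(w, b, a, alpha, rank):
    """Effective weight after a LoRA update: W + (alpha / rank) * (B @ A).

    W is d x k and stays frozen; only B (d x rank) and A (rank x k)
    would be trained.
    """
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]


# Toy example with d = k = 2 and rank 1 (values chosen for illustration).
w = [[1.0, 0.0], [0.0, 1.0]]   # frozen pretrained weight
b = [[1.0], [2.0]]             # trainable, d x rank
a = [[0.5, 0.0]]               # trainable, rank x k
w_adapted = lora_weight(w, b, a, alpha=2.0, rank=1)
print(w_adapted)  # [[2.0, 0.0], [2.0, 1.0]]
```

The appeal of the approach is the parameter count: a full update of a d x k matrix trains d * k values, whereas the low-rank factors train only rank * (d + k).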

Segmenting Fetal Head with Efficient Fine-tuning Strategies in Low-resource Settings: an empirical study with U-Net

Jul 29, 2024

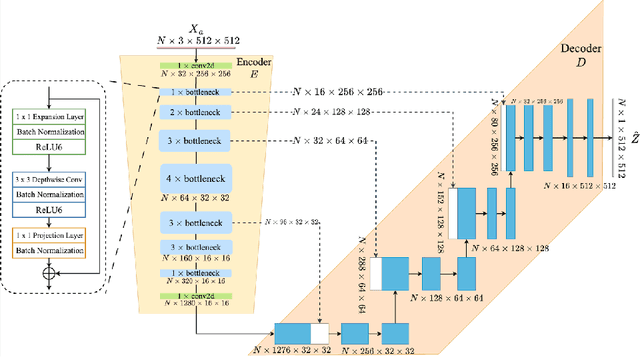

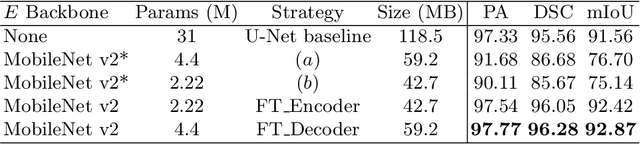

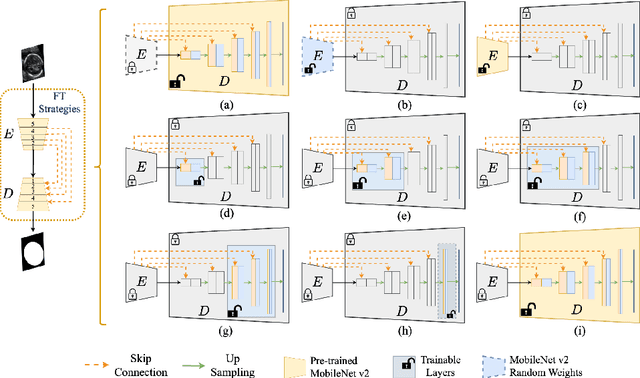

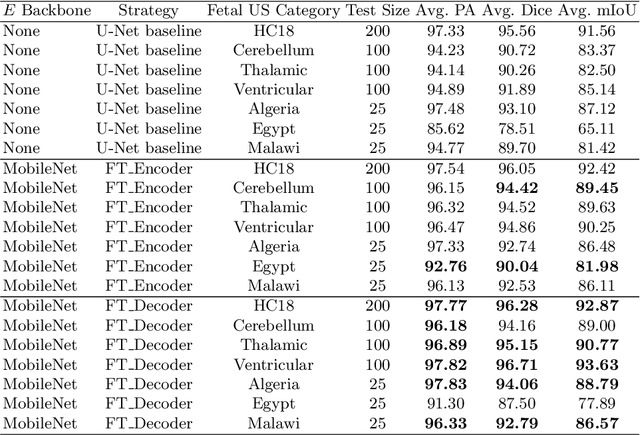

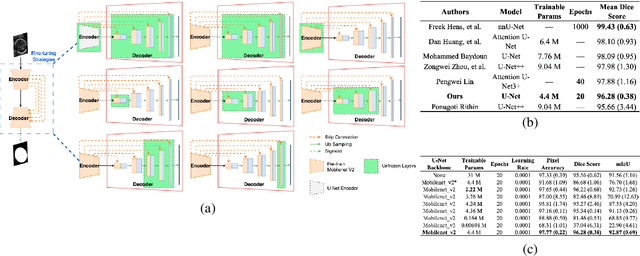

Abstract:Accurate measurement of fetal head circumference is crucial for estimating fetal growth during routine prenatal screening. Prior to measurement, it is necessary to accurately identify and segment the region of interest, specifically the fetal head, in ultrasound images. Recent advancements in deep learning techniques have shown significant progress in segmenting the fetal head using encoder-decoder models. Among these models, U-Net has become a standard approach for accurate segmentation. However, training an encoder-decoder model can be a time-consuming process that demands substantial computational resources. Moreover, fine-tuning these models is particularly challenging when there is a limited amount of data available. There are still no "best-practice" guidelines for optimal fine-tuning of U-Net for fetal ultrasound image segmentation. This work summarizes existing fine-tuning strategies across various backbone architectures and model components, using ultrasound data from the Netherlands, Spain, Malawi, Egypt and Algeria. Our study shows that (1) fine-tuning U-Net leads to better performance than training from scratch, (2) fine-tuning strategies applied to the decoder are superior to other strategies, and (3) network architectures with fewer parameters can achieve similar or better performance. We also demonstrate the effectiveness of fine-tuning strategies in low-resource settings and further expand our experiments into few-shot learning. Lastly, we publicly released our code and specific fine-tuned weights.

Evaluate Fine-tuning Strategies for Fetal Head Ultrasound Image Segmentation with U-Net

Jul 18, 2023

Abstract:Fetal head segmentation is a crucial step in measuring the fetal head circumference (HC) during gestation, an important biometric in obstetrics for monitoring fetal growth. However, manual biometry generation is time-consuming and results in inconsistent accuracy. To address this issue, convolutional neural network (CNN) models have been utilized to improve the efficiency of medical biometry. Because training a CNN from scratch is challenging, we propose a Transfer Learning (TL) method. Our approach involves fine-tuning (FT) a U-Net network with a lightweight MobileNet as the encoder to perform segmentation on a set of fetal head ultrasound (US) images with limited effort. This method addresses the challenges associated with training a CNN from scratch. Our results suggest that the proposed FT strategy yields comparable segmentation performance while reducing the number of trained parameters by 85.8%. The proposed FT strategy also outperforms other strategies with trainable parameter sizes below 4.4 million. Thus, we contend that it can serve as a dependable FT approach for reducing the size of models in medical image analysis. Our key findings highlight the importance of the balance between model performance and size in developing Artificial Intelligence (AI) applications by TL methods. Code is available at https://github.com/13204942/FT_Methods_for_Fetal_Head_Segmentation.
