Fu Xing Long

Landscape-Aware Automated Algorithm Configuration using Multi-output Mixed Regression and Classification

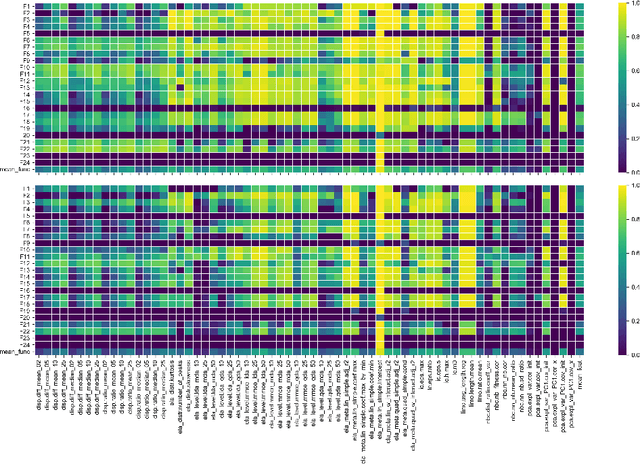

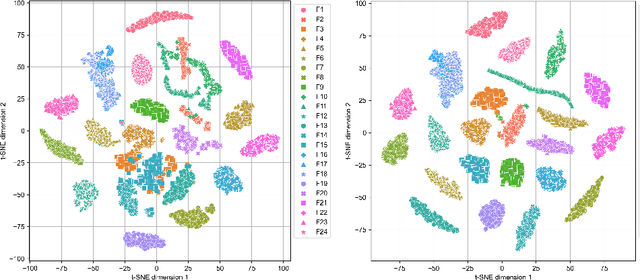

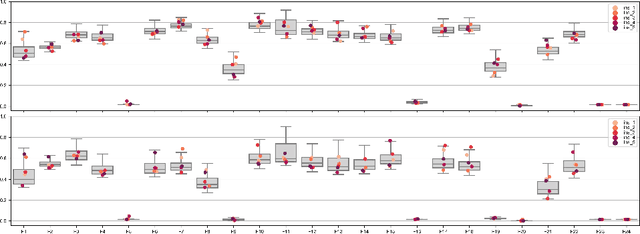

Sep 02, 2024

Abstract: In the landscape-aware algorithm selection problem, the effectiveness of feature-based predictive models strongly depends on how representative the training data are of practical applications. In this work, we investigate the potential of randomly generated functions (RGF) for model training, which cover a much more diverse set of optimization problem classes than the widely used black-box optimization benchmarking (BBOB) suite. Correspondingly, we focus on automated algorithm configuration (AAC), that is, selecting the best-suited algorithm and fine-tuning its hyperparameters based on the landscape features of problem instances. Specifically, we analyze the performance of dense neural network (NN) models in handling the multi-output mixed regression and classification tasks using different training data sets, such as RGF and many-affine BBOB (MA-BBOB) functions. Based on our results on the BBOB functions in 5d and 20d, near-optimal configurations can be identified using the proposed approach, which most of the time outperform the off-the-shelf default configuration considered by practitioners with limited knowledge about AAC. Furthermore, the predicted configurations are competitive against the single best solver in many cases. Overall, configurations with better performance are best identified by using NN models trained on a combination of RGF and MA-BBOB functions.

Challenges of ELA-guided Function Evolution using Genetic Programming

May 24, 2023

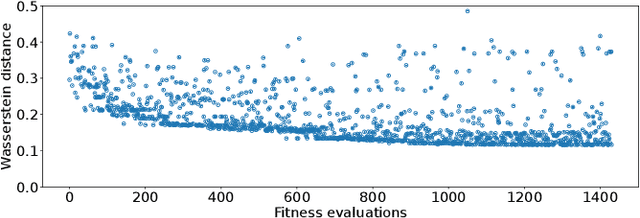

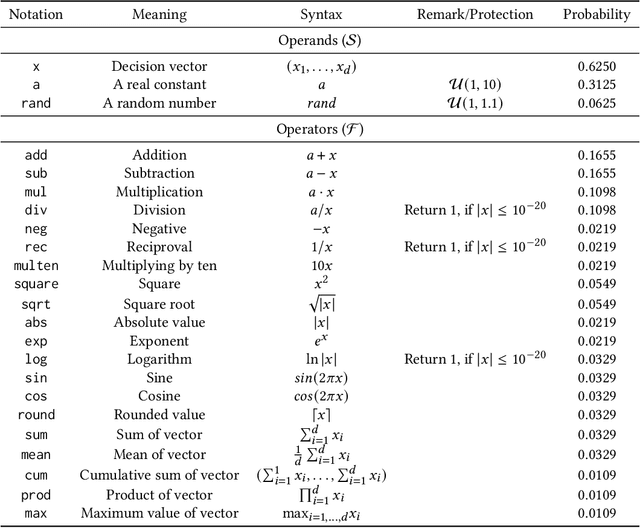

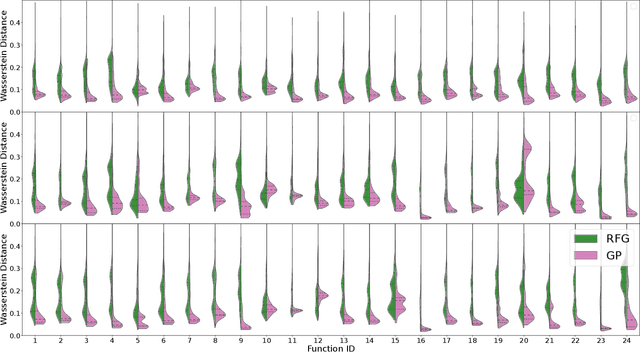

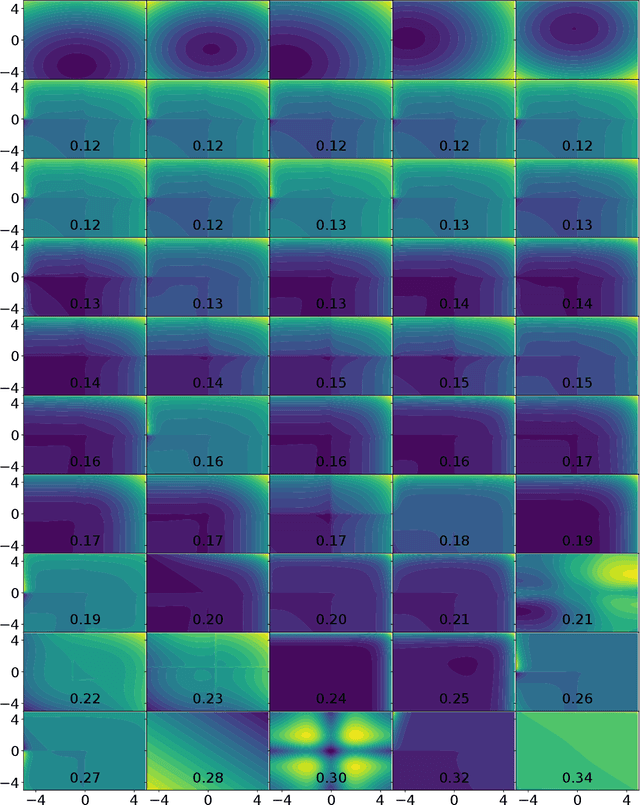

Abstract: Within the optimization community, the question of how to generate new optimization problems has been gaining traction in recent years. Within topics such as instance space analysis (ISA), the generation of new problems can provide new benchmarks which are not yet explored in existing research. Beyond that, this function generation can also be exploited for solving complex real-world optimization problems. By generating functions with similar properties to the target problem, we can create a robust test set for algorithm selection and configuration. However, the generation of functions with specific target properties remains challenging. While features exist to capture low-level landscape properties, they might not always capture the intended high-level features. We show that a genetic programming (GP) approach guided by these exploratory landscape analysis (ELA) properties is not always able to find satisfying functions. Our results suggest that careful consideration of the weighting of landscape properties, as well as the distance measure used, might be required to evolve functions that are sufficiently representative of the target landscape.

DoE2Vec: Deep-learning Based Features for Exploratory Landscape Analysis

Mar 31, 2023

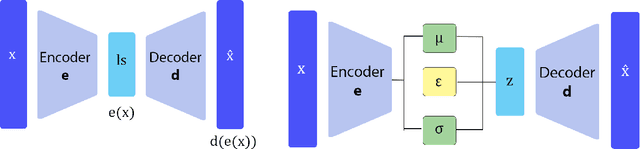

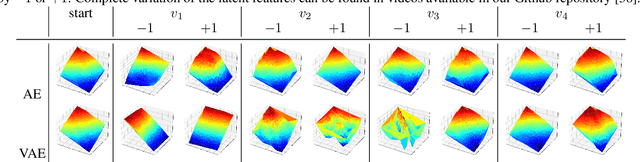

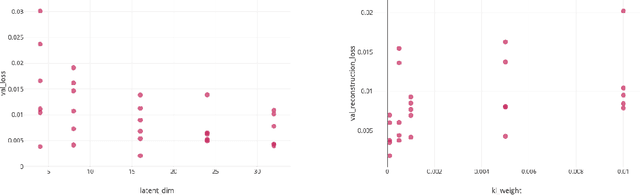

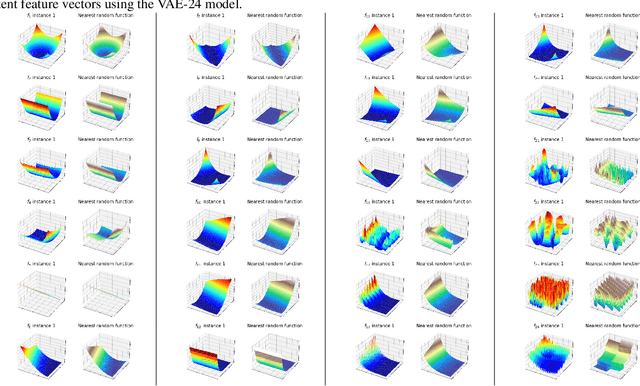

Abstract: We propose DoE2Vec, a variational autoencoder (VAE)-based methodology to learn optimization landscape characteristics for downstream meta-learning tasks, e.g., automated selection of optimization algorithms. Principally, using large training data sets generated with a random function generator, DoE2Vec self-learns an informative latent representation for any design of experiments (DoE). Unlike the classical exploratory landscape analysis (ELA) method, our approach does not require any feature engineering and is easily applicable to high-dimensional search spaces. For validation, we inspect the quality of latent reconstructions and analyze the latent representations using different experiments. The latent representations not only show promising potential in identifying similar (cheap-to-evaluate) surrogate functions, but can also significantly boost performance when used as a complement to the classical ELA features in classification tasks.

BBOB Instance Analysis: Landscape Properties and Algorithm Performance across Problem Instances

Nov 29, 2022

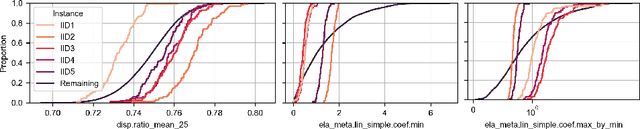

Abstract: Benchmarking is a key aspect of research into optimization algorithms, and as such the way in which the most popular benchmark suites are designed implicitly guides some parts of algorithm design. One of these suites is the black-box optimization benchmarking (BBOB) suite of 24 single-objective noiseless functions, which has been a standard for over a decade. Within this problem suite, different instances of a single problem can be created, which is beneficial for testing the stability and invariance of algorithms under transformations. In this paper, we investigate the BBOB instance creation protocol by considering a set of 500 instances for each BBOB problem. Using exploratory landscape analysis, we show that the distribution of landscape features across BBOB instances is highly diverse for a large set of problems. In addition, we run a set of eight algorithms across these 500 instances, and investigate for which cases statistically significant differences in performance occur. We argue that, while the transformations applied in BBOB instances do indeed seem to preserve the high-level properties of the functions, their difference in practice should not be overlooked, particularly when treating the problems as box-constrained instead of unconstrained.
