Fen Xia

TreeMAN: Tree-enhanced Multimodal Attention Network for ICD Coding

May 29, 2023

Abstract: ICD coding assigns disease codes to electronic health records (EHRs) upon discharge, which is crucial for billing and clinical statistics. To improve the effectiveness and efficiency of manual coding, many methods have been proposed to automatically predict ICD codes from clinical notes. However, most previous works ignore the decisive information contained in the structured medical data in EHRs, which is hard to capture from noisy clinical notes. In this paper, we propose a Tree-enhanced Multimodal Attention Network (TreeMAN) that fuses tabular features and textual features into multimodal representations by enhancing the text representations with tree-based features via the attention mechanism. The tree-based features are constructed from decision trees learned on structured multimodal medical data, and they capture the decisive information for ICD coding. The same multi-label classifier used by previous text models can then be applied to the multimodal representations to predict ICD codes. Experiments on two MIMIC datasets show that our method outperforms prior state-of-the-art ICD coding approaches. The code is available at https://github.com/liu-zichen/TreeMAN.
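The fusion idea in this abstract, text representations enhanced with tree-based features through attention, can be illustrated with a minimal sketch. This is not the TreeMAN architecture: the function `enhance_with_tree_features`, the scaled dot-product scoring, the additive fusion, and the toy vectors are all assumptions made for illustration, and the real model is in the linked repository.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def enhance_with_tree_features(text_vecs, tree_vecs):
    """Hypothetical fusion step: each text vector attends over the
    tree-feature vectors and the attended summary is added to it."""
    d = len(tree_vecs[0])
    fused = []
    for t in text_vecs:
        # Scaled dot-product attention weights over tree features.
        weights = softmax([dot(t, f) / math.sqrt(d) for f in tree_vecs])
        # Weighted sum of tree-feature vectors.
        context = [sum(w * f[k] for w, f in zip(weights, tree_vecs))
                   for k in range(d)]
        # Additive fusion (an assumption; concatenation is another option).
        fused.append([a + b for a, b in zip(t, context)])
    return fused

# Toy inputs: two text vectors and two tree-feature embeddings.
text_vecs = [[1.0, 0.0], [0.0, 1.0]]
tree_vecs = [[2.0, 0.0], [0.0, 2.0]]
fused = enhance_with_tree_features(text_vecs, tree_vecs)
```

The fused vectors keep the shape of the text vectors, which is what would let a text-only multi-label classifier be reused on them unchanged.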

A General Distributed Dual Coordinate Optimization Framework for Regularized Loss Minimization

Aug 25, 2017

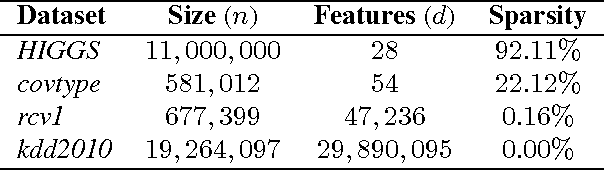

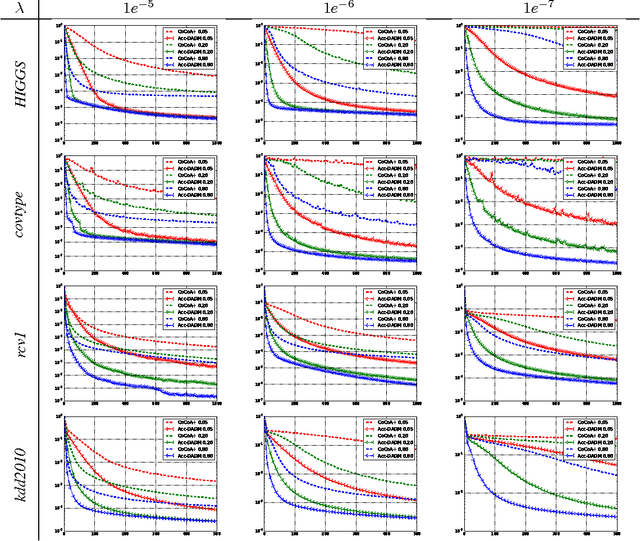

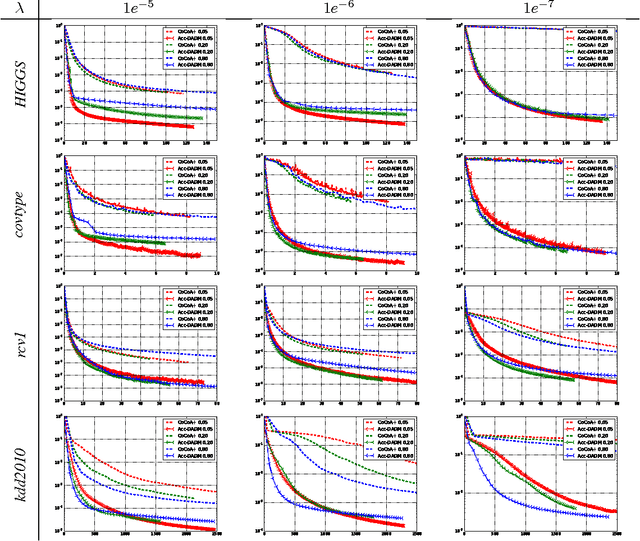

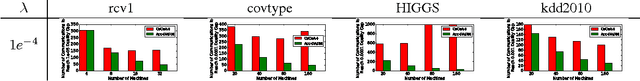

Abstract: In modern large-scale machine learning applications, the training data are often partitioned and stored on multiple machines. It is customary to employ the "data parallelism" approach, where the aggregated training loss is minimized without moving data across machines. In this paper, we introduce a novel distributed dual formulation for regularized loss minimization problems that can directly handle data parallelism in the distributed setting. This formulation allows us to systematically derive dual coordinate optimization procedures, which we refer to as Distributed Alternating Dual Maximization (DADM). The framework extends earlier studies (Boyd et al., 2011; Ma et al., 2015a; Jaggi et al., 2014; Yang, 2013) and comes with rigorous theoretical analyses. Moreover, with the help of the new formulation, we develop an accelerated version of DADM (Acc-DADM) by generalizing the acceleration technique of Shalev-Shwartz and Zhang (2014) to the distributed setting. We also provide theoretical results for the accelerated version; the new result improves on previous ones (Yang, 2013; Ma et al., 2015a), whose runtimes grow linearly in the condition number. Our empirical studies validate our theory and show that our accelerated approach significantly improves on the previous state-of-the-art distributed dual coordinate optimization algorithms.
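To make the setting concrete, here is a minimal single-process sketch of data-parallel dual coordinate ascent for ridge regression. It is not DADM or Acc-DADM: it uses a simple scheme in which each partition runs local dual coordinate updates and the proposed changes are averaged, in the spirit of the earlier averaging approaches the abstract cites. The squared loss, the function names, the toy data, and the partitioning are all assumptions made for illustration.

```python
import random

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def primal(w, X, y, lam):
    # P(w) = (1/n) * sum 0.5*(x_i.w - y_i)^2 + (lam/2)*||w||^2
    n = len(X)
    loss = sum(0.5 * (dot(x, w) - t) ** 2 for x, t in zip(X, y)) / n
    return loss + 0.5 * lam * dot(w, w)

def dual(alpha, w, y, lam):
    # D(alpha) = (1/n) * sum (alpha_i*y_i - alpha_i^2/2) - (lam/2)*||w(alpha)||^2
    n = len(y)
    conj = sum(a * t - 0.5 * a * a for a, t in zip(alpha, y)) / n
    return conj - 0.5 * lam * dot(w, w)

def local_sdca(idx, X, y, alpha, w, lam, n, passes, rng):
    """Dual coordinate ascent on one partition, using a private copy of w.
    Returns the proposed change for each local dual variable."""
    w_loc = list(w)
    delta = {i: 0.0 for i in idx}
    for _ in range(passes * len(idx)):
        i = rng.choice(idx)
        x = X[i]
        a_i = alpha[i] + delta[i]
        # Closed-form coordinate maximizer for the squared loss.
        step = (y[i] - dot(x, w_loc) - a_i) / (1.0 + dot(x, x) / (lam * n))
        delta[i] += step
        for k in range(len(w_loc)):
            w_loc[k] += step * x[k] / (lam * n)
    return delta

def distributed_sdca(X, y, parts, lam, rounds=100, passes=5, seed=0):
    rng = random.Random(seed)
    n, d, K = len(X), len(X[0]), len(parts)
    alpha, w = [0.0] * n, [0.0] * d
    for _ in range(rounds):
        # Each "machine" works only on its own partition of the data.
        deltas = [local_sdca(p, X, y, alpha, w, lam, n, passes, rng)
                  for p in parts]
        # Aggregate by averaging; this keeps w = (1/(lam*n)) * sum alpha_i x_i.
        for delta in deltas:
            for i, dlt in delta.items():
                alpha[i] += dlt / K
                for k in range(d):
                    w[k] += dlt * X[i][k] / (K * lam * n)
    return alpha, w

# Toy data split across two simulated machines.
X = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.6, -0.2]]
y = [1.0, -1.0, 0.2, 0.5]
parts = [[0, 1], [2, 3]]
lam = 1.0
alpha, w = distributed_sdca(X, y, parts, lam)
gap = primal(w, X, y, lam) - dual(alpha, w, y, lam)
```

The duality gap `gap` serves as a convergence certificate: it is nonnegative by weak duality and shrinks toward zero as the rounds proceed. Only the dual changes and the shared vector `w` cross partition boundaries, never the raw examples.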
