Fangyu Li

Effective and Efficient Training for Sequential Recommendation Using Cumulative Cross-Entropy Loss

Jan 03, 2023

Abstract: A growing body of research focuses on sequential recommender systems, which aim to model dynamic sequence representations precisely. However, the loss functions most commonly used in state-of-the-art sequential recommendation models have essential limitations. To name a few: Bayesian Personalized Ranking (BPR) loss suffers from vanishing gradients caused by extensive negative sampling and prediction biases; Binary Cross-Entropy (BCE) loss depends on the number of negative samples, so it is likely to ignore valuable negative examples and reduce training efficiency; Cross-Entropy (CE) loss focuses only on the last timestamp of the training sequence, which leads to low utilization of sequence information and an inferior user sequence representation. To avoid these limitations, in this paper we propose to calculate a Cumulative Cross-Entropy (CCE) loss over the sequence. CCE is simple and direct, and it enjoys the virtues of painless deployment, no negative sampling, and effective and efficient training. We conduct extensive experiments on five benchmark datasets to demonstrate the effectiveness and efficiency of CCE. The results show that employing CCE loss on three state-of-the-art models, GRU4Rec, SASRec, and S3-Rec, yields average improvements in full-ranking NDCG@5 of 125.63%, 69.90%, and 33.24%, respectively. With CCE, the models' performance curves on the test data rise rapidly with wall-clock time and are superior to those of the other loss functions throughout almost the whole training process.
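The contrast the abstract draws between last-step CE and a cumulative loss can be illustrated with a minimal sketch. It assumes that CCE means a full-softmax cross-entropy averaged over every position of the sequence; the abstract does not say whether the paper sums or averages, and the function names below are hypothetical rather than taken from the authors' code.

```python
import math

def log_softmax(scores):
    """Numerically stable log-softmax over one position's item scores."""
    m = max(scores)
    log_z = m + math.log(sum(math.exp(s - m) for s in scores))
    return [s - log_z for s in scores]

def ce_last_step(logits, targets):
    """Cross-entropy computed on the final position only."""
    return -log_softmax(logits[-1])[targets[-1]]

def cumulative_ce(logits, targets):
    """Cross-entropy averaged over all positions (assumed reading of CCE)."""
    losses = [-log_softmax(step)[t] for step, t in zip(logits, targets)]
    return sum(losses) / len(losses)

# Toy sequence: 3 positions, 4 candidate items, one next-item target each.
logits = [[2.0, 0.5, 0.1, -1.0],
          [0.0, 0.0, 0.0, 0.0],
          [0.3, 1.5, -0.2, 0.0]]
targets = [0, 2, 1]

print("last-step CE:", ce_last_step(logits, targets))
print("cumulative CE:", cumulative_ce(logits, targets))
```

Both functions score against the full item set, so no negative sampling is involved. The only difference is that the cumulative version draws a training signal from every position instead of just the last one.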

Embodied View-Contrastive 3D Feature Learning

Jul 10, 2019

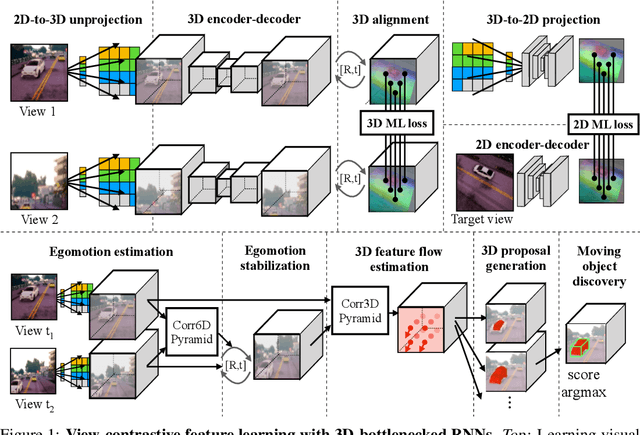

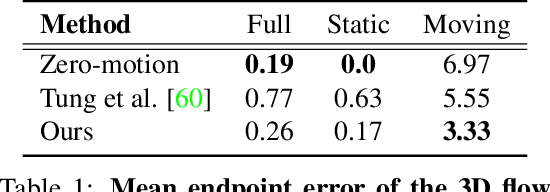

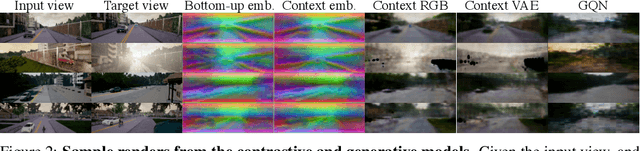

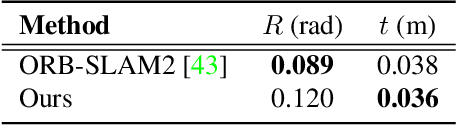

Abstract: Humans can effortlessly imagine the occluded side of objects in a photograph. We do not simply see the photograph as a flat 2D surface; we perceive the 3D visual world captured in it, using our imagination to inpaint the information lost during camera projection. We propose neural architectures that similarly learn to approximately imagine abstractions of the 3D world depicted in 2D images. We show that this capability suffices to localize moving objects in 3D, without using any human annotations. Our models are recurrent neural networks that consume RGB or RGB-D videos and learn to predict novel views of the scene from queried camera viewpoints. They are equipped with a 3D representation bottleneck that learns an egomotion-stabilized and geometrically consistent deep feature map of the 3D world scene. They estimate camera motion from frame to frame and cancel it from the extracted 2D features before fusing them in the latent 3D map. We handle multimodality and stochasticity in prediction using ranking-based contrastive losses, and show that they can scale to photorealistic imagery, in contrast to regression or VAE alternatives. Our model proposes 3D boxes for moving objects by estimating a 3D motion flow field between its temporally consecutive 3D imaginations and thresholding the motion magnitude: camera motion has been cancelled in the latent 3D space, so any non-zero motion indicates an independently moving object. Our work underlines the importance of 3D representations and egomotion stabilization for visual recognition, and proposes a viable computational model for learning 3D visual feature representations and 3D object bounding boxes, supervised by moving and watching objects move.
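The box-proposal step described in the abstract (thresholding the magnitude of a 3D flow field once camera motion has been cancelled) can be sketched roughly as follows. This is an illustration under simplifying assumptions, not the authors' method: the flow is a plain nested list of `(dx, dy, dz)` tuples on a voxel grid, the threshold value is arbitrary, and a single axis-aligned box is drawn around all moving voxels rather than one box per object. All names are hypothetical.

```python
import math

def moving_voxels(flow, threshold):
    """Return (x, y, z) indices whose flow magnitude exceeds the threshold."""
    hits = []
    for x, plane in enumerate(flow):
        for y, row in enumerate(plane):
            for z, (dx, dy, dz) in enumerate(row):
                if math.sqrt(dx * dx + dy * dy + dz * dz) > threshold:
                    hits.append((x, y, z))
    return hits

def bounding_box(voxels):
    """Axis-aligned (min corner, max corner) around the voxels, or None."""
    if not voxels:
        return None
    xs, ys, zs = zip(*voxels)
    return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))

# Toy 3x3x3 grid: static everywhere except two neighbouring voxels.
flow = [[[(0.0, 0.0, 0.0) for _ in range(3)] for _ in range(3)] for _ in range(3)]
flow[1][1][1] = (0.5, 0.0, 0.0)
flow[1][2][1] = (0.0, 0.4, 0.3)

hits = moving_voxels(flow, threshold=0.1)
print(hits, bounding_box(hits))
```

Because the flow is assumed to be egomotion-stabilized, static scene voxels have near-zero magnitude and fall below the threshold. Separating several moving objects would need an extra grouping step, such as connected components, which this sketch omits.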

PoTrojan: powerful neural-level trojan designs in deep learning models

Feb 08, 2018

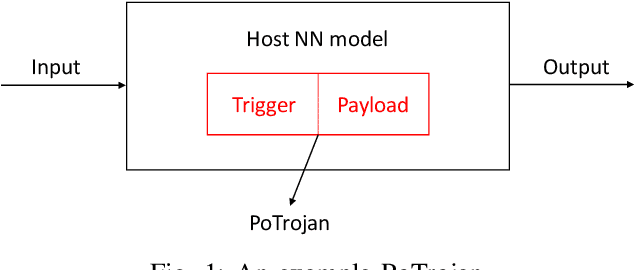

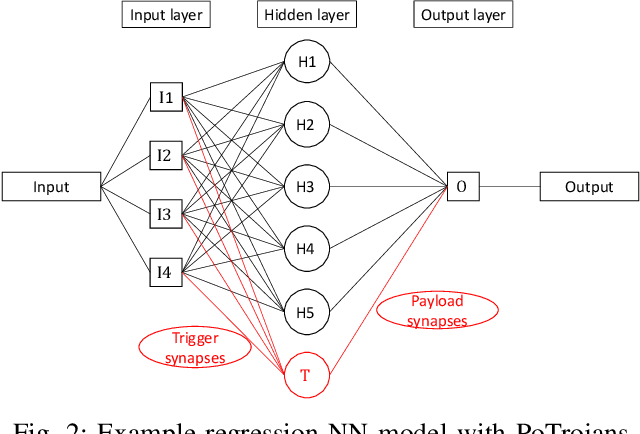

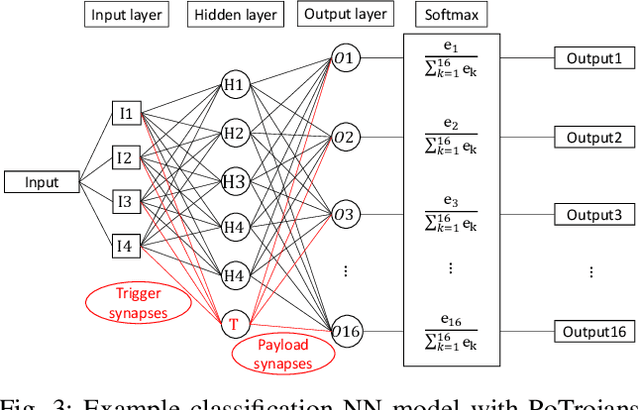

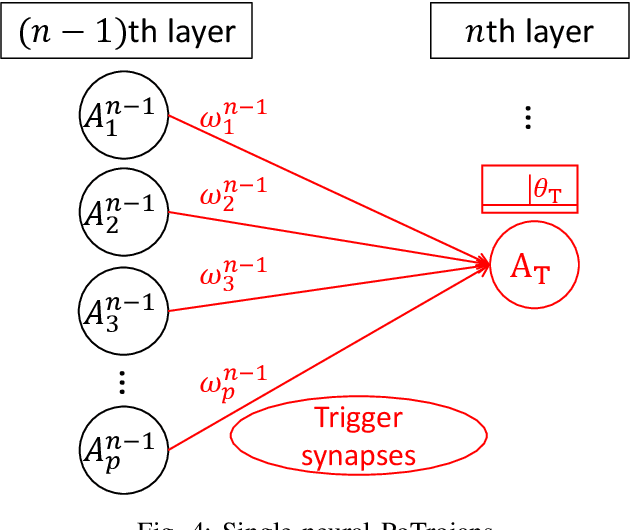

Abstract: With the popularity of deep learning (DL), artificial intelligence (AI) has been applied in many areas of human life. The neural network, or artificial neural network (NN), the main technique behind DL, has been extensively studied to facilitate computer vision and natural language recognition. However, the more we rely on information technology, the more vulnerable we are. That is, malicious NNs could pose a huge threat in the so-called coming AI era. In this paper, for the first time in the literature, we propose a novel approach to design and insert powerful neural-level trojans, or PoTrojans, in pre-trained NN models. Most of the time, PoTrojans remain inactive and do not affect the normal functions of their host NN models. PoTrojans can be triggered only under very rare conditions. Once activated, however, PoTrojans can cause the host NN models to malfunction, producing false predictions or classifications, which is a significant threat to human society in the AI era. We explain the principles of PoTrojans and the ease of designing and inserting them in pre-trained deep learning models. PoTrojans do not modify the existing architecture or parameters of the pre-trained models and require no re-training. Hence, the proposed method is very efficient.
