Elias Zea

Deep Learning-Driven Downscaling for Climate Risk Assessment of Projected Temperature Extremes in the Nordic Region

Nov 05, 2025

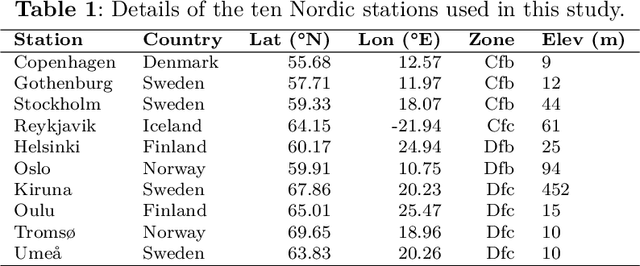

Abstract: Rapid changes and increasing climatic variability across the widely varied Köppen-Geiger regions of northern Europe generate significant needs for adaptation. Regional planning needs high-resolution projected temperatures. This work presents an integrative downscaling framework that incorporates Vision Transformer (ViT), Convolutional Long Short-Term Memory (ConvLSTM), and Geospatial Spatiotemporal Transformer with Attention and Imbalance-Aware Network (GeoStaNet) models. The framework is evaluated with a multicriteria decision system, Deep Learning-TOPSIS (DL-TOPSIS), for ten strategically chosen meteorological stations encompassing the temperate oceanic (Cfb), subpolar oceanic (Cfc), warm-summer continental (Dfb), and subarctic (Dfc) climate regions. Norwegian Earth System Model (NorESM2-LM) Coupled Model Intercomparison Project Phase 6 (CMIP6) outputs were bias-corrected during the 1951-2014 period and subsequently validated against earlier observations of day-to-day temperature metrics and diurnal range statistics. The ViT showed improved performance (Root Mean Squared Error (RMSE): 1.01 degrees C; R^2: 0.92), allowing for production of credible downscaled projections. Under the SSP5-8.5 scenario, the Dfc and Dfb climate zones are projected to warm by 4.8 degrees C and 3.9 degrees C, respectively, by 2100, with expansion in the diurnal temperature range by more than 1.5 degrees C. The Time of Emergence signal first appears in subarctic winter seasons (Dfc: approximately 2032), signifying an urgent need for adaptation measures. The presented framework offers station-based, high-resolution estimates of uncertainties and extremes, with direct uses for adaptation policy over high-latitude regions with fast environmental change.
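For readers unfamiliar with TOPSIS, the following is a minimal sketch of the classical method only. The abstract does not describe how the deep-learning component of DL-TOPSIS sets weights or criteria, so the equal weights, the two criteria (RMSE and correlation), and the candidate names `model_a` to `model_c` are all hypothetical and chosen purely for illustration.

```python
import math


def topsis(matrix, weights, is_benefit):
    """Classical TOPSIS closeness scores, one per row of `matrix`.

    matrix: rows are alternatives, columns are criteria
    weights: one non-negative weight per criterion
    is_benefit: True where larger is better, False where smaller is better
    Assumes no criterion column is all zeros and the rows are not all identical.
    """
    n_crit = len(weights)
    # Vector-normalize each criterion column, then apply the weights.
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n_crit)]
    weighted = [
        [weights[j] * row[j] / norms[j] for j in range(n_crit)]
        for row in matrix
    ]
    # Ideal best and ideal worst value for each criterion.
    columns = list(zip(*weighted))
    best = [max(col) if is_benefit[j] else min(col) for j, col in enumerate(columns)]
    worst = [min(col) if is_benefit[j] else max(col) for j, col in enumerate(columns)]
    # Closeness: relative distance to the worst point (1 is best, 0 is worst).
    scores = []
    for row in weighted:
        d_best = math.dist(row, best)
        d_worst = math.dist(row, worst)
        scores.append(d_worst / (d_best + d_worst))
    return scores


# Hypothetical candidates scored on [RMSE (lower is better), r (higher is better)].
candidates = {
    "model_a": [1.0, 0.9],
    "model_b": [2.0, 0.8],
    "model_c": [3.0, 0.5],
}
scores = topsis(list(candidates.values()), [0.5, 0.5], [False, True])
ranking = sorted(zip(candidates, scores), key=lambda pair: pair[1], reverse=True)
```

An alternative that is best on every criterion coincides with the ideal point and receives a closeness of 1, which is why `model_a` ranks first in this toy example.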

Regional climate projections using a deep-learning-based model-ranking and downscaling framework: Application to European climate zones

Feb 28, 2025

Abstract: Accurate regional climate forecasting calls for high-resolution downscaling of Global Climate Models (GCMs). This work presents a deep-learning-based multi-model evaluation and downscaling framework that ranks 32 Coupled Model Intercomparison Project Phase 6 (CMIP6) models using a Deep Learning-TOPSIS (DL-TOPSIS) mechanism and then refines outputs using advanced deep-learning models. Using nine performance criteria, five Köppen-Geiger climate zones -- Tropical, Arid, Temperate, Continental, and Polar -- are investigated over four seasons. While TaiESM1 and CMCC-CM2-SR5 show notable biases, ranking results show that NorESM2-LM, GISS-E2-1-G, and HadGEM3-GC31-LL outperform other models. Four models are used to downscale the top-ranked GCMs to 0.1$^{\circ}$ resolution: Vision Transformer (ViT), Geospatial Spatiotemporal Transformer with Attention and Imbalance-Aware Network (GeoSTANet), CNN-LSTM, and Convolutional Long Short-Term Memory (ConvLSTM). Effectively capturing temperature extremes (TXx, TNn), GeoSTANet achieves the highest accuracy (Root Mean Square Error (RMSE) = 1.57$^{\circ}$C, Kling-Gupta Efficiency (KGE) = 0.89, Nash-Sutcliffe Efficiency (NSE) = 0.85, Correlation ($r$) = 0.92), reducing RMSE by 20% relative to ConvLSTM. CNN-LSTM and ConvLSTM do well in Continental and Temperate zones; ViT struggles with fine-scale temperature fluctuations. These results confirm that multi-criteria ranking improves GCM selection for regional climate studies and that transformer-based downscaling outperforms conventional deep-learning methods. This framework offers a scalable method to enhance high-resolution climate projections, benefiting impact assessments and adaptation plans.
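The skill scores quoted above have standard textbook definitions, sketched below for reference. The abstract does not say which KGE variant the authors used, so the 2009 formulation (correlation, variability ratio, bias ratio) is an assumption here, and the function names are illustrative.

```python
import math


def _mean(values):
    return sum(values) / len(values)


def rmse(obs, sim):
    """Root mean square error between observed and simulated series."""
    return math.sqrt(_mean([(s - o) ** 2 for o, s in zip(obs, sim)]))


def nse(obs, sim):
    """Nash-Sutcliffe efficiency: 1 is perfect, 0 matches the observed mean."""
    mean_obs = _mean(obs)
    residual = sum((o - s) ** 2 for o, s in zip(obs, sim))
    variance = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - residual / variance


def kge(obs, sim):
    """Kling-Gupta efficiency (2009 form, assumed; the source names no variant).

    Undefined when the observed mean or either standard deviation is zero,
    which can matter for temperatures expressed in degrees Celsius.
    """
    mean_obs, mean_sim = _mean(obs), _mean(sim)
    std_obs = math.sqrt(_mean([(o - mean_obs) ** 2 for o in obs]))
    std_sim = math.sqrt(_mean([(s - mean_sim) ** 2 for s in sim]))
    cov = _mean([(o - mean_obs) * (s - mean_sim) for o, s in zip(obs, sim)])
    r = cov / (std_obs * std_sim)      # linear correlation
    alpha = std_sim / std_obs          # variability ratio
    beta = mean_sim / mean_obs         # bias ratio
    return 1.0 - math.sqrt((r - 1) ** 2 + (alpha - 1) ** 2 + (beta - 1) ** 2)
```

A simulation with a constant offset keeps perfect correlation and variability but is penalized through the bias term in KGE and through the residual sum in NSE.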

Sparse wavefield reconstruction and denoising with boostlets

Feb 12, 2025

Abstract: Boostlets are spatiotemporal functions that decompose nondispersive wavefields into a collection of localized waveforms parametrized by dilations, hyperbolic rotations, and translations. We study the sparsity properties of boostlets and find that the resulting decompositions are significantly sparser than those of other state-of-the-art representation systems, such as wavelets and shearlets. This translates into improved denoising performance when hard-thresholding the boostlet coefficients. The results suggest that boostlets offer a natural framework for sparsely decomposing wavefields in unified space-time.
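Hard thresholding itself is independent of the transform that produces the coefficients. The sketch below shows only that step; it does not implement the boostlet transform, and the coefficient values and threshold are made up for illustration.

```python
def hard_threshold(coeffs, threshold):
    """Keep coefficients whose magnitude exceeds `threshold`; zero the rest.

    Works for real or complex coefficients because it compares abs(c).
    """
    return [c if abs(c) > threshold else 0.0 for c in coeffs]


def sparsity(coeffs):
    """Fraction of coefficients that are exactly zero."""
    return sum(1 for c in coeffs if c == 0) / len(coeffs)


# Hypothetical transform coefficients: two strong components plus small noise.
noisy_coeffs = [0.05, -2.0, 0.3, 1.5]
denoised_coeffs = hard_threshold(noisy_coeffs, 0.5)
```

The sparser the representation, the more of the signal energy sits in a few large coefficients, so more of the small, noise-dominated coefficients can be zeroed without distorting the signal.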

A data-driven two-microphone method for in-situ sound absorption measurements

Feb 06, 2025

Abstract: This work presents a data-driven approach to estimating the sound absorption coefficient of an infinite porous slab using a neural network and a two-microphone measurement on a finite porous sample. A 1D-convolutional network predicts the sound absorption coefficient from the complex-valued transfer function between the sound pressure measured at the two microphone positions. The network is trained and validated with numerical data generated by a boundary element model using the Delany-Bazley-Miki model, demonstrating accurate predictions for various numerical samples. The method is experimentally validated with baffled rectangular samples of a fibrous material, where sample size and source height are varied. The results show that the neural network offers the possibility to reliably predict the in-situ sound absorption of a porous material using the traditional two-microphone method as if the sample were infinite. The normal-incidence sound absorption coefficient obtained by the network compares well with that obtained theoretically and in an impedance tube. The proposed method has promising perspectives for estimating the sound absorption coefficient of acoustic materials after installation and in realistic operational conditions.
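As a rough illustration of the theoretical reference mentioned above, the sketch below evaluates the Miki form of the Delany-Bazley model for an infinite, rigid-backed layer at normal incidence. It is not the authors' boundary element model or network. The rigid backing, the air properties, the flow resistivity, and the thickness are assumptions, and the empirical coefficients are the commonly quoted Miki values, which should be checked against a reference before use.

```python
import cmath
import math

RHO0 = 1.21   # air density in kg/m^3 (assumed)
C0 = 343.0    # speed of sound in air in m/s (assumed)


def miki(frequency_hz, flow_resistivity):
    """Characteristic impedance and wavenumber, e^{+j omega t} convention.

    flow_resistivity is in Pa.s/m^2. Coefficients are the commonly quoted
    Miki values, written in terms of x = 1e3 * f / sigma.
    """
    x = 1e3 * frequency_hz / flow_resistivity
    z_c = RHO0 * C0 * (1 + 5.50 * x ** -0.632 - 1j * 8.43 * x ** -0.632)
    k = (2 * math.pi * frequency_hz / C0) * (
        1 + 7.81 * x ** -0.618 - 1j * 11.41 * x ** -0.618
    )
    return z_c, k


def absorption_rigid_backed(frequency_hz, flow_resistivity, thickness_m):
    """Normal-incidence absorption of an infinite layer on a rigid wall."""
    z_c, k = miki(frequency_hz, flow_resistivity)
    kd = k * thickness_m
    z_s = -1j * z_c * cmath.cos(kd) / cmath.sin(kd)   # surface impedance
    z0 = RHO0 * C0
    reflection = (z_s - z0) / (z_s + z0)
    return 1.0 - abs(reflection) ** 2


# Hypothetical sample: 5 cm layer with a flow resistivity of 10 kPa.s/m^2.
alpha_low = absorption_rigid_backed(100.0, 10_000.0, 0.05)
alpha_mid = absorption_rigid_backed(1000.0, 10_000.0, 0.05)
```

For a layer of this kind the absorption is small at low frequencies, where the layer is thin compared with the wavelength, and rises toward the kilohertz range.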

A continuous boostlet transform for acoustic waves in space-time

Mar 17, 2024

Abstract: Sparse representation systems that encode the signal architecture have had an exceptional impact on sampling and compression paradigms. Remarkable examples are multi-scale directional systems, which, similar to our vision system, encode the underlying architecture of natural images with sparse features. Inspired by this philosophy, the present study introduces a representation system for acoustic waves in 2D space-time, referred to as the boostlet transform, which encodes sparse features of natural acoustic fields with the Poincaré group and isotropic dilations. Continuous boostlets, $\psi_{a,\theta,\tau}(\varsigma) = a^{-1} \psi \left(D_a^{-1} B_\theta^{-1}(\varsigma-\tau)\right) \in L^2(\mathbb{R}^2)$, are spatiotemporal functions parametrized with dilations $a > 0$, Lorentz boosts $\theta \in \mathbb{R}$, and translations $\tau \in \mathbb{R}^2$ in space-time. The admissibility condition requires that boostlets are supported away from the acoustic radiation cone, i.e., have phase velocities other than the speed of sound, resulting in a peculiar scaling function. The continuous boostlet transform is an isometry for $L^2(\mathbb{R}^2)$, and a sparsity analysis with experimentally measured fields indicates that boostlet coefficients decay faster than wavelets, curvelets, wave atoms, and shearlets. The uncertainty principles and minimizers associated with the boostlet transform are derived and interpreted physically.
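The coordinate change inside the boostlet definition can be sketched numerically. The abstract does not give the matrices, so the following assumes $\varsigma = (x, t)$ with the speed of sound normalized to 1, $D_a = a I$ for the isotropic dilation, and $B_\theta$ as the hyperbolic rotation with entries $\cosh\theta$ and $\sinh\theta$. The sign convention of the boost and the mother function `psi` are placeholders.

```python
import math


def boost_inverse(theta, point):
    """Apply B_theta^{-1} to (x, t), with B_theta = [[cosh, sinh], [sinh, cosh]].

    The inverse is [[cosh, -sinh], [-sinh, cosh]] because cosh^2 - sinh^2 = 1.
    The sign convention is an assumption, not taken from the source.
    """
    x, t = point
    c, s = math.cosh(theta), math.sinh(theta)
    return (c * x - s * t, -s * x + c * t)


def boostlet_argument(a, theta, tau, point):
    """Evaluate D_a^{-1} B_theta^{-1} (point - tau), assuming D_a = a * I."""
    shifted = (point[0] - tau[0], point[1] - tau[1])
    bx, bt = boost_inverse(theta, shifted)
    return (bx / a, bt / a)


def boostlet(psi, a, theta, tau, point):
    """psi_{a,theta,tau}(point) = a^{-1} psi(D_a^{-1} B_theta^{-1}(point - tau))."""
    return psi(boostlet_argument(a, theta, tau, point)) / a


def minkowski_interval(point):
    """x^2 - t^2, the quantity that a Lorentz boost leaves unchanged."""
    return point[0] ** 2 - point[1] ** 2
```

Because a boost preserves $x^2 - t^2$, it maps the cone $x = \pm t$ onto itself, which is consistent with the admissibility condition being phrased relative to the radiation cone.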

An experiment on an automated literature survey of data-driven speech enhancement methods

Oct 10, 2023

Abstract: The increasing number of scientific publications in acoustics, in general, presents difficulties in conducting traditional literature surveys. This work explores the use of a generative pre-trained transformer (GPT) model to automate a literature survey of 116 articles on data-driven speech enhancement methods. The main objective is to evaluate the capabilities and limitations of the model in providing accurate responses to specific queries about the papers selected from a reference human-based survey. While we see great potential to automate literature surveys in acoustics, improvements are needed to address technical questions more clearly and accurately.
