Dimitrios A. Adamos

School of Music Studies, Aristotle University of Thessaloniki, Neuroinformatics Group, Aristotle University of Thessaloniki

EEG-D3: A Solution to the Hidden Overfitting Problem of Deep Learning Models

Dec 15, 2025

Abstract:Deep learning for decoding EEG signals has gained traction, with many claims to state-of-the-art accuracy. However, despite the convincing benchmark performance, successful translation to real applications is limited. The frequent disconnect between performance on controlled BCI benchmarks and its lack of generalisation to practical settings indicates hidden overfitting problems. We introduce Disentangled Decoding Decomposition (D3), a weakly supervised method for training deep learning models across EEG datasets. By predicting the place in the respective trial sequence from which the input window was sampled, EEG-D3 separates latent components of brain activity, akin to non-linear ICA. We utilise a novel model architecture with fully independent sub-networks for strict interpretability. We outline a feature interpretation paradigm to contrast the component activation profiles on different datasets and inspect the associated temporal and spatial filters. The proposed method reliably separates latent components of brain activity on motor imagery data. Training downstream classifiers on an appropriate subset of these components prevents hidden overfitting caused by task-correlated artefacts, which severely affects end-to-end classifiers. We further exploit the linearly separable latent space for effective few-shot learning on sleep stage classification. The ability to distinguish genuine components of brain activity from spurious features results in models that avoid the hidden overfitting problem and generalise well to real-world applications, while requiring only minimal labelled data. With interest to the neuroscience community, the proposed method gives researchers a tool to separate individual brain processes and potentially even uncover heretofore unknown dynamics.

Advancing Brainwave Modeling with a Codebook-Based Foundation Model

May 22, 2025

Abstract:Recent advances in large-scale pre-trained Electroencephalogram (EEG) models have shown great promise, driving progress in Brain-Computer Interfaces (BCIs) and healthcare applications. However, despite their success, many existing pre-trained models have struggled to fully capture the rich information content of neural oscillations, a limitation that fundamentally constrains their performance and generalizability across diverse BCI tasks. This limitation is frequently rooted in suboptimal architectural design choices which constrain their representational capacity. In this work, we introduce LaBraM++, an enhanced Large Brainwave Foundation Model (LBM) that incorporates principled improvements grounded in robust signal processing foundations. LaBraM++ demonstrates substantial gains across a variety of tasks, consistently outperforming its originally-based architecture and achieving competitive results when compared to other open-source LBMs. Its superior performance and training efficiency highlight its potential as a strong foundation for future advancements in LBMs.

Latent Alignment with Deep Set EEG Decoders

Nov 29, 2023

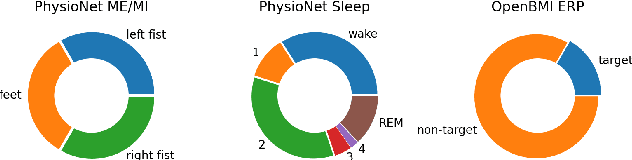

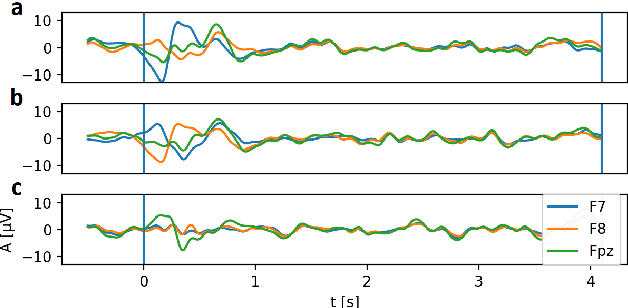

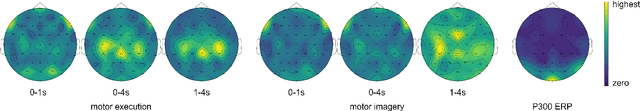

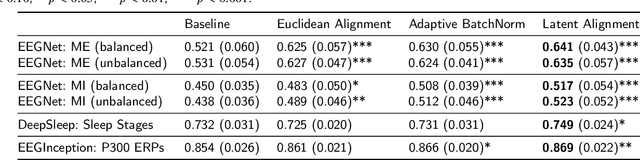

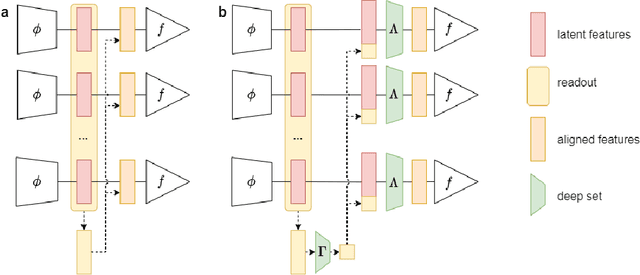

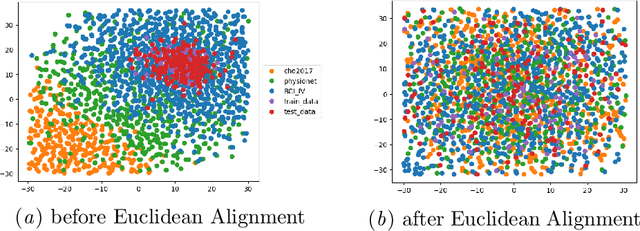

Abstract:The variability in EEG signals between different individuals poses a significant challenge when implementing brain-computer interfaces (BCI). Commonly proposed solutions to this problem include deep learning models, due to their increased capacity and generalization, as well as explicit domain adaptation techniques. Here, we introduce the Latent Alignment method that won the Benchmarks for EEG Transfer Learning (BEETL) competition and present its formulation as a deep set applied on the set of trials from a given subject. Its performance is compared to recent statistical domain adaptation techniques under various conditions. The experimental paradigms include motor imagery (MI), oddball event-related potentials (ERP) and sleep stage classification, where different well-established deep learning models are applied on each task. Our experimental results show that performing statistical distribution alignment at later stages in a deep learning model is beneficial to the classification accuracy, yielding the highest performance for our proposed method. We further investigate practical considerations that arise in the context of using deep learning and statistical alignment for EEG decoding. In this regard, we study class-discriminative artifacts that can spuriously improve results for deep learning models, as well as the impact of class-imbalance on alignment. We delineate a trade-off relationship between increased classification accuracy when alignment is performed at later modeling stages, and susceptibility to class-imbalance in the set of trials that the statistics are computed on.
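The statistical alignment idea mentioned in this abstract can be illustrated with a minimal sketch. It assumes the simplest possible form of alignment: each feature is standardised using the mean and standard deviation computed over the set of trials from one subject. The function name `align_trials`, the `eps` parameter, and the toy data are hypothetical and are not taken from the paper, whose actual formulation as a deep set may differ.

```python
import statistics


def align_trials(features, eps=1e-8):
    """Standardise each feature over one subject's set of trials.

    features: list of trials, each a list of feature values.
    This is an assumed, simplified stand-in for statistical alignment,
    not the paper's implementation.
    """
    n_features = len(features[0])
    # Per-feature statistics are computed across all trials of the subject.
    means = [statistics.fmean([t[j] for t in features]) for j in range(n_features)]
    stds = [statistics.pstdev([t[j] for t in features]) for j in range(n_features)]
    return [
        [(t[j] - means[j]) / (stds[j] + eps) for j in range(n_features)]
        for t in features
    ]


# Two hypothetical subjects whose features differ only in offset and scale.
subject_a = [[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]]
subject_b = [[101.0, -5.0], [102.0, 0.0], [103.0, 5.0]]

aligned_a = align_trials(subject_a)
aligned_b = align_trials(subject_b)
```

After alignment the two toy subjects share the same feature distribution, so a classifier trained on one could be applied to the other. Because the statistics depend on which trials are in the set, a skewed class mix in that set shifts the result, which is the class-imbalance susceptibility the abstract describes.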

2021 BEETL Competition: Advancing Transfer Learning for Subject Independence & Heterogenous EEG Data Sets

Feb 14, 2022

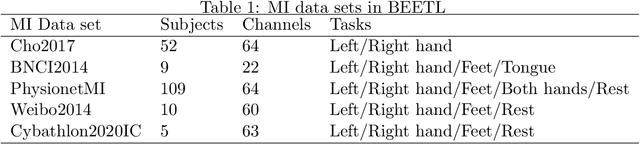

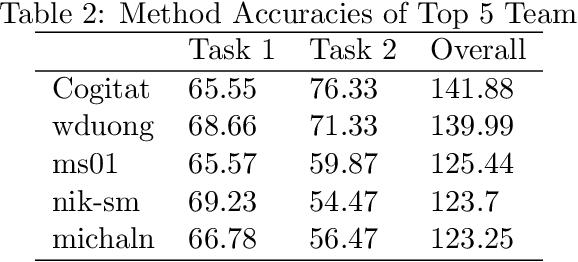

Abstract:Transfer learning and meta-learning offer some of the most promising avenues to unlock the scalability of healthcare and consumer technologies driven by biosignal data. This is because current methods cannot generalise well across human subjects' data and handle learning from different heterogeneously collected data sets, thus limiting the scale of training data. On the other side, developments in transfer learning would benefit significantly from a real-world benchmark with immediate practical application. Therefore, we pick electroencephalography (EEG) as an exemplar for what makes biosignal machine learning hard. We design two transfer learning challenges around diagnostics and Brain-Computer-Interfacing (BCI), that have to be solved in the face of low signal-to-noise ratios, major variability among subjects, differences in the data recording sessions and techniques, and even between the specific BCI tasks recorded in the dataset. Task 1 is centred on the field of medical diagnostics, addressing automatic sleep stage annotation across subjects. Task 2 is centred on Brain-Computer Interfacing (BCI), addressing motor imagery decoding across both subjects and data sets. The BEETL competition with its over 30 competing teams and its 3 winning entries brought attention to the potential of deep transfer learning and combinations of set theory and conventional machine learning techniques to overcome the challenges. The results set a new state-of-the-art for the real-world BEETL benchmark.

Team Cogitat at NeurIPS 2021: Benchmarks for EEG Transfer Learning Competition

Feb 01, 2022

Abstract:Building subject-independent deep learning models for EEG decoding faces the challenge of strong covariate-shift across different datasets, subjects and recording sessions. Our approach to address this difficulty is to explicitly align feature distributions at various layers of the deep learning model, using both simple statistical techniques as well as trainable methods with more representational capacity. This follows in a similar vein as covariance-based alignment methods, often used in a Riemannian manifold context. The methodology proposed herein won first place in the 2021 Benchmarks in EEG Transfer Learning (BEETL) competition, hosted at the NeurIPS conference. The first task of the competition consisted of sleep stage classification, which required the transfer of models trained on younger subjects to perform inference on multiple subjects of older age groups without personalized calibration data, requiring subject-independent models. The second task required transferring models trained on the subjects of one or more source motor imagery datasets to perform inference on two target datasets, providing a small set of personalized calibration data for multiple test subjects.

EEGminer: Discovering Interpretable Features of Brain Activity with Learnable Filters

Oct 19, 2021

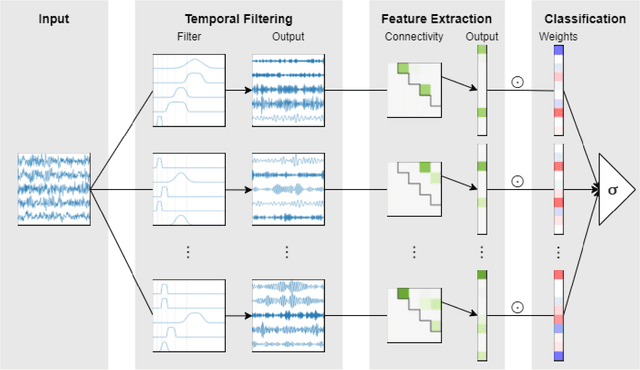

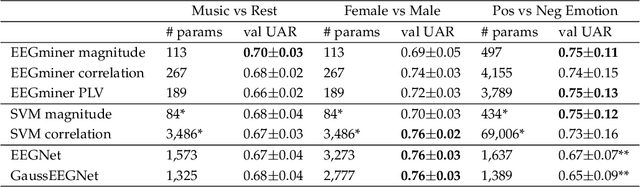

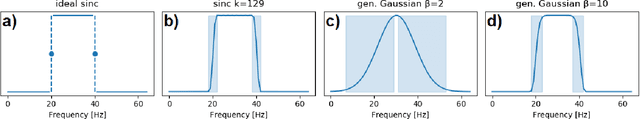

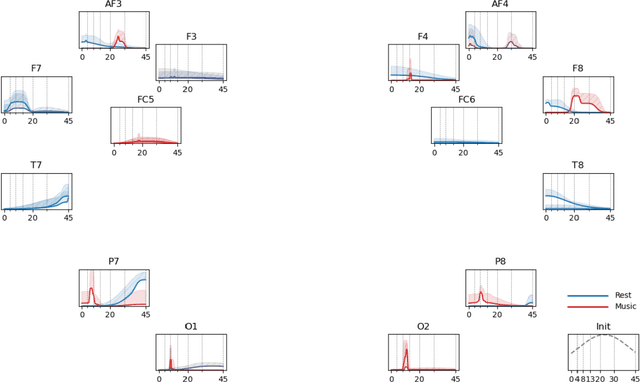

Abstract:Patterns of brain activity are associated with different brain processes and can be used to identify different brain states and make behavioral predictions. However, the relevant features are not readily apparent and accessible. To mine informative latent representations from multichannel EEG recordings, we propose a novel differentiable EEG decoding pipeline consisting of learnable filters and a pre-determined feature extraction module. Specifically, we introduce filters parameterized by generalized Gaussian functions that offer a smooth derivative for stable end-to-end model training and allow for learning interpretable features. For the feature module, we use signal magnitude and functional connectivity. We demonstrate the utility of our model towards emotion recognition from EEG signals on the SEED dataset, as well as on a new EEG dataset of unprecedented size (i.e., 763 subjects), where we identify consistent trends of music perception and related individual differences. The discovered features align with previous neuroscience studies and offer new insights, such as marked differences in the functional connectivity profile between left and right temporal areas during music listening. This agrees with the respective specialisation of the temporal lobes regarding music perception proposed in the literature.
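A filter parameterized by a generalized Gaussian function can be sketched as follows. This assumes the filter is defined by its magnitude response over frequency, using the standard generalized Gaussian form exp(-(|f - center| / width)^shape). The function and parameter names (`generalized_gaussian_response`, `center`, `width`, `shape`) are hypothetical, and the paper's exact parameterization may differ.

```python
import math


def generalized_gaussian_response(freqs, center, width, shape):
    """Return exp(-(|f - center| / width) ** shape) for each frequency f.

    Assumed frequency-domain form for illustration only:
    center sets the pass-band location, width its extent, and
    shape how flat the top is and how steep the edges are.
    """
    if width <= 0 or shape <= 0:
        raise ValueError("width and shape must be positive")
    return [math.exp(-((abs(f - center) / width) ** shape)) for f in freqs]


freqs = [float(f) for f in range(0, 41)]  # 0 to 40 Hz in 1 Hz steps

# shape=2 gives an ordinary Gaussian bump around 10 Hz.
gaussian_band = generalized_gaussian_response(freqs, center=10.0, width=2.0, shape=2.0)

# A larger shape value approaches a flat-topped band-pass with sharper edges.
flat_band = generalized_gaussian_response(freqs, center=10.0, width=2.0, shape=8.0)
```

All three parameters enter the response through smooth operations, which is consistent with the abstract's point that such filters can be trained end to end by gradient descent, and the learned centre and width can be read off directly as a frequency band.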

A Tutorial on Graph Theory for Brain Signal Analysis

Jul 11, 2020

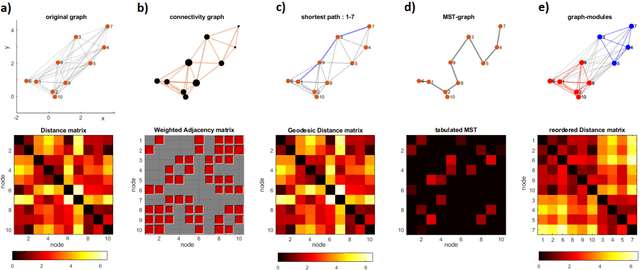

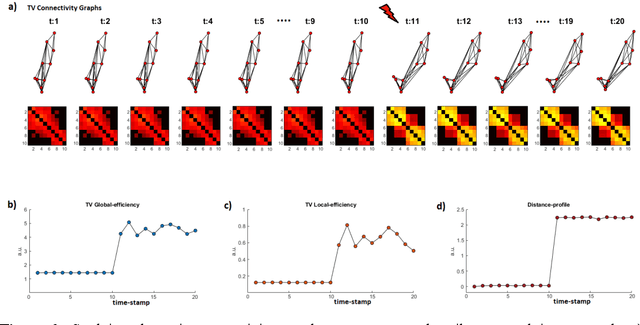

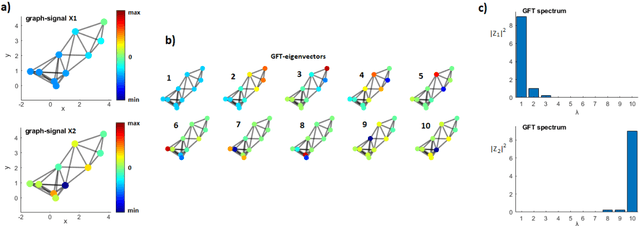

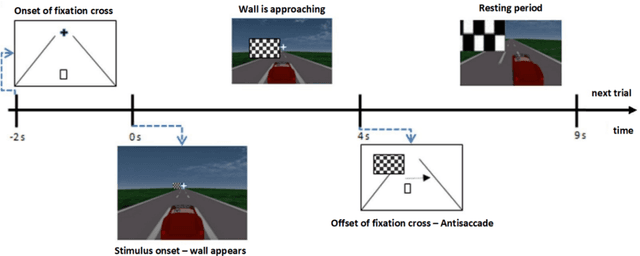

Abstract:This tutorial paper refers to the use of graph-theoretic concepts for analyzing brain signals. For didactic purposes it splits into two parts: theory and application. In the first part, we commence by introducing some basic elements from graph theory and stemming algorithmic tools, which can be employed for data-analytic purposes. Next, we describe how these concepts are adapted for handling evolving connectivity and gaining insights into network reorganization. Finally, the notion of signals residing on a given graph is introduced and elements from the emerging field of graph signal processing (GSP) are provided. The second part serves as a pragmatic demonstration of the tools and techniques described earlier. It is based on analyzing a multi-trial dataset containing single-trial responses from a visual ERP paradigm. The paper ends with a brief outline of the most recent trends in graph theory that are about to shape brain signal processing in the near future and a more general discussion on the relevance of graph-theoretic methodologies for analyzing continuous-mode neural recordings.

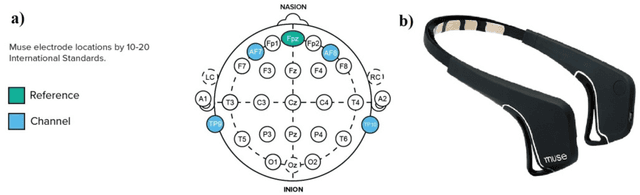

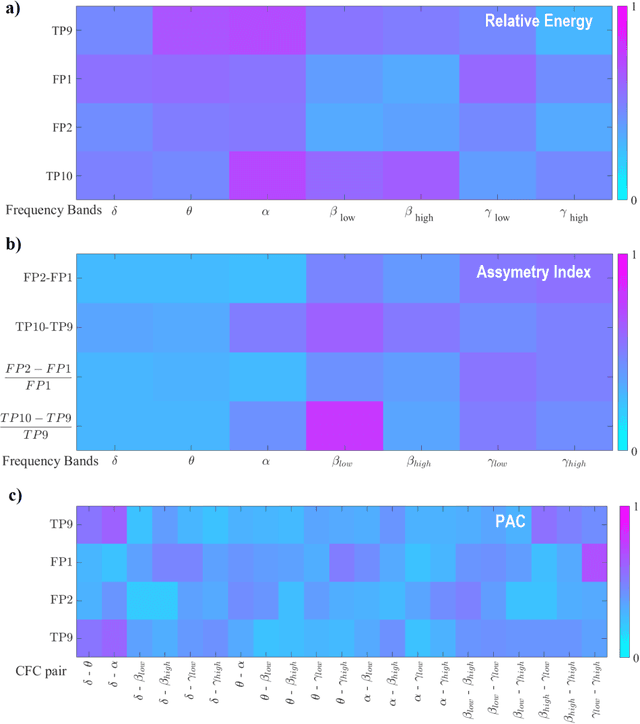

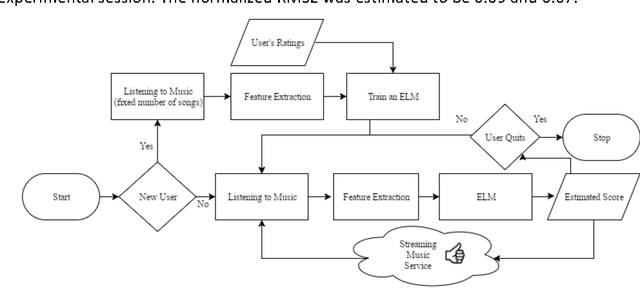

A Consumer BCI for Automated Music Evaluation Within a Popular On-Demand Music Streaming Service - Taking Listener's Brainwaves to Extremes

Sep 30, 2016

Abstract:We investigated the possibility of using a machine-learning scheme in conjunction with commercial wearable EEG-devices for translating listener's subjective experience of music into scores that can be used for the automated annotation of music in popular on-demand streaming services. Based on the established -neuroscientifically sound- concepts of brainwave frequency bands, activation asymmetry index and cross-frequency-coupling (CFC), we introduce a Brain Computer Interface (BCI) system that automatically assigns a rating score to the listened song. Our research operated in two distinct stages: i) a generic feature engineering stage, in which features from signal-analytics were ranked and selected based on their ability to associate music induced perturbations in brainwaves with listener's appraisal of music. ii) a personalization stage, during which the efficiency of extreme learning machines (ELMs) is exploited so as to translate the derived patterns into a listener's score. Encouraging experimental results, from a pragmatic use of the system, are presented.

* 12th IFIP WG 12.5 International Conference and Workshops, AIAI 2016, Thessaloniki, Greece, September 16-18, 2016, Proceedings

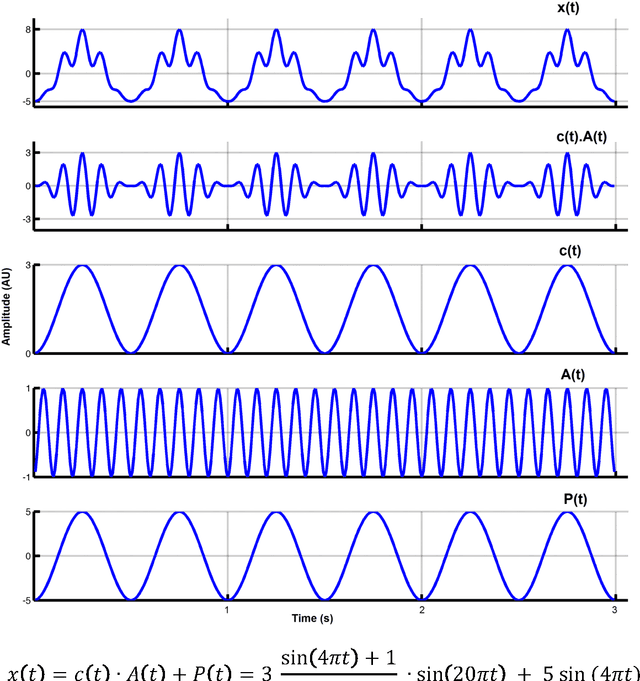

Towards the bio-personalization of music recommendation systems: A single-sensor EEG biomarker of subjective music preference

Sep 21, 2016

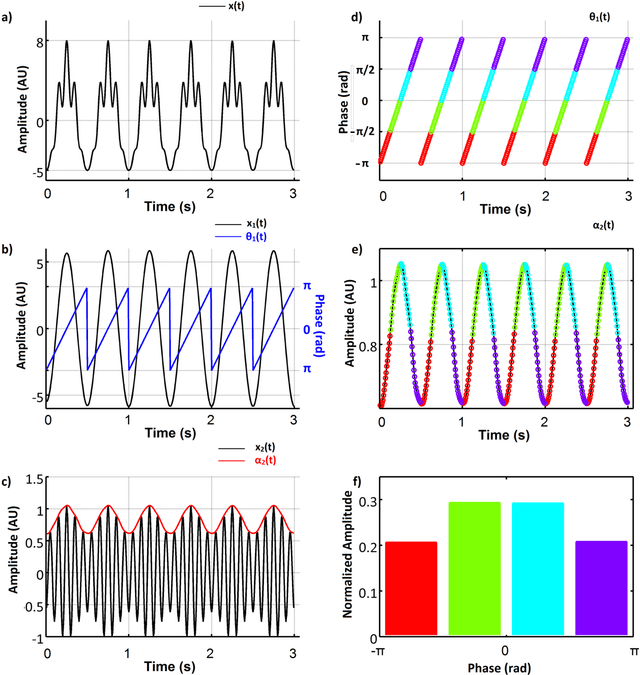

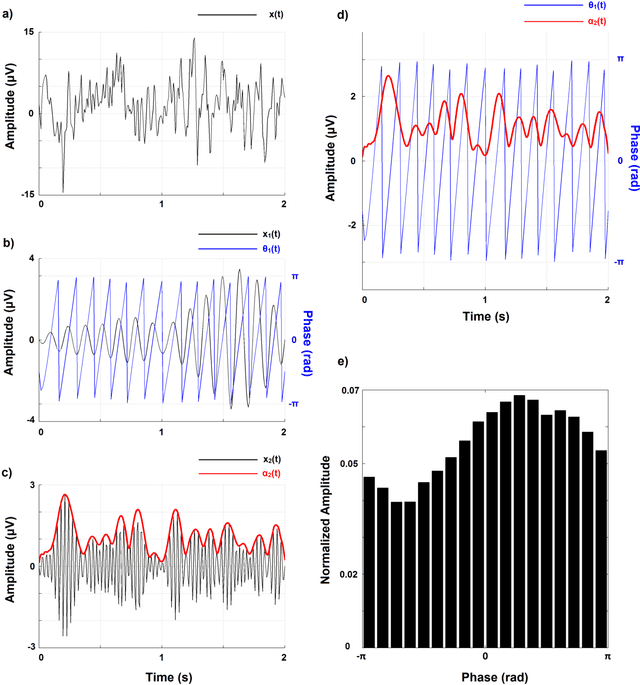

Abstract:Recent advances in biosensors technology and mobile electroencephalographic (EEG) interfaces have opened new application fields for cognitive monitoring. A computable biomarker for the assessment of spontaneous aesthetic brain responses during music listening is introduced here. It derives from well-established measures of cross-frequency coupling (CFC) and quantifies the music-induced alterations in the dynamic relationships between brain rhythms. During a stage of exploratory analysis, and using the signals from a suitably designed experiment, we established the biomarker, which acts on brain activations recorded over the left prefrontal cortex and focuses on the functional coupling between high-beta and low-gamma oscillations. Based on data from an additional experimental paradigm, we validated the introduced biomarker and showed its relevance for expressing the subjective aesthetic appreciation of a piece of music. Our approach resulted in an affordable tool that can promote human-machine interaction and, by serving as a personalized music annotation strategy, can be potentially integrated into modern flexible music recommendation systems. Keywords: Cross-frequency coupling; Human-computer interaction; Brain-computer interface
