Diane Brentari

Self-Supervised Video Transformers for Isolated Sign Language Recognition

Sep 02, 2023

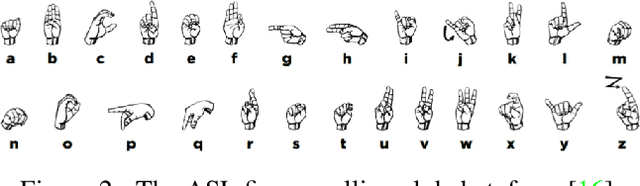

Abstract:This paper presents an in-depth analysis of various self-supervision methods for isolated sign language recognition (ISLR). We consider four recently introduced transformer-based approaches to self-supervised learning from videos, and four pre-training data regimes, and study all the combinations on the WLASL2000 dataset. Our findings reveal that MaskFeat achieves performance superior to pose-based and supervised video models, with a top-1 accuracy of 79.02% on gloss-based WLASL2000. Furthermore, we analyze these models' ability to produce representations of ASL signs using linear probing on diverse phonological features. This study underscores the value of architecture and pre-training task choices in ISLR. Specifically, our results on WLASL2000 highlight the power of masked reconstruction pre-training, and our linear probing results demonstrate the importance of hierarchical vision transformers for sign language representation.

Open-Domain Sign Language Translation Learned from Online Video

May 25, 2022

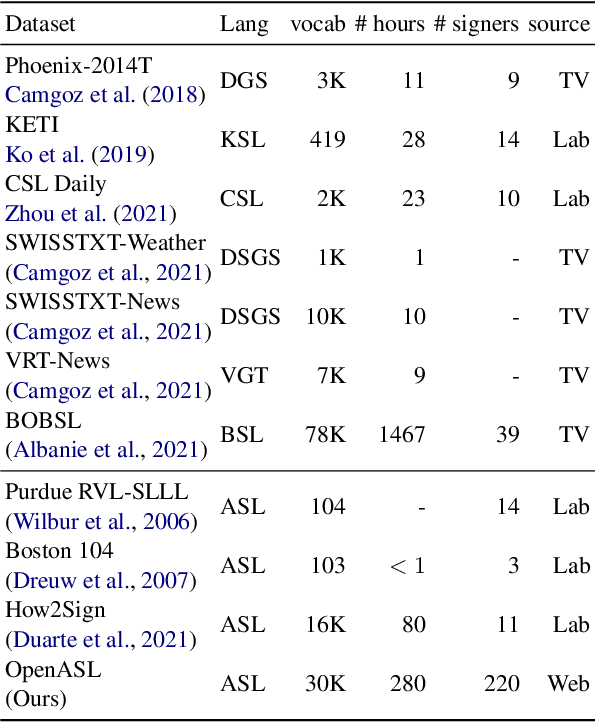

Abstract:Existing work on sign language translation--that is, translation from sign language videos into sentences in a written language--has focused mainly on (1) data collected in a controlled environment or (2) data in a specific domain, which limits the applicability to real-world settings. In this paper, we introduce OpenASL, a large-scale ASL-English dataset collected from online video sites (e.g., YouTube). OpenASL contains 288 hours of ASL videos in various domains (news, VLOGs, etc.) from over 200 signers and is the largest publicly available ASL translation dataset to date. To tackle the challenges of sign language translation in realistic settings and without glosses, we propose a set of techniques including sign search as a pretext task for pre-training and fusion of mouthing and handshape features. The proposed techniques produce consistent and large improvements in translation quality, over baseline models based on prior work. Our data, code and model will be publicly available at https://github.com/chevalierNoir/OpenASL

Searching for fingerspelled content in American Sign Language

Mar 24, 2022

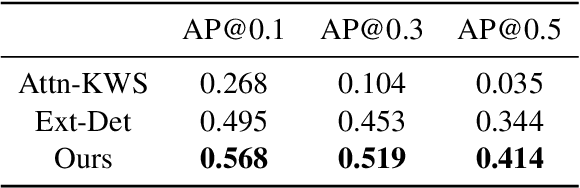

Abstract:Natural language processing for sign language video - including tasks like recognition, translation, and search - is crucial for making artificial intelligence technologies accessible to deaf individuals, and is gaining research interest in recent years. In this paper, we address the problem of searching for fingerspelled key-words or key phrases in raw sign language videos. This is an important task since significant content in sign language is often conveyed via fingerspelling, and to our knowledge the task has not been studied before. We propose an end-to-end model for this task, FSS-Net, that jointly detects fingerspelling and matches it to a text sequence. Our experiments, done on a large public dataset of ASL fingerspelling in the wild, show the importance of fingerspelling detection as a component of a search and retrieval model. Our model significantly outperforms baseline methods adapted from prior work on related tasks

Fingerspelling Detection in American Sign Language

Apr 03, 2021

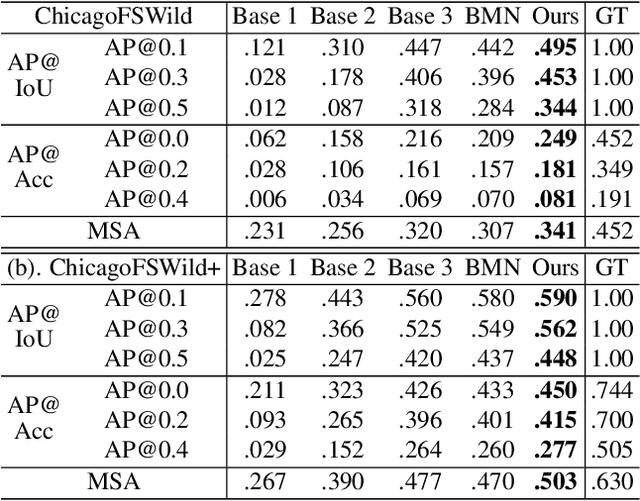

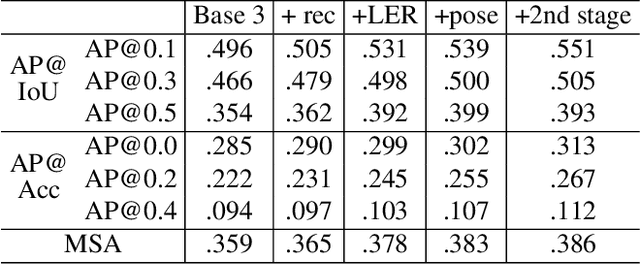

Abstract:Fingerspelling, in which words are signed letter by letter, is an important component of American Sign Language. Most previous work on automatic fingerspelling recognition has assumed that the boundaries of fingerspelling regions in signing videos are known beforehand. In this paper, we consider the task of fingerspelling detection in raw, untrimmed sign language videos. This is an important step towards building real-world fingerspelling recognition systems. We propose a benchmark and a suite of evaluation metrics, some of which reflect the effect of detection on the downstream fingerspelling recognition task. In addition, we propose a new model that learns to detect fingerspelling via multi-task training, incorporating pose estimation and fingerspelling recognition (transcription) along with detection, and compare this model to several alternatives. The model outperforms all alternative approaches across all metrics, establishing a state of the art on the benchmark.

Fingerspelling recognition in the wild with iterative visual attention

Aug 28, 2019

Abstract:Sign language recognition is a challenging gesture sequence recognition problem, characterized by quick and highly coarticulated motion. In this paper we focus on recognition of fingerspelling sequences in American Sign Language (ASL) videos collected in the wild, mainly from YouTube and Deaf social media. Most previous work on sign language recognition has focused on controlled settings where the data is recorded in a studio environment and the number of signers is limited. Our work aims to address the challenges of real-life data, reducing the need for detection or segmentation modules commonly used in this domain. We propose an end-to-end model based on an iterative attention mechanism, without explicit hand detection or segmentation. Our approach dynamically focuses on increasingly high-resolution regions of interest. It outperforms prior work by a large margin. We also introduce a newly collected data set of crowdsourced annotations of fingerspelling in the wild, and show that performance can be further improved with this additional data set.

American Sign Language fingerspelling recognition in the wild

Oct 26, 2018

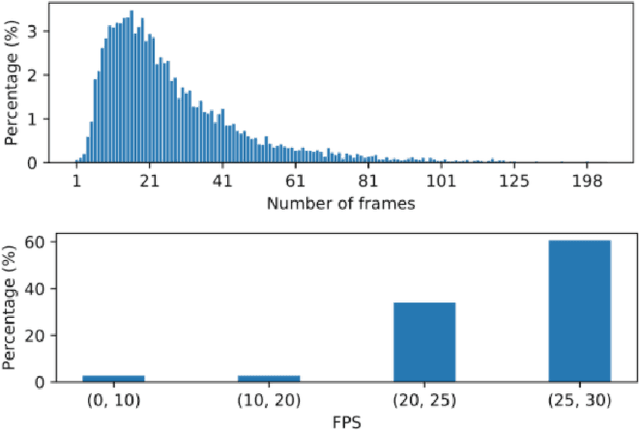

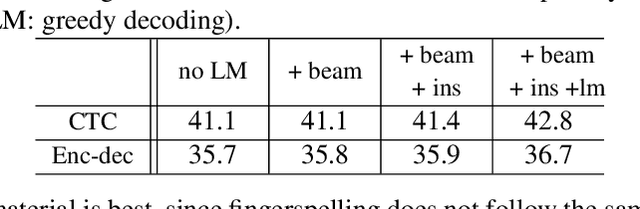

Abstract:We address the problem of American Sign Language fingerspelling recognition in the wild, using videos collected from websites. We introduce the largest data set available so far for the problem of fingerspelling recognition, and the first using naturally occurring video data. Using this data set, we present the first attempt to recognize fingerspelling sequences in this challenging setting. Unlike prior work, our video data is extremely challenging due to low frame rates and visual variability. To tackle the visual challenges, we train a special-purpose signing hand detector using a small subset of our data. Given the hand detector output, a sequence model decodes the hypothesized fingerspelled letter sequence. For the sequence model, we explore attention-based recurrent encoder-decoders and CTC-based approaches. As the first attempt at fingerspelling recognition in the wild, this work is intended to serve as a baseline for future work on sign language recognition in realistic conditions. We find that, as expected, letter error rates are much higher than in previous work on more controlled data, and we analyze the sources of error and effects of model variants.

Lexicon-Free Fingerspelling Recognition from Video: Data, Models, and Signer Adaptation

Sep 26, 2016

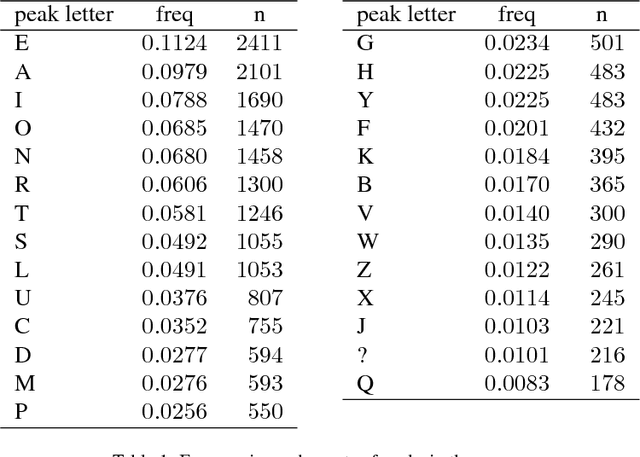

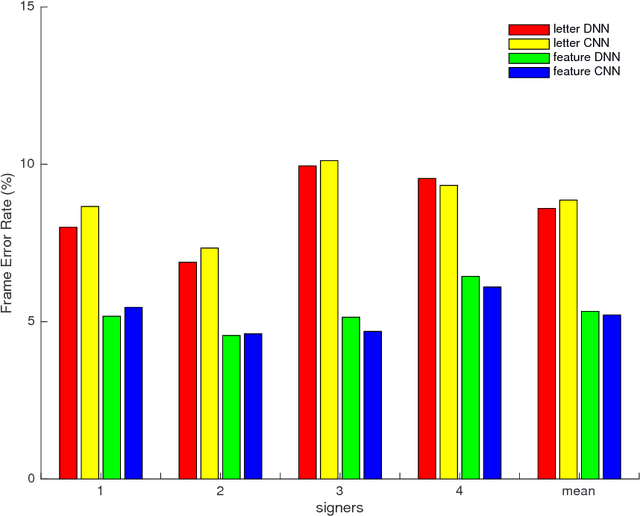

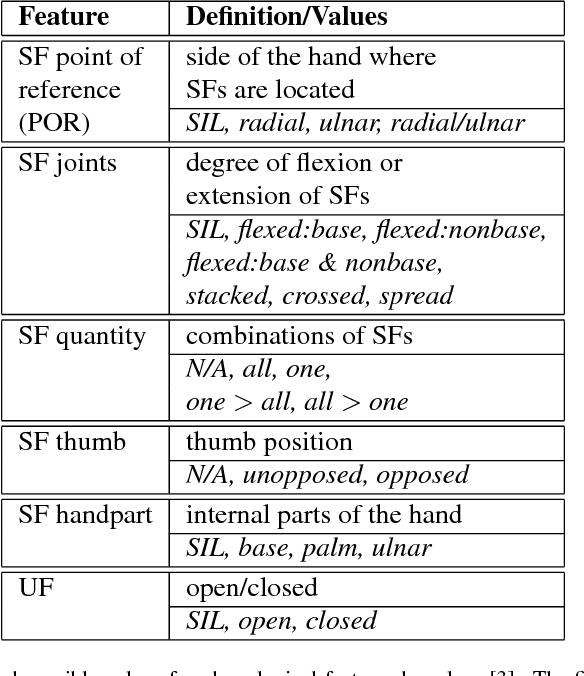

Abstract:We study the problem of recognizing video sequences of fingerspelled letters in American Sign Language (ASL). Fingerspelling comprises a significant but relatively understudied part of ASL. Recognizing fingerspelling is challenging for a number of reasons: It involves quick, small motions that are often highly coarticulated; it exhibits significant variation between signers; and there has been a dearth of continuous fingerspelling data collected. In this work we collect and annotate a new data set of continuous fingerspelling videos, compare several types of recognizers, and explore the problem of signer variation. Our best-performing models are segmental (semi-Markov) conditional random fields using deep neural network-based features. In the signer-dependent setting, our recognizers achieve up to about 92% letter accuracy. The multi-signer setting is much more challenging, but with neural network adaptation we achieve up to 83% letter accuracies in this setting.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge