Devleena Das

CE-MRS: Contrastive Explanations for Multi-Robot Systems

Oct 10, 2024

Abstract: As the complexity of multi-robot systems grows to incorporate a greater number of robots, more complex tasks, and longer time horizons, the solutions to such problems often become too complex to be fully intelligible to human users. In this work, we introduce an approach for generating natural language explanations that justify the validity of the system's solution to the user, or else aid the user in correcting any errors that led to a suboptimal system solution. Toward this goal, we first contribute a generalizable formalism of contrastive explanations for multi-robot systems, and then introduce a holistic approach to generating contrastive explanations for multi-robot scenarios that selectively incorporates data from multi-robot task allocation, scheduling, and motion planning to explain system behavior. Through user studies with human operators, we demonstrate that our integrated contrastive explanation approach leads to significant improvements in user ability to identify and solve system errors, which in turn significantly improves overall multi-robot team performance.

* Accepted to IEEE Robotics and Automation Letters

DEFT: Data Efficient Fine-Tuning for Large Language Models via Unsupervised Core-Set Selection

Oct 26, 2023

Abstract: Recent advances have led to the availability of many pre-trained language models (PLMs); however, a question remains: how much data is truly needed to fine-tune PLMs for downstream tasks? In this work, we introduce DEFT, a data-efficient fine-tuning framework that leverages unsupervised core-set selection to minimize the amount of data needed to fine-tune PLMs for downstream tasks. We demonstrate the efficacy of our DEFT framework in the context of text-editing LMs, and compare to the state-of-the-art text-editing model, CoEDIT. Our quantitative and qualitative results demonstrate that DEFT models are just as accurate as CoEDIT while being fine-tuned on ~70% less data.
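To make the idea of unsupervised core-set selection concrete, here is a minimal sketch using the generic k-center greedy heuristic. The abstract does not specify which selection algorithm DEFT uses, so this is a swapped-in textbook technique rather than the paper's method; the function name `k_center_greedy` and the toy `embeddings` are hypothetical and used only for illustration.

```python
import math
import random


def k_center_greedy(points, k, seed=0):
    """Pick k indices so that every point lies close to some selected point.

    Generic k-center greedy heuristic (an assumption for illustration,
    not necessarily the selection rule used by DEFT).
    """
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and len(points)")
    rng = random.Random(seed)
    first = rng.randrange(len(points))
    selected = [first]
    # Distance from each point to its nearest selected center so far.
    nearest = [math.dist(p, points[first]) for p in points]
    while len(selected) < k:
        # Add the point that is farthest from all current centers.
        idx = max(range(len(points)), key=nearest.__getitem__)
        selected.append(idx)
        nearest = [min(d, math.dist(p, points[idx]))
                   for d, p in zip(nearest, points)]
    return selected


# Toy 2-D "embeddings" forming three tight clusters (hypothetical data).
embeddings = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1),
              (5.0, 5.0), (5.1, 5.0),
              (10.0, 0.0), (10.0, 0.1)]
core_set = k_center_greedy(embeddings, k=3)
print(core_set)  # one index from each cluster
```

In a fine-tuning setting, the points would be sentence embeddings of unlabeled training examples, and only the selected subset would be used for fine-tuning.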

State2Explanation: Concept-Based Explanations to Benefit Agent Learning and User Understanding

Sep 21, 2023

Abstract: With more complex AI systems used by non-AI experts to complete daily tasks, there is an increasing effort to develop methods that produce explanations of AI decision making understandable by non-AI experts. Towards this effort, leveraging higher-level concepts to produce concept-based explanations has become a popular approach. Most concept-based explanations have been developed for classification techniques, and we posit that the few existing methods for sequential decision making are limited in scope. In this work, we first contribute a set of desiderata for defining "concepts" in sequential decision making settings. Additionally, inspired by the Protégé Effect, which states that explaining knowledge often reinforces one's self-learning, we explore the utility of concept-based explanations providing a dual benefit: to the RL agent by improving agent learning rate, and to the end-user by improving end-user understanding of agent decision making. To this end, we contribute a unified framework, State2Explanation (S2E), that involves learning a joint embedding model between state-action pairs and concept-based explanations, and leveraging this learned model to both (1) inform reward shaping during an agent's training, and (2) provide explanations to end-users at deployment for improved task performance. Our experimental validations, in Connect 4 and Lunar Lander, demonstrate the success of S2E in providing a dual benefit, successfully informing reward shaping and improving agent learning rate, as well as significantly improving end-user task performance at deployment time.

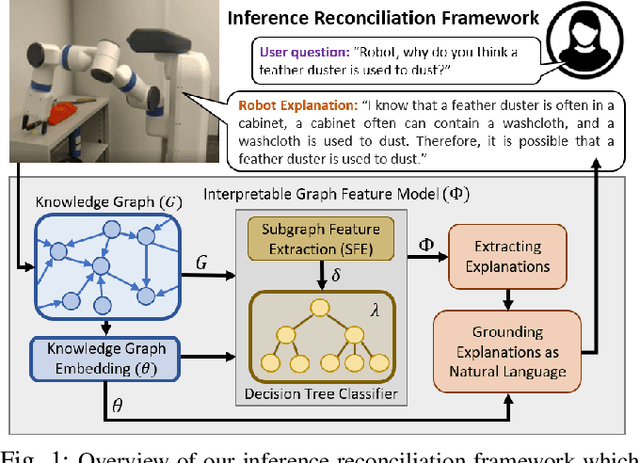

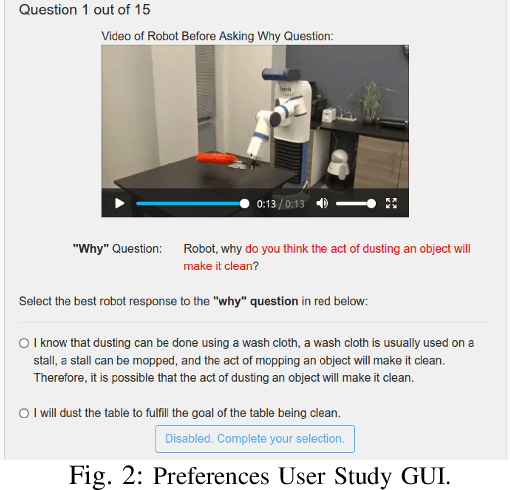

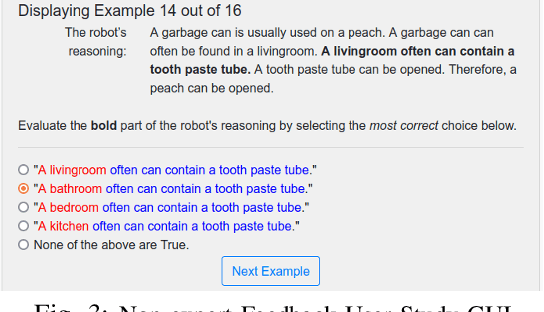

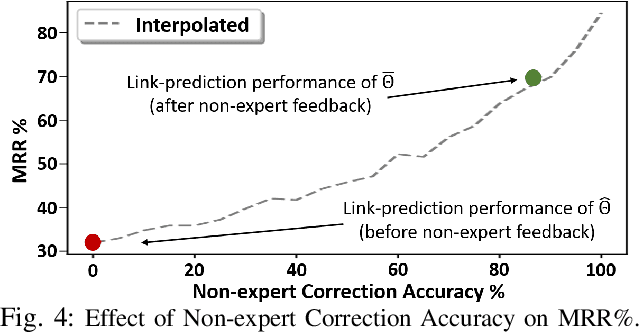

Explainable Knowledge Graph Embedding: Inference Reconciliation for Knowledge Inferences Supporting Robot Actions

May 04, 2022

Abstract: Learned knowledge graph representations supporting robots contain a wealth of domain knowledge that drives robot behavior. However, there does not exist an inference reconciliation framework that expresses how a knowledge graph representation affects a robot's sequential decision making. We use a pedagogical approach to explain the inferences of a learned, black-box knowledge graph representation, a knowledge graph embedding. Our interpretable model uses a decision tree classifier to locally approximate the predictions of the black-box model, and provides natural language explanations interpretable by non-experts. Results from our algorithmic evaluation affirm our model design choices, and the results of our user studies with non-experts support the need for the proposed inference reconciliation framework. Critically, results from our simulated robot evaluation indicate that our explanations enable non-experts to correct erratic robot behaviors due to nonsensical beliefs within the black-box.
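The local-approximation idea can be sketched as follows, under loud assumptions: `black_box` is a hypothetical stand-in for an opaque model (not a knowledge graph embedding), and the surrogate is a depth-one decision tree (a stump) written with the standard library for brevity, whereas the paper uses a decision tree classifier whose details the abstract does not give. The sketch samples inputs near a query, labels them with the black box, fits the stump, and turns the learned rule into a sentence.

```python
import random


def black_box(x):
    """Hypothetical opaque model: returns True or False for a 2-D input."""
    return x[0] + 0.2 * x[1] > 1.0


def fit_stump(samples, labels):
    """Find the single-feature threshold rule that best matches the labels."""
    best = None
    for f in range(len(samples[0])):
        for threshold in sorted({s[f] for s in samples}):
            for polarity in (True, False):
                # polarity=True predicts True when value > threshold,
                # polarity=False predicts True when value <= threshold.
                preds = [(s[f] > threshold) == polarity for s in samples]
                agreement = sum(p == y for p, y in zip(preds, labels)) / len(labels)
                if best is None or agreement > best[0]:
                    best = (agreement, f, threshold, polarity)
    return best


def explain_locally(query, n_samples=200, radius=0.5, seed=0):
    """Fit a stump to black-box outputs sampled around `query`."""
    rng = random.Random(seed)
    samples = [tuple(v + rng.uniform(-radius, radius) for v in query)
               for _ in range(n_samples)]
    labels = [black_box(s) for s in samples]
    fidelity, feature, threshold, polarity = fit_stump(samples, labels)
    relation = ">" if polarity else "<="
    text = (f"Near this input, the model predicts True mainly when "
            f"feature {feature} {relation} {threshold:.2f}.")
    return text, fidelity, feature


explanation, fidelity, feature = explain_locally((1.0, 0.0))
print(explanation)
print(f"Surrogate agrees with the black box on {fidelity:.0%} of local samples")
```

The agreement score (often called fidelity) indicates how far the simple rule can be trusted as a description of the black box near the query.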

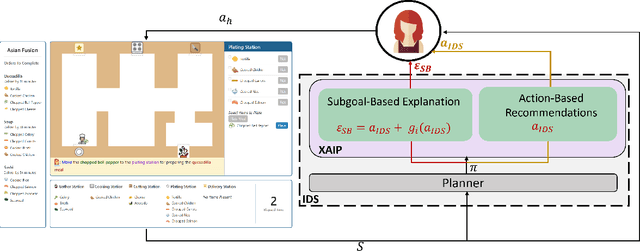

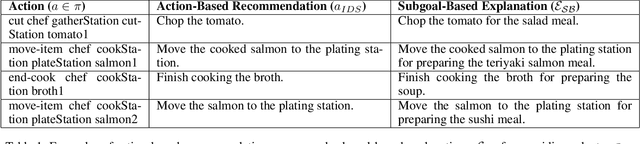

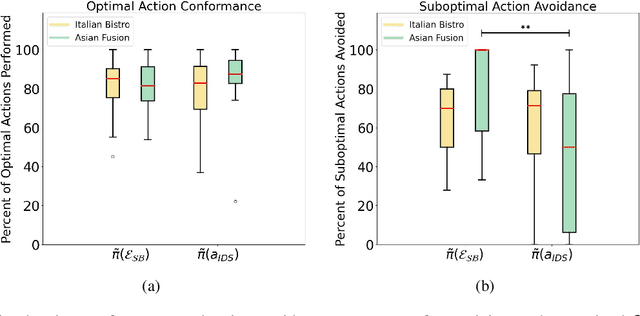

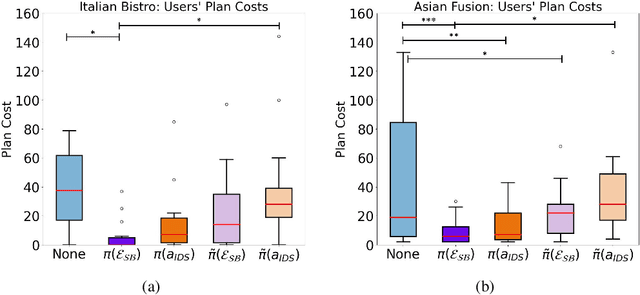

Subgoal-Based Explanations for Unreliable Intelligent Decision Support Systems

Jan 11, 2022

Abstract: Intelligent decision support (IDS) systems leverage artificial intelligence techniques to generate recommendations that guide human users through the decision-making phases of a task. However, a key challenge is that IDS systems are not perfect, and in complex real-world scenarios may produce incorrect output or fail to work altogether. The field of explainable AI planning (XAIP) has sought to develop techniques that make the decision making of sequential decision-making AI systems more explainable to end-users. Critically, prior work in applying XAIP techniques to IDS systems has assumed that the plan being proposed by the planner is always optimal, and therefore the action or plan being recommended as decision support to the user is always correct. In this work, we examine novice user interactions with a non-robust IDS system -- one that occasionally recommends the wrong action, and one that may become unavailable after users have become accustomed to its guidance. We introduce a novel explanation type, subgoal-based explanations, for planning-based IDS systems, that supplements traditional IDS output with information about the subgoal toward which the recommended action would contribute. We demonstrate that subgoal-based explanations lead to improved user task performance, improve user ability to distinguish optimal and suboptimal IDS recommendations, are preferred by users, and enable more robust user performance in the case of IDS failure.

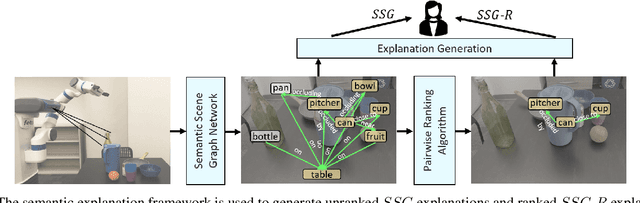

Semantic-Based Explainable AI: Leveraging Semantic Scene Graphs and Pairwise Ranking to Explain Robot Failures

Aug 08, 2021

Abstract: When interacting in unstructured human environments, occasional robot failures are inevitable. When such failures occur, everyday people, rather than trained technicians, will be the first to respond. Existing natural language explanations hand-annotate contextual information from an environment to help everyday people understand robot failures. However, this methodology lacks generalizability and scalability. In our work, we introduce a more generalizable semantic explanation framework. Our framework autonomously captures the semantic information in a scene to produce semantically descriptive explanations for everyday users. To generate failure-focused explanations that are semantically grounded, we leverage both semantic scene graphs, which extract spatial relations and object attributes from an environment, and pairwise ranking. Our results show that, compared with the existing state-of-the-art context-based explanations, these semantically descriptive explanations significantly improve everyday users' ability to both identify failures and provide assistance for recovery.

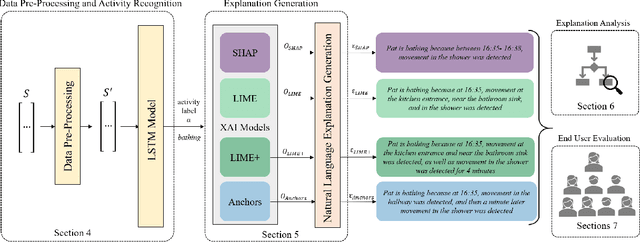

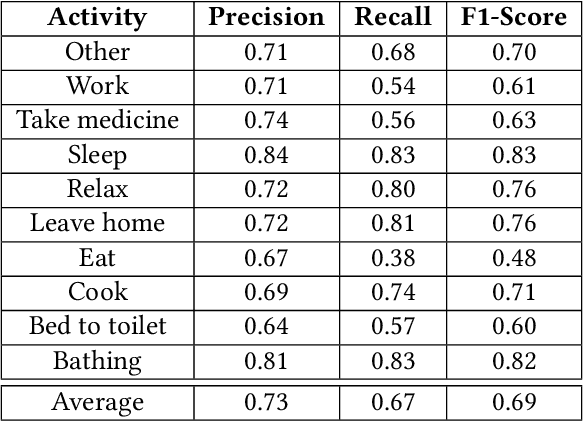

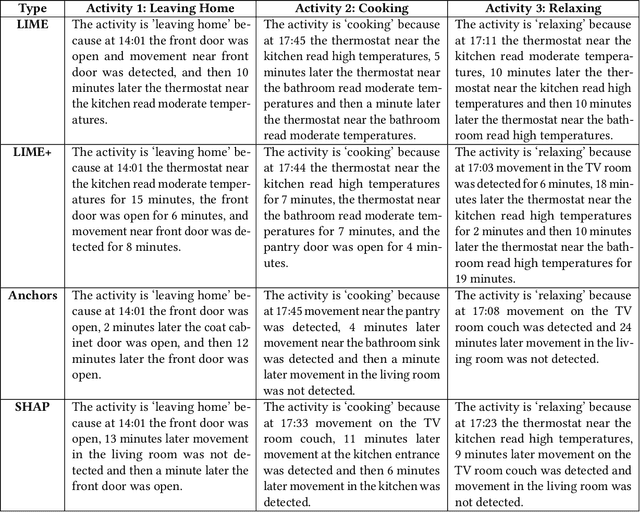

Explainable Activity Recognition for Smart Home Systems

May 20, 2021

Abstract: Smart home environments are designed to provide services that help improve the quality of life for the occupant via a variety of sensors and actuators installed throughout the space. Many automated actions taken by a smart home are governed by the output of an underlying activity recognition system. However, activity recognition systems may not be perfectly accurate and therefore inconsistencies in smart home operations can lead a user to wonder "why did the smart home do that?" In this work, we build on insights from Explainable Artificial Intelligence (XAI) techniques to contribute computational methods for explainable activity recognition. Specifically, we generate explanations for smart home activity recognition systems that explain what about an activity led to the given classification. To do so, we introduce four computational techniques for generating natural language explanations of smart home data and compare their effectiveness at generating meaningful explanations. Through a study with everyday users, we evaluate user preferences towards the four explanation types. Our results show that the leading approach, SHAP, has a 92% success rate in generating accurate explanations. Moreover, in 84% of sampled scenarios, users preferred natural language explanations over a simple activity label, underscoring the need for explainable activity recognition systems. Finally, we show that explanations generated by some XAI methods can lead users to lose confidence in the accuracy of the underlying activity recognition model, while others lead users to gain confidence. Taking all studied factors into consideration, we make a recommendation regarding which existing XAI method leads to the best performance in the domain of smart home automation, and discuss a range of topics for future work in this area.

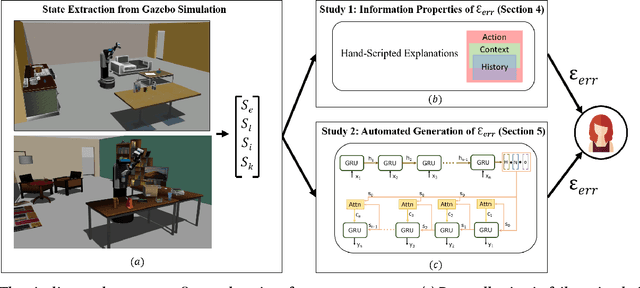

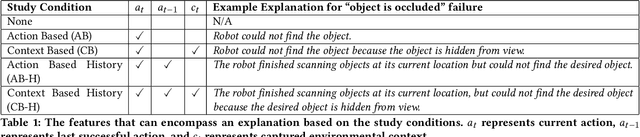

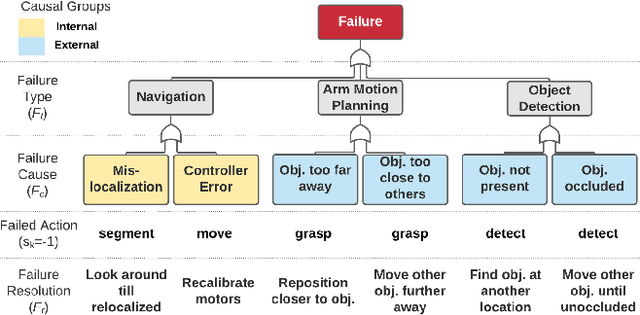

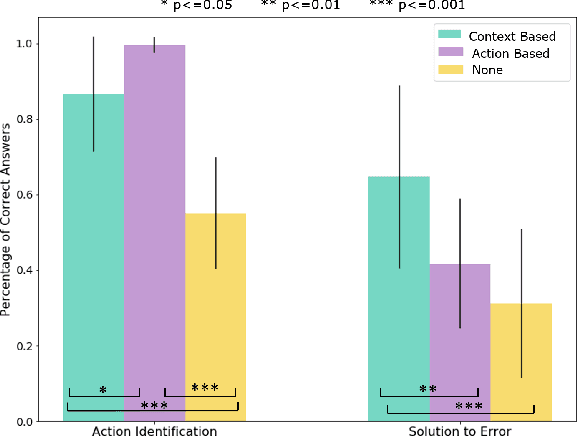

Explainable AI for Robot Failures: Generating Explanations that Improve User Assistance in Fault Recovery

Jan 05, 2021

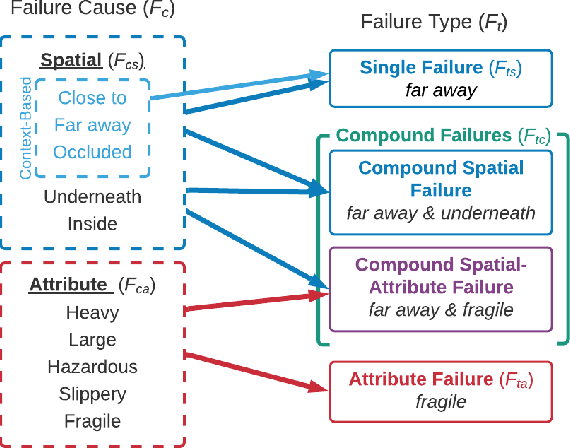

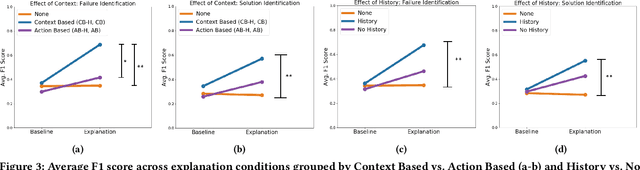

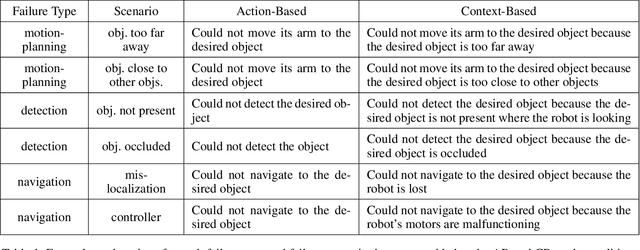

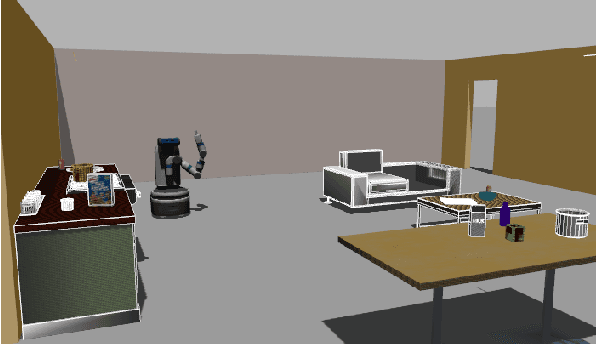

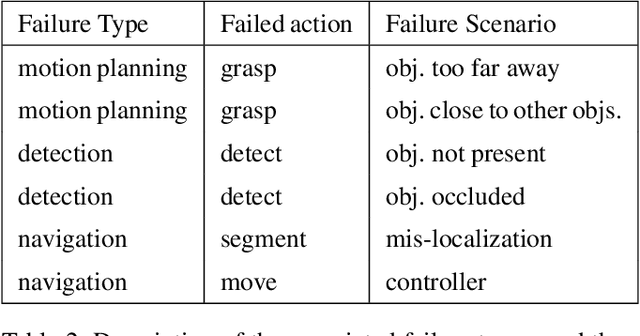

Abstract: With the growing capabilities of intelligent systems, the integration of robots in our everyday life is increasing. However, when interacting in such complex human environments, the occasional failure of robotic systems is inevitable. The field of explainable AI has sought to make complex decision-making systems more interpretable, but most existing techniques target domain experts. In contrast, in many failure cases, robots will require recovery assistance from non-expert users. In this work, we introduce a new type of explanation that explains the cause of an unexpected failure during an agent's plan execution to non-experts. In order for error explanations to be meaningful, we investigate what types of information within a set of hand-scripted explanations are most helpful to non-experts for failure and solution identification. Additionally, we investigate how such explanations can be autonomously generated, extending an existing encoder-decoder model, and generalized across environments. We investigate such questions in the context of a robot performing a pick-and-place manipulation task in the home environment. Our results show that explanations capturing the context of a failure and history of past actions are the most effective for failure and solution identification among non-experts. Furthermore, through a second user evaluation, we verify that our model-generated explanations can generalize to an unseen office environment, and are just as effective as the hand-scripted explanations.

Explainable AI for System Failures: Generating Explanations that Improve Human Assistance in Fault Recovery

Nov 19, 2020

Abstract: With the growing capabilities of intelligent systems, the integration of artificial intelligence (AI) and robots in everyday life is increasing. However, when interacting in such complex human environments, the failure of intelligent systems, such as robots, can be inevitable, requiring recovery assistance from users. In this work, we develop automated, natural language explanations for failures encountered during an AI agent's plan execution. These explanations are developed with a focus on helping non-expert users understand different points of failure to better provide recovery assistance. Specifically, we introduce a context-based information type for explanations that can help non-expert users both understand the underlying cause of a system failure and select proper failure recoveries. Additionally, we extend an existing sequence-to-sequence methodology to automatically generate our context-based explanations. By doing so, we are able to develop a model that can generalize context-based explanations over both different failure types and failure scenarios.

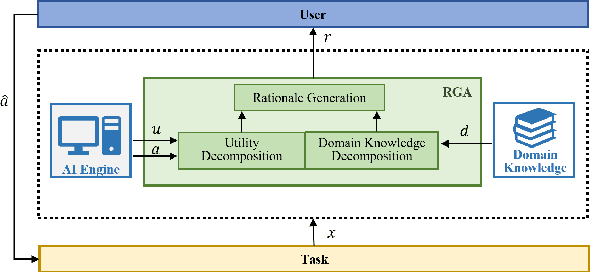

Leveraging Rationales to Improve Human Task Performance

Feb 11, 2020

Abstract: Machine learning (ML) systems across many application areas are increasingly demonstrating performance that is beyond that of humans. In response to the proliferation of such models, the field of Explainable AI (XAI) has sought to develop techniques that enhance the transparency and interpretability of machine learning methods. In this work, we consider a question not previously explored within the XAI and ML communities: Given a computational system whose performance exceeds that of its human user, can explainable AI capabilities be leveraged to improve the performance of the human? We study this question in the context of the game of chess, for which computational game engines that surpass the performance of the average player are widely available. We introduce the Rationale-Generating Algorithm, an automated technique for generating rationales for utility-based computational methods, which we evaluate with a multi-day user study against two baselines. The results show that our approach produces rationales that lead to statistically significant improvement in human task performance, demonstrating that rationales automatically generated from an AI's internal task model can be used not only to explain what the system is doing, but also to instruct the user and ultimately improve their task performance.
