Despoina Chatzakou

User Identity Linkage in Social Media Using Linguistic and Social Interaction Features

Aug 22, 2023

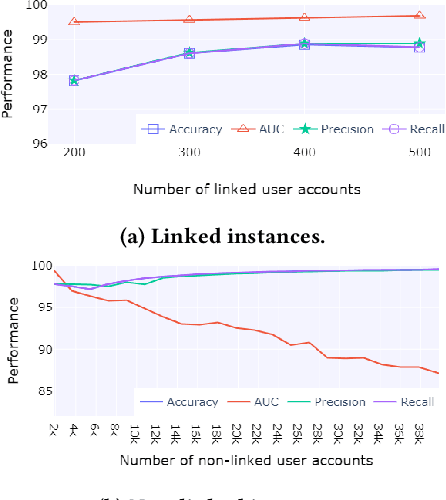

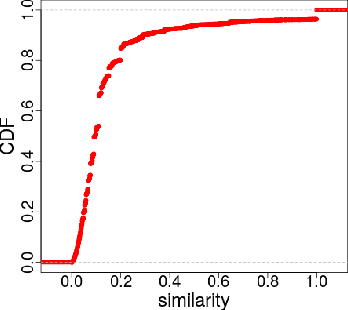

Abstract:Social media users often hold several accounts in their effort to multiply the spread of their thoughts, ideas, and viewpoints. In the particular case of objectionable content, users tend to create multiple accounts to bypass the combating measures enforced by social media platforms and thus retain their online identity even if some of their accounts are suspended. User identity linkage aims to reveal social media accounts likely to belong to the same natural person so as to prevent the spread of abusive/illegal activities. To this end, this work proposes a machine learning-based detection model, which uses multiple attributes of users' online activity in order to identify whether two or more virtual identities belong to the same real natural person. The models efficacy is demonstrated on two cases on abusive and terrorism-related Twitter content.

A Unified Deep Learning Architecture for Abuse Detection

Feb 21, 2018

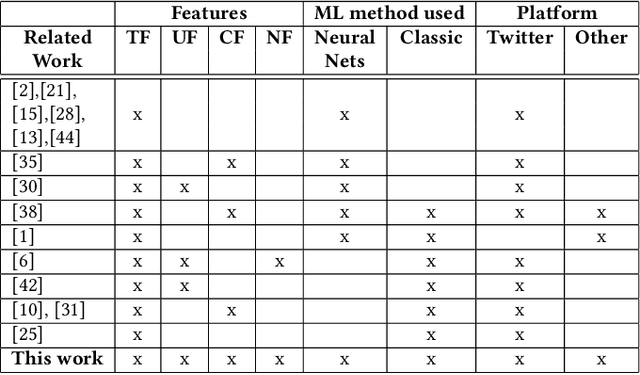

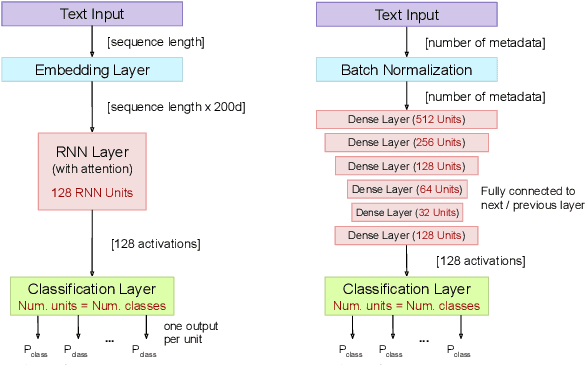

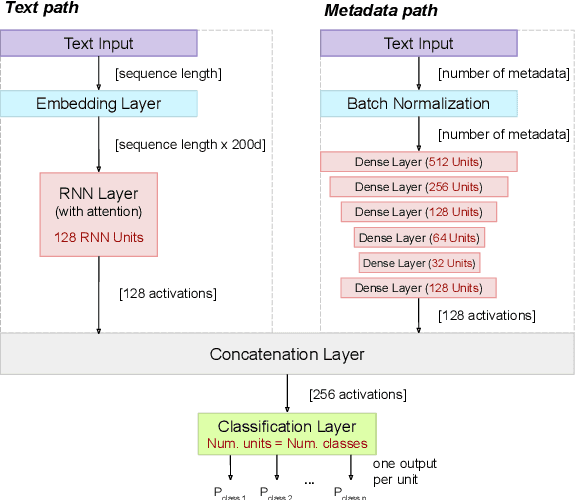

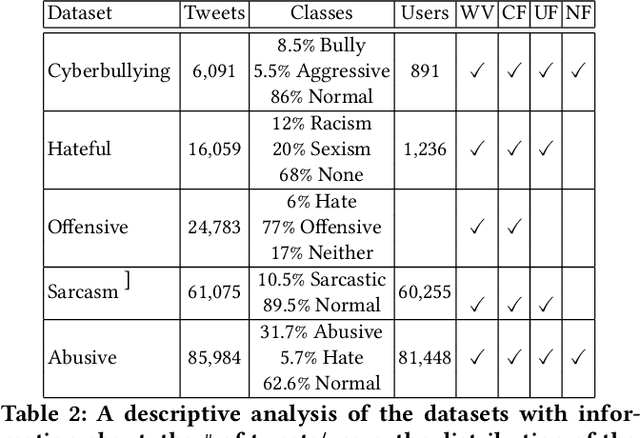

Abstract:Hate speech, offensive language, sexism, racism and other types of abusive behavior have become a common phenomenon in many online social media platforms. In recent years, such diverse abusive behaviors have been manifesting with increased frequency and levels of intensity. This is due to the openness and willingness of popular media platforms, such as Twitter and Facebook, to host content of sensitive or controversial topics. However, these platforms have not adequately addressed the problem of online abusive behavior, and their responsiveness to the effective detection and blocking of such inappropriate behavior remains limited. In the present paper, we study this complex problem by following a more holistic approach, which considers the various aspects of abusive behavior. To make the approach tangible, we focus on Twitter data and analyze user and textual properties from different angles of abusive posting behavior. We propose a deep learning architecture, which utilizes a wide variety of available metadata, and combines it with automatically-extracted hidden patterns within the text of the tweets, to detect multiple abusive behavioral norms which are highly inter-related. We apply this unified architecture in a seamless, transparent fashion to detect different types of abusive behavior (hate speech, sexism vs. racism, bullying, sarcasm, etc.) without the need for any tuning of the model architecture for each task. We test the proposed approach with multiple datasets addressing different and multiple abusive behaviors on Twitter. Our results demonstrate that it largely outperforms the state-of-art methods (between 21 and 45\% improvement in AUC, depending on the dataset).

Measuring #GamerGate: A Tale of Hate, Sexism, and Bullying

Feb 24, 2017

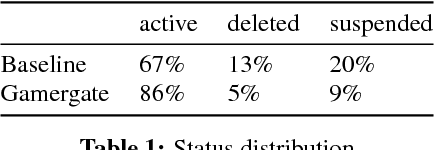

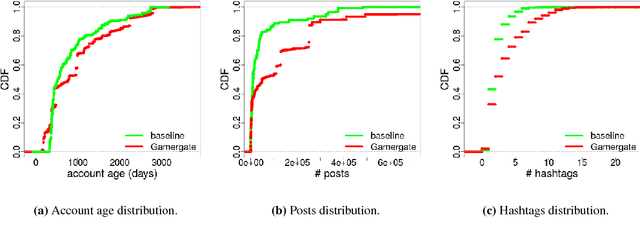

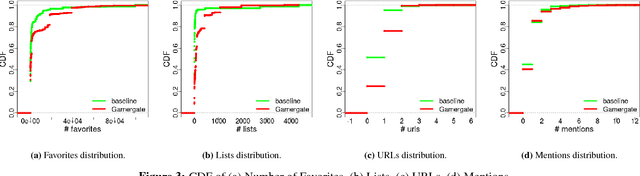

Abstract:Over the past few years, online aggression and abusive behaviors have occurred in many different forms and on a variety of platforms. In extreme cases, these incidents have evolved into hate, discrimination, and bullying, and even materialized into real-world threats and attacks against individuals or groups. In this paper, we study the Gamergate controversy. Started in August 2014 in the online gaming world, it quickly spread across various social networking platforms, ultimately leading to many incidents of cyberbullying and cyberaggression. We focus on Twitter, presenting a measurement study of a dataset of 340k unique users and 1.6M tweets to study the properties of these users, the content they post, and how they differ from random Twitter users. We find that users involved in this "Twitter war" tend to have more friends and followers, are generally more engaged and post tweets with negative sentiment, less joy, and more hate than random users. We also perform preliminary measurements on how the Twitter suspension mechanism deals with such abusive behaviors. While we focus on Gamergate, our methodology to collect and analyze tweets related to aggressive and bullying activities is of independent interest.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge