Chong Zhong

Real-Time Cascade Mitigation in Power Systems Using Influence Graph Improved by Reinforcement Learning

Jun 10, 2025

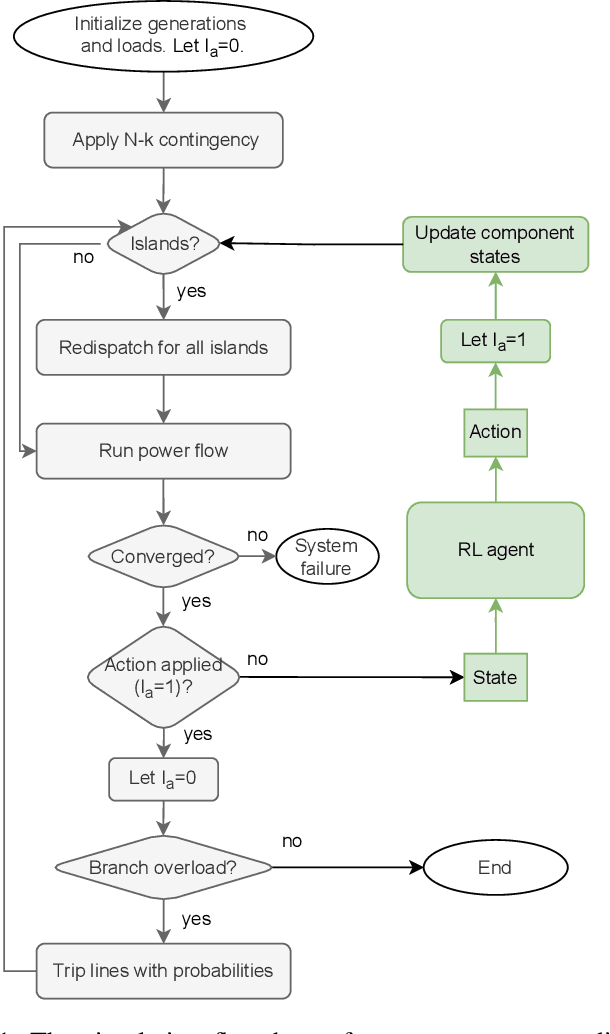

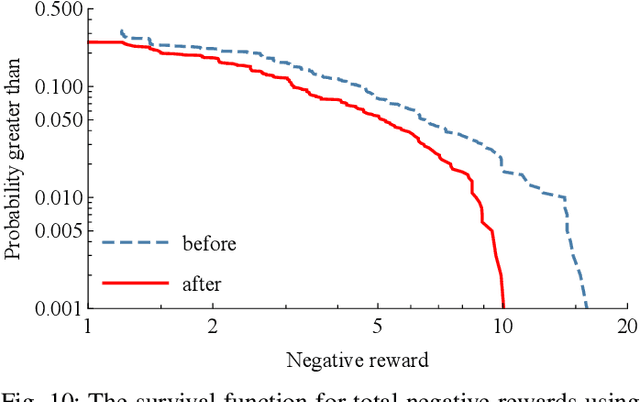

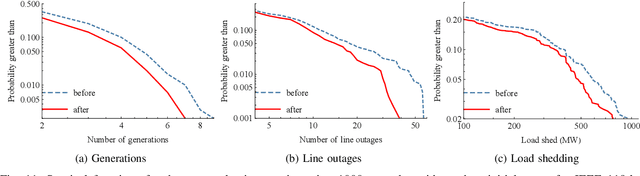

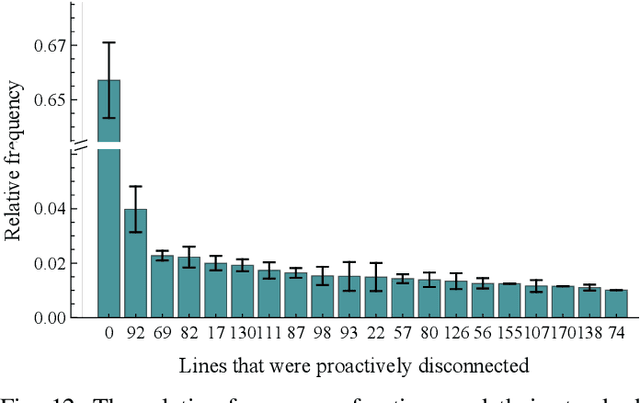

Abstract: Despite high reliability, modern power systems with growing renewable penetration face an increasing risk of cascading outages. Real-time cascade mitigation requires fast, complex operational decisions under uncertainty. In this work, we extend the influence graph into a Markov decision process (MDP) model for real-time mitigation of cascading outages in power transmission systems, accounting for uncertainties in generation, load, and initial contingencies. The MDP includes a do-nothing action to allow for conservative decision-making and is solved using reinforcement learning. We present a policy gradient learning algorithm initialized with a policy corresponding to the unmitigated case and designed to handle invalid actions. The proposed learning method converges faster than the conventional algorithm. Through careful reward design, we learn a policy that takes conservative actions without deteriorating system conditions. The model is validated on the IEEE 14-bus and IEEE 118-bus systems. The results show that proactive line disconnections can effectively reduce cascading risk, and certain lines consistently emerge as critical in mitigating cascade propagation.
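The abstract does not spell out how invalid actions are handled or how the policy is initialized. As a rough illustration only, one common approach is to mask invalid actions out of a softmax policy and to bias the initial logits toward the do-nothing action. The sketch below assumes that approach; the function name `masked_policy`, the action layout (index 0 as do-nothing), and the logit value are hypothetical and not taken from the paper.

```python
import numpy as np

def masked_policy(logits, valid_mask):
    """Softmax over valid actions only; invalid actions get probability 0.

    This is a generic action-masking sketch, not the paper's algorithm.
    """
    logits = np.asarray(logits, dtype=float)
    valid_mask = np.asarray(valid_mask, dtype=bool)
    if not valid_mask.any():
        raise ValueError("at least one action must be valid")
    # Invalid actions are sent to -inf so that exp() maps them to exactly 0.
    masked = np.where(valid_mask, logits, -np.inf)
    # Subtract the largest valid logit for numerical stability.
    shifted = masked - masked[valid_mask].max()
    weights = np.exp(shifted)
    return weights / weights.sum()

# Hypothetical setup: action 0 is "do nothing", actions 1..3 disconnect a line.
# A large initial logit on action 0 mimics starting from the unmitigated case.
initial_logits = np.array([5.0, 0.0, 0.0, 0.0])
# Assume line 2 is already out of service, so disconnecting it is invalid.
valid = np.array([True, True, False, True])

probs = masked_policy(initial_logits, valid)
rng = np.random.default_rng(0)
action = rng.choice(len(probs), p=probs)
```

With this kind of initialization the agent almost always starts by doing nothing, and a policy gradient update would then shift probability toward line disconnections only where the reward supports it.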

CeViT: Copula-Enhanced Vision Transformer in multi-task learning and bi-group image covariates with an application to myopia screening

Jan 11, 2025

Abstract: We aim to assist image-based myopia screening by resolving two longstanding problems, "how to integrate the information of ocular images of a pair of eyes" and "how to incorporate the inherent dependence among high-myopia status and axial length for both eyes." The classification-regression task is modeled as a novel 4-dimensional multi-response regression, where discrete responses are allowed, that relates to two dependent 3rd-order tensors (3D ultrawide-field fundus images). We present a Vision Transformer-based bi-channel architecture, named CeViT, where the common features of a pair of eyes are extracted via a shared Transformer encoder, and the interocular asymmetries are modeled through separate multilayer perceptron heads. Statistically, we model the conditional dependence among a mixture of discrete and continuous responses, given the image covariates, by a so-called copula loss. We establish a new theoretical framework for fine-tuning CeViT based on latent representations, making the black-box fine-tuning procedure interpretable and guaranteeing higher relative efficiency of fine-tuning weight estimation in the asymptotic setting. We apply CeViT to an annotated ultrawide-field fundus image dataset collected by Shanghai Eye & ENT Hospital, demonstrating that CeViT improves on the baseline model both in the accuracy of classifying high myopia and in the prediction of axial length (AL) for both eyes.

OU-CoViT: Copula-Enhanced Bi-Channel Multi-Task Vision Transformers with Dual Adaptation for OU-UWF Images

Aug 18, 2024

Abstract: Myopia screening using cutting-edge ultra-widefield (UWF) fundus imaging and joint modeling of multiple discrete and continuous clinical scores presents a promising new paradigm for multi-task problems in ophthalmology. The bi-channel framework that arises from the ophthalmic phenomenon of "interocular asymmetries" between both eyes (OU) calls for new ways of employing state-of-the-art (SOTA) transformer-based models. However, applying copula models to multiple mixed discrete-continuous labels in deep learning (DL) is challenging. Moreover, applying advanced large transformer-based models to small medical datasets is challenging due to overfitting and computational resource constraints. To resolve these challenges, we propose OU-CoViT: a novel Copula-Enhanced Bi-Channel Multi-Task Vision Transformer with Dual Adaptation for OU-UWF images, which can i) incorporate conditional correlation information across multiple discrete and continuous labels within a deep learning framework (by deriving the closed form of a novel Copula Loss); ii) take OU inputs subject to both high correlation and interocular asymmetries using a bi-channel model with dual adaptation; and iii) enable the adaptation of large vision transformer (ViT) models to small medical datasets. Solid experiments demonstrate that OU-CoViT significantly improves prediction performance compared to single-channel baseline models with empirical loss. Furthermore, the novel architecture of OU-CoViT allows our dual adaptation and Copula Loss to be generalized and extended to various ViT variants and large DL models on small medical datasets. Our approach opens up new possibilities for joint modeling of heterogeneous multi-channel input and mixed discrete-continuous clinical scores in medical practice and has the potential to advance AI-assisted clinical decision-making in various medical domains beyond ophthalmology.

OUCopula: Bi-Channel Multi-Label Copula-Enhanced Adapter-Based CNN for Myopia Screening Based on OU-UWF Images

Mar 18, 2024

Abstract: Myopia screening using cutting-edge ultra-widefield (UWF) fundus imaging is potentially significant for ophthalmic outcomes. Current multidisciplinary research between ophthalmology and deep learning (DL) concentrates primarily on disease classification and diagnosis using single-eye images, largely ignoring joint modeling and prediction for Oculus Uterque (OU, both eyes). Inspired by the complex relationships between OU and the high correlation between the (continuous) outcome labels (Spherical Equivalent and Axial Length), we propose a framework of copula-enhanced adapter convolutional neural network (CNN) learning with OU UWF fundus images (OUCopula) for joint prediction of multiple clinical scores. We design a novel bi-channel multi-label CNN that can (1) take bi-channel image inputs subject to both high correlation and heterogeneity (by sharing the same backbone network and employing adapters to parameterize the channel-wise discrepancy), and (2) incorporate correlation information between continuous output labels (using a copula). Solid experiments show that OUCopula achieves satisfactory performance in myopia score prediction compared to backbone models. Moreover, OUCopula can far exceed the performance of models constructed for single-eye inputs. Importantly, our study also hints at the potential extension of the bi-channel model to a multi-channel paradigm and the generalizability of OUCopula across various backbone CNNs.

MTSA-SNN: A Multi-modal Time Series Analysis Model Based on Spiking Neural Network

Feb 08, 2024

Abstract: Time series analysis and modelling constitute a crucial research area. Traditional artificial neural networks struggle with complex, non-stationary time series data due to high computational complexity, limited ability to capture temporal information, and difficulty in handling event-driven data. To address these challenges, we propose a Multi-modal Time Series Analysis Model Based on Spiking Neural Network (MTSA-SNN). The Pulse Encoder unifies the encoding of temporal images and sequential information in a common pulse-based representation. The Joint Learning Module employs a joint learning function and weight allocation mechanism to fuse complementary information from multi-modal pulse signals. Additionally, we incorporate wavelet transform operations to enhance the model's ability to analyze and evaluate temporal information. Experimental results demonstrate that our method achieves superior performance on three complex time-series tasks. This work provides an effective event-driven approach to overcome the challenges associated with analyzing intricate temporal information. The source code is available at https://github.com/Chenngzz/MTSA-SNN

* 6 pages, 6 figures, published to International Conference on Computer Supported Cooperative Work in Design

CeCNN: Copula-enhanced convolutional neural networks in joint prediction of refraction error and axial length based on ultra-widefield fundus images

Nov 07, 2023

Abstract: Ultra-widefield (UWF) fundus images are replacing traditional fundus images in screening, detection, prediction, and treatment of complications related to myopia because their much broader visual range is advantageous for highly myopic eyes. Spherical equivalent (SE) is extensively used as the main myopia outcome measure, and axial length (AL) has drawn increasing interest as an important ocular component for assessing myopia. Cutting-edge studies show that SE and AL are strongly correlated. Using the joint information from SE and AL is potentially better than using either separately. In the deep learning community, though there is research on multiple-response tasks with a 3D image biomarker, dependence among responses is only sporadically taken into consideration. Inspired by the spirit that information extracted from the data by statistical methods can improve the prediction accuracy of deep learning models, we formulate a class of multivariate response regression models with a higher-order tensor biomarker, for the bivariate tasks of regression-classification and regression-regression. Specifically, we propose a copula-enhanced convolutional neural network (CeCNN) framework that incorporates the dependence between responses through a Gaussian copula (with parameters estimated from a warm-up CNN) and uses the induced copula-likelihood loss with the backbone CNNs. We establish the statistical framework and algorithms for the aforementioned two bivariate tasks. We show that the CeCNN has better prediction accuracy after adding the dependency information to the backbone models. The modeling and the proposed CeCNN algorithm are applicable beyond the UWF scenario and can be effective with other backbones beyond ResNet and LeNet.
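To make the idea of a copula-likelihood loss concrete, here is a minimal sketch for the regression-regression case only. It assumes Gaussian marginals for both responses, in which case a Gaussian copula with correlation `rho` yields a bivariate normal likelihood. The function names, the use of residual correlation as the estimate of `rho`, and the toy numbers are illustrative assumptions, not the paper's implementation.

```python
import numpy as np

def estimate_rho(residuals):
    """Correlation of the two residual columns, e.g. from a warm-up model."""
    residuals = np.asarray(residuals, dtype=float)
    return float(np.corrcoef(residuals[:, 0], residuals[:, 1])[0, 1])

def gaussian_copula_nll(y, mu, sigma, rho):
    """Mean negative log-likelihood of a bivariate normal with correlation rho.

    y, mu : arrays of shape (n, 2) holding targets and predictions.
    sigma : length-2 array of marginal standard deviations (assumed fixed).
    rho   : copula correlation, strictly between -1 and 1.
    """
    y = np.asarray(y, dtype=float)
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    z = (y - mu) / sigma  # standardized residuals
    one_minus = 1.0 - rho ** 2
    quad = (z[:, 0] ** 2 - 2.0 * rho * z[:, 0] * z[:, 1] + z[:, 1] ** 2) / (2.0 * one_minus)
    log_norm = np.log(2.0 * np.pi) + np.log(sigma).sum() + 0.5 * np.log(one_minus)
    return float(np.mean(quad + log_norm))

# Toy targets (think of them as SE and AL) and toy predictions.
y = np.array([[1.0, 1.2], [-0.8, -1.0], [0.3, 0.1], [-0.5, -0.4]])
mu = np.zeros_like(y)
sigma = np.array([1.0, 1.0])

rho_hat = estimate_rho(y - mu)
loss_independent = gaussian_copula_nll(y, mu, sigma, 0.0)
loss_copula = gaussian_copula_nll(y, mu, sigma, rho_hat)
```

Setting `rho = 0` recovers the sum of two independent Gaussian losses, so the copula term can be read as a correction that rewards predictions whose joint errors match the estimated dependence between the responses.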
