Chong Yang

Cause-Aware Empathetic Response Generation via Chain-of-Thought Fine-Tuning

Aug 21, 2024

Abstract: Empathetic response generation endows agents with the capability to comprehend dialogue contexts and react to expressed emotions. Previous works predominantly focus on leveraging the speaker's emotional labels, but ignore the importance of emotion cause reasoning in empathetic response generation, which hinders the model's capacity for further affective understanding and cognitive inference. In this paper, we propose a cause-aware empathetic generation approach by integrating emotions and causes through a well-designed Chain-of-Thought (CoT) prompt on Large Language Models (LLMs). Our approach can greatly promote LLMs' performance of empathy by instruction tuning and enhancing the role awareness of an empathetic listener in the prompt. Additionally, we propose to incorporate cause-oriented external knowledge from COMET into the prompt, which improves the diversity of generation and alleviates conflicts between internal and external knowledge at the same time. Experimental results on the benchmark dataset demonstrate that our approach on LLaMA-7b achieves state-of-the-art performance in both automatic and human evaluations.

S+PAGE: A Speaker and Position-Aware Graph Neural Network Model for Emotion Recognition in Conversation

Dec 23, 2021

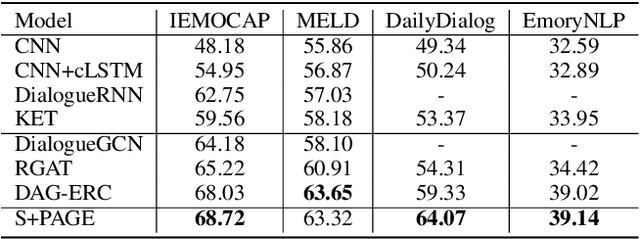

Abstract: Emotion recognition in conversation (ERC) has attracted much attention in recent years for its necessity in widespread applications. Existing ERC methods mostly model the self and inter-speaker context separately, posing a major issue for lacking enough interaction between them. In this paper, we propose a novel Speaker and Position-Aware Graph neural network model for ERC (S+PAGE), which contains three stages to combine the benefits of both Transformer and relational graph convolution network (R-GCN) for better contextual modeling. Firstly, a two-stream conversational Transformer is presented to extract the coarse self and inter-speaker contextual features for each utterance. Then, a speaker and position-aware conversation graph is constructed, and we propose an enhanced R-GCN model, called PAG, to refine the coarse features guided by a relative positional encoding. Finally, both of the features from the former two stages are input into a conditional random field layer to model the emotion transfer.
