Cheng Song

KP-PINNs: Kernel Packet Accelerated Physics Informed Neural Networks

Jun 11, 2025Abstract:Differential equations are involved in modeling many engineering problems. Many efforts have been devoted to solving differential equations. Due to the flexibility of neural networks, Physics Informed Neural Networks (PINNs) have recently been proposed to solve complex differential equations and have demonstrated superior performance in many applications. While the L2 loss function is usually a default choice in PINNs, it has been shown that the corresponding numerical solution is incorrect and unstable for some complex equations. In this work, we propose a new PINNs framework named Kernel Packet accelerated PINNs (KP-PINNs), which gives a new expression of the loss function using the reproducing kernel Hilbert space (RKHS) norm and uses the Kernel Packet (KP) method to accelerate the computation. Theoretical results show that KP-PINNs can be stable across various differential equations. Numerical experiments illustrate that KP-PINNs can solve differential equations effectively and efficiently. This framework provides a promising direction for improving the stability and accuracy of PINNs-based solvers in scientific computing.

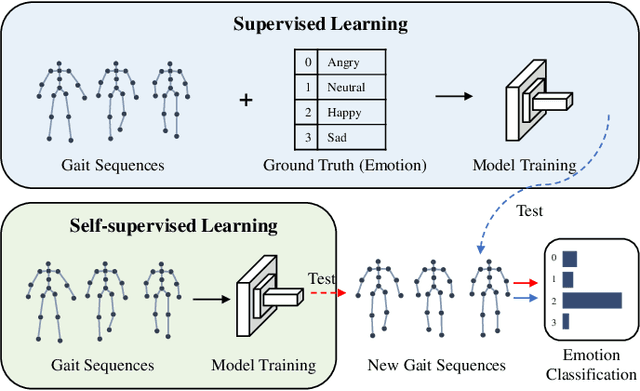

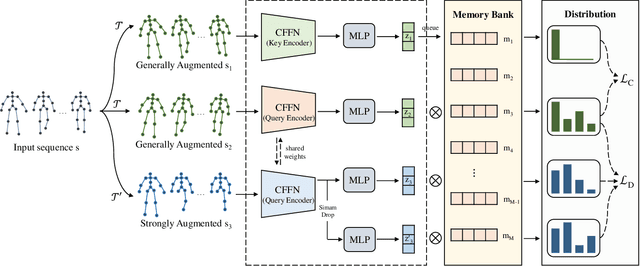

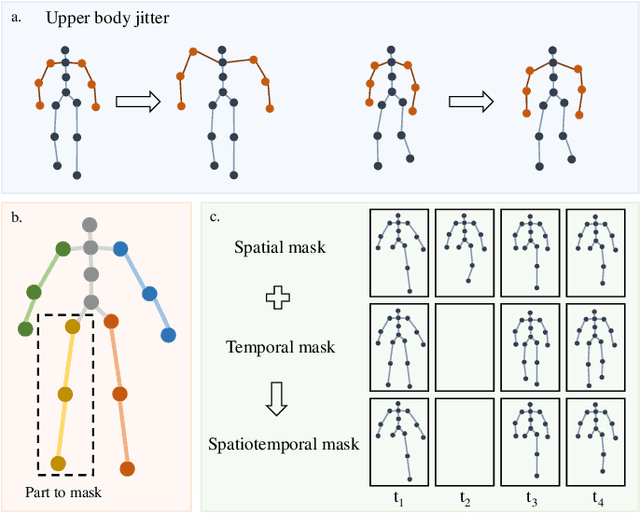

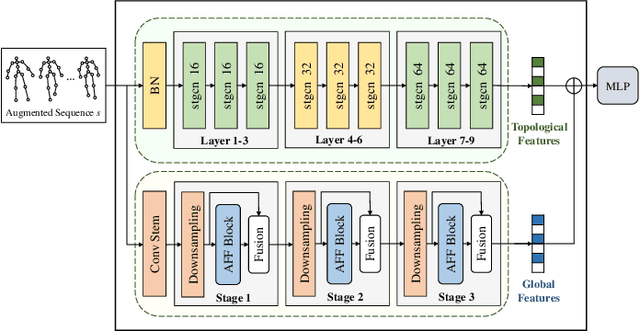

Self-supervised Gait-based Emotion Representation Learning from Selective Strongly Augmented Skeleton Sequences

May 08, 2024

Abstract:Emotion recognition is an important part of affective computing. Extracting emotional cues from human gaits yields benefits such as natural interaction, a nonintrusive nature, and remote detection. Recently, the introduction of self-supervised learning techniques offers a practical solution to the issues arising from the scarcity of labeled data in the field of gait-based emotion recognition. However, due to the limited diversity of gaits and the incompleteness of feature representations for skeletons, the existing contrastive learning methods are usually inefficient for the acquisition of gait emotions. In this paper, we propose a contrastive learning framework utilizing selective strong augmentation (SSA) for self-supervised gait-based emotion representation, which aims to derive effective representations from limited labeled gait data. First, we propose an SSA method for the gait emotion recognition task, which includes upper body jitter and random spatiotemporal mask. The goal of SSA is to generate more diverse and targeted positive samples and prompt the model to learn more distinctive and robust feature representations. Then, we design a complementary feature fusion network (CFFN) that facilitates the integration of cross-domain information to acquire topological structural and global adaptive features. Finally, we implement the distributional divergence minimization loss to supervise the representation learning of the generally and strongly augmented queries. Our approach is validated on the Emotion-Gait (E-Gait) and Emilya datasets and outperforms the state-of-the-art methods under different evaluation protocols.

CGS-Mask: Making Time Series Predictions Intuitive for All

Dec 20, 2023

Abstract:Artificial intelligence (AI) has immense potential in time series prediction, but most explainable tools have limited capabilities in providing a systematic understanding of important features over time. These tools typically rely on evaluating a single time point, overlook the time ordering of inputs, and neglect the time-sensitive nature of time series applications. These factors make it difficult for users, particularly those without domain knowledge, to comprehend AI model decisions and obtain meaningful explanations. We propose CGS-Mask, a post-hoc and model-agnostic cellular genetic strip mask-based saliency approach to address these challenges. CGS-Mask uses consecutive time steps as a cohesive entity to evaluate the impact of features on the final prediction, providing binary and sustained feature importance scores over time. Our algorithm optimizes the mask population iteratively to obtain the optimal mask in a reasonable time. We evaluated CGS-Mask on synthetic and real-world datasets, and it outperformed state-of-the-art methods in elucidating the importance of features over time. According to our pilot user study via a questionnaire survey, CGS-Mask is the most effective approach in presenting easily understandable time series prediction results, enabling users to comprehend the decision-making process of AI models with ease.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge