Changbo Zhu

Sobolev Gradient Ascent for Optimal Transport: Barycenter Optimization and Convergence Analysis

May 19, 2025Abstract:This paper introduces a new constraint-free concave dual formulation for the Wasserstein barycenter. Tailoring the vanilla dual gradient ascent algorithm to the Sobolev geometry, we derive a scalable Sobolev gradient ascent (SGA) algorithm to compute the barycenter for input distributions supported on a regular grid. Despite the algorithmic simplicity, we provide a global convergence analysis that achieves the same rate as the classical subgradient descent methods for minimizing nonsmooth convex functions in the Euclidean space. A central feature of our SGA algorithm is that the computationally expensive $c$-concavity projection operator enforced on the Kantorovich dual potentials is unnecessary to guarantee convergence, leading to significant algorithmic and theoretical simplifications over all existing primal and dual methods for computing the exact barycenter. Our numerical experiments demonstrate the superior empirical performance of SGA over the existing optimal transport barycenter solvers.

Optimal Transport Barycenter via Nonconvex-Concave Minimax Optimization

Jan 24, 2025

Abstract:The optimal transport barycenter (a.k.a. Wasserstein barycenter) is a fundamental notion of averaging that extends from the Euclidean space to the Wasserstein space of probability distributions. Computation of the unregularized barycenter for discretized probability distributions on point clouds is a challenging task when the domain dimension $d > 1$. Most practical algorithms for approximating the barycenter problem are based on entropic regularization. In this paper, we introduce a nearly linear time $O(m \log{m})$ and linear space complexity $O(m)$ primal-dual algorithm, the Wasserstein-Descent $\dot{\mathbb{H}}^1$-Ascent (WDHA) algorithm, for computing the exact barycenter when the input probability density functions are discretized on an $m$-point grid. The key success of the WDHA algorithm hinges on alternating between two different yet closely related Wasserstein and Sobolev optimization geometries for the primal barycenter and dual Kantorovich potential subproblems. Under reasonable assumptions, we establish the convergence rate and iteration complexity of WDHA to its stationary point when the step size is appropriately chosen. Superior computational efficacy, scalability, and accuracy over the existing Sinkhorn-type algorithms are demonstrated on high-resolution (e.g., $1024 \times 1024$ images) 2D synthetic and real data.

Generalized Singular Value Thresholding

May 27, 2018

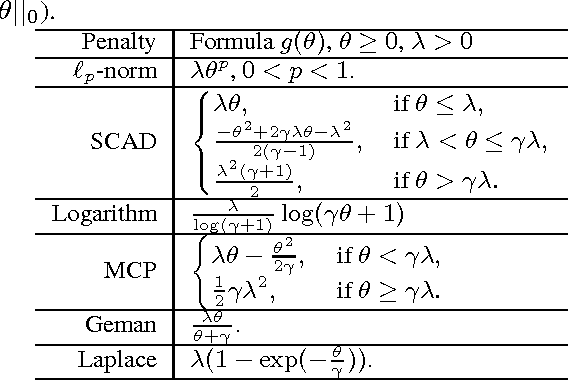

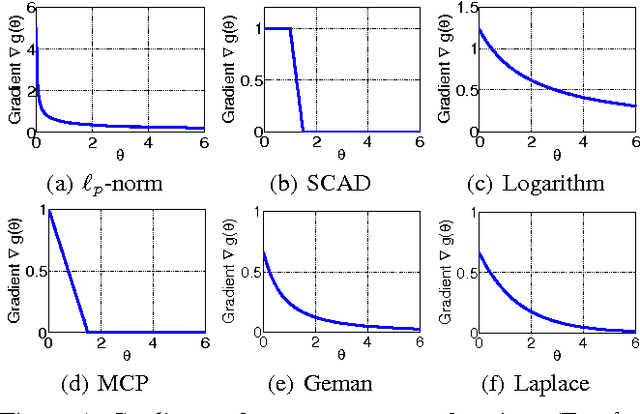

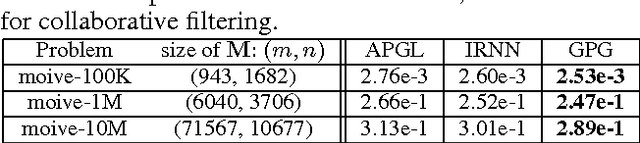

Abstract:This work studies the Generalized Singular Value Thresholding (GSVT) operator ${\text{Prox}}_{g}^{{\sigma}}(\cdot)$, \begin{equation*} {\text{Prox}}_{g}^{{\sigma}}(B)=\arg\min\limits_{X}\sum_{i=1}^{m}g(\sigma_{i}(X)) + \frac{1}{2}||X-B||_{F}^{2}, \end{equation*} associated with a nonconvex function $g$ defined on the singular values of $X$. We prove that GSVT can be obtained by performing the proximal operator of $g$ (denoted as $\text{Prox}_g(\cdot)$) on the singular values since $\text{Prox}_g(\cdot)$ is monotone when $g$ is lower bounded. If the nonconvex $g$ satisfies some conditions (many popular nonconvex surrogate functions, e.g., $\ell_p$-norm, $0<p<1$, of $\ell_0$-norm are special cases), a general solver to find $\text{Prox}_g(b)$ is proposed for any $b\geq0$. GSVT greatly generalizes the known Singular Value Thresholding (SVT) which is a basic subroutine in many convex low rank minimization methods. We are able to solve the nonconvex low rank minimization problem by using GSVT in place of SVT.

Online Gradient Descent in Function Space

Dec 08, 2015Abstract:In many problems in machine learning and operations research, we need to optimize a function whose input is a random variable or a probability density function, i.e. to solve optimization problems in an infinite dimensional space. On the other hand, online learning has the advantage of dealing with streaming examples, and better model a changing environ- ment. In this paper, we extend the celebrated online gradient descent algorithm to Hilbert spaces (function spaces), and analyze the convergence guarantee of the algorithm. Finally, we demonstrate that our algorithms can be useful in several important problems.

Online Crowdsourcing

Dec 08, 2015

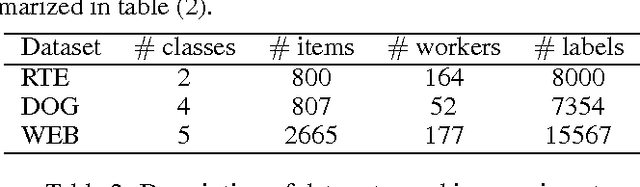

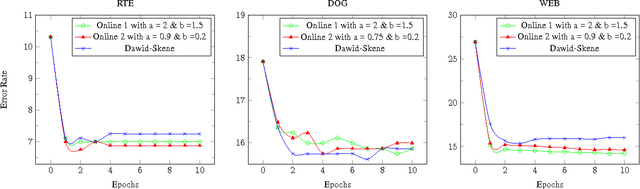

Abstract:With the success of modern internet based platform, such as Amazon Mechanical Turk, it is now normal to collect a large number of hand labeled samples from non-experts. The Dawid- Skene algorithm, which is based on Expectation- Maximization update, has been widely used for inferring the true labels from noisy crowdsourced labels. However, Dawid-Skene scheme requires all the data to perform each EM iteration, and can be infeasible for streaming data or large scale data. In this paper, we provide an online version of Dawid- Skene algorithm that only requires one data frame for each iteration. Further, we prove that under mild conditions, the online Dawid-Skene scheme with projection converges to a stationary point of the marginal log-likelihood of the observed data. Our experiments demonstrate that the online Dawid- Skene scheme achieves state of the art performance comparing with other methods based on the Dawid- Skene scheme.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge