Caroline C. W. Klaver

for the AREDS2 Deep Learning Research Group

retinalysis-vascx: An explainable software toolbox for the extraction of retinal vascular biomarkers

Feb 09, 2026Abstract:The automatic extraction of retinal vascular biomarkers from color fundus images (CFI) is essential for large-scale studies of the retinal vasculature. We present VascX, an open-source Python toolbox designed for the automated extraction of biomarkers from artery and vein segmentations. The VascX workflow processes vessel segmentation masks into skeletons to build undirected and directed vessel graphs, which are then used to resolve segments into continuous vessels. This architecture enables the calculation of a comprehensive suite of biomarkers, including vascular density, bifurcation angles, central retinal equivalents (CREs), tortuosity, and temporal angles, alongside image quality metrics. A distinguishing feature of VascX is its region awareness; by utilizing the fovea, optic disc, and CFI boundaries as anatomical landmarks, the tool ensures spatially standardized measurements and identifies when specific biomarkers are not computable. Spatially localized biomarkers are calculated over grids relative to these landmarks, facilitating precise clinical analysis. Released via GitHub and PyPI, VascX provides an explainable and modifiable framework that supports reproducible vascular research through integrated visualizations. By enabling the rapid extraction of established biomarkers and the development of new ones, VascX advances the field of oculomics, offering a robust, computationally efficient solution for scalable deployment in large-scale clinical and epidemiological databases.

Uncertainty-Aware Multiple-Instance Learning for Reliable Classification: Application to Optical Coherence Tomography

Feb 06, 2023

Abstract:Deep learning classification models for medical image analysis often perform well on data from scanners that were used during training. However, when these models are applied to data from different vendors, their performance tends to drop substantially. Artifacts that only occur within scans from specific scanners are major causes of this poor generalizability. We aimed to improve the reliability of deep learning classification models by proposing Uncertainty-Based Instance eXclusion (UBIX). This technique, based on multiple-instance learning, reduces the effect of corrupted instances on the bag-classification by seamlessly integrating out-of-distribution (OOD) instance detection during inference. Although UBIX is generally applicable to different medical images and diverse classification tasks, we focused on staging of age-related macular degeneration in optical coherence tomography. After being trained using images from one vendor, UBIX showed a reliable behavior, with a slight decrease in performance (a decrease of the quadratic weighted kappa ($\kappa_w$) from 0.861 to 0.708), when applied to images from different vendors containing artifacts; while a state-of-the-art 3D neural network suffered from a significant detriment of performance ($\kappa_w$ from 0.852 to 0.084) on the same test set. We showed that instances with unseen artifacts can be identified with OOD detection and their contribution to the bag-level predictions can be reduced, improving reliability without the need for retraining on new data. This potentially increases the applicability of artificial intelligence models to data from other scanners than the ones for which they were developed.

Multi-modal, multi-task, multi-attention deep learning detection of reticular pseudodrusen: towards automated and accessible classification of age-related macular degeneration

Nov 11, 2020

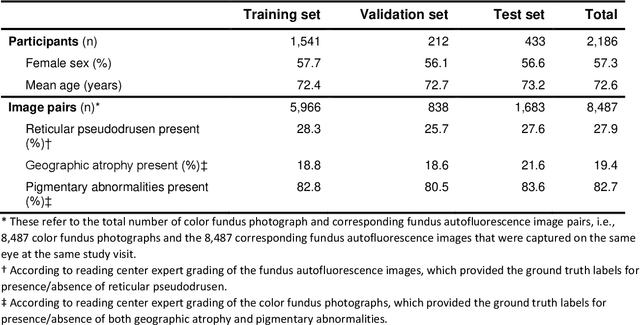

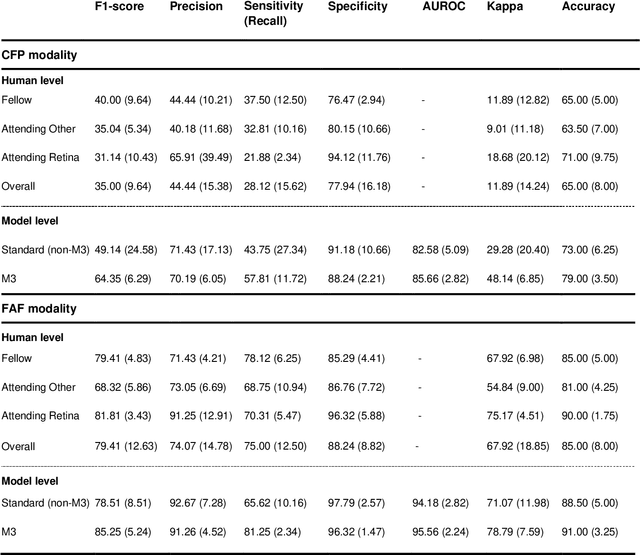

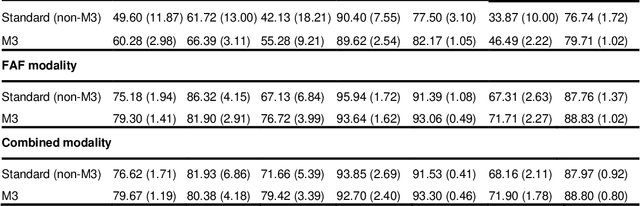

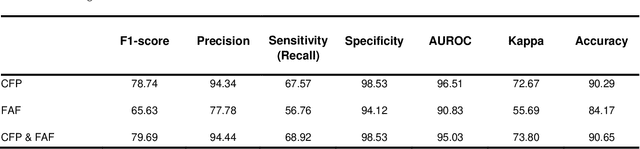

Abstract:Objective Reticular pseudodrusen (RPD), a key feature of age-related macular degeneration (AMD), are poorly detected by human experts on standard color fundus photography (CFP) and typically require advanced imaging modalities such as fundus autofluorescence (FAF). The objective was to develop and evaluate the performance of a novel 'M3' deep learning framework on RPD detection. Materials and Methods A deep learning framework M3 was developed to detect RPD presence accurately using CFP alone, FAF alone, or both, employing >8000 CFP-FAF image pairs obtained prospectively (Age-Related Eye Disease Study 2). The M3 framework includes multi-modal (detection from single or multiple image modalities), multi-task (training different tasks simultaneously to improve generalizability), and multi-attention (improving ensembled feature representation) operation. Performance on RPD detection was compared with state-of-the-art deep learning models and 13 ophthalmologists; performance on detection of two other AMD features (geographic atrophy and pigmentary abnormalities) was also evaluated. Results For RPD detection, M3 achieved area under receiver operating characteristic (AUROC) 0.832, 0.931, and 0.933 for CFP alone, FAF alone, and both, respectively. M3 performance on CFP was very substantially superior to human retinal specialists (median F1-score 0.644 versus 0.350). External validation (on Rotterdam Study, Netherlands) demonstrated high accuracy on CFP alone (AUROC 0.965). The M3 framework also accurately detected geographic atrophy and pigmentary abnormalities (AUROC 0.909 and 0.912, respectively), demonstrating its generalizability. Conclusion This study demonstrates the successful development, robust evaluation, and external validation of a novel deep learning framework that enables accessible, accurate, and automated AMD diagnosis and prognosis.

A deep learning model for segmentation of geographic atrophy to study its long-term natural history

Aug 15, 2019

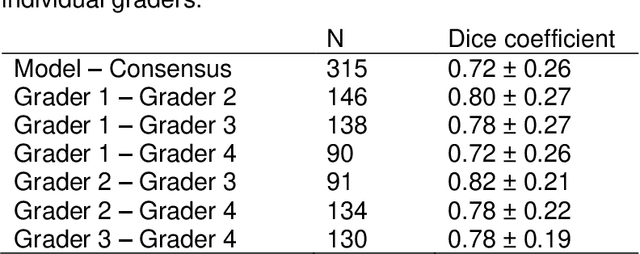

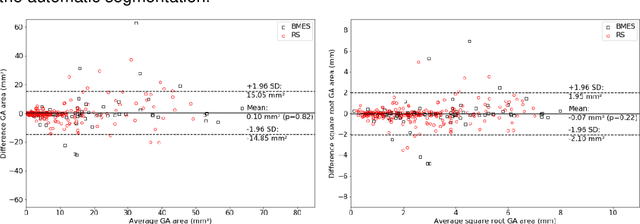

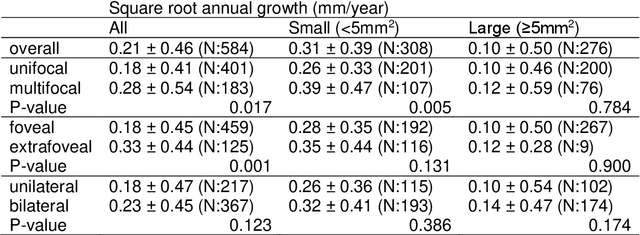

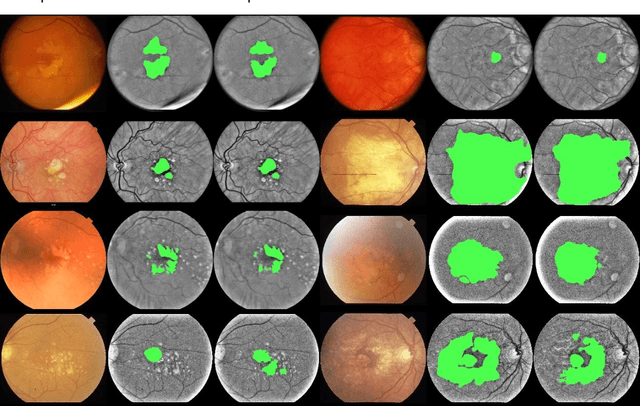

Abstract:Purpose: To develop and validate a deep learning model for automatic segmentation of geographic atrophy (GA) in color fundus images (CFIs) and its application to study growth rate of GA. Participants: 409 CFIs of 238 eyes with GA from the Rotterdam Study (RS) and the Blue Mountain Eye Study (BMES) for model development, and 5,379 CFIs of 625 eyes from the Age-Related Eye Disease Study (AREDS) for analysis of GA growth rate. Methods: A deep learning model based on an ensemble of encoder-decoder architectures was implemented and optimized for the segmentation of GA in CFIs. Four experienced graders delineated GA in CFIs from RS and BMES. These manual delineations were used to evaluate the segmentation model using 5-fold cross-validation. The model was further applied to CFIs from the AREDS to study the growth rate of GA. Linear regression analysis was used to study associations between structural biomarkers at baseline and GA growth rate. A general estimate of the progression of GA area over time was made by combining growth rates of all eyes with GA from the AREDS set. Results: The model obtained an average Dice coefficient of 0.72 $\pm$ 0.26 on the BMES and RS. An intraclass correlation coefficient of 0.83 was reached between the automatically estimated GA area and the graders' consensus measures. Eight automatically calculated structural biomarkers (area, filled area, convex area, convex solidity, eccentricity, roundness, foveal involvement and perimeter) were significantly associated with growth rate. Combining all growth rates indicated that GA area grows quadratically up to an area of around 12 mm$^{2}$, after which growth rate stabilizes or decreases. Conclusion: The presented deep learning model allowed for fully automatic and robust segmentation of GA in CFIs. These segmentations can be used to extract structural characteristics of GA that predict its growth rate.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge