Benjamin Remy

Opportunities in AI/ML for the Rubin LSST Dark Energy Science Collaboration

Jan 20, 2026

Abstract: The Vera C. Rubin Observatory's Legacy Survey of Space and Time (LSST) will produce unprecedented volumes of heterogeneous astronomical data (images, catalogs, and alerts) that challenge traditional analysis pipelines. The LSST Dark Energy Science Collaboration (DESC) aims to derive robust constraints on dark energy and dark matter from these data, requiring methods that are statistically powerful, scalable, and operationally reliable. Artificial intelligence and machine learning (AI/ML) are already embedded across DESC science workflows, from photometric redshifts and transient classification to weak lensing inference and cosmological simulations. Yet their utility for precision cosmology hinges on trustworthy uncertainty quantification, robustness to covariate shift and model misspecification, and reproducible integration within scientific pipelines. This white paper surveys the current landscape of AI/ML across DESC's primary cosmological probes and cross-cutting analyses, revealing that the same core methodologies and fundamental challenges recur across disparate science cases. Since progress on these cross-cutting challenges would benefit multiple probes simultaneously, we identify key methodological research priorities, including Bayesian inference at scale, physics-informed methods, validation frameworks, and active learning for discovery. With an eye on emerging techniques, we also explore the potential of the latest foundation model methodologies and LLM-driven agentic AI systems to reshape DESC workflows, provided their deployment is coupled with rigorous evaluation and governance. Finally, we discuss critical software, computing, data infrastructure, and human capital requirements for the successful deployment of these new methodologies, and consider associated risks and opportunities for broader coordination with external actors.

Towards solving model bias in cosmic shear forward modeling

Oct 28, 2022

Abstract: As the volume and quality of modern galaxy surveys increase, so does the difficulty of measuring the cosmological signal imprinted in galaxy shapes. Weak gravitational lensing sourced by the most massive structures in the Universe generates a slight shearing of galaxy morphologies called cosmic shear, a key probe for cosmological models. Modern techniques of shear estimation based on statistics of ellipticity measurements suffer from the fact that the ellipticity is not a well-defined quantity for arbitrary galaxy light profiles, which biases the shear estimation. We show that a hybrid physical and deep learning Hierarchical Bayesian Model, where a generative model captures the galaxy morphology, enables us to recover an unbiased estimate of the shear on realistic galaxies, thus solving the model bias.

Neural Posterior Estimation with Differentiable Simulators

Jul 12, 2022

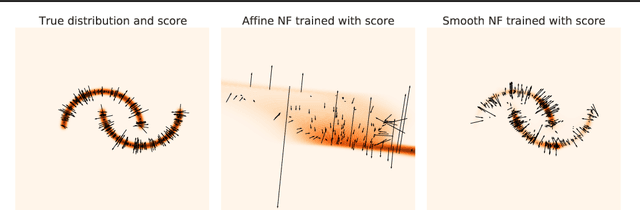

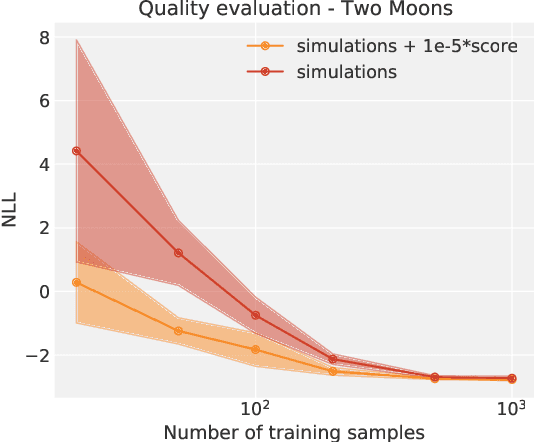

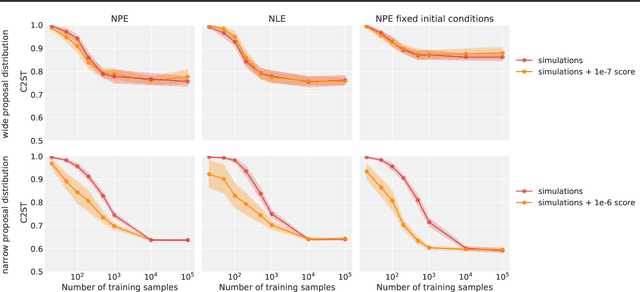

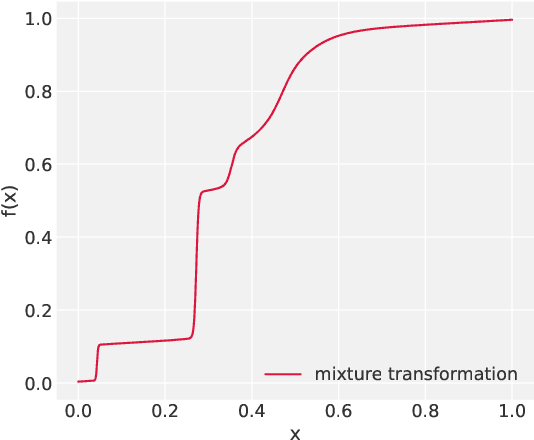

Abstract: Simulation-Based Inference (SBI) is a promising Bayesian inference framework that alleviates the need for analytic likelihoods to estimate posterior distributions. Recent advances using neural density estimators in SBI algorithms have demonstrated the ability to achieve high-fidelity posteriors, at the expense of a large number of simulations, which makes their application potentially very time-consuming when using complex physical simulations. In this work we focus on boosting the sample-efficiency of posterior density estimation using the gradients of the simulator. We present a new method to perform Neural Posterior Estimation (NPE) with a differentiable simulator. We demonstrate how gradient information helps constrain the shape of the posterior and improves sample-efficiency.

Probabilistic Mass Mapping with Neural Score Estimation

Jan 17, 2022

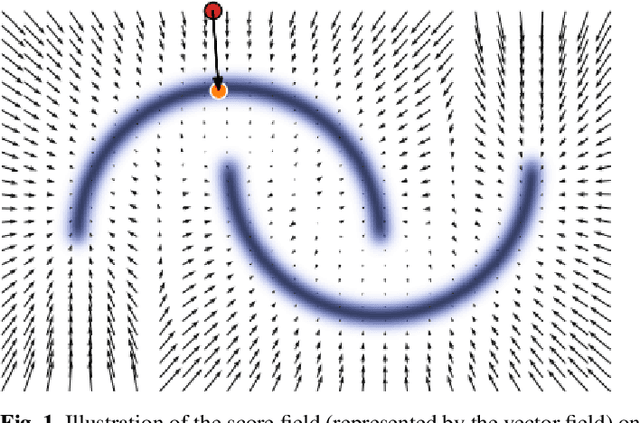

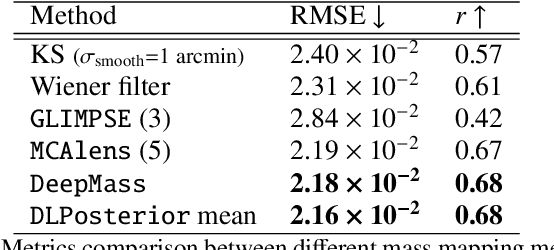

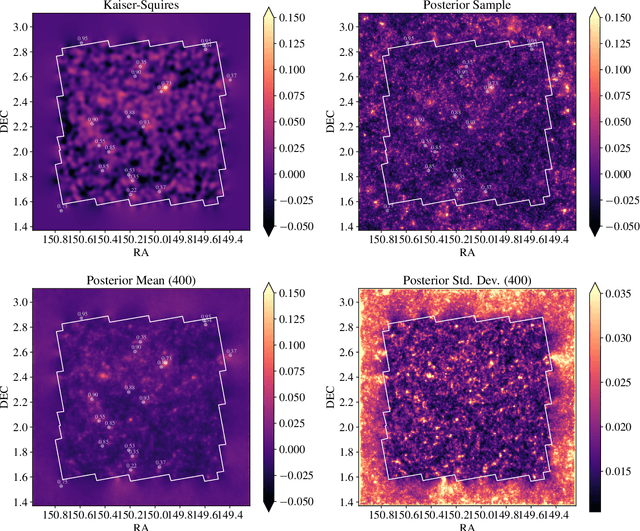

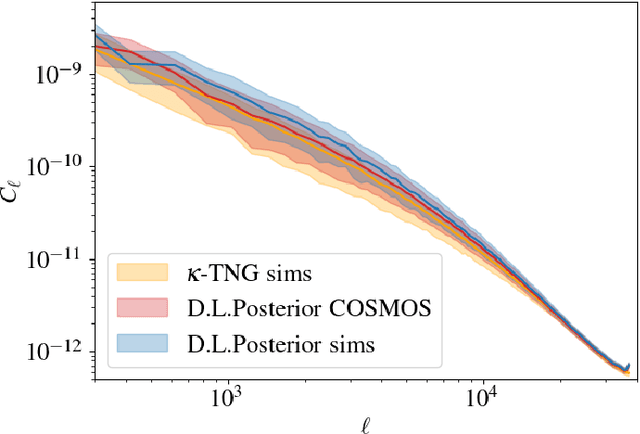

Abstract: Weak lensing mass-mapping is a useful tool to access the full distribution of dark matter on the sky, but because of intrinsic galaxy ellipticities and finite fields/missing data, the recovery of dark matter maps constitutes a challenging ill-posed inverse problem. We introduce a novel methodology allowing for efficient sampling of the high-dimensional Bayesian posterior of the weak lensing mass-mapping problem, and relying on simulations for defining a fully non-Gaussian prior. We aim to demonstrate the accuracy of the method on simulations, and then proceed to apply it to the mass reconstruction of the HST/ACS COSMOS field. The proposed methodology combines elements of Bayesian statistics, analytic theory, and a recent class of Deep Generative Models based on Neural Score Matching. This approach allows us to do the following: 1) Make full use of analytic cosmological theory to constrain the 2pt statistics of the solution. 2) Learn from cosmological simulations any differences between this analytic prior and full simulations. 3) Obtain samples from the full Bayesian posterior of the problem for robust Uncertainty Quantification. We demonstrate the method on the $\kappa$TNG simulations and find that the posterior mean significantly outperforms previous methods (Kaiser-Squires, Wiener filter, Sparsity priors) both on root-mean-square error and in terms of the Pearson correlation. We further illustrate the interpretability of the recovered posterior by establishing a close correlation between posterior convergence values and SNR of clusters artificially introduced into a field. Finally, we apply the method to the reconstruction of the HST/ACS COSMOS field and yield the highest quality convergence map of this field to date.
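For readers unfamiliar with the Kaiser-Squires baseline named in the abstract, the following is a minimal sketch of the standard flat-sky Fourier relation between convergence and shear on a periodic grid. It is not the paper's method or code: the function names (`ks_kernel`, `ks_forward`, `ks_inverse`) are hypothetical, and the example assumes a noiseless, fully sampled field with no mask, which is exactly the regime where the direct inversion works and where the abstract's ill-posedness does not yet appear.

```python
import numpy as np

def ks_kernel(shape):
    """Flat-sky Fourier kernel mapping convergence to complex shear (illustrative)."""
    k1 = np.fft.fftfreq(shape[1])[np.newaxis, :]  # frequencies along x
    k2 = np.fft.fftfreq(shape[0])[:, np.newaxis]  # frequencies along y
    k_sq = k1**2 + k2**2
    k_sq[0, 0] = 1.0                              # avoid division by zero at k = 0
    kernel = ((k1**2 - k2**2) + 2j * k1 * k2) / k_sq
    kernel[0, 0] = 0.0                            # the mean of kappa is unconstrained
    return kernel

def ks_forward(kappa):
    """Convergence map -> complex shear field gamma1 + i*gamma2."""
    return np.fft.ifft2(ks_kernel(kappa.shape) * np.fft.fft2(kappa))

def ks_inverse(gamma):
    """Complex shear -> complex map; real part is the E-mode convergence."""
    return np.fft.ifft2(np.conj(ks_kernel(gamma.shape)) * np.fft.fft2(gamma))

# Round trip on a synthetic zero-mean field (assumed toy data, not a simulation).
rng = np.random.default_rng(0)
kappa_true = rng.normal(size=(32, 32))
kappa_true -= kappa_true.mean()
kappa_rec = ks_inverse(ks_forward(kappa_true))
```

Because the kernel has unit modulus away from the zero mode, the inversion is exact here. Once shape noise and masks enter, the same operator amplifies noise and leaks missing data, which is the motivation the abstract gives for posterior sampling with a learned prior.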

Denoising Score-Matching for Uncertainty Quantification in Inverse Problems

Nov 16, 2020

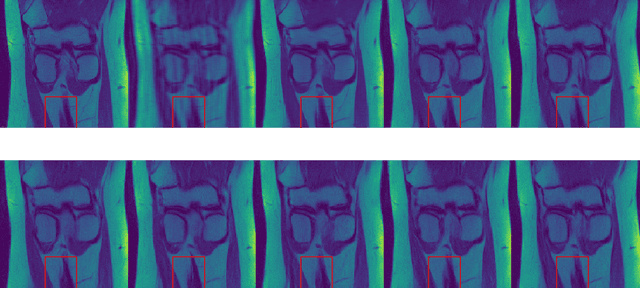

Abstract: Deep neural networks have proven extremely efficient at solving a wide range of inverse problems, but most often the uncertainty on the solution they provide is hard to quantify. In this work, we propose a generic Bayesian framework for solving inverse problems, in which we limit the use of deep neural networks to learning a prior distribution on the signals to recover. We adopt recent denoising score matching techniques to learn this prior from data, and subsequently use it as part of an annealed Hamiltonian Monte-Carlo scheme to sample the full posterior of image inverse problems. We apply this framework to Magnetic Resonance Image (MRI) reconstruction and illustrate how this approach not only yields high quality reconstructions but can also be used to assess the uncertainty on particular features of a reconstructed image.
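The link between denoising and the score of a distribution can be illustrated with a one-dimensional toy case. The sketch below is an assumption-laden illustration, not the paper's implementation: it replaces the deep network with a single least-squares slope (which is the optimal denoiser when the prior is a zero-mean Gaussian), and the names `fit_linear_denoiser` and `score_from_denoiser` are hypothetical. It relies on the standard identity that, for Gaussian noise of standard deviation `sigma`, the score of the noise-convolved density equals `(denoised - noisy) / sigma**2`.

```python
import numpy as np

def fit_linear_denoiser(clean, noisy):
    """Least-squares slope a minimizing ||a * noisy - clean||^2 (toy stand-in for a network)."""
    return float(np.dot(noisy, clean) / np.dot(noisy, noisy))

def score_from_denoiser(x, slope, sigma):
    """Score of the noise-convolved density implied by the denoiser residual."""
    return (slope * x - x) / sigma**2

rng = np.random.default_rng(0)
prior_std, sigma = 2.0, 0.5                       # assumed toy values
clean = rng.normal(0.0, prior_std, size=200_000)  # samples from the "prior"
noisy = clean + rng.normal(0.0, sigma, size=clean.shape)

slope = fit_linear_denoiser(clean, noisy)

x = np.array([-1.0, 0.5, 3.0])
estimated_score = score_from_denoiser(x, slope, sigma)
# For a Gaussian prior the noisy density is N(0, prior_std^2 + sigma^2),
# so its score is known in closed form and can serve as a check.
exact_score = -x / (prior_std**2 + sigma**2)
```

In this toy setting the learned score can be compared against the analytic one. In an image inverse problem no closed form exists, and a score learned this way would be combined with the likelihood gradient inside a sampler such as the annealed Hamiltonian Monte-Carlo scheme the abstract mentions.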
