Benjamin Genchel

Automatic Melody Harmonization with Triad Chords: A Comparative Study

Jan 08, 2020

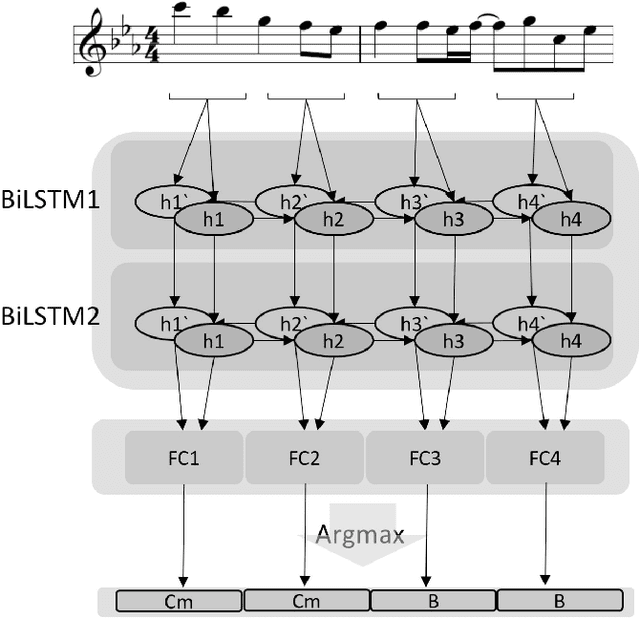

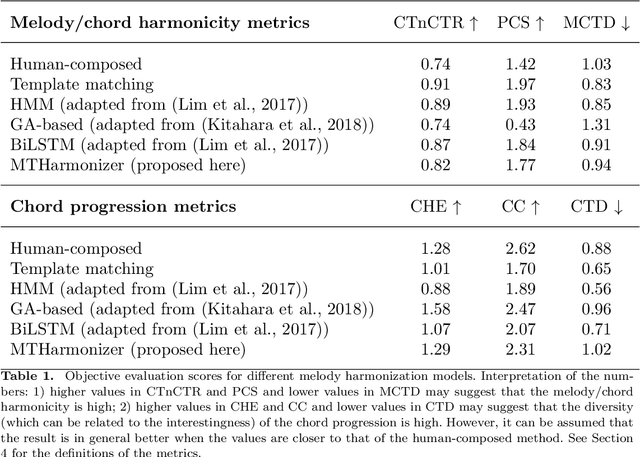

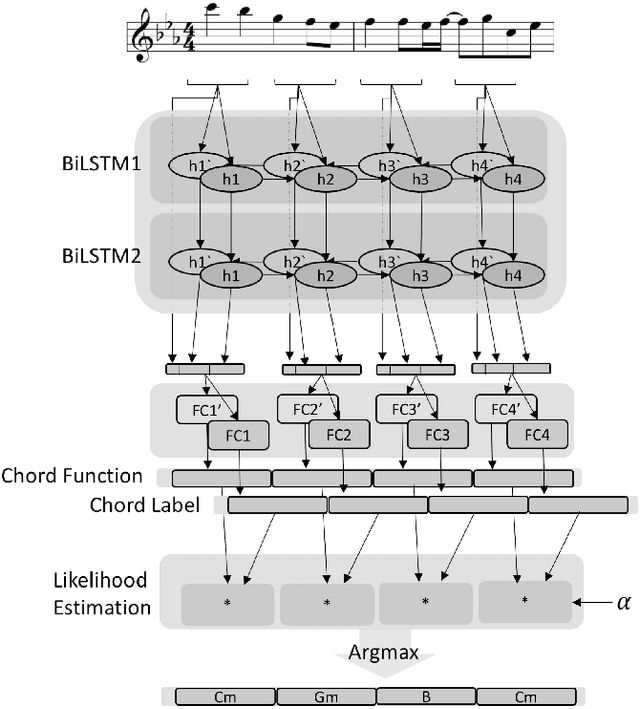

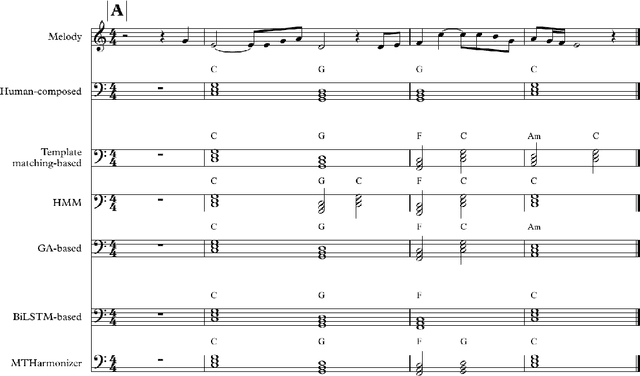

Abstract: Several prior works have proposed methods for automatic melody harmonization, in which a model generates a sequence of chords to serve as the harmonic accompaniment to a given multi-bar melody. In this paper, we present a comparative study of a set of canonical approaches to this task: models based on template matching, a hidden Markov model, and a genetic algorithm, plus two deep learning based models. The evaluation is conducted on a newly collected dataset of 9,226 melody/chord pairs, considering up to 48 triad chords and using a standardized training/test split. We report the results of an objective evaluation using six different metrics and of a subjective study with 202 participants.

Explicitly Conditioned Melody Generation: A Case Study with Interdependent RNNs

Jul 10, 2019

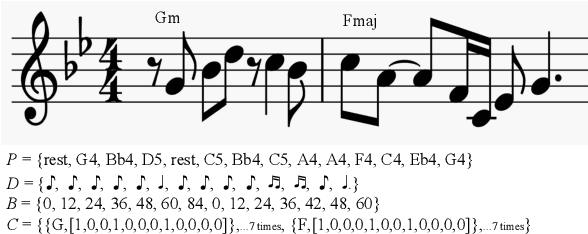

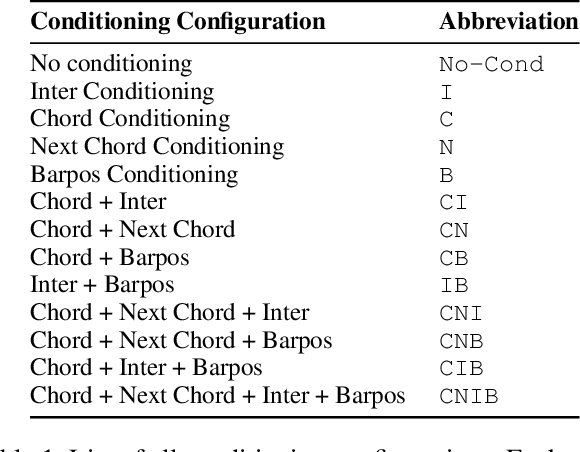

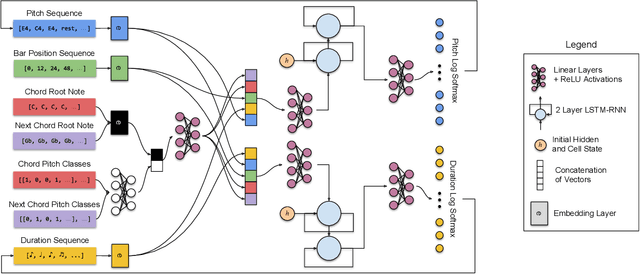

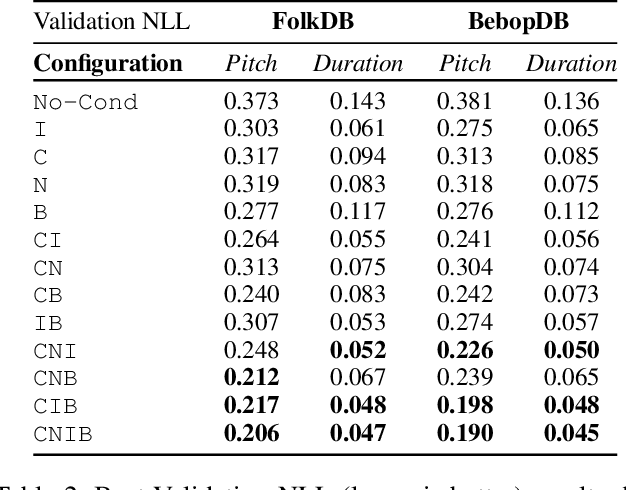

Abstract: Deep generative models for symbolic music are typically designed to model temporal dependencies in music so as to predict the next musical event given previous events. In many cases, such models are expected to learn abstract concepts such as harmony, meter, and rhythm from raw musical data without any additional information. In this study, we investigate the effects of explicitly conditioning deep generative models on musically relevant information. Specifically, we study the effects of four different conditioning inputs on the performance of a recurrent monophonic melody generation model. Several combinations of these conditioning inputs are used to train different model variants, which are then evaluated using three objective evaluation paradigms across two genres of music. The results indicate that musically relevant conditioning significantly improves learning and performance, and they reveal how this information affects the learning of musical features related to pitch and rhythm. An informal subjective evaluation suggests a corresponding improvement in the aesthetic quality of the generated melodies.
