Bart Bussmann

Minimal and Mechanistic Conditions for Behavioral Self-Awareness in LLMs

Nov 06, 2025Abstract:Recent studies have revealed that LLMs can exhibit behavioral self-awareness: the ability to accurately describe or predict their own learned behaviors without explicit supervision. This capability raises safety concerns as it may, for example, allow models to better conceal their true abilities during evaluation. We attempt to characterize the minimal conditions under which such self-awareness emerges, and the mechanistic processes through which it manifests. Through controlled finetuning experiments on instruction-tuned LLMs with low-rank adapters (LoRA), we find: (1) that self-awareness can be reliably induced using a single rank-1 LoRA adapter; (2) that the learned self-aware behavior can be largely captured by a single steering vector in activation space, recovering nearly all of the fine-tune's behavioral effect; and (3) that self-awareness is non-universal and domain-localized, with independent representations across tasks. Together, these findings suggest that behavioral self-awareness emerges as a domain-specific, linear feature that can be easily induced and modulated.

Sparse Autoencoders Do Not Find Canonical Units of Analysis

Feb 07, 2025

Abstract:A common goal of mechanistic interpretability is to decompose the activations of neural networks into features: interpretable properties of the input computed by the model. Sparse autoencoders (SAEs) are a popular method for finding these features in LLMs, and it has been postulated that they can be used to find a \textit{canonical} set of units: a unique and complete list of atomic features. We cast doubt on this belief using two novel techniques: SAE stitching to show they are incomplete, and meta-SAEs to show they are not atomic. SAE stitching involves inserting or swapping latents from a larger SAE into a smaller one. Latents from the larger SAE can be divided into two categories: \emph{novel latents}, which improve performance when added to the smaller SAE, indicating they capture novel information, and \emph{reconstruction latents}, which can replace corresponding latents in the smaller SAE that have similar behavior. The existence of novel features indicates incompleteness of smaller SAEs. Using meta-SAEs -- SAEs trained on the decoder matrix of another SAE -- we find that latents in SAEs often decompose into combinations of latents from a smaller SAE, showing that larger SAE latents are not atomic. The resulting decompositions are often interpretable; e.g. a latent representing ``Einstein'' decomposes into ``scientist'', ``Germany'', and ``famous person''. Even if SAEs do not find canonical units of analysis, they may still be useful tools. We suggest that future research should either pursue different approaches for identifying such units, or pragmatically choose the SAE size suited to their task. We provide an interactive dashboard to explore meta-SAEs: https://metasaes.streamlit.app/

BatchTopK Sparse Autoencoders

Dec 09, 2024Abstract:Sparse autoencoders (SAEs) have emerged as a powerful tool for interpreting language model activations by decomposing them into sparse, interpretable features. A popular approach is the TopK SAE, that uses a fixed number of the most active latents per sample to reconstruct the model activations. We introduce BatchTopK SAEs, a training method that improves upon TopK SAEs by relaxing the top-k constraint to the batch-level, allowing for a variable number of latents to be active per sample. As a result, BatchTopK adaptively allocates more or fewer latents depending on the sample, improving reconstruction without sacrificing average sparsity. We show that BatchTopK SAEs consistently outperform TopK SAEs in reconstructing activations from GPT-2 Small and Gemma 2 2B, and achieve comparable performance to state-of-the-art JumpReLU SAEs. However, an advantage of BatchTopK is that the average number of latents can be directly specified, rather than approximately tuned through a costly hyperparameter sweep. We provide code for training and evaluating BatchTopK SAEs at https://github.com/bartbussmann/BatchTopK

Inferring the relationship between soil temperature and the normalized difference vegetation index with machine learning

Dec 19, 2023

Abstract:Changes in climate can greatly affect the phenology of plants, which can have important feedback effects, such as altering the carbon cycle. These phenological feedback effects are often induced by a shift in the start or end dates of the growing season of plants. The normalized difference vegetation index (NDVI) serves as a straightforward indicator for assessing the presence of green vegetation and can also provide an estimation of the plants' growing season. In this study, we investigated the effect of soil temperature on the timing of the start of the season (SOS), timing of the peak of the season (POS), and the maximum annual NDVI value (PEAK) in subarctic grassland ecosystems between 2014 and 2019. We also explored the impact of other meteorological variables, including air temperature, precipitation, and irradiance, on the inter-annual variation in vegetation phenology. Using machine learning (ML) techniques and SHapley Additive exPlanations (SHAP) values, we analyzed the relative importance and contribution of each variable to the phenological predictions. Our results reveal a significant relationship between soil temperature and SOS and POS, indicating that higher soil temperatures lead to an earlier start and peak of the growing season. However, the Peak NDVI values showed just a slight increase with higher soil temperatures. The analysis of other meteorological variables demonstrated their impacts on the inter-annual variation of the vegetation phenology. Ultimately, this study contributes to our knowledge of the relationships between soil temperature, meteorological variables, and vegetation phenology, providing valuable insights for predicting vegetation phenology characteristics and managing subarctic grasslands in the face of climate change. Additionally, this work provides a solid foundation for future ML-based vegetation phenology studies.

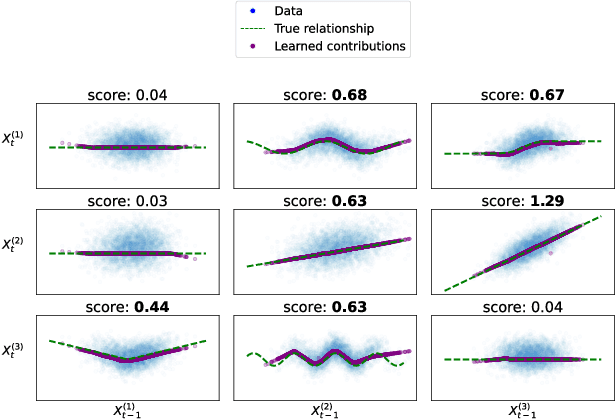

Neural Additive Vector Autoregression Models for Causal Discovery in Time Series Data

Oct 19, 2020

Abstract:Causal structure discovery in complex dynamical systems is an important challenge for many scientific domains. Although data from (interventional) experiments is usually limited, large amounts of observational time series data sets are usually available. Current methods that learn causal structure from time series often assume linear relationships. Hence, they may fail in realistic settings that contain nonlinear relations between the variables. We propose Neural Additive Vector Autoregression (NAVAR) models, a neural approach to causal structure learning that can discover nonlinear relationships. We train deep neural networks that extract the (additive) Granger causal influences from the time evolution in multi-variate time series. The method achieves state-of-the-art results on various benchmark data sets for causal discovery, while providing clear interpretations of the mapped causal relations.

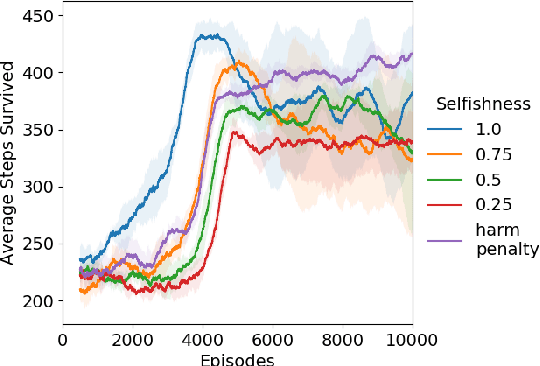

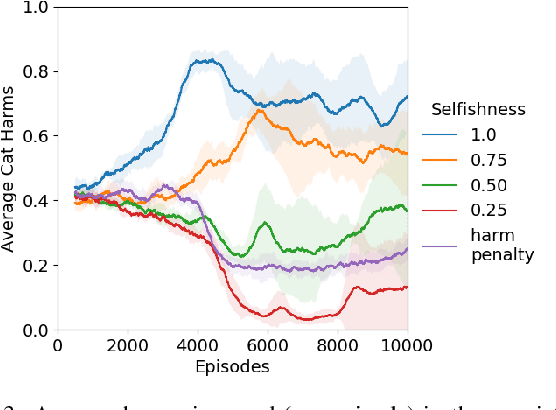

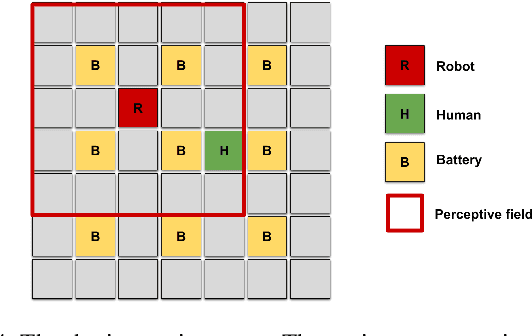

Towards Empathic Deep Q-Learning

Jun 26, 2019

Abstract:As reinforcement learning (RL) scales to solve increasingly complex tasks, interest continues to grow in the fields of AI safety and machine ethics. As a contribution to these fields, this paper introduces an extension to Deep Q-Networks (DQNs), called Empathic DQN, that is loosely inspired both by empathy and the golden rule ("Do unto others as you would have them do unto you"). Empathic DQN aims to help mitigate negative side effects to other agents resulting from myopic goal-directed behavior. We assume a setting where a learning agent coexists with other independent agents (who receive unknown rewards), where some types of reward (e.g. negative rewards from physical harm) may generalize across agents. Empathic DQN combines the typical (self-centered) value with the estimated value of other agents, by imagining (by its own standards) the value of it being in the other's situation (by considering constructed states where both agents are swapped). Proof-of-concept results in two gridworld environments highlight the approach's potential to decrease collateral harms. While extending Empathic DQN to complex environments is non-trivial, we believe that this first step highlights the potential of bridge-work between machine ethics and RL to contribute useful priors for norm-abiding RL agents.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge