Asli Arslan Esme

Natural Language Interactions in Autonomous Vehicles: Intent Detection and Slot Filling from Passenger Utterances

Apr 23, 2019

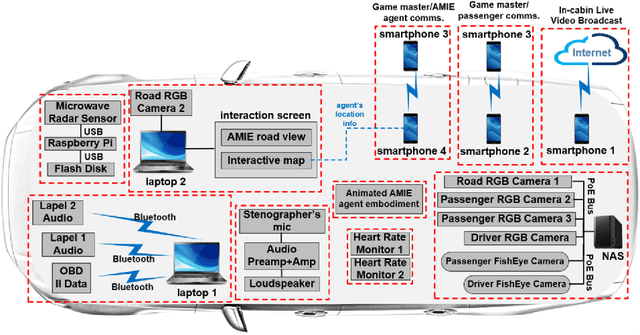

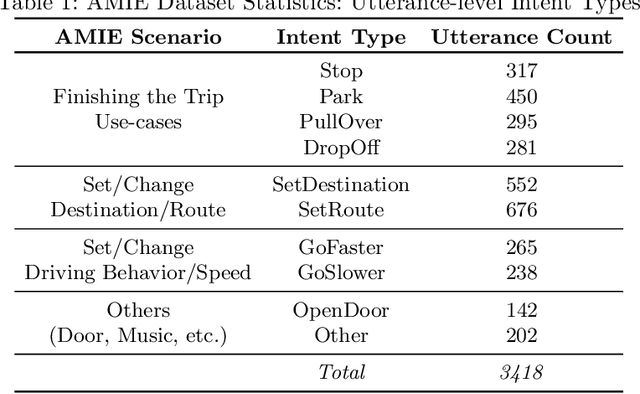

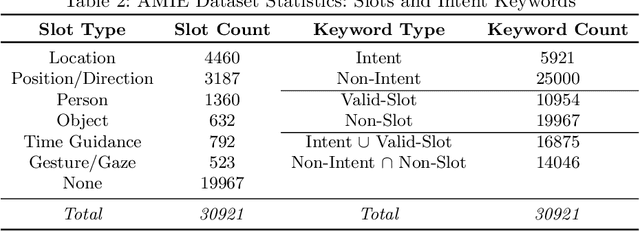

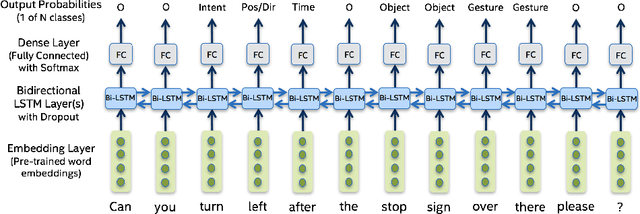

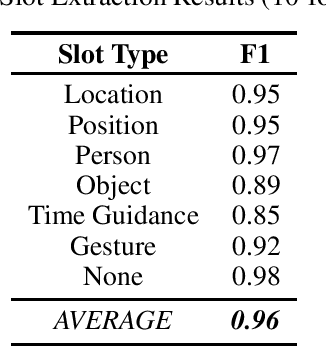

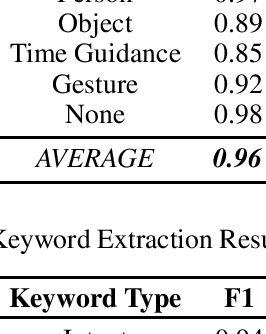

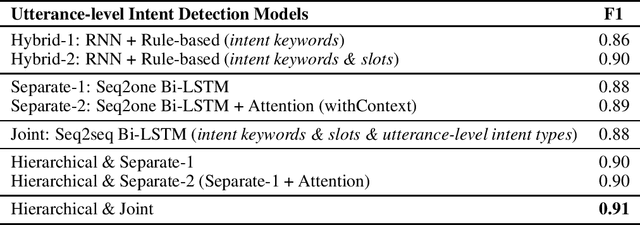

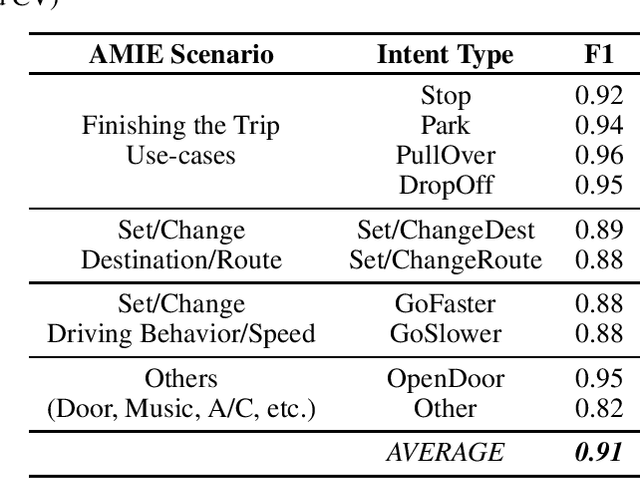

Abstract: Understanding passenger intents and extracting relevant slots are important building blocks toward developing contextual dialogue systems for natural interactions in autonomous vehicles (AV). In this work, we explored AMIE (Automated-vehicle Multi-modal In-cabin Experience), the in-cabin agent responsible for handling certain passenger-vehicle interactions. When passengers give instructions to AMIE, the agent should parse such commands properly and trigger the appropriate functionality of the AV system. In our current explorations, we focused on AMIE scenarios covering setting or changing the destination and route, updating driving behavior or speed, finishing the trip, and other use cases that support various natural commands. We collected a multi-modal in-cabin dataset with multi-turn dialogues between the passengers and AMIE using a Wizard-of-Oz scheme via a realistic scavenger hunt game activity. After exploring various recent Recurrent Neural Network (RNN)-based techniques, we introduced our own hierarchical joint models to recognize passenger intents along with relevant slots associated with the action to be performed in AV scenarios. Our models outperformed certain competitive baselines and achieved overall F1 scores of 0.91 for utterance-level intent detection and 0.96 for slot filling. In addition, we conducted initial speech-to-text explorations by comparing intent/slot models trained and tested on human transcriptions versus noisy Automatic Speech Recognition (ASR) outputs. Finally, we compared the results for single-passenger rides versus rides with multiple passengers.

* Accepted and presented as a full paper at the 20th International Conference on Computational Linguistics and Intelligent Text Processing (CICLing 2019), April 7-13, 2019, La Rochelle, France
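Slot filling is commonly framed as sequence labeling, where each token receives a `B-<slot>`, `I-<slot>`, or `O` tag alongside a single utterance-level intent. The abstract does not specify the tagging scheme, so the sketch below assumes BIO tags; the utterance, the intent name `SetDestination`, and the slot names `location` and `speed` are hypothetical and only illustrate how token-level tags become slot values.

```python
def bio_to_slots(tokens, tags):
    """Group BIO-tagged tokens into (slot_type, text) pairs.

    Assumes tags of the form "B-<type>", "I-<type>", or "O". An "I-" tag
    that does not continue an open span of the same type starts a new span.
    """
    slots = []
    current_type, current_tokens = None, []
    for token, tag in zip(tokens, tags):
        if tag.startswith("B-") or (tag.startswith("I-") and tag[2:] != current_type):
            # Close any open span, then start a new one.
            if current_type is not None:
                slots.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = tag[2:], [token]
        elif tag.startswith("I-"):
            # Continue the open span of the same type.
            current_tokens.append(token)
        else:
            # An "O" tag closes any open span.
            if current_type is not None:
                slots.append((current_type, " ".join(current_tokens)))
            current_type, current_tokens = None, []
    if current_type is not None:
        slots.append((current_type, " ".join(current_tokens)))
    return slots


# Hypothetical passenger utterance with an intent label and token-level slot tags.
tokens = ["take", "me", "to", "the", "train", "station", "and", "go", "faster"]
tags = ["O", "O", "O", "O", "B-location", "I-location", "O", "O", "B-speed"]
intent = "SetDestination"  # hypothetical intent label
print(intent, bio_to_slots(tokens, tags))
# → SetDestination [('location', 'train station'), ('speed', 'faster')]
```

In a joint model, the intent and the tag sequence are predicted together from shared encodings; this helper only covers the final step of reading slot values out of predicted tags.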

Unobtrusive and Multimodal Approach for Behavioral Engagement Detection of Students

Jan 16, 2019

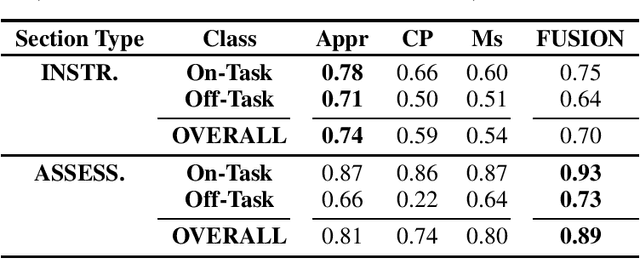

Abstract: We propose a multimodal approach for detecting students' behavioral engagement states (i.e., On-Task vs. Off-Task) based on three unobtrusive modalities: Appearance, Context-Performance, and Mouse. Final behavioral engagement states are obtained by fusing modality-specific classifiers at the decision level. Various experiments were conducted on a student dataset collected in an authentic classroom.
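Decision-level (late) fusion combines the outputs of separately trained modality-specific classifiers rather than their input features. The abstract does not state the fusion rule, so the sketch below assumes one common option, a weighted average of each modality's class probabilities; the weights and probability values are illustrative and are not results from the study.

```python
from typing import Dict

LABELS = ("On-Task", "Off-Task")


def late_fusion(probs: Dict[str, Dict[str, float]],
                weights: Dict[str, float]) -> str:
    """Fuse per-modality class probabilities by weighted averaging
    and return the label with the highest fused score."""
    total_weight = sum(weights[m] for m in probs)
    fused = {
        label: sum(weights[m] * probs[m][label] for m in probs) / total_weight
        for label in LABELS
    }
    return max(fused, key=fused.get)


# Hypothetical per-modality classifier outputs for one time window.
probs = {
    "Appearance": {"On-Task": 0.40, "Off-Task": 0.60},
    "Context-Performance": {"On-Task": 0.80, "Off-Task": 0.20},
    "Mouse": {"On-Task": 0.70, "Off-Task": 0.30},
}
weights = {"Appearance": 1.0, "Context-Performance": 1.0, "Mouse": 1.0}
print(late_fusion(probs, weights))  # → On-Task
```

Because each modality keeps its own classifier, a missing or unreliable modality can be dropped or down-weighted at fusion time without retraining the others.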

Detecting Behavioral Engagement of Students in the Wild Based on Contextual and Visual Data

Jan 15, 2019

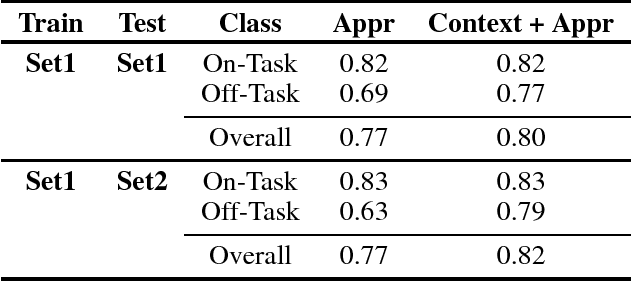

Abstract: To investigate the detection of students' behavioral engagement (On-Task vs. Off-Task), we propose a two-phase approach. In Phase 1, contextual logs (URLs) are used to assess active usage of the content platform. If there is active use, appearance information is used in Phase 2 to infer behavioral engagement. Incorporating the contextual information improved the overall F1-scores from 0.77 to 0.82. Our cross-classroom and cross-platform experiments showed that the proposed generic, multimodal behavioral engagement models are applicable to a different set of students or to different subject areas.
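The two-phase decision can be read as a gate: contextual URL logs first decide whether the content platform is in active use, and only then is the appearance-based classifier consulted. The abstract does not say what happens when there is no active use; the sketch below assumes that case maps to Off-Task. The function names, the host-matching rule, and the 0.5 threshold are all hypothetical.

```python
from typing import Callable, List
from urllib.parse import urlparse


def is_active_use(urls: List[str], platform_host: str) -> bool:
    """Phase 1 (hypothetical rule): the content platform counts as actively
    used if any URL logged in the time window points to its host."""
    return any(urlparse(url).hostname == platform_host for url in urls)


def two_phase_engagement(urls: List[str],
                         platform_host: str,
                         appearance_score: Callable[[], float],
                         threshold: float = 0.5) -> str:
    """Return "On-Task" or "Off-Task" for one time window.

    appearance_score is a hypothetical callable returning the appearance
    model's On-Task probability; it is only called if Phase 1 passes.
    """
    if not is_active_use(urls, platform_host):
        # Assumption: no active use of the platform is labeled Off-Task.
        return "Off-Task"
    # Phase 2: consult the appearance-based classifier.
    return "On-Task" if appearance_score() >= threshold else "Off-Task"


# Hypothetical usage: the student is on the platform and looks engaged.
print(two_phase_engagement(["https://learn.example.com/lesson/3"],
                           "learn.example.com", lambda: 0.9))  # → On-Task
```

Passing the appearance model as a callable means the visual inference only runs for windows that pass Phase 1, which is one way to keep the cheaper contextual check in front.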

The Importance of Socio-Cultural Differences for Annotating and Detecting the Affective States of Students

Jan 12, 2019

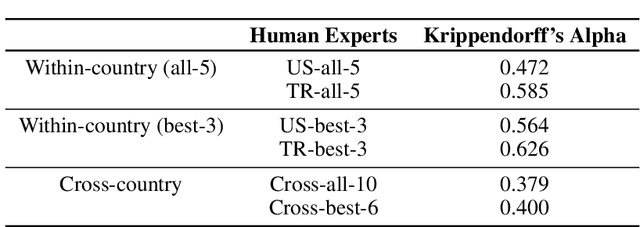

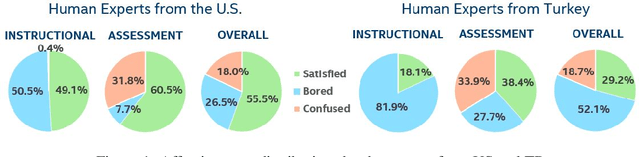

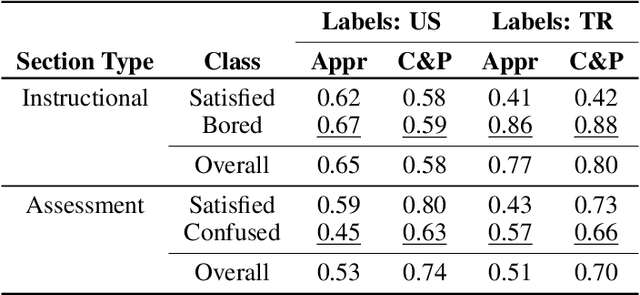

Abstract: The development of real-time affect detection models often depends on obtaining annotated data for supervised learning, which requires human experts to label the student data. One open question in annotating affective data for affect detection is whether the labelers (i.e., human experts) need to be socio-culturally similar to the students being labeled, as this affects the cost-feasibility of obtaining the labels. In this study, we investigate two research questions. First, for affective state annotation, how does the socio-cultural background of the expert labelers, relative to that of the subjects, affect the degree of consensus and the distribution of affective states obtained? Second, how do differences in labeler background affect the performance of affect detection models trained on these labels?
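One standard way to quantify consensus between two labelers is Cohen's kappa, which corrects raw agreement for the agreement expected by chance: `kappa = (p_o - p_e) / (1 - p_e)`. The abstract does not name the agreement measure used, so this is an assumed choice; the label sequences below are made up for illustration.

```python
from collections import Counter
from typing import Sequence


def cohens_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Cohen's kappa for two labelers who annotated the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(a)
    # Observed agreement: fraction of items given identical labels.
    p_o = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement from each labeler's marginal label distribution.
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_e == 1.0:
        # Convention: both labelers used one identical label for every item.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Hypothetical labels from two labelers for the same six student segments.
a = ["Satisfied", "Satisfied", "Bored", "Confused", "Satisfied", "Bored"]
b = ["Satisfied", "Bored", "Bored", "Confused", "Satisfied", "Satisfied"]
print(round(cohens_kappa(a, b), 3))  # → 0.455
```

Here raw agreement is 4/6, but both labelers favor "Satisfied", so much of that agreement is expected by chance and kappa comes out considerably lower.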

Conversational Intent Understanding for Passengers in Autonomous Vehicles

Dec 14, 2018

Abstract: Understanding passenger intents and extracting relevant slots are important building blocks toward developing a contextual dialogue system responsible for handling certain vehicle-passenger interactions in autonomous vehicles (AV). When passengers give instructions to AMIE (Automated-vehicle Multimodal In-cabin Experience), the agent should parse such commands properly and trigger the appropriate functionality of the AV system. In our AMIE scenarios, we describe usages and support various natural commands for interacting with the vehicle. We collected a multimodal in-cabin dataset with multi-turn dialogues between the passengers and AMIE using a Wizard-of-Oz scheme. We explored various recent Recurrent Neural Network (RNN)-based techniques and built our own hierarchical models to recognize passenger intents along with relevant slots associated with the action to be performed in AV scenarios. Our experimental results achieved F1-scores of 0.91 for utterance-level intent recognition and 0.96 for slot extraction.
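F1 for slot extraction can be computed in several ways, and the abstract does not specify which. A common convention is exact-match span F1, where a predicted slot counts as correct only if its type and token boundaries both match a gold slot. The sketch below assumes that convention and uses made-up spans.

```python
from typing import Set, Tuple

Span = Tuple[str, int, int]  # (slot_type, start_token, end_token_exclusive)


def span_f1(gold: Set[Span], pred: Set[Span]) -> float:
    """Exact-match F1 between gold and predicted slot spans.

    Returns 0.0 when there are no true positives, including the
    degenerate case where both sets are empty.
    """
    true_pos = len(gold & pred)
    if true_pos == 0:
        return 0.0
    precision = true_pos / len(pred)
    recall = true_pos / len(gold)
    return 2 * precision * recall / (precision + recall)


# Hypothetical gold and predicted spans for one utterance.
gold = {("location", 4, 6), ("speed", 8, 9)}
pred = {("location", 4, 6), ("speed", 7, 9)}  # speed boundary is off by one
print(span_f1(gold, pred))  # → 0.5
```

For a micro-averaged corpus score, one option is to add an utterance index to each tuple and pool the spans from all utterances into single gold and predicted sets before calling the function.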
