Arne Thomsen

Hybrid Physical-Neural Simulator for Fast Cosmological Hydrodynamics

Oct 30, 2025

Abstract: Cosmological field-level inference requires differentiable forward models that solve the challenging dynamics of gas and dark matter under hydrodynamics and gravity. We propose a hybrid approach where gravitational forces are computed using a differentiable particle-mesh solver, while the hydrodynamics are parametrized by a neural network that maps local quantities to an effective pressure field. We demonstrate that our method improves upon alternative approaches, such as an Enthalpy Gradient Descent baseline, both at the field and summary-statistic level. The approach is furthermore highly data efficient, with a single reference simulation of cosmological structure formation being sufficient to constrain the neural pressure model. This opens the door for future applications where the model is fit directly to observational data, rather than a training set of simulations.
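As a rough illustration of the idea of a network mapping local quantities to an effective pressure, the toy sketch below evaluates a pressure acceleration $-\nabla P / \rho$ on a periodic 1D grid. Everything in it is an assumption made for illustration: the tiny network `mlp_pressure`, its use of the local density as the only input, the parameter layout, and the finite-difference gradient. It omits the particle-mesh gravity solver entirely and is not the paper's implementation.

```python
import math
import random


def mlp_pressure(rho, params):
    """Hypothetical one-hidden-layer network mapping local density to pressure."""
    w1, b1, w2, b2 = params
    hidden = [math.tanh(w * math.log(rho) + b) for w, b in zip(w1, b1)]
    z = sum(w * h for w, h in zip(w2, hidden)) + b2
    return math.log1p(math.exp(z))  # softplus keeps the pressure positive


def pressure_acceleration(rho, dx, params):
    """Return -(dP/dx) / rho on a periodic grid using central differences."""
    n = len(rho)
    pressure = [mlp_pressure(r, params) for r in rho]
    return [
        -(pressure[(i + 1) % n] - pressure[(i - 1) % n]) / (2.0 * dx) / rho[i]
        for i in range(n)
    ]


# Randomly initialised (untrained) parameters, for illustration only.
rng = random.Random(0)
n_hidden = 4
params = (
    [rng.uniform(-1, 1) for _ in range(n_hidden)],  # w1
    [rng.uniform(-1, 1) for _ in range(n_hidden)],  # b1
    [rng.uniform(-1, 1) for _ in range(n_hidden)],  # w2
    rng.uniform(-1, 1),                             # b2
)

uniform_gas = [1.0] * 16
acc_uniform = pressure_acceleration(uniform_gas, dx=1.0, params=params)

clumpy_gas = [1.0 + 0.5 * math.sin(2 * math.pi * i / 16) for i in range(16)]
acc_clumpy = pressure_acceleration(clumpy_gas, dx=1.0, params=params)
```

A uniform gas has no pressure gradient, so `acc_uniform` vanishes everywhere, while `acc_clumpy` is generally nonzero. In a full hybrid scheme this term would be added to the gravitational acceleration at each time step.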

Cosmology from Galaxy Redshift Surveys with PointNet

Nov 22, 2022

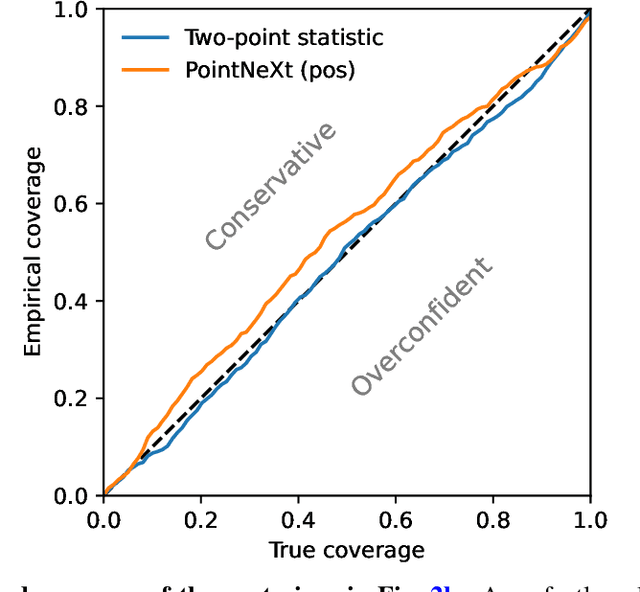

Abstract: In recent years, deep learning approaches have achieved state-of-the-art results in the analysis of point cloud data. In cosmology, galaxy redshift surveys resemble such a permutation invariant collection of positions in space. These surveys have so far mostly been analysed with two-point statistics, such as power spectra and correlation functions. The usage of these summary statistics is best justified on large scales, where the density field is linear and Gaussian. However, in light of the increased precision expected from upcoming surveys, the analysis of -- intrinsically non-Gaussian -- small angular separations represents an appealing avenue to better constrain cosmological parameters. In this work, we aim to improve upon two-point statistics by employing a PointNet-like neural network to regress the values of the cosmological parameters directly from point cloud data. Our implementation of PointNets can analyse inputs of $\mathcal{O}(10^4) - \mathcal{O}(10^5)$ galaxies at a time, which improves upon earlier work for this application by roughly two orders of magnitude. Additionally, we demonstrate the ability to analyse galaxy redshift survey data on the lightcone, as opposed to previously static simulation boxes at a given fixed redshift.
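The permutation invariance mentioned above can be illustrated with a minimal PointNet-style forward pass: a shared per-point layer, a symmetric max-pooling step, and a linear regression head. This is only a sketch under stated assumptions. The layer sizes, the random untrained weights, the 100 mock galaxy positions, and the two-component output (standing in for two cosmological parameters) are all hypothetical and not taken from the paper.

```python
import random


def relu(v):
    return [max(0.0, x) for x in v]


def affine(W, b, x):
    """Compute W x + b for a weight matrix given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def random_layer(rng, n_out, n_in):
    W = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    b = [rng.uniform(-1, 1) for _ in range(n_out)]
    return W, b


def pointnet_forward(points, shared, head):
    """Shared per-point layer, max pooling over points, then a linear head."""
    W1, b1 = shared
    feats = [relu(affine(W1, b1, p)) for p in points]
    # Max over the point axis is symmetric, so point order cannot matter.
    pooled = [max(col) for col in zip(*feats)]
    W2, b2 = head
    return affine(W2, b2, pooled)


rng = random.Random(0)
shared = random_layer(rng, 8, 3)  # 3D position -> 8 features (hypothetical sizes)
head = random_layer(rng, 2, 8)    # 8 pooled features -> 2 regressed parameters

galaxies = [[rng.uniform(0, 1) for _ in range(3)] for _ in range(100)]
shuffled = galaxies[:]
rng.shuffle(shuffled)

out_original = pointnet_forward(galaxies, shared, head)
out_shuffled = pointnet_forward(shuffled, shared, head)
```

Because every point passes through the same layer and the pooling is a maximum over the set, `out_original` and `out_shuffled` agree for any reordering of the input catalogue.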

The complexity of quantum support vector machines

Feb 28, 2022

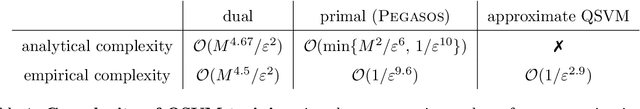

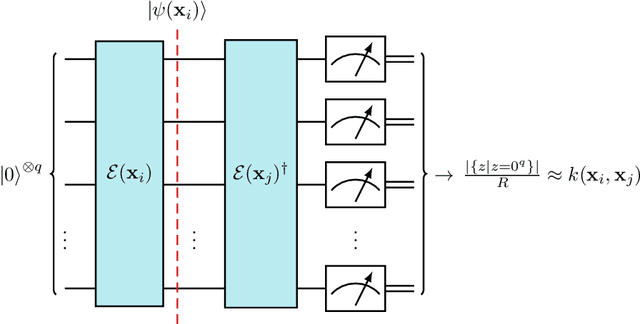

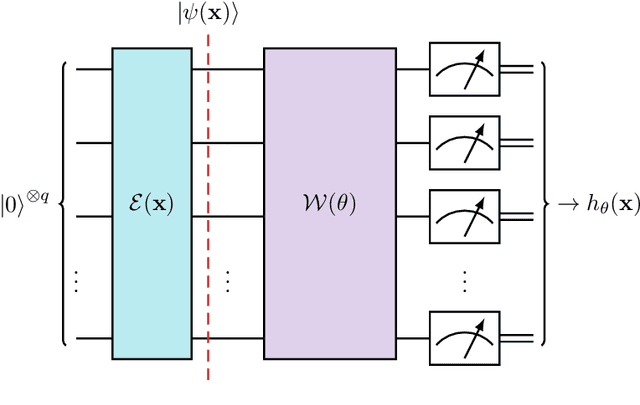

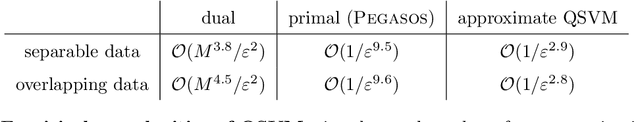

Abstract: Quantum support vector machines employ quantum circuits to define the kernel function. It has been shown that this approach offers a provable exponential speedup compared to any known classical algorithm for certain data sets. The training of such models corresponds to solving a convex optimization problem either via its primal or dual formulation. Due to the probabilistic nature of quantum mechanics, the training algorithms are affected by statistical uncertainty, which has a major impact on their complexity. We show that the dual problem can be solved in $\mathcal{O}(M^{4.67}/\varepsilon^2)$ quantum circuit evaluations, where $M$ denotes the size of the data set and $\varepsilon$ the solution accuracy. We prove under an empirically motivated assumption that the kernelized primal problem can alternatively be solved in $\mathcal{O}(\min \{ M^2/\varepsilon^6, \, 1/\varepsilon^{10} \})$ evaluations by employing a generalization of a known classical algorithm called Pegasos. Accompanying empirical results demonstrate these analytical complexities to be essentially tight. In addition, we investigate a variational approximation to quantum support vector machines and show that their heuristic training achieves considerably better scaling in our experiments.
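For orientation, the classical kernelized Pegasos algorithm that the abstract refers to can be sketched as follows. This is the standard noise-free version, not the generalization analysed in the paper. The RBF kernel is a classical stand-in (an assumption) for a kernel that would be estimated from quantum circuit evaluations, and the toy data set, `lam`, and `n_steps` are hypothetical.

```python
import math
import random


def rbf_kernel(a, b, gamma=1.0):
    """Classical stand-in for a quantum kernel (assumption for illustration)."""
    sq = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * sq)


def kernel_pegasos(X, y, kernel, lam=0.1, n_steps=500, seed=0):
    """Kernelized Pegasos: stochastic sub-gradient steps on the primal SVM."""
    rng = random.Random(seed)
    m = len(X)
    alpha = [0] * m  # alpha[j] counts margin violations of sample j
    for t in range(1, n_steps + 1):
        i = rng.randrange(m)
        s = sum(alpha[j] * y[j] * kernel(X[i], X[j]) for j in range(m) if alpha[j])
        if y[i] * s / (lam * t) < 1.0:
            alpha[i] += 1
    return alpha


def decision_function(x, X, y, alpha, kernel, lam, n_steps):
    s = sum(alpha[j] * y[j] * kernel(x, X[j]) for j in range(len(X)) if alpha[j])
    return s / (lam * n_steps)


# Two well-separated toy clusters with labels -1 and +1.
X = [(-2.0, -2.0), (-1.5, -2.5), (-2.5, -1.0), (2.0, 2.0), (1.5, 2.5), (2.5, 1.0)]
y = [-1, -1, -1, 1, 1, 1]
LAM, STEPS = 0.1, 500

alpha = kernel_pegasos(X, y, rbf_kernel, lam=LAM, n_steps=STEPS)
predictions = [
    1 if decision_function(x, X, y, alpha, rbf_kernel, LAM, STEPS) > 0 else -1
    for x in X
]
```

Each iteration needs kernel values between one sampled point and the current support set. In the quantum setting each such value would itself be a statistical estimate, which is the source of the shot-noise dependence discussed in the abstract.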
