Anna Luo

Deep Inventory Management

Oct 06, 2022

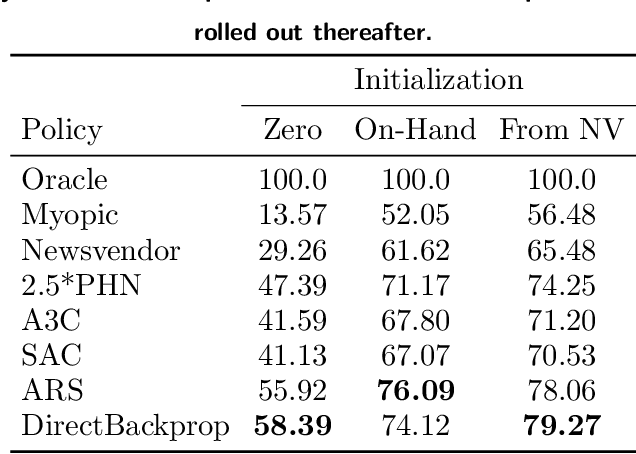

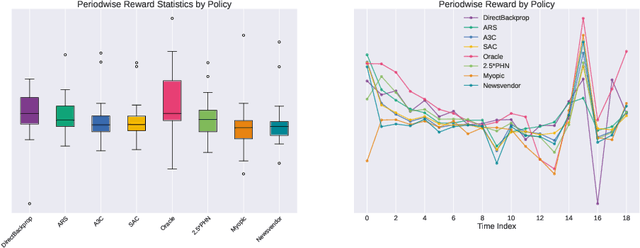

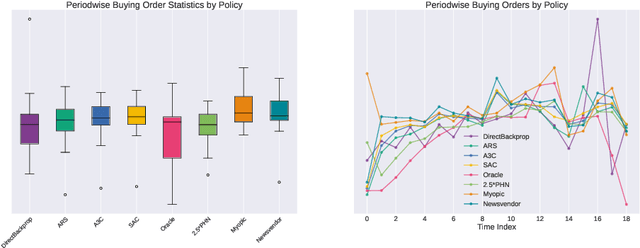

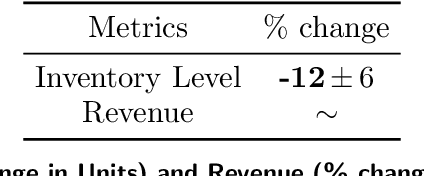

Abstract: We present a Deep Reinforcement Learning approach to solving a periodic review inventory control system with stochastic vendor lead times, lost sales, correlated demand, and price matching. While this dynamic program has historically been considered intractable, we show that several policy learning approaches are competitive with or outperform classical baseline approaches. To train these algorithms, we develop novel techniques to convert historical data into a simulator. We also present a model-based reinforcement learning procedure (Direct Backprop) that solves the dynamic periodic review inventory control problem by constructing a differentiable simulator. Under a variety of metrics, Direct Backprop outperforms model-free RL and newsvendor baselines in both simulations and real-world deployments.
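To make the problem setting concrete, the following is a minimal sketch of a periodic review, lost-sales inventory loop with per-order lead times, driven by a simple order-up-to (base-stock) rule. It is not the paper's simulator or the Direct Backprop method; the function name `simulate_base_stock`, the `price` and `cost` parameters, and the order-up-to policy are all assumptions chosen for illustration, and correlated demand and price matching are left out.

```python
def simulate_base_stock(demands, lead_times, base_stock, price=1.0, cost=0.5):
    """Toy periodic-review, lost-sales inventory simulation (illustrative only).

    demands[t]    -- demand observed in period t
    lead_times[t] -- periods until an order placed in period t arrives (>= 0)
    base_stock    -- order-up-to level applied to the inventory position
    Returns (total_profit, total_lost_sales).
    """
    on_hand = 0.0
    pipeline = {}  # arrival period -> quantity still in transit
    profit = 0.0
    lost = 0.0
    for t, (demand, lead) in enumerate(zip(demands, lead_times)):
        # Receive any orders scheduled to arrive in this period.
        on_hand += pipeline.pop(t, 0.0)

        # Review: raise the inventory position (on hand + in transit) to base_stock.
        position = on_hand + sum(pipeline.values())
        order = max(0.0, base_stock - position)
        if order > 0:
            if lead == 0:
                on_hand += order  # zero lead time: available immediately
            else:
                arrival = t + lead
                pipeline[arrival] = pipeline.get(arrival, 0.0) + order
        profit -= cost * order

        # Lost sales: unmet demand is dropped, not backordered.
        sales = min(on_hand, demand)
        lost += demand - sales
        on_hand -= sales
        profit += price * sales
    return profit, lost


profit, lost = simulate_base_stock([5, 5, 5], [1, 1, 1], base_stock=10)
print(profit, lost)
```

A learned policy would replace the fixed `base_stock` rule with a function of the observed state; in this sketch the lead times are passed in as a list, so sampling them from a distribution is left to the caller.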

Battlesnake Challenge: A Multi-agent Reinforcement Learning Playground with Human-in-the-loop

Jul 20, 2020

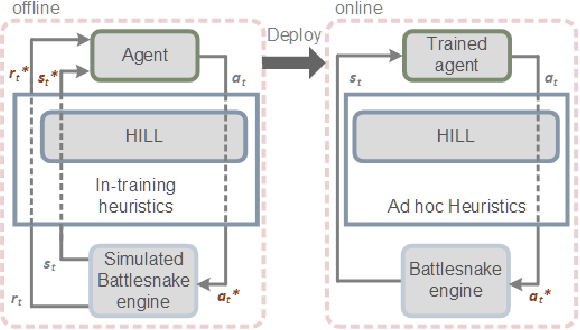

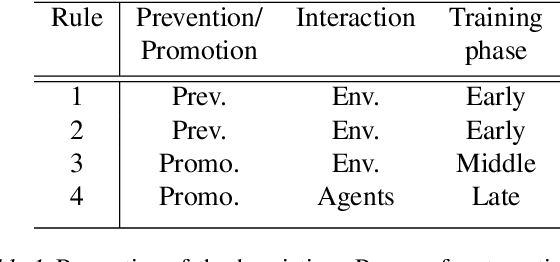

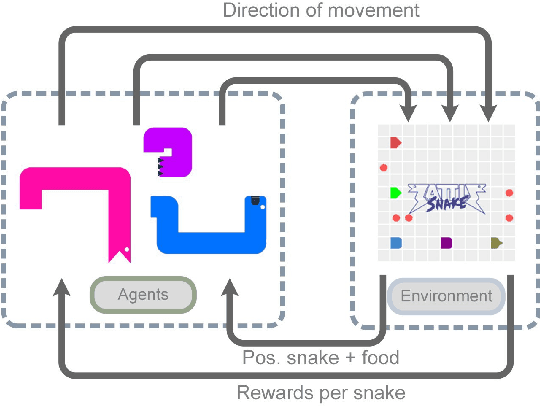

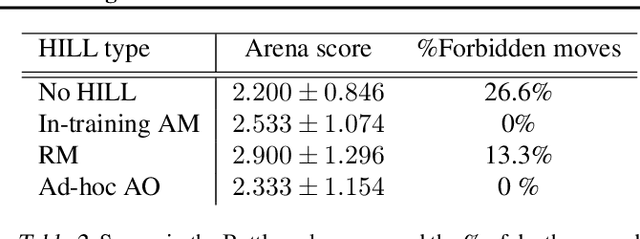

Abstract: We present the Battlesnake Challenge, a framework for multi-agent reinforcement learning with Human-In-the-Loop Learning (HILL). It is built upon Battlesnake, a multiplayer extension of the traditional Snake game in which two or more snakes compete to be the last survivor. The Battlesnake Challenge consists of an offline module for model training and an online module for live competitions. We develop a simulated game environment for offline multi-agent model training and identify a set of baseline heuristics that can be instilled to improve learning. Our framework is agent-agnostic and heuristics-agnostic, so researchers can design their own algorithms, train their models, and demonstrate them in the online Battlesnake competition. We validate the framework and baseline heuristics with preliminary experiments. Our results show that agents with the proposed HILL methods consistently outperform agents without HILL. In addition, reward-manipulation heuristics performed best in the online competition. We open source our framework at https://github.com/awslabs/sagemaker-battlesnake-ai.

Out-of-the-box channel pruned networks

Apr 30, 2020

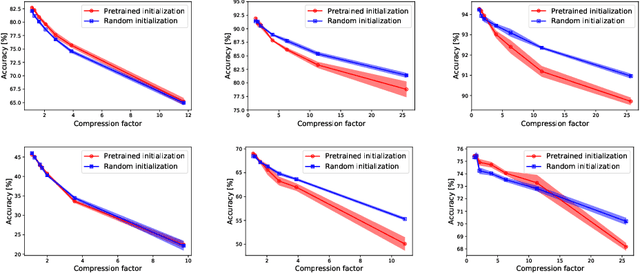

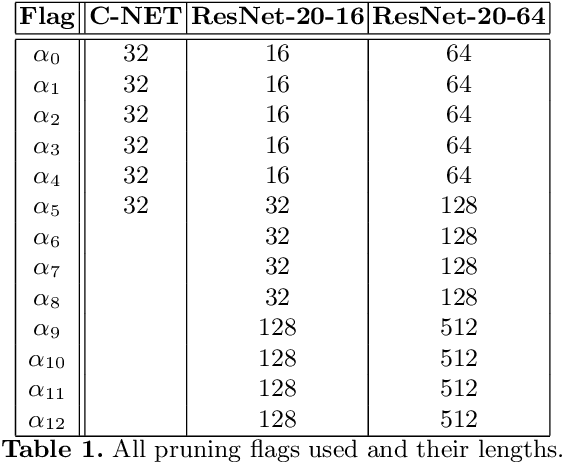

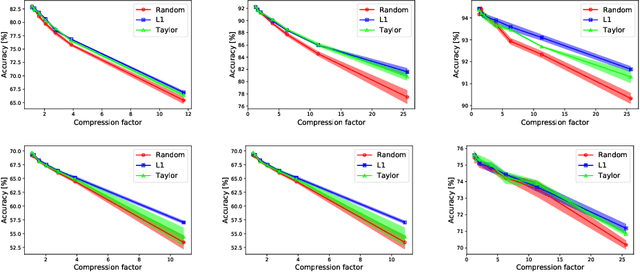

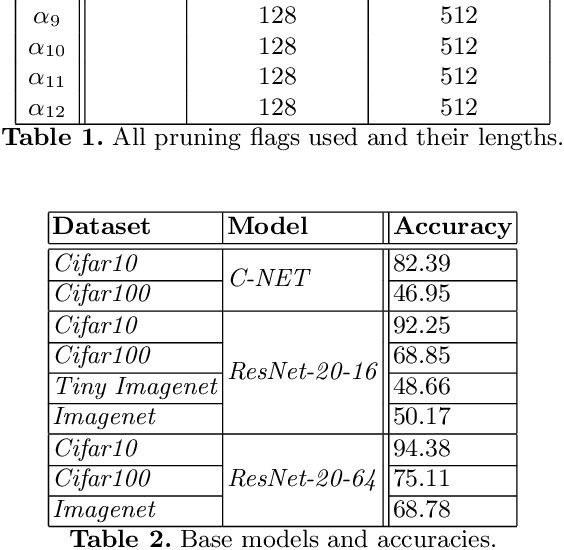

Abstract: In the last decade, convolutional neural networks have become gargantuan. Pre-trained models, when used as initializers, are able to fine-tune ever larger networks on small datasets. Consequently, not all the convolutional features that these fine-tuned models detect are requisite for the end task. Several channel pruning methods have been proposed to prune away compute and memory from already-trained models. Typically, these involve policies that decide which and how many channels to remove from each layer, leading to channel-wise and/or layer-wise pruning profiles, respectively. In this paper, we conduct several baseline experiments and establish that profiles from random channel-wise pruning policies are as good as metric-based ones. We also establish that there may exist profiles from some layer-wise pruning policies that are measurably better than common baselines. We then demonstrate that the top layer-wise pruning profiles found using an exhaustive random search on one dataset are also among the top profiles for other datasets. This implies that we could identify out-of-the-box layer-wise pruning profiles using benchmark datasets and use these directly for new datasets. Furthermore, we develop a Reinforcement Learning (RL) policy-based search algorithm with the direct objective of finding transferable layer-wise pruning profiles using many models for the same architecture. We use a novel reward formulation that drives this RL search towards an expected compression while maximizing accuracy. Our results show that our transferred RL-based profiles are as good as or better than the best profiles found on the original dataset via exhaustive search. We then demonstrate that profiles found using a mid-sized dataset such as CIFAR-10/100 can be transferred even to a large dataset such as ImageNet.
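The distinction between layer-wise profiles (how many channels each layer keeps) and channel-wise choices (which channels those are) can be sketched as follows. This is only an illustration of random channel selection under a fixed layer-wise profile, not the paper's code; the function name `random_channel_masks` and its parameters are hypothetical.

```python
import random


def random_channel_masks(channels_per_layer, keep_fractions, seed=0):
    """Build one boolean keep-mask per layer (illustrative only).

    channels_per_layer -- number of output channels in each layer
    keep_fractions     -- layer-wise profile: fraction of channels kept per layer
    The channel-wise choice (which channels survive) is uniformly random.
    """
    rng = random.Random(seed)
    masks = []
    for n_channels, fraction in zip(channels_per_layer, keep_fractions):
        # Layer-wise decision: how many channels survive (at least one).
        n_keep = max(1, round(n_channels * fraction))
        # Channel-wise decision: which channels survive, chosen at random.
        kept = set(rng.sample(range(n_channels), n_keep))
        masks.append([i in kept for i in range(n_channels)])
    return masks


masks = random_channel_masks([8, 16], [0.5, 0.25])
print([sum(m) for m in masks])
```

In this sketch a transferable profile corresponds to the `keep_fractions` list alone, which is why it could in principle be reused across datasets for the same architecture while the random channel choice is redrawn.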
