Ambuj Arora

Human Body Measurement Estimation with Adversarial Augmentation

Oct 11, 2022

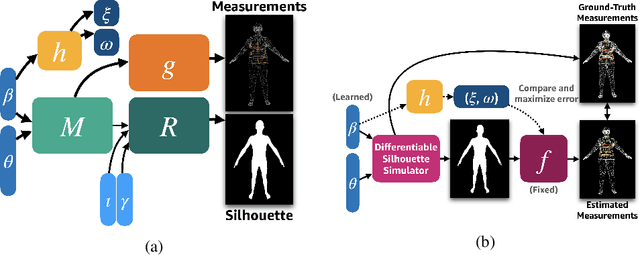

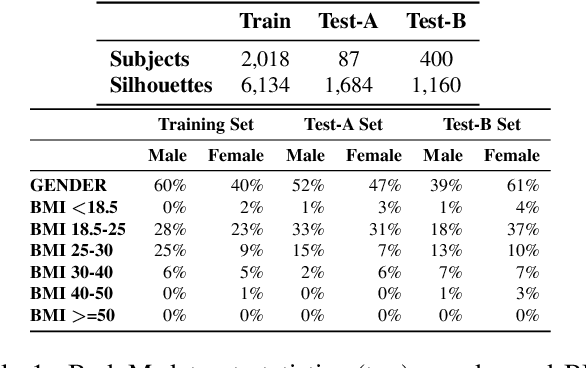

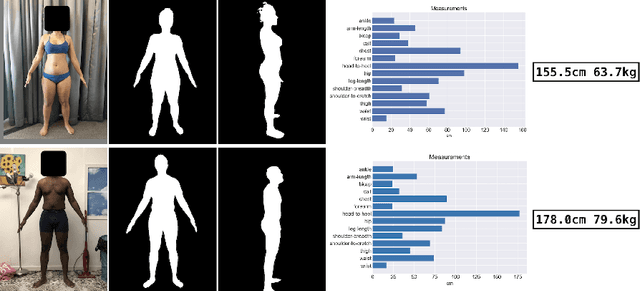

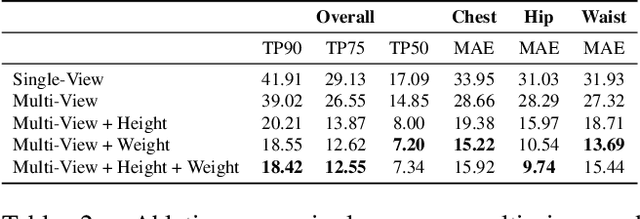

Abstract: We present a Body Measurement network (BMnet) for estimating 3D anthropometric measurements of the human body shape from silhouette images. BMnet is trained on data from real human subjects, augmented with a novel adversarial body simulator (ABS) that finds and synthesizes challenging body shapes. ABS is based on the Skinned Multi-Person Linear (SMPL) body model and aims to maximize BMnet's measurement prediction error with respect to the latent SMPL shape parameters. ABS is fully differentiable with respect to these parameters and is trained end-to-end via backpropagation with BMnet in the loop. Experiments show that ABS effectively discovers adversarial examples, such as bodies with extreme body mass indices (BMI), consistent with the rarity of extreme-BMI bodies in BMnet's training set. Thus, ABS is able to reveal gaps in training data and potential failures in predicting under-represented body shapes. Results show that training BMnet with ABS improves measurement prediction accuracy on real bodies by up to 10% compared to no augmentation or random body shape sampling. Furthermore, our method significantly outperforms state-of-the-art (SOTA) measurement estimation methods by as much as 3x. Finally, we release BodyM, the first challenging, large-scale dataset of photo silhouettes and body measurements of real human subjects, to further promote research in this area. Project website: https://adversarialbodysim.github.io
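The core idea behind ABS, ascending the measurement prediction error with respect to latent shape parameters, can be illustrated with a toy sketch. Everything here is an assumption for illustration only: a hypothetical linear map `A β + b` stands in for the SMPL-derived ground-truth measurements, a hypothetical linear predictor `W β + c` stands in for BMnet applied to a rendered silhouette, and the shape parameters are clipped to a hypothetical box `[-bound, bound]` to keep bodies plausible. It is not the authors' implementation, which uses SMPL and a learned simulator.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v."""
    return [sum(x * y for x, y in zip(row, v)) for row in m]


def residual(betas: Vector, a: Matrix, b: Vector, w: Matrix, c: Vector) -> Vector:
    """Prediction minus ground truth: (W beta + c) - (A beta + b)."""
    pred = matvec(w, betas)
    true = matvec(a, betas)
    return [(p + ci) - (t + bi) for p, ci, t, bi in zip(pred, c, true, b)]


def error(betas: Vector, a: Matrix, b: Vector, w: Matrix, c: Vector) -> float:
    """Squared measurement error that the toy adversary tries to maximize."""
    return sum(r * r for r in residual(betas, a, b, w, c))


def adversarial_search(betas: Vector, a: Matrix, b: Vector, w: Matrix, c: Vector,
                       lr: float = 0.1, steps: int = 100, bound: float = 3.0) -> Vector:
    """Projected gradient ascent on the error with respect to the shape parameters."""
    betas = list(betas)
    for _ in range(steps):
        r = residual(betas, a, b, w, c)
        # dE/dbeta_j = 2 * sum_i r_i * (W_ij - A_ij)
        grad = [2 * sum(r[i] * (w[i][j] - a[i][j]) for i in range(len(r)))
                for j in range(len(betas))]
        # Step uphill, then clip each parameter back into [-bound, bound].
        betas = [max(-bound, min(bound, bj + lr * gj)) for bj, gj in zip(betas, grad)]
    return betas


if __name__ == "__main__":
    A = [[1.0, 0.0], [0.0, 1.0]]  # toy "true" measurements equal the betas
    W = [[0.5, 0.0], [0.0, 1.0]]  # toy predictor halves the first measurement
    zero = [0.0, 0.0]
    start = [0.1, 0.2]
    found = adversarial_search(start, A, zero, W, zero)
    print(found, error(start, A, zero, W, zero), error(found, A, zero, W, zero))
```

With a predictor that underestimates only the first measurement, the search drives the first shape parameter to the boundary (3.0) and leaves the second at 0.2, a small analogue of ABS drifting toward extreme, under-represented body shapes.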

Comparing radiologists' gaze and saliency maps generated by interpretability methods for chest x-rays

Dec 22, 2021

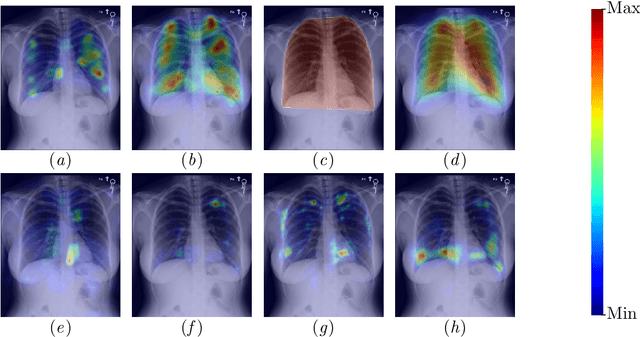

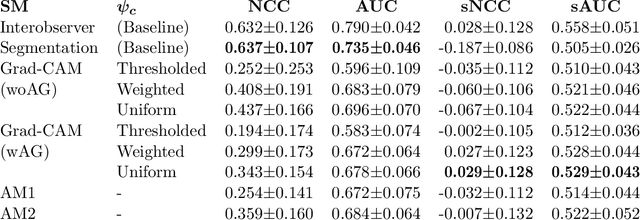

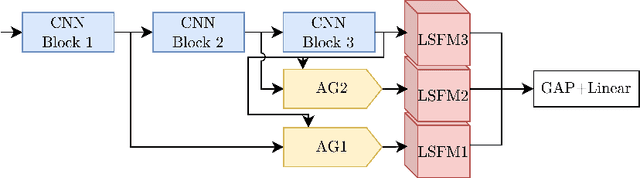

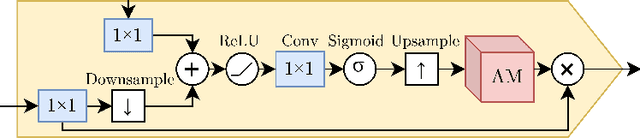

Abstract: The interpretability of medical image analysis models is considered a key research field. We use a dataset of eye-tracking data from five radiologists to compare the outputs of interpretability methods against heatmaps representing where the radiologists looked. We conduct a class-independent analysis of the saliency maps generated by two methods selected from the literature: Grad-CAM and attention maps from an attention-gated model. For the comparison, we use shuffled metrics, which avoid biases from fixation locations. We achieve scores comparable to an interobserver baseline in one shuffled metric, highlighting the potential of Grad-CAM saliency maps to mimic a radiologist's attention over an image. We also divide the dataset into subsets to evaluate in which cases similarities are higher.
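The abstract does not name the shuffled metrics it uses; shuffled AUC is one common example and is sketched here under that assumption. Negatives are saliency values at fixation locations drawn from other images rather than uniformly random pixels, so a map that only reproduces a shared center bias scores near chance. The pure-Python pairwise AUC, the (row, col) grid representation, and the function names are illustrative assumptions, not the paper's code.

```python
from typing import List, Sequence, Tuple

Grid = List[List[float]]
Point = Tuple[int, int]  # (row, col) pixel coordinates


def auc(pos: Sequence[float], neg: Sequence[float]) -> float:
    """Probability that a random positive score beats a random negative one (ties count 0.5)."""
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def shuffled_auc(saliency: Grid, fixations: Sequence[Point],
                 other_fixations: Sequence[Point]) -> float:
    """Positives: saliency at this image's fixations.
    Negatives: saliency at fixation locations taken from other images."""
    pos = [saliency[r][c] for r, c in fixations]
    neg = [saliency[r][c] for r, c in other_fixations]
    return auc(pos, neg)


if __name__ == "__main__":
    # Center-biased map; fixations on this and other images are both central.
    center = [[0.0, 0.1, 0.0], [0.1, 0.9, 0.1], [0.0, 0.1, 0.0]]
    print(shuffled_auc(center, [(1, 1), (0, 1)], [(1, 1), (2, 1)]))  # 0.5
    # Map peaked where only this reader looked.
    peaked = [[0.8, 0.0, 0.0], [0.0, 0.2, 0.0], [0.0, 0.0, 0.0]]
    print(shuffled_auc(peaked, [(0, 0)], [(1, 1), (2, 2)]))  # 1.0
```

Drawing negatives from other images' fixations is what cancels the location bias: the center-biased map gains nothing from fixating the center, while a map that is specific to this image's fixations is rewarded.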
