Amal Farag

Spatial Aggregation of Holistically-Nested Convolutional Neural Networks for Automated Pancreas Localization and Segmentation

Jan 31, 2017

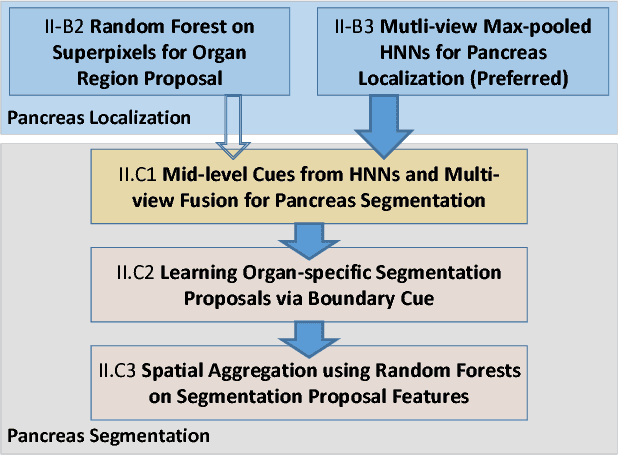

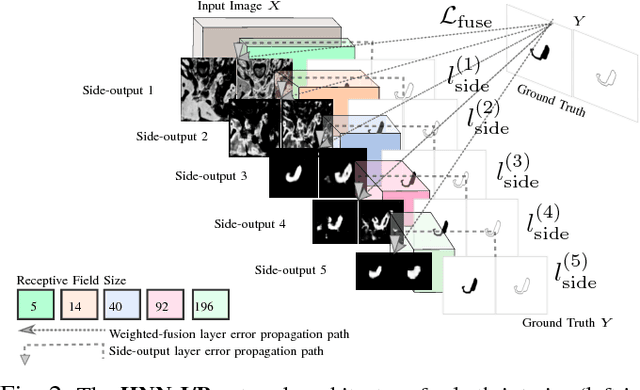

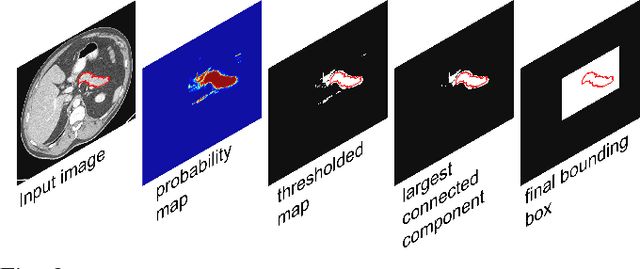

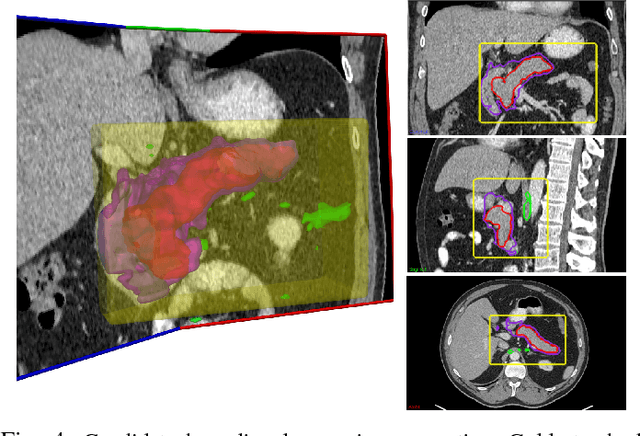

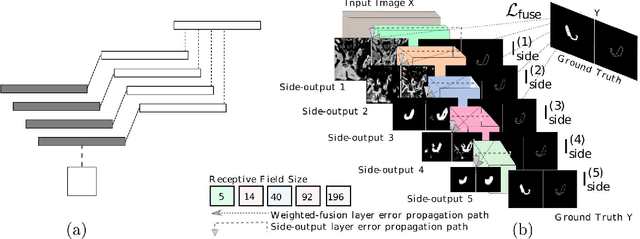

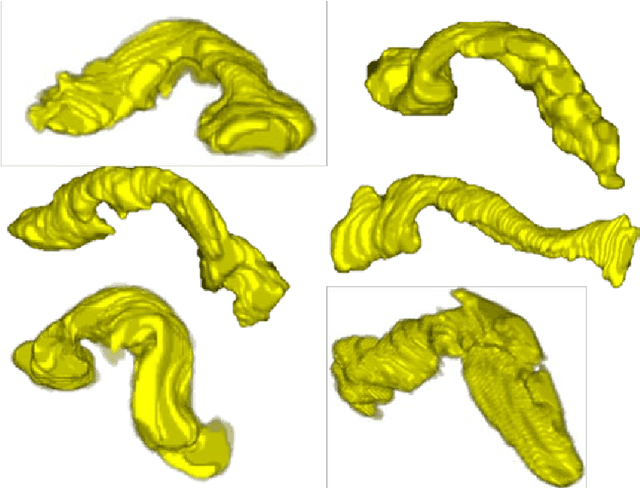

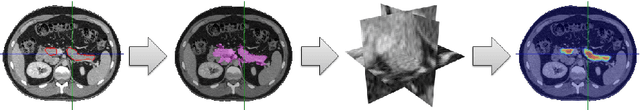

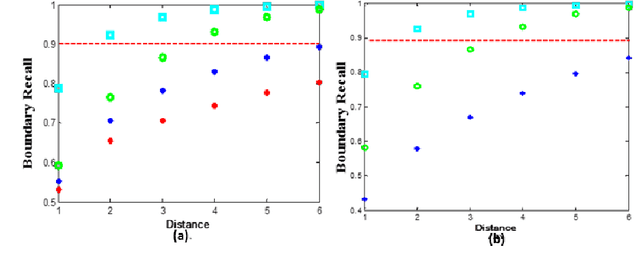

Abstract: Accurate and automatic organ segmentation from 3D radiological scans is an important yet challenging problem for medical image analysis. Specifically, the pancreas demonstrates very high inter-patient anatomical variability in both its shape and volume. In this paper, we present an automated system using 3D computed tomography (CT) volumes via a two-stage cascaded approach: pancreas localization and segmentation. For the first step, we localize the pancreas from the entire 3D CT scan, providing a reliable bounding box for the more refined segmentation step. We introduce a fully deep-learning approach, based on an efficient application of holistically-nested convolutional networks (HNNs) on the three orthogonal axial, sagittal, and coronal views. The resulting HNN per-pixel probability maps are then fused using pooling to reliably produce a 3D bounding box of the pancreas that maximizes the recall. We show that our introduced localizer compares favorably to both a conventional non-deep-learning method and a recent hybrid approach based on spatial aggregation of superpixels using random forest classification. The second, segmentation, phase operates within the computed bounding box and integrates semantic mid-level cues of deeply-learned organ interior and boundary maps, obtained by two additional and separate realizations of HNNs. By integrating these two mid-level cues, our method is capable of generating boundary-preserving pixel-wise class label maps that result in the final pancreas segmentation. Quantitative evaluation is performed on a publicly available dataset of 82 patient CT scans using 4-fold cross-validation (CV). We achieve a Dice similarity coefficient (DSC) of 81.27+/-6.27% in validation, which significantly outperforms previous state-of-the-art methods that report DSCs of 71.80+/-10.70% and 78.01+/-8.20%, respectively, using the same dataset.
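The fusion and localization step described in this abstract can be illustrated with a minimal sketch. It assumes the three per-view probability maps have already been stacked into volumes on the same voxel grid. The abstract only says the maps are "fused using pooling", so the choice between max and mean pooling, the `threshold` value, and the `margin` parameter are all hypothetical, as are the function names.

```python
import numpy as np


def fuse_views(p_axial, p_sagittal, p_coronal, mode="max"):
    """Fuse three per-voxel probability volumes of identical shape.

    The pooling mode is an assumption; the abstract does not specify it.
    """
    stacked = np.stack([p_axial, p_sagittal, p_coronal], axis=0)
    if mode == "max":
        return stacked.max(axis=0)
    if mode == "mean":
        return stacked.mean(axis=0)
    raise ValueError("mode must be 'max' or 'mean'")


def bounding_box(prob, threshold=0.5, margin=0):
    """Return a tuple of slices enclosing all voxels with prob >= threshold.

    Returns None when no voxel passes the threshold. The box is padded by
    `margin` voxels on every side and clipped to the volume extent.
    """
    mask = prob >= threshold
    if not mask.any():
        return None
    coords = np.argwhere(mask)
    lo = np.maximum(coords.min(axis=0) - margin, 0)
    hi = np.minimum(coords.max(axis=0) + margin + 1, prob.shape)
    return tuple(slice(int(a), int(b)) for a, b in zip(lo, hi))
```

In this sketch, max pooling with a low threshold or a larger margin tends to enlarge the box, which favors recall at the cost of a looser crop for the segmentation stage.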

Spatial Aggregation of Holistically-Nested Networks for Automated Pancreas Segmentation

Jun 24, 2016

Abstract: Accurate automatic organ segmentation is an important yet challenging problem for medical image analysis. The pancreas is an abdominal organ with very high anatomical variability. This inhibits traditional segmentation methods from achieving high accuracies, especially compared to other organs such as the liver, heart or kidneys. In this paper, we present a holistic learning approach that integrates semantic mid-level cues of deeply-learned organ interior and boundary maps via robust spatial aggregation using random forest. Our method generates boundary-preserving pixel-wise class labels for pancreas segmentation. Quantitative evaluation is performed on CT scans of 82 patients in 4-fold cross-validation. We achieve a (mean $\pm$ std. dev.) Dice Similarity Coefficient of 78.01% $\pm$ 8.2% in testing, which significantly outperforms the previous state-of-the-art approach of 71.8% $\pm$ 10.7% under the same evaluation criterion.

A Bottom-up Approach for Pancreas Segmentation using Cascaded Superpixels and (Deep) Image Patch Labeling

Mar 07, 2016

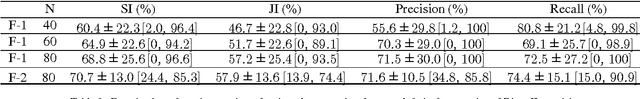

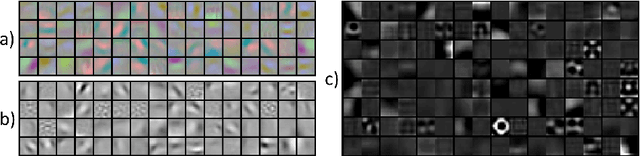

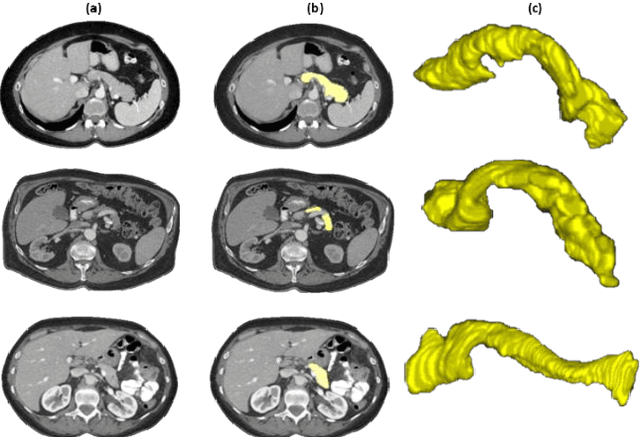

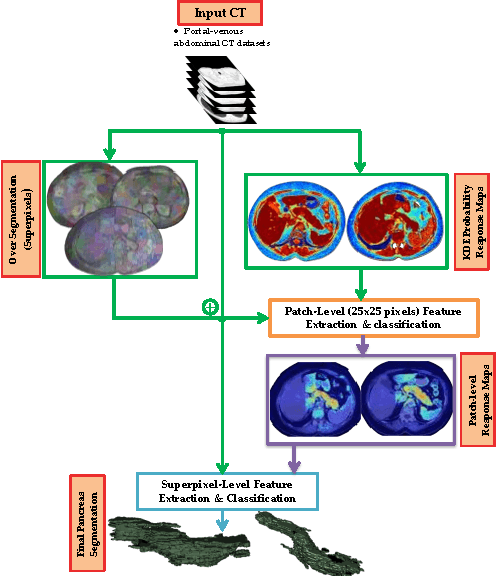

Abstract: Robust automated organ segmentation is a prerequisite for computer-aided diagnosis (CAD), quantitative imaging analysis and surgical assistance. For high-variability organs such as the pancreas, previous approaches report undesirably low accuracies. We present a bottom-up approach for pancreas segmentation in abdominal CT scans that is based on a hierarchy of information propagation by classifying image patches at different resolutions and cascading superpixels. There are four stages: 1) decomposing CT slice images into a set of disjoint boundary-preserving superpixels; 2) computing pancreas class probability maps via dense patch labeling; 3) classifying superpixels by pooling both intensity and probability features to form empirical statistics in cascaded random forest frameworks; and 4) simple connectivity-based post-processing. Dense image patch labeling is conducted in two ways: by an efficient random forest classifier on image histogram, location and texture features; and by a more expensive (but more specific) deep convolutional neural network classifier on larger image windows (with more spatial context). Evaluation of the approach is performed on a database of 80 manually segmented CT volumes in six-fold cross-validation (CV). Our results are comparable to, or better than, the state-of-the-art methods (evaluated by "leave-one-patient-out"), with Dice 70.7% and Jaccard 57.9%. Computational efficiency is drastically improved, to the order of 6~8 minutes per case, compared with ~10 hours per case for other methods. Finally, we implement a multi-atlas label fusion (MALF) approach for pancreas segmentation using the same datasets. Under six-fold CV, our bottom-up segmentation method significantly outperforms its MALF counterpart: (70.7 +/- 13.0%) versus (52.5 +/- 20.8%) in Dice. Deep CNN patch labeling confidences offer more numerical stability, reflected by smaller standard deviations.
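Stage 3 above (pooling intensity and probability values inside each superpixel into empirical statistics) might look roughly like the following sketch. The abstract does not list which statistics are used, so the particular set here (mean, standard deviation, minimum, maximum and three percentiles) is an assumption, and `superpixel_features` is a hypothetical helper name. The resulting vectors would then be passed to a classifier such as a random forest, which is not shown.

```python
import numpy as np


def superpixel_features(labels, intensity, prob, percentiles=(25, 50, 75)):
    """Pool per-pixel values into one feature vector per superpixel.

    labels    : integer array, one superpixel id per pixel
    intensity : CT intensities, same shape as labels
    prob      : patch-level pancreas probabilities, same shape as labels
    Returns a dict {superpixel_id: 1-D feature array}. For each of the two
    channels the vector holds mean, std, min, max, then the percentiles.
    """
    features = {}
    for sp in np.unique(labels):
        inside = labels == sp
        row = []
        for channel in (intensity, prob):
            vals = channel[inside]
            row += [vals.mean(), vals.std(), vals.min(), vals.max()]
            row += list(np.percentile(vals, percentiles))
        features[int(sp)] = np.array(row, dtype=float)
    return features
```

With the default percentiles, each superpixel yields a 14-element vector: seven intensity statistics followed by seven probability statistics.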

DeepOrgan: Multi-level Deep Convolutional Networks for Automated Pancreas Segmentation

Jun 22, 2015

Abstract: Automatic organ segmentation is an important yet challenging problem for medical image analysis. The pancreas is an abdominal organ with very high anatomical variability. This inhibits previous segmentation methods from achieving high accuracies, especially compared to other organs such as the liver, heart or kidneys. In this paper, we present a probabilistic bottom-up approach for pancreas segmentation in abdominal computed tomography (CT) scans, using multi-level deep convolutional networks (ConvNets). We propose and evaluate several variations of deep ConvNets in the context of hierarchical, coarse-to-fine classification on image patches and regions, i.e. superpixels. We first present a dense labeling of local image patches via $P{-}\mathrm{ConvNet}$ and nearest neighbor fusion. Then we describe a regional ConvNet ($R_1{-}\mathrm{ConvNet}$) that samples a set of bounding boxes around each image superpixel at different scales of context in a "zoom-out" fashion. Our ConvNets learn to assign each superpixel region a class probability of being pancreas. Last, we study a stacked $R_2{-}\mathrm{ConvNet}$ leveraging the joint space of CT intensities and the $P{-}\mathrm{ConvNet}$ dense probability maps. Both 3D Gaussian smoothing and 2D conditional random fields are exploited as structured predictions for post-processing. We evaluate on CT images of 82 patients in 4-fold cross-validation. We achieve a Dice Similarity Coefficient of 83.6$\pm$6.3% in training and 71.8$\pm$10.7% in testing.
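The "zoom-out" sampling of bounding boxes around a superpixel can be sketched as follows. This is an illustration only: the scale factors, the choice to enlarge the tight box about its center, and the function name `zoom_out_boxes` are assumptions, not details taken from the abstract.

```python
import numpy as np


def zoom_out_boxes(mask, scales=(1.0, 1.5, 2.0)):
    """Return one (top, bottom, left, right) box per scale for a superpixel.

    mask   : 2-D boolean array that is True inside the superpixel
    scales : enlargement factors applied to the tight bounding box
    Boxes use half-open ranges and are clipped to the image extent.
    """
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        return []
    r0, r1 = rows.min(), rows.max() + 1
    c0, c1 = cols.min(), cols.max() + 1
    center_r, center_c = (r0 + r1) / 2.0, (c0 + c1) / 2.0
    boxes = []
    for s in scales:
        half_h = s * (r1 - r0) / 2.0
        half_w = s * (c1 - c0) / 2.0
        top = max(int(np.floor(center_r - half_h)), 0)
        bottom = min(int(np.ceil(center_r + half_h)), mask.shape[0])
        left = max(int(np.floor(center_c - half_w)), 0)
        right = min(int(np.ceil(center_c + half_w)), mask.shape[1])
        boxes.append((top, bottom, left, right))
    return boxes
```

Each box would typically be cropped from the CT slice and resized to a fixed input size before being passed to a network, so that larger scales contribute more surrounding context at lower resolution.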

Deep convolutional networks for pancreas segmentation in CT imaging

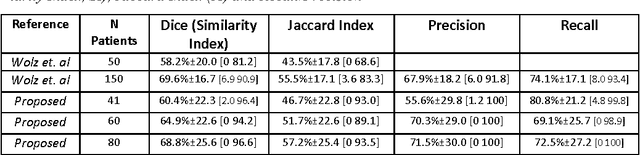

Apr 15, 2015

Abstract: Automatic organ segmentation is an important prerequisite for many computer-aided diagnosis systems. The high anatomical variability of organs in the abdomen, such as the pancreas, prevents many segmentation methods from achieving accuracies as high as those reported for other organs such as the liver, heart or kidneys. Recently, the availability of large annotated training sets and the accessibility of affordable parallel computing resources via GPUs have made it feasible for "deep learning" methods such as convolutional networks (ConvNets) to succeed in image classification tasks. These methods have the advantage that the classification features are learned directly from the imaging data. We present a fully-automated bottom-up method for pancreas segmentation in computed tomography (CT) images of the abdomen. The method is based on hierarchical coarse-to-fine classification of local image regions (superpixels). Superpixels are extracted from the abdominal region using Simple Linear Iterative Clustering (SLIC). An initial probability response map is generated, using patch-level confidences and a two-level cascade of random forest classifiers, from which superpixel regions with probabilities larger than 0.5 are retained. These retained superpixels serve as a highly sensitive initial input of the pancreas and its surroundings to a ConvNet that samples a bounding box around each superpixel at different scales (and random non-rigid deformations at training time) in order to assign a more distinct probability of each superpixel region being pancreas or not. We evaluate our method on CT images of 82 patients (60 for training, 2 for validation, and 20 for testing). Using ConvNets we achieve average Dice scores of 68% +/- 10% (range, 43-80%) in testing. This shows promise for accurate pancreas segmentation using a deep learning approach, and compares favorably to state-of-the-art methods.

* SPIE Medical Imaging conference, Orlando, FL, USA: SPIE Proceedings | Volume 9413 | Classification

A Bottom-Up Approach for Automatic Pancreas Segmentation in Abdominal CT Scans

Jul 31, 2014

Abstract: Organ segmentation is a prerequisite for a computer-aided diagnosis (CAD) system to detect pathologies and perform quantitative analysis. For anatomically high-variability abdominal organs such as the pancreas, previous segmentation works report low accuracies compared to organs like the heart or liver. In this paper, a fully-automated bottom-up method is presented for pancreas segmentation, using abdominal computed tomography (CT) scans. The method is based on a hierarchical two-tiered information propagation by classifying image patches. It labels superpixels as pancreas or not via pooling patch-level confidences on 2D CT slices over-segmented by the Simple Linear Iterative Clustering approach. A supervised random forest (RF) classifier is trained on the patch level and a two-level cascade of RFs is applied at the superpixel level, coupled with multi-channel feature extraction, respectively. On six-fold cross-validation using 80 patient CT volumes, we achieved 68.8% Dice coefficient and 57.2% Jaccard Index, comparable to or slightly better than published state-of-the-art methods.
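For reference, the two overlap metrics reported throughout these abstracts can be computed from a pair of binary masks as in the sketch below. The function name and the convention of returning 1.0 when both masks are empty are assumptions made for illustration.

```python
import numpy as np


def dice_and_jaccard(pred, truth):
    """Return (Dice, Jaccard) for two binary masks of the same shape.

    Dice    = 2 |A and B| / (|A| + |B|)
    Jaccard = |A and B| / |A or B|
    Two empty masks are treated as a perfect match (an assumed convention).
    """
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    total = pred.sum() + truth.sum()
    if total == 0:
        return 1.0, 1.0
    intersection = np.logical_and(pred, truth).sum()
    union = np.logical_or(pred, truth).sum()
    return 2.0 * intersection / total, intersection / union
```

For a single case the two are linked by Jaccard = Dice / (2 - Dice). That identity does not carry over to averages across patients, which is why a mean Dice and a mean Jaccard reported for the same experiment need not satisfy it.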
