Alice Allen

Flexible Moment-Invariant Bases from Irreducible Tensors

Mar 27, 2025

Abstract: Moment invariants are a powerful tool for the generation of rotation-invariant descriptors needed for many applications in pattern detection, classification, and machine learning. A set of invariants is optimal if it is complete, independent, and robust against degeneracy in the input. In this paper, we show that the current state of the art for the generation of these bases of moment invariants, despite being robust against moment tensors being identically zero, is vulnerable to a degeneracy that is common in real-world applications, namely spherical functions. We show how to overcome this vulnerability by combining two popular moment invariant approaches: one based on spherical harmonics and one based on Cartesian tensor algebra.

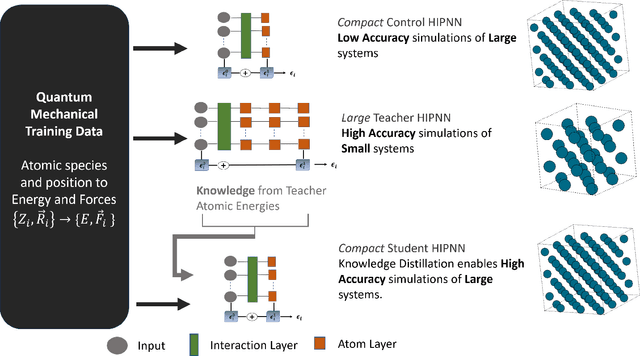

Teacher-student training improves accuracy and efficiency of machine learning inter-atomic potentials

Feb 07, 2025

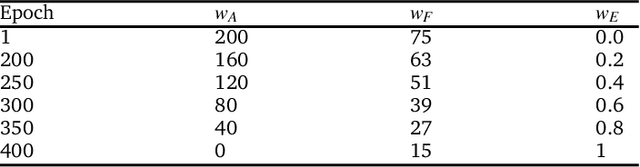

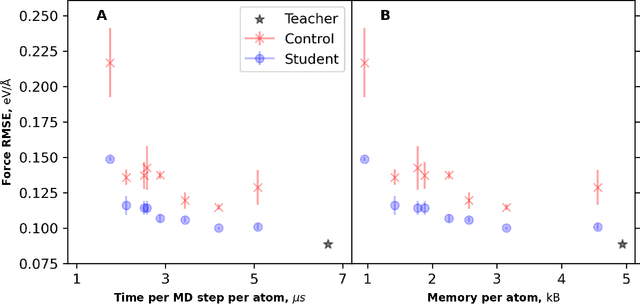

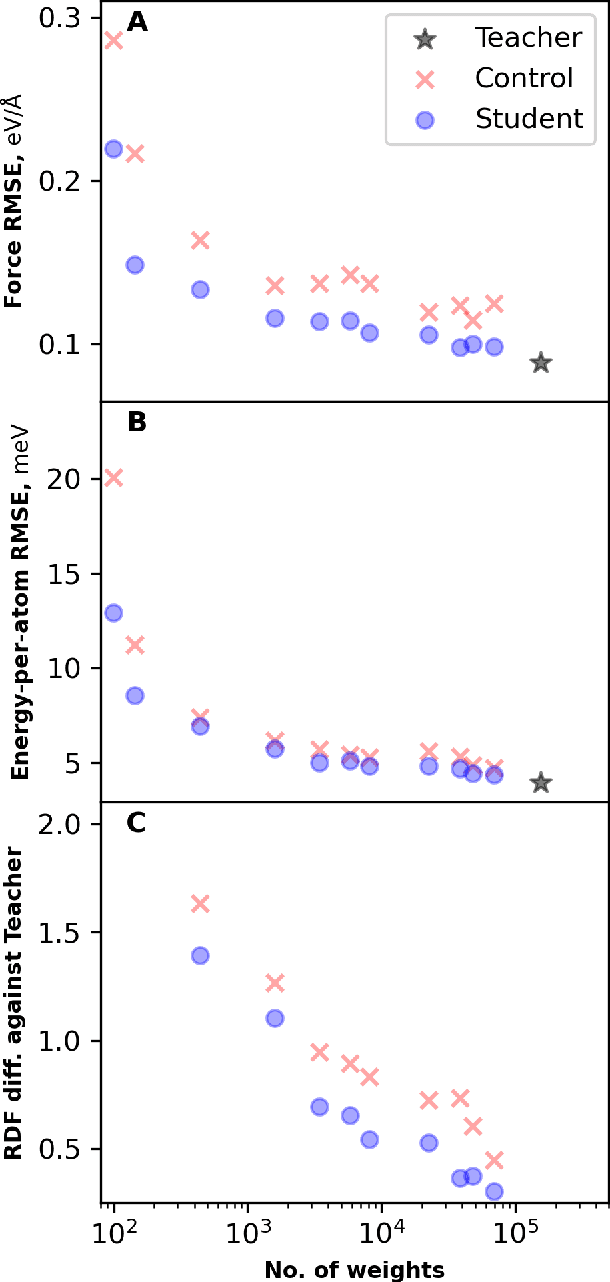

Abstract: Machine learning inter-atomic potentials (MLIPs) are revolutionizing the field of molecular dynamics (MD) simulations. Recent MLIPs have tended towards more complex architectures trained on larger datasets. The resulting increase in computational and memory costs may prohibit the application of these MLIPs to perform large-scale MD simulations. Here, we present a teacher-student training framework in which the latent knowledge from the teacher (atomic energies) is used to augment the students' training. We show that the light-weight student MLIPs have faster MD speeds at a fraction of the memory footprint compared to the teacher models. Remarkably, the student models can even surpass the accuracy of the teachers, even though both are trained on the same quantum chemistry dataset. Our work highlights a practical method for MLIPs to reduce the resources required for large-scale MD simulations.
