Alexander Chowdhury

MedPerf: Open Benchmarking Platform for Medical Artificial Intelligence using Federated Evaluation

Oct 08, 2021

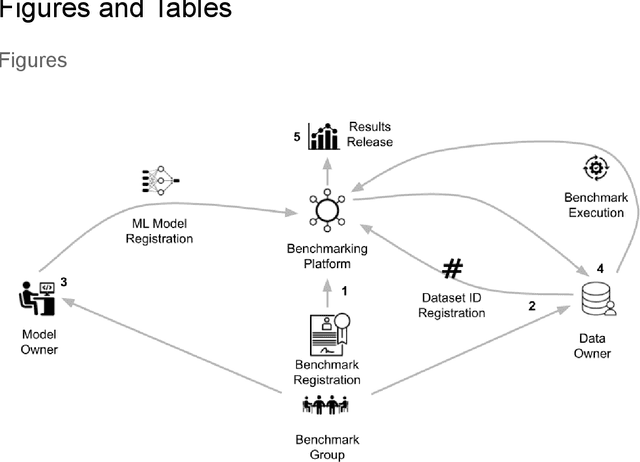

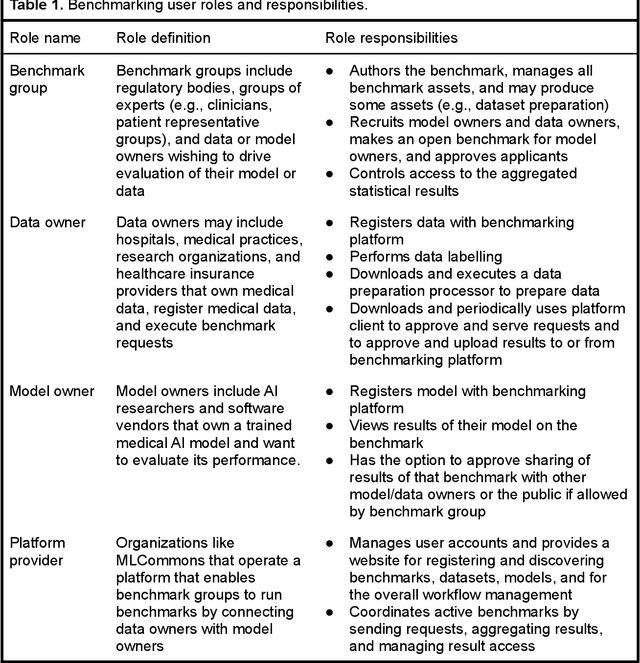

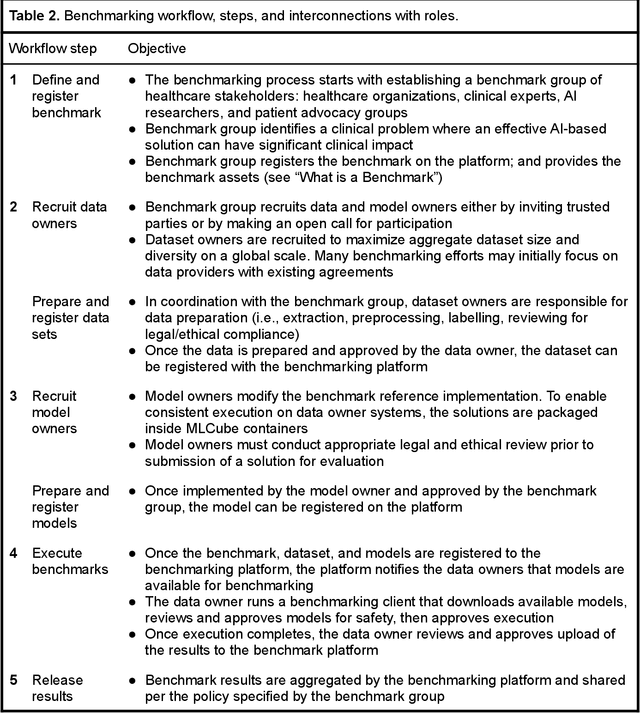

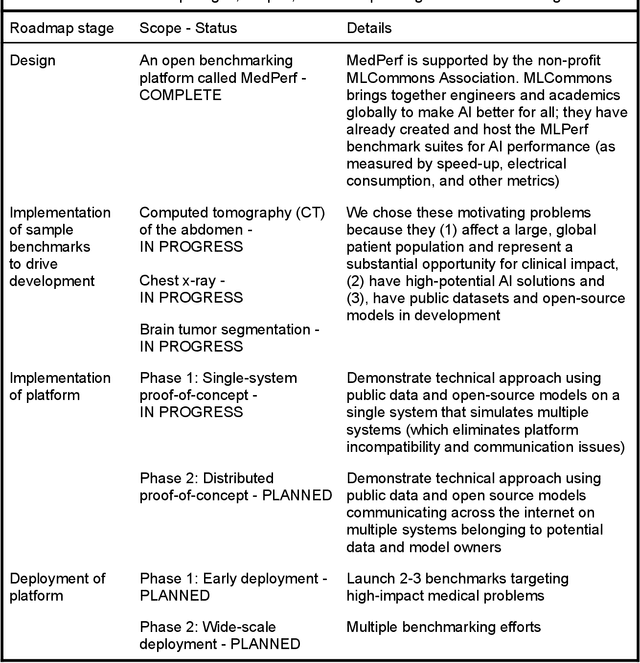

Abstract: Medical AI has tremendous potential to advance healthcare by supporting the evidence-based practice of medicine, personalizing patient treatment, reducing costs, and improving provider and patient experience. We argue that unlocking this potential requires a systematic way to measure the performance of medical AI models on large-scale heterogeneous data. To meet this need, we are building MedPerf, an open framework for benchmarking machine learning in the medical domain. MedPerf will enable federated evaluation in which models are securely distributed to different facilities for evaluation, thereby empowering healthcare organizations to assess and verify the performance of AI models in an efficient and human-supervised process, while prioritizing privacy. We describe the current challenges healthcare and AI communities face, the need for an open platform, the design philosophy of MedPerf, its current implementation status, and our roadmap. We call for researchers and organizations to join us in creating the MedPerf open benchmarking platform.

Deep Imitation Learning for Bimanual Robotic Manipulation

Oct 11, 2020

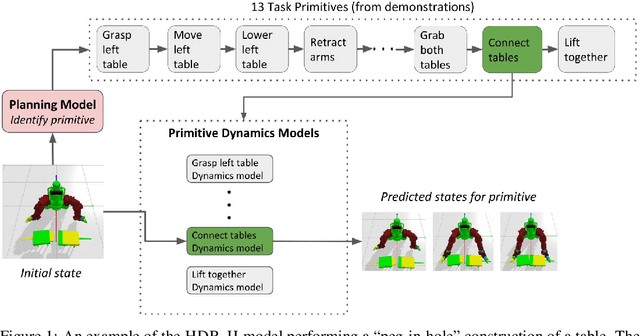

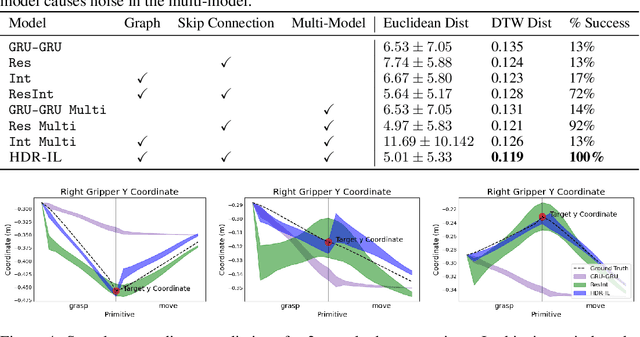

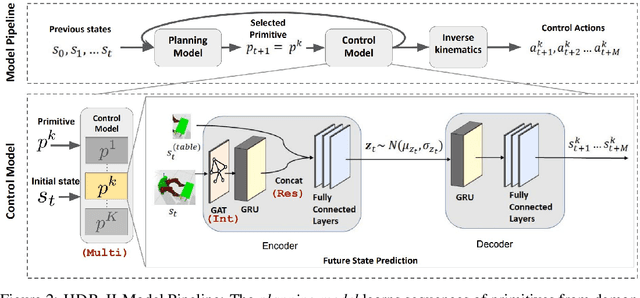

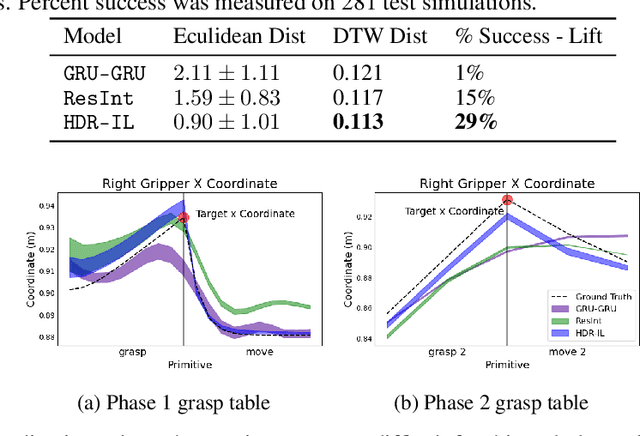

Abstract: We present a deep imitation learning framework for robotic bimanual manipulation in a continuous state-action space. Imitation learning has been effectively utilized in mimicking bimanual manipulation movements, but generalizing the movement to objects in different locations has not been explored. We hypothesize that precisely generalizing the learned behavior relative to an object's location requires modeling relational information in the environment. To achieve this, we designed a method that (i) uses a multi-model framework to decompose complex dynamics into elemental movement primitives, and (ii) parameterizes each primitive using a recurrent graph neural network to capture interactions. Our model is a deep, hierarchical, modular architecture with a high-level planner that learns to compose primitives sequentially and a low-level controller that integrates primitive dynamics modules and inverse kinematics control. We demonstrate its effectiveness using several simulated bimanual robotic manipulation tasks. Compared to models based on previous imitation learning studies, our model generalizes better and achieves higher success rates in the simulated tasks.
