Alberto Giammarino

Human-Robot Interfaces and Physical Interaction, Istituto Italiano di Tecnologia, Genoa, Italy

A Reinforcement Learning Approach for Robotic Unloading from Visual Observations

Sep 12, 2023

Abstract: In this work, we focus on a robotic unloading problem from visual observations, where robots are required to autonomously unload stacks of parcels using RGB-D images as their primary input source. While supervised and imitation learning have achieved good results in these types of tasks, they rely heavily on labeled data, which are challenging to obtain in realistic scenarios. Our study aims to develop a sample-efficient controller framework that can learn unloading tasks without the need for labeled data during the learning process. To tackle this challenge, we propose a hierarchical controller structure that combines a high-level decision-making module with classical motion control. The high-level module is trained using Deep Reinforcement Learning (DRL), wherein we incorporate a safety bias mechanism and design a reward function tailored to this task. Our experiments demonstrate that both of these elements play a crucial role in achieving improved learning performance. Furthermore, to ensure reproducibility and establish a benchmark for future research, we provide free access to our code and simulation.

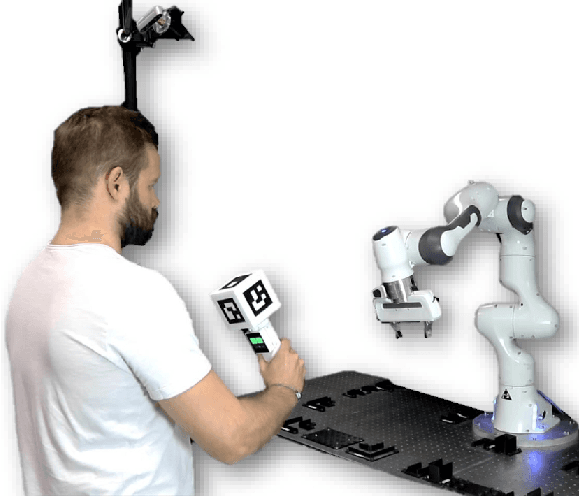

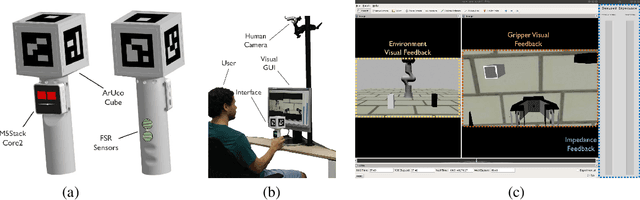

An Open Tele-Impedance Framework to Generate Large Datasets for Contact-Rich Tasks in Robotic Manipulation

Sep 21, 2022

Abstract: Using large datasets in machine learning has led to outstanding results, in some cases outperforming humans in tasks that were believed impossible for machines. However, achieving human-level performance when dealing with physically interactive tasks, e.g., in contact-rich robotic manipulation, is still a big challenge. It is well known that regulating the Cartesian impedance for such operations is of utmost importance for their successful execution. Approaches like Reinforcement Learning (RL) can be a promising paradigm for solving such problems. More precisely, approaches that use task-agnostic expert demonstrations to bootstrap learning when solving new tasks have huge potential, since they can exploit large datasets. However, existing data collection systems are expensive, complex, or do not allow for impedance regulation. This work represents a first step towards a data collection framework suitable for collecting large datasets of impedance-based expert demonstrations compatible with the RL problem formulation, where a novel action space is used. The framework is designed according to requirements acquired after an extensive analysis of available data collection frameworks for robotic manipulation. The result is a low-cost and open-access tele-impedance framework that enables human experts to demonstrate contact-rich tasks.

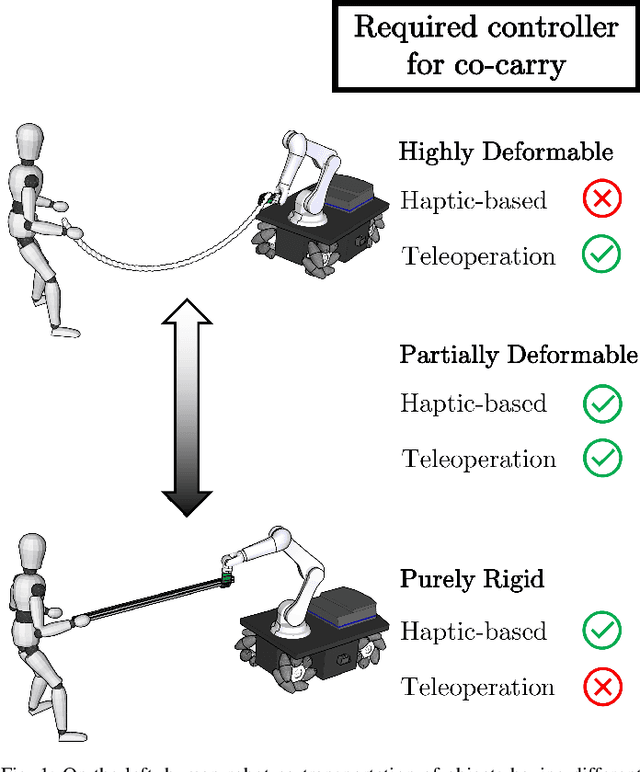

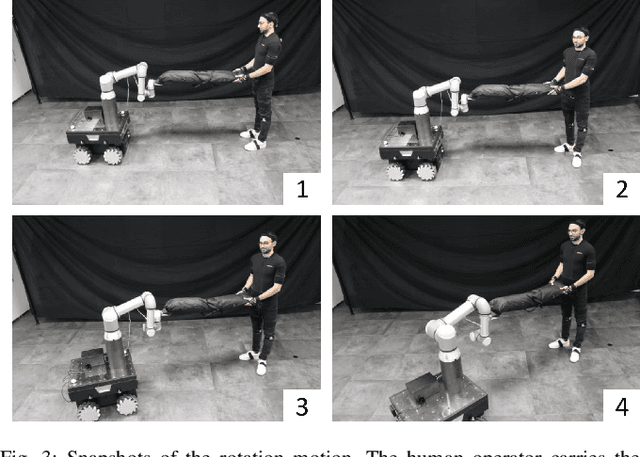

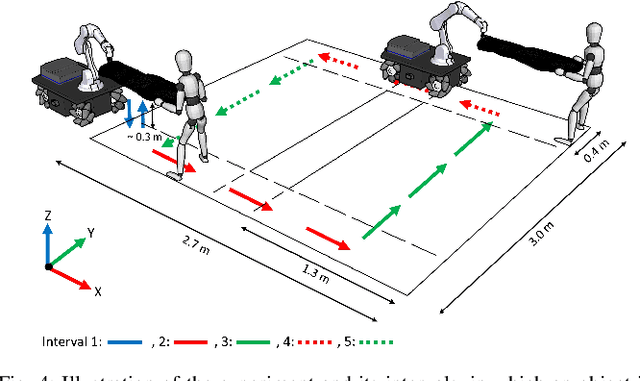

An Object Deformation-Agnostic Framework for Human-Robot Collaborative Transportation

Jul 27, 2022

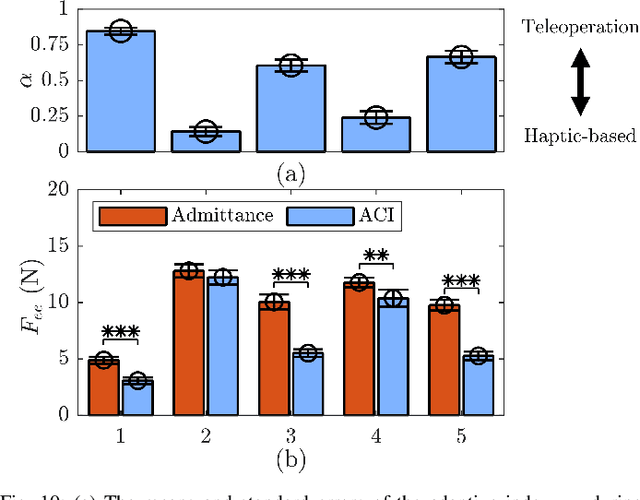

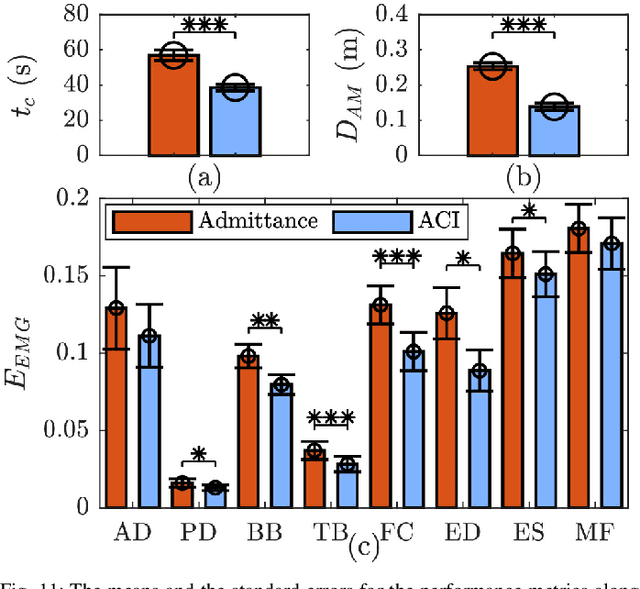

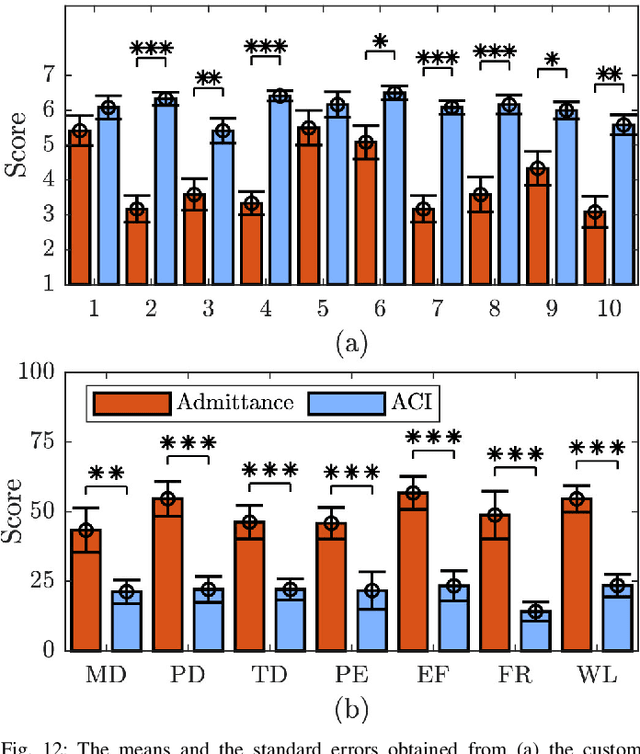

Abstract: In this study, an adaptive object deformability-agnostic human-robot collaborative transportation framework is presented. The proposed framework combines the haptic information transferred through the object with the human kinematic information obtained from a motion capture system to generate reactive whole-body motions on a mobile collaborative robot. Furthermore, it allows rotating the objects in an intuitive and accurate way during co-transportation, based on an algorithm that detects the human rotation intention using the torso and hand movements. First, we validate the framework with the two extremes of the object deformability range (i.e., a purely rigid aluminum rod and a highly deformable rope) by utilizing a mobile manipulator which consists of an omnidirectional mobile base and a collaborative robotic arm. Next, its performance is compared with an admittance controller during a co-carry task of a partially deformable object in a 12-subject user study. Quantitative and qualitative results of this experiment show that the proposed framework can effectively handle the transportation of objects regardless of their deformability and provides intuitive assistance to human partners. Finally, we demonstrate the potential of our framework in a different scenario, where the human and the robot co-transport a manikin using a deformable sheet.

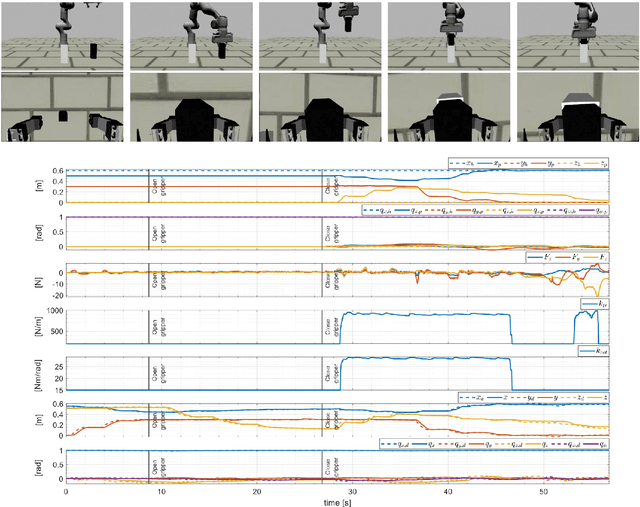

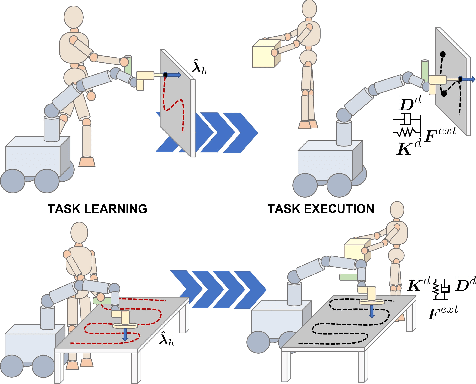

A Hybrid Learning and Optimization Framework to Achieve Physically Interactive Tasks with Mobile Manipulators

Mar 28, 2022

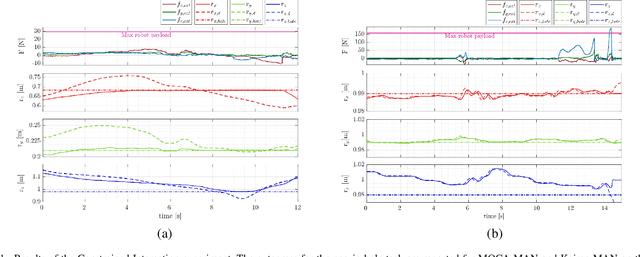

Abstract: This paper proposes a hybrid learning and optimization framework that allows mobile manipulators to perform complex and physically interactive tasks. The framework exploits the MOCA-MAN interface to obtain intuitive and simplified human demonstrations, and Gaussian Mixture Model/Gaussian Mixture Regression (GMM/GMR) to encode and generate the learned task requirements in terms of position, velocity, and force profiles. Next, using the desired trajectories and force profiles generated by GMM/GMR, the impedance parameters of a Cartesian impedance controller are optimized online through a Quadratic Program augmented with an energy tank to ensure the passivity of the controlled system. Two experiments are conducted to validate the framework, comparing our method with two constant-stiffness approaches (high and low). The results show that the proposed method outperforms the other two cases in terms of trajectory tracking and generated interaction forces, even in the presence of disturbances such as unexpected end-effector collisions.

Human-Robot Collaborative Carrying of Objects with Unknown Deformation Characteristics

Jan 25, 2022

Abstract: In this work, we introduce an adaptive control framework for human-robot collaborative transportation of objects with unknown deformation behaviour. The proposed framework takes as input the haptic information transmitted through the object and the kinematic information of the human body obtained from a motion capture system to create reactive whole-body motions on a mobile collaborative robot. Moreover, the designed framework delivers an intuitive way to rotate the object by processing the human torso and hand movements. In order to validate our framework experimentally, we compare its performance with an admittance controller during a co-transportation task of a partially deformable object. We additionally demonstrate the potential of the framework while co-transporting rigid (aluminum rod) and deformable (rope) objects. A mobile manipulator which consists of an omnidirectional mobile base, a collaborative robotic arm, and a robotic hand is used as the robotic partner in the experiments. Quantitative and qualitative results of a 12-subject experiment show that the proposed framework can effectively deal with objects of unknown deformability and provides intuitive assistance to human partners.

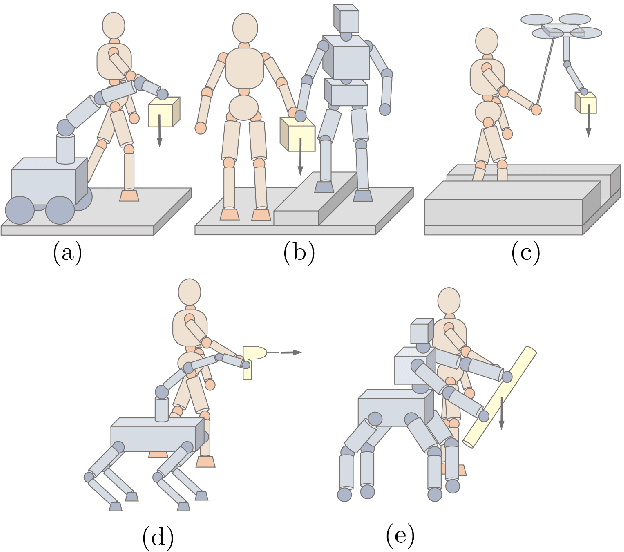

SUPER-MAN: SUPERnumerary Robotic Bodies for Physical Assistance in HuMAN-Robot Conjoined Actions

Jan 17, 2022

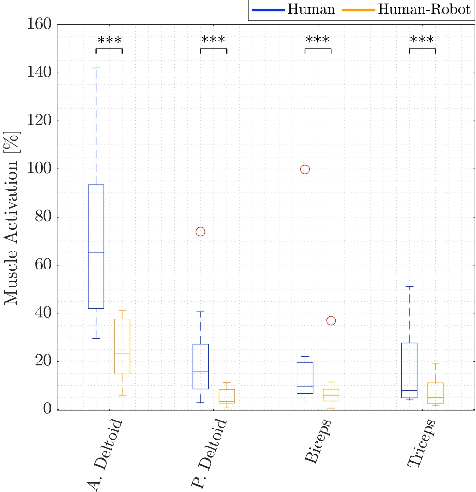

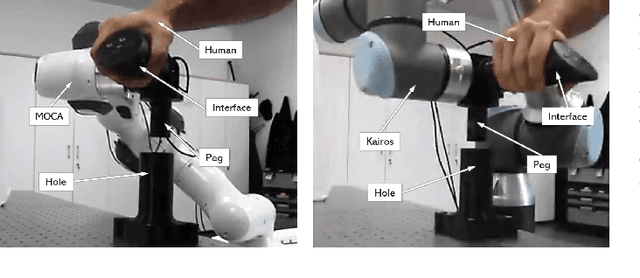

Abstract: This paper presents a mobile supernumerary robotic approach to physical assistance in human-robot conjoined actions. The study starts with the description of the SUPER-MAN concept. The idea is to develop and utilize mobile collaborative systems that can follow human loco-manipulation commands to perform industrial tasks through three main components: i) a physical interface, ii) a human-robot interaction controller, and iii) a supernumerary robotic body. Next, we present two possible implementations within the framework, from both theoretical and hardware perspectives. The first system is called MOCA-MAN, and is composed of a redundant torque-controlled robotic arm and an omnidirectional mobile platform. The second one is called Kairos-MAN, formed by a high-payload 6-DoF velocity-controlled robotic arm and an omnidirectional mobile platform. The systems share the same admittance interface, through which user wrenches are translated to loco-manipulation commands, generated by the whole-body controller of each system. In addition, a thorough user study with multiple subjects across genders is presented to reveal the quantitative performance of the two systems in effort-demanding and dexterous tasks. Moreover, we provide qualitative results from the NASA-TLX questionnaire to demonstrate the SUPER-MAN approach's potential and its acceptability from the users' viewpoint.
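As an illustration of how an admittance interface can translate user wrenches into motion commands, the following is a minimal sketch, not the authors' controller. It assumes a decoupled virtual mass-damper model, M dv/dt + D v = F, integrated with explicit Euler steps; the function name `admittance_step` and all numerical values are hypothetical.

```python
import numpy as np


def admittance_step(velocity, wrench, mass, damping, dt):
    """One explicit-Euler step of the virtual dynamics M * dv/dt + D * v = wrench.

    All arguments except dt are 6-element arrays (3 linear + 3 angular axes);
    mass and damping hold the diagonal entries of the virtual M and D matrices.
    """
    acceleration = (wrench - damping * velocity) / mass
    return velocity + acceleration * dt


# Hypothetical parameters chosen only for illustration.
mass = np.full(6, 2.0)       # virtual inertia per axis
damping = np.full(6, 10.0)   # virtual damping per axis
dt = 0.01                    # control period in seconds

# A constant push of 5 N along x and no torque.
wrench = np.array([5.0, 0.0, 0.0, 0.0, 0.0, 0.0])

velocity = np.zeros(6)
for _ in range(1000):
    velocity = admittance_step(velocity, wrench, mass, damping, dt)

# Under a constant wrench the commanded velocity settles at wrench / damping.
steady_state = wrench / damping
```

In this sketch, a lower damping value makes the robot feel lighter to the user, while a higher value makes it more resistant to being moved.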

Improving Standing Balance Performance through the Assistance of a Mobile Collaborative Robot

Sep 24, 2021

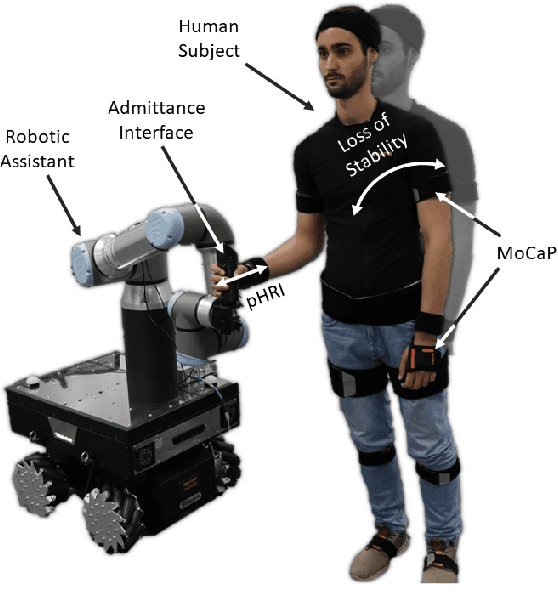

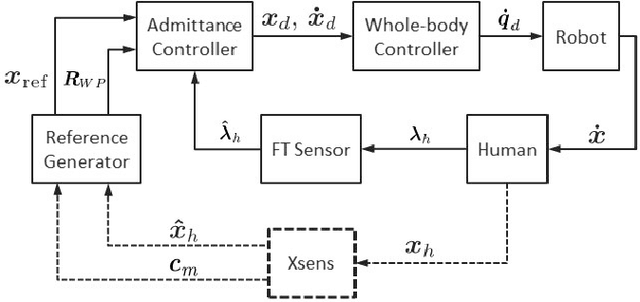

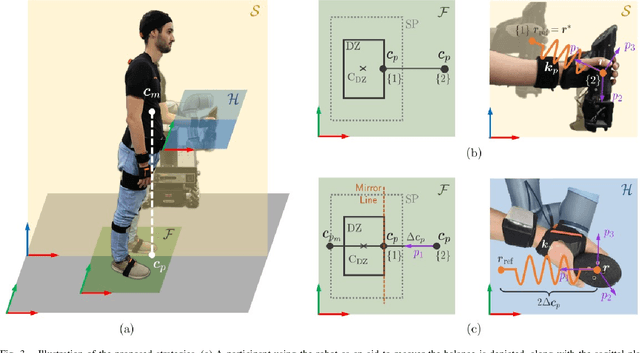

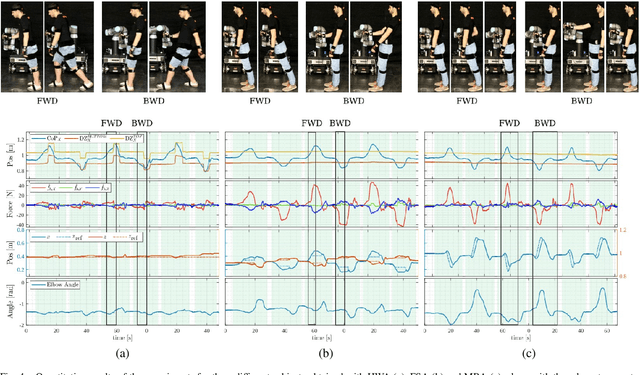

Abstract: This letter presents the design and development of a robotic system to give physical assistance to the elderly or people with neurological disorders such as ataxia or Parkinson's disease. In particular, we propose using a mobile collaborative robot with an interaction-assistive whole-body interface to help people unable to maintain balance. The robotic system consists of an omnidirectional mobile base, a high-payload robotic arm, and an admittance-type interface acting as a support handle while measuring human-sourced interaction forces. The postural balance of the human body is estimated through the projection of the body Center of Mass (CoM) to the support polygon (SP), representing the quasi-static Center of Pressure (CoP). In response to the interaction forces and the tracking of the human posture, the robot can create assistive forces to restore balance in case of its loss. Otherwise, during normal stance or walking, it will follow the user with minimal or no opposing forces through the generation of coupled arm and base movements. We propose two balance-restoring strategies and evaluate them in a laboratory setting on healthy human participants. Quantitative and qualitative results of a 12-subject experiment are then illustrated and discussed, comparing the performances of the two strategies and the overall system.
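The balance estimate described in this abstract, projecting the CoM onto the ground and comparing it with the support polygon, can be sketched as a point-in-polygon test. This is a simplified illustration rather than the authors' implementation: it assumes a convex support polygon with vertices listed counter-clockwise, and the function name and foot dimensions are hypothetical.

```python
import numpy as np


def com_inside_support_polygon(com_xy, polygon_xy):
    """Return True if the ground projection of the CoM lies inside the polygon.

    Assumes polygon_xy is convex with vertices in counter-clockwise order.
    The point is inside when it lies on the left of (or on) every edge.
    """
    polygon = np.asarray(polygon_xy, dtype=float)
    point = np.asarray(com_xy, dtype=float)
    edges = np.roll(polygon, -1, axis=0) - polygon  # vector from each vertex to the next
    to_point = point - polygon                      # vector from each vertex to the point
    cross = edges[:, 0] * to_point[:, 1] - edges[:, 1] * to_point[:, 0]
    return bool(np.all(cross >= 0.0))


# Hypothetical rectangular support polygon (metres), counter-clockwise.
support_polygon = [(0.0, 0.0), (0.3, 0.0), (0.3, 0.2), (0.0, 0.2)]

balanced = com_inside_support_polygon((0.15, 0.10), support_polygon)
unbalanced = com_inside_support_polygon((0.40, 0.10), support_polygon)
```

A practical system would likely add a safety margin around the polygon boundary so that assistance starts before the projection actually leaves the support area.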
