Alan Chen

Transferring Features Across Language Models With Model Stitching

Jun 07, 2025

Abstract:In this work, we demonstrate that affine mappings between residual streams of language models are a cheap way to effectively transfer represented features between models. We apply this technique to transfer the weights of Sparse Autoencoders (SAEs) between models of different sizes to compare their representations. We find that small and large models learn highly similar representation spaces, which motivates training expensive components like SAEs on a smaller model and transferring them to a larger model at a savings in FLOPs. For example, using a small-to-large transferred SAE as initialization can lead to 50% cheaper training runs when training SAEs on larger models. Next, we show that transferred probes and steering vectors can effectively recover ground truth performance. Finally, we dive deeper into feature-level transferability, finding that semantic and structural features transfer noticeably differently while specific classes of functional features have their roles faithfully mapped. Overall, our findings illustrate similarities and differences in the linear representation spaces of small and large models and demonstrate a method for improving the training efficiency of SAEs.
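To make the idea of an affine mapping between residual streams concrete, here is a minimal sketch. It is not the paper's training procedure, which the abstract does not specify: it assumes paired activations from a small and a large model are available as arrays, and it fits the map with ordinary least squares on synthetic data. The names `fit_affine_map` and `transfer_direction` are hypothetical.

```python
import numpy as np

def fit_affine_map(src_acts, dst_acts):
    """Least-squares fit of dst ≈ src @ W + b (an assumed fitting method)."""
    n = src_acts.shape[0]
    # Append a column of ones so the bias is learned jointly with W.
    X = np.hstack([src_acts, np.ones((n, 1))])
    sol, *_ = np.linalg.lstsq(X, dst_acts, rcond=None)
    return sol[:-1], sol[-1]  # W: (d_src, d_dst), b: (d_dst,)

def transfer_direction(direction, W):
    """Map a direction (e.g. a steering vector) into the target space.

    The bias is dropped because a direction is a difference of points.
    """
    return direction @ W

# Synthetic stand-ins for paired residual-stream activations.
rng = np.random.default_rng(0)
d_small, d_large, n = 8, 16, 512
src = rng.normal(size=(n, d_small))
true_W = rng.normal(size=(d_small, d_large))
true_b = rng.normal(size=d_large)
dst = src @ true_W + true_b + 0.01 * rng.normal(size=(n, d_large))

W, b = fit_affine_map(src, dst)
steer_small = rng.normal(size=d_small)       # hypothetical steering vector
steer_large = transfer_direction(steer_small, W)
```

In this toy setting the target activations are exactly affine in the source activations plus small noise, so the fit recovers the map closely; real residual streams would only be approximately related in this way.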

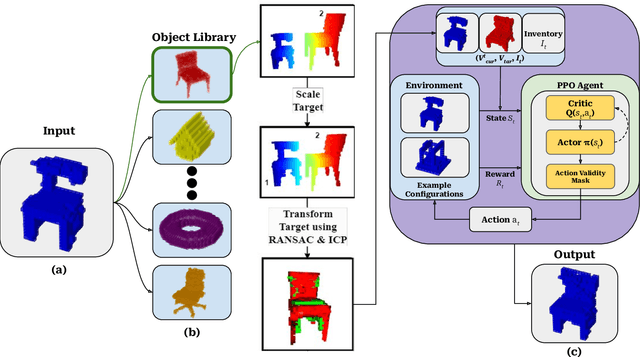

AssemblyComplete: 3D Combinatorial Construction with Deep Reinforcement Learning

Oct 20, 2024

Abstract:A critical goal in robotics and autonomy is to teach robots to adapt to real-world collaborative tasks, particularly in automatic assembly. The ability of a robot to understand the original intent of an incomplete assembly and complete missing features without human instruction is valuable but challenging. This paper introduces 3D combinatorial assembly completion, which is demonstrated using combinatorial unit primitives (i.e., Lego bricks). Combinatorial assembly is challenging due to the possible assembly combinations and complex physical constraints (e.g., no brick collisions, structure stability, inventory constraints, etc.). To address these challenges, we propose a two-part deep reinforcement learning (DRL) framework that tackles teaching the robot to understand the objective of an incomplete assembly and learning a construction policy to complete the assembly. The robot queries a stable object library to facilitate assembly inference and guide learning. In addition to the robot policy, an action mask is developed to rule out invalid actions that violate physical constraints for object-oriented construction. We demonstrate the proposed framework's feasibility and robustness in a variety of assembly scenarios in which the robot satisfies real-life assembly with respect to both solution and runtime quality. Furthermore, results demonstrate that the proposed framework effectively infers and assembles incomplete structures for unseen and unique object types.

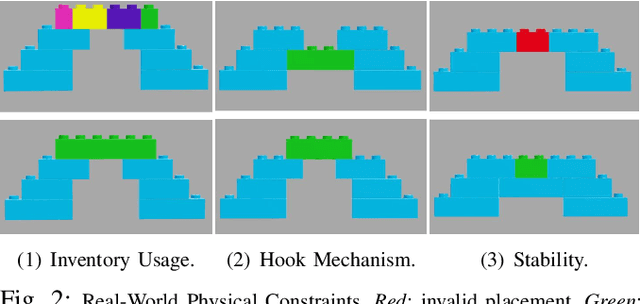

Physics-Aware Combinatorial Assembly Planning using Deep Reinforcement Learning

Aug 19, 2024

Abstract:Combinatorial assembly uses standardized unit primitives to build objects that satisfy user specifications. Lego is a widely used platform for combinatorial assembly, in which people use unit primitives (i.e., Lego bricks) to build highly customizable 3D objects. This paper studies sequence planning for physical combinatorial assembly using Lego. Given the shape of the desired object, we want to find a sequence of actions for placing Lego bricks to build the target object. In particular, we aim to ensure the planned assembly sequence is physically executable. However, assembly sequence planning (ASP) for combinatorial assembly is particularly challenging due to its combinatorial nature, i.e., the vast number of possible combinations and complex constraints. To address the challenges, we employ deep reinforcement learning to learn a construction policy for placing unit primitives sequentially to build the desired object. Specifically, we design an online physics-aware action mask that efficiently filters out invalid actions and guides policy learning. In the end, we demonstrate that the proposed method successfully plans physically valid assembly sequences for constructing different Lego structures. The generated construction plan can be executed in the real world.
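The general mechanism of an action mask can be illustrated with a small sketch. This is not the paper's physics-aware mask: it assumes a toy 2D side-view grid of unit bricks and a simplified validity rule (a cell must be empty and either on the ground or directly above an occupied cell). The functions `valid_action_mask` and `masked_policy` are hypothetical names.

```python
import numpy as np

def valid_action_mask(occupancy):
    """Return a flat boolean mask over grid cells.

    occupancy: bool array of shape (height, width); row 0 is the ground row.
    Toy rule (an assumption): a placement is valid if the cell is empty and
    supported, i.e. it is on the ground or the cell below is occupied.
    """
    supported = np.ones_like(occupancy)   # ground row is always supported
    supported[1:] = occupancy[:-1]        # upper rows need a brick below
    return (~occupancy & supported).ravel()

def masked_policy(logits, mask):
    """Softmax over logits with invalid actions forced to probability 0."""
    if not mask.any():
        raise ValueError("no valid action available")
    masked = np.where(mask, logits, -np.inf)
    masked = masked - masked.max()        # numerical stability
    probs = np.exp(masked)
    return probs / probs.sum()

# One brick already placed on the ground at column 1.
occupancy = np.zeros((3, 3), dtype=bool)
occupancy[0, 1] = True

mask = valid_action_mask(occupancy)
probs = masked_policy(np.zeros(occupancy.size), mask)
```

Masking the logits before the softmax means the policy never samples an invalid placement, so learning is spent only on choices among feasible actions.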

Simulation-aided Learning from Demonstration for Robotic LEGO Construction

Sep 24, 2023

Abstract:Recent advancements in manufacturing have created a growing demand for fast, automatic prototyping (i.e., assembly and disassembly) capabilities to meet users' needs. This paper studies automatic rapid LEGO prototyping, which is devoted to constructing target LEGO objects that satisfy individual customization needs and allow users to freely construct their novel designs. A construction plan is needed in order to automatically construct the user-specified LEGO design. However, a freely designed LEGO object might not have an existing construction plan, and generating such a LEGO construction plan requires a non-trivial effort since it requires accounting for numerous constraints (e.g., object shape, colors, stability, etc.). In addition, programming the prototyping skill for the robot requires the users to have expert programming skills, which puts the task beyond the reach of the general public. To address the challenges, this paper presents a simulation-aided learning from demonstration (SaLfD) framework for easily deploying LEGO prototyping capability to robots. In particular, the user demonstrates constructing the customized novel LEGO object. The robot extracts the task information by observing the human operation and generates the construction plan. A simulation is developed to verify the correctness of the learned construction plan and the resulting LEGO prototype. The proposed system is deployed to a FANUC LR-mate 200id/7L robot. Experiments demonstrate that the proposed SaLfD framework can effectively correct and learn the prototyping (i.e., assembly and disassembly) tasks from human demonstrations. The learned prototyping tasks are then realized by the FANUC robot.

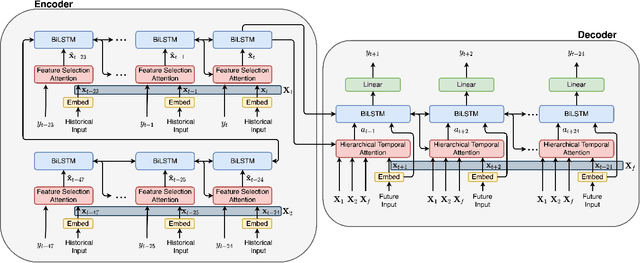

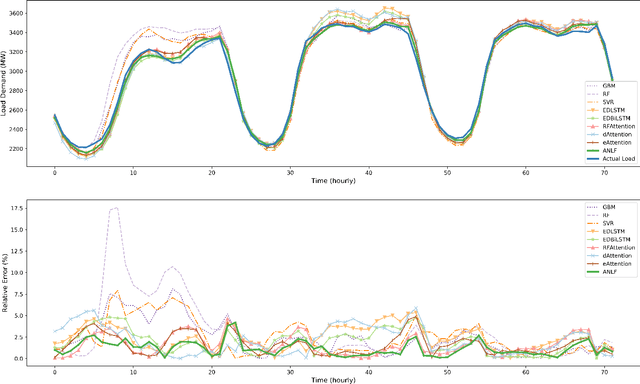

Attention-based Neural Load Forecasting: A Dynamic Feature Selection Approach

Aug 25, 2021

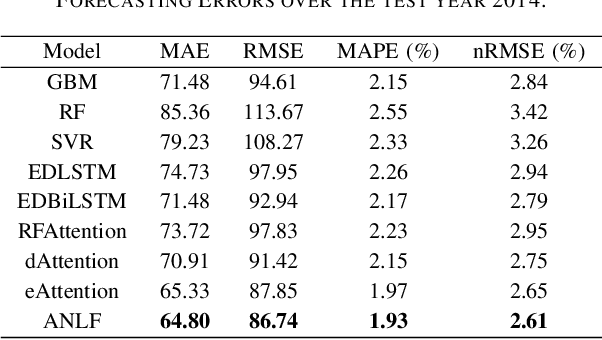

Abstract:Encoder-decoder-based recurrent neural networks (RNNs) have made significant progress in sequence-to-sequence learning tasks such as machine translation and conversational models. Recent works have shown the advantage of this type of network in dealing with various time series forecasting tasks. The present paper focuses on the problem of multi-horizon short-term load forecasting, which plays a key role in the power system's planning and operation. Leveraging the encoder-decoder RNN, we develop an attention model to select the relevant features and similar temporal information adaptively. First, input features are assigned different weights by a feature selection attention layer, while the updated historical features are encoded by a bi-directional long short-term memory (BiLSTM) layer. Then, a decoder with hierarchical temporal attention enables a similar day selection, which re-evaluates the importance of historical information at each time step. Numerical results tested on the dataset of the global energy forecasting competition 2014 show that our proposed model significantly outperforms some existing forecasting schemes.
