Aboudy Kreidieh

Failout: Achieving Failure-Resilient Inference in Distributed Neural Networks

Feb 18, 2020

Abstract: When a neural network is partitioned and distributed across physical nodes, failure of a physical node causes the failure of the neural units placed on that node, which results in a significant performance drop. Current approaches focus on the resiliency of training in distributed neural networks; the resiliency of inference is less explored. We introduce ResiliNet, a scheme for making inference in distributed neural networks resilient to physical node failures. ResiliNet combines two concepts to provide resiliency: skip connections, as used in residual neural networks, and a novel technique called failout, which is introduced in this paper. Failout simulates physical node failure conditions during training using dropout, and is specifically designed to improve the resiliency of distributed neural networks. The results of the experiments and ablation studies using three datasets confirm the ability of ResiliNet to provide inference resiliency for distributed neural networks.
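
The two ideas named in the abstract can be illustrated with a small sketch. This is not the authors' implementation, and the paper's exact formulation may differ: the function names `failout_mask` and `forward_chain`, the per-node failure probabilities, and the chain topology in which each node also receives the output of the node two hops back are all assumptions made for illustration.

```python
import random


def failout_mask(failure_probs, rng, training=True):
    """Return one 0/1 survival flag per physical node.

    Assumption: during training, each node is dropped as a whole with its
    own failure probability. Outside training, no failure is simulated.
    """
    if not training:
        return [1] * len(failure_probs)
    return [0 if rng.random() < p else 1 for p in failure_probs]


def forward_chain(x, node_fns, mask):
    """Run a chain of nodes where node i sees the outputs of nodes i-1 and i-2.

    A failed node contributes a zero vector, so the skip connection from
    two hops back is what carries information past it.
    """
    zero = [0.0] * len(x)
    prev2, prev1 = zero, x
    for fn, alive in zip(node_fns, mask):
        combined = [a + b for a, b in zip(prev1, prev2)]
        out = fn(combined) if alive else zero
        prev2, prev1 = prev1, out
    return prev1


def double(v):
    """Toy stand-in for the layers hosted on one physical node."""
    return [2.0 * t for t in v]


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical failure probabilities; the last node is assumed reliable.
    mask = failout_mask([0.1, 0.3, 0.0], rng)
    print(mask, forward_chain([1.0], [double, double, double], mask))
```

In this toy chain, masking out the middle node still produces a non-zero output at the last node, because the first node's output reaches it through the skip path. Sampling a fresh mask on every training step is what would expose the downstream nodes to such failure patterns before deployment.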

Guardians of the Deep Fog: Failure-Resilient DNN Inference from Edge to Cloud

Sep 21, 2019

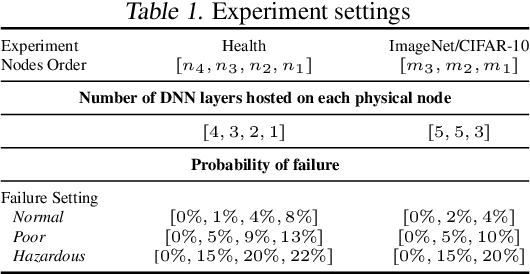

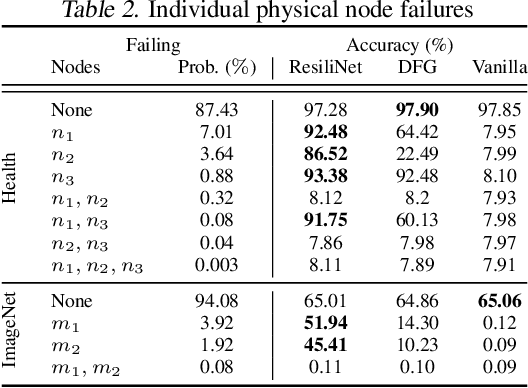

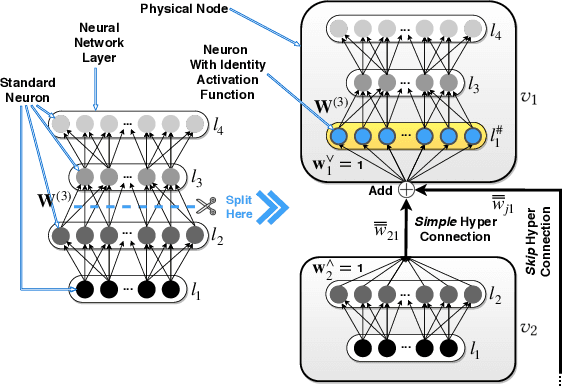

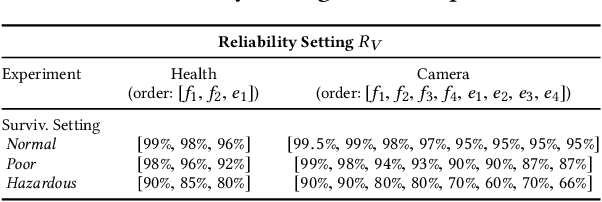

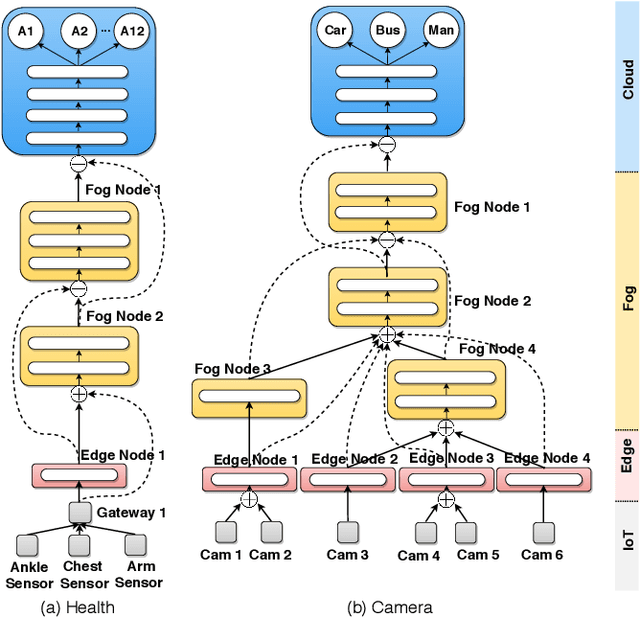

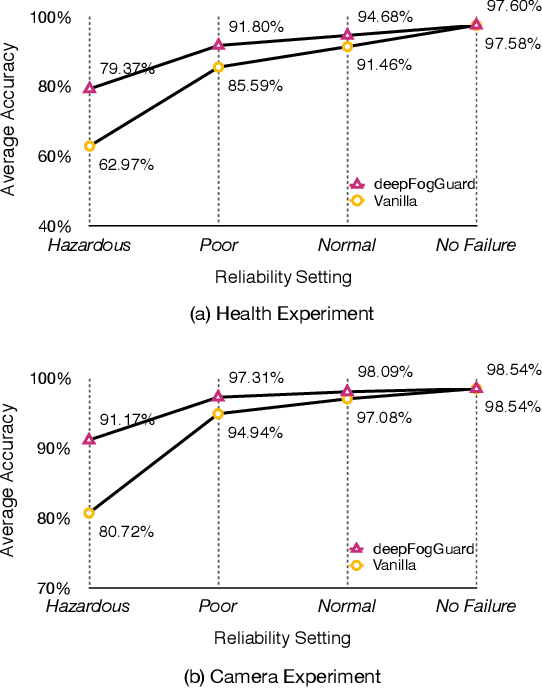

Abstract: Partitioning and distributing deep neural networks (DNNs) over physical nodes such as edge, fog, or cloud nodes could enhance sensor fusion and reduce bandwidth and inference latency. However, when a DNN is distributed over physical nodes, failure of the physical nodes causes the failure of the DNN units that are placed on these nodes. The performance of the inference task will be unpredictable, and most likely poor, if the distributed DNN is not specifically designed and properly trained for failures. Motivated by this, we introduce deepFogGuard, a DNN architecture augmentation scheme for making the distributed DNN inference task failure-resilient. To articulate deepFogGuard, we introduce the elements and a model for the resiliency of distributed DNN inference. Inspired by the concept of residual connections in DNNs, we introduce skip hyperconnections in distributed DNNs, which are the basis of deepFogGuard's design to provide resiliency. Our extensive experiments using two existing datasets for sensing and vision applications confirm the ability of deepFogGuard to provide resiliency for distributed DNNs in edge-cloud networks.

Flow: Architecture and Benchmarking for Reinforcement Learning in Traffic Control

Oct 16, 2017

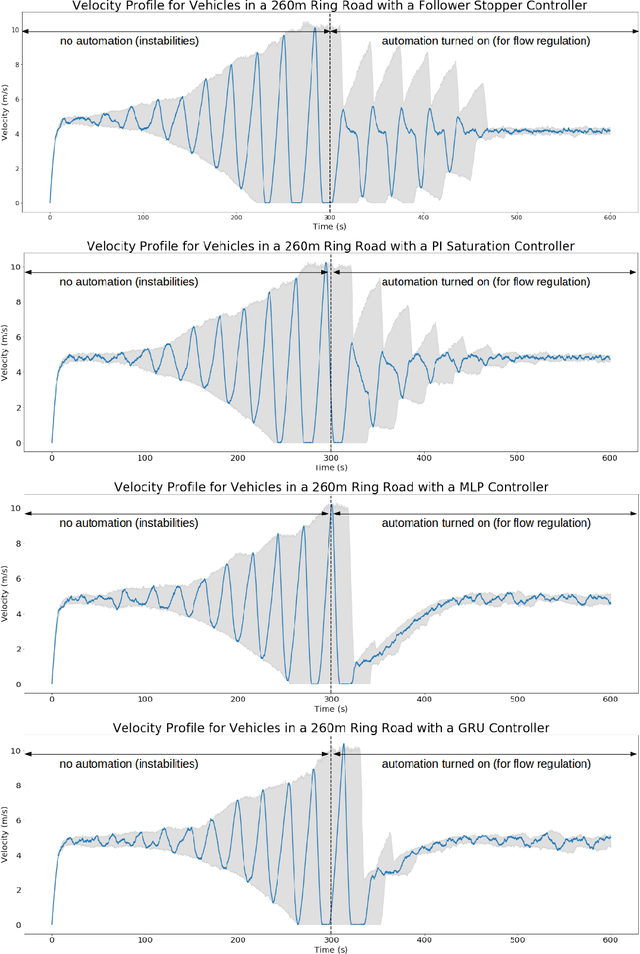

Abstract: Flow is a new computational framework, built to support a key need triggered by the rapid growth of autonomy in ground traffic: controllers for autonomous vehicles in the presence of complex nonlinear dynamics in traffic. Leveraging recent advances in deep Reinforcement Learning (RL), Flow enables the use of RL methods such as policy gradient for traffic control and enables benchmarking the performance of classical (including hand-designed) controllers against learned policies (control laws). Flow integrates the traffic microsimulator SUMO with the deep reinforcement learning library rllab and enables the easy design of traffic tasks, including different network configurations and vehicle dynamics. We use Flow to develop reliable controllers for complex problems, such as controlling mixed-autonomy traffic (involving both autonomous and human-driven vehicles) on a ring road. For this, we first show that state-of-the-art hand-designed controllers excel in-distribution but fail to generalize; we then show that even simple neural network policies can solve the stabilization task across density settings and generalize to out-of-distribution settings.
