Zonghua Gu

Real-time Stereo-based 3D Object Detection for Streaming Perception

Oct 16, 2024

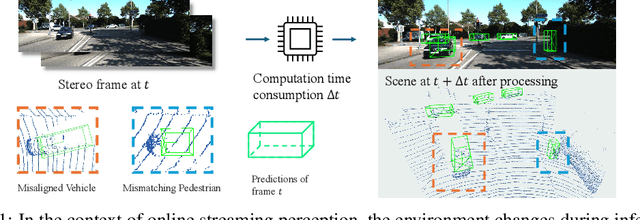

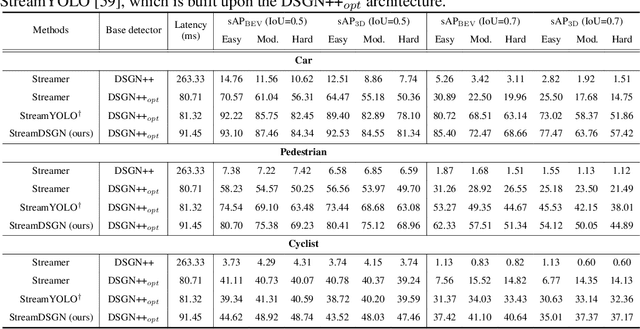

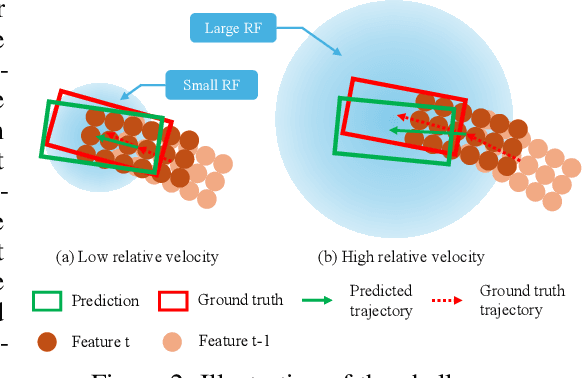

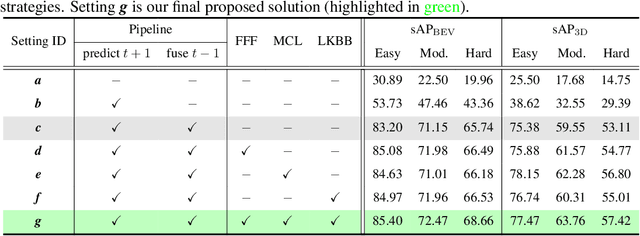

Abstract: The ability to respond promptly to environmental changes is crucial for the perception system of an autonomous vehicle. Recently, a new task called streaming perception was proposed, which jointly evaluates latency and accuracy in a single metric for online video perception. In this work, we introduce StreamDSGN, the first real-time stereo-based 3D object detection framework designed for streaming perception. StreamDSGN is an end-to-end framework that directly predicts the 3D properties of objects at the next moment by leveraging historical information, thereby alleviating the accuracy degradation of streaming perception. Further, StreamDSGN applies three strategies to enhance perception accuracy: (1) a feature-flow-based fusion method, which generates a pseudo-next feature at the current moment to address the misalignment between features and ground truth; (2) an extra regression loss for explicit supervision of object motion consistency across consecutive frames; (3) a large-kernel backbone with a large receptive field for effectively capturing the long-range spatial context needed when object positions change. Experiments on the KITTI Tracking dataset show that, compared with a strong baseline, StreamDSGN improves the streaming average precision by up to 4.33%. Our code is available at https://github.com/weiyangdaren/streamDSGN-pytorch.

Efficient Spiking Neural Networks with Logarithmic Temporal Coding

Nov 10, 2018

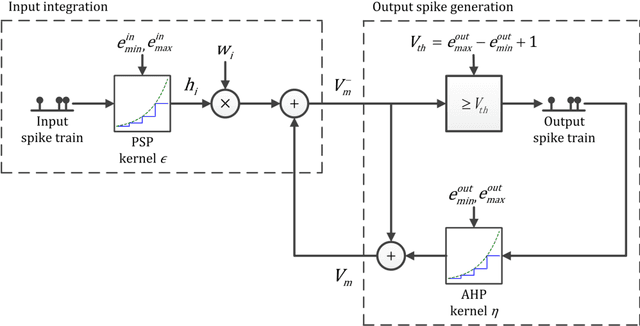

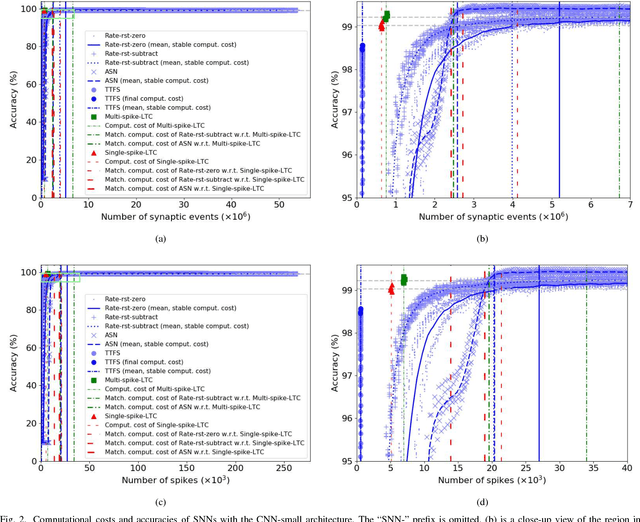

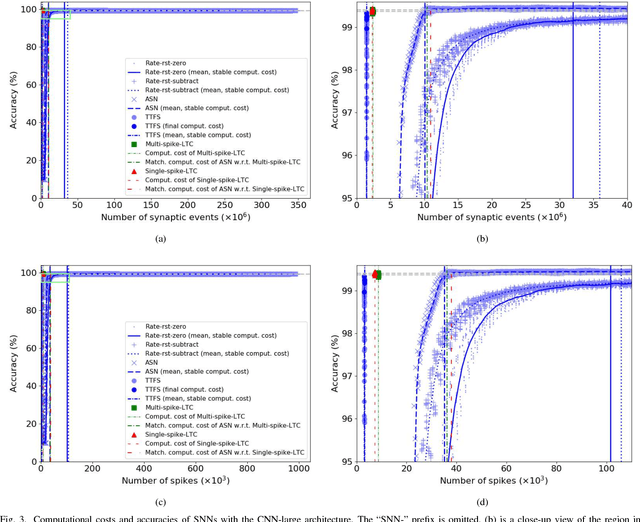

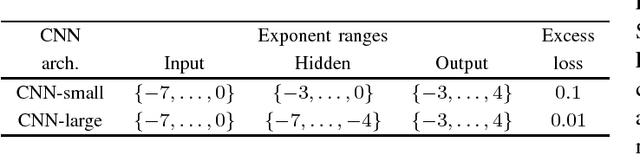

Abstract: A Spiking Neural Network (SNN) can be trained indirectly by first training an Artificial Neural Network (ANN) with the conventional backpropagation algorithm, then converting it into an SNN. The conventional rate-coding method for SNNs uses the number of spikes to encode the magnitude of an activation value, and may be computationally inefficient due to the large number of spikes. Temporal coding is typically more efficient because it uses the timing of spikes to encode information. In this paper, we present Logarithmic Temporal Coding (LTC), where the number of spikes used to encode an activation value grows logarithmically with the activation value, and the accompanying Exponentiate-and-Fire (EF) spiking neuron model, which involves only efficient bit-shift and addition operations. Moreover, we improve the training process of the ANN to compensate for approximation errors due to LTC. Experimental results indicate that the resulting SNN achieves competitive performance at significantly lower computational cost than related work.
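
To make the logarithmic spike count concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the paper's implementation: it assumes a non-negative integer activation is encoded as one spike per set bit of its binary representation (most significant bit first), and it assumes a neuron can accumulate weighted inputs by doubling its potential each time step and adding the weights of inputs that spike. The names `ltc_encode`, `ltc_decode`, and `shift_and_add_accumulate` are hypothetical.

```python
def ltc_encode(value, num_bits=8):
    """Return the time steps at which spikes fire (step 0 = most significant bit).

    Assumption: one spike per set bit, so at most num_bits spikes are needed
    for any value below 2**num_bits.
    """
    if not 0 <= value < (1 << num_bits):
        raise ValueError("value does not fit in num_bits")
    return [t for t in range(num_bits) if (value >> (num_bits - 1 - t)) & 1]


def ltc_decode(spike_times, num_bits=8):
    """Recover the integer value from its spike times."""
    return sum(1 << (num_bits - 1 - t) for t in spike_times)


def shift_and_add_accumulate(spike_trains, weights, num_bits=8):
    """Compute sum(w_i * a_i) using only shifts and additions.

    Assumption: the potential is doubled (shifted left) at every time step,
    then the weight of each input that spikes at that step is added.
    """
    potential = 0
    for t in range(num_bits):
        potential <<= 1  # doubling via bit shift
        for spikes, weight in zip(spike_trains, weights):
            if t in spikes:
                potential += weight
    return potential


# Usage: two inputs with activations 5 and 3 and integer weights 2 and -1.
trains = [ltc_encode(5, num_bits=4), ltc_encode(3, num_bits=4)]
result = shift_and_add_accumulate(trains, [2, -1], num_bits=4)
```

Under these assumptions, a rate code would need up to 15 spikes for a 4-bit value, while this encoding needs at most 4.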

Two-Bit Networks for Deep Learning on Resource-Constrained Embedded Devices

Jan 04, 2017

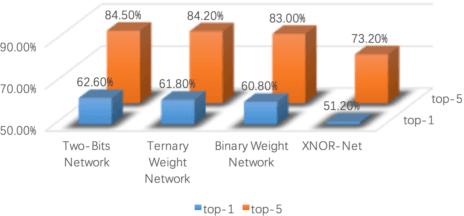

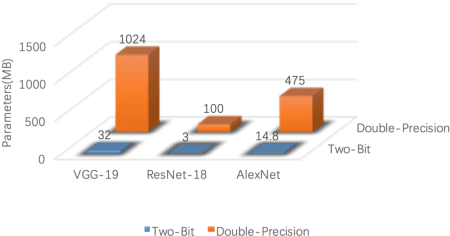

Abstract: With the rapid proliferation of Internet of Things and intelligent edge devices, there is an increasing need to implement machine learning algorithms, including deep learning, on resource-constrained mobile embedded devices with limited memory and computation power. Typical large Convolutional Neural Networks (CNNs) need large amounts of memory and computational power, and cannot be deployed on embedded devices efficiently. We present Two-Bit Networks (TBNs) for model compression of CNNs, with edge weights constrained to {-2, -1, 1, 2}, which can be encoded with two bits. Our approach can reduce memory usage and improve computational efficiency significantly while achieving good classification accuracy, thus representing a reasonable tradeoff between model size and performance.
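
The two-bit encoding of the four weight values can be sketched as follows. This is a hedged illustration only: it assumes already-trained real-valued weights are snapped to the nearest of the four levels and packed four to a byte, which is not necessarily how TBNs are trained. The names `LEVELS`, `quantize`, `pack`, and `unpack` are hypothetical.

```python
LEVELS = (-2, -1, 1, 2)  # the four permitted weight values; index = 2-bit code


def quantize(weight):
    """Snap a real-valued weight to the nearest permitted level.

    Assumption: nearest-value projection, used here only for illustration.
    """
    return min(LEVELS, key=lambda level: abs(weight - level))


def pack(weights):
    """Quantize weights and pack their 2-bit codes, four codes per byte."""
    codes = [LEVELS.index(quantize(w)) for w in weights]
    packed = bytearray()
    for start in range(0, len(codes), 4):
        byte = 0
        for slot, code in enumerate(codes[start:start + 4]):
            byte |= code << (2 * slot)  # slot 0 occupies the lowest two bits
        packed.append(byte)
    return bytes(packed)


def unpack(packed, count):
    """Recover the first `count` quantized weights from packed bytes."""
    return [LEVELS[(packed[i // 4] >> (2 * (i % 4))) & 0b11] for i in range(count)]


# Usage: five weights fit in two bytes instead of five 32-bit floats.
weights = [0.4, -1.7, 2.3, -0.6, 1.4]
packed = pack(weights)
restored = unpack(packed, len(weights))
```

Compared with 32-bit floating-point storage, two bits per weight is a 16x reduction in weight memory, before any per-layer metadata is counted.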
