Zhiguang Feng

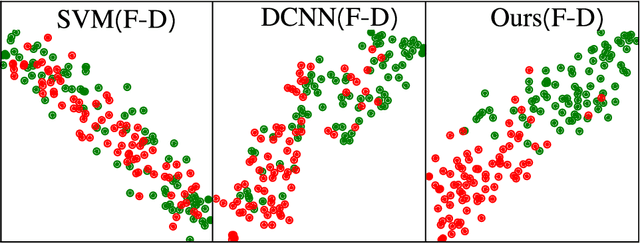

Morphological feature visualization of Alzheimer's disease via Multidirectional Perception GAN

Nov 25, 2021

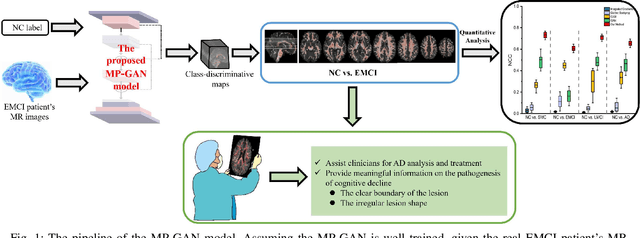

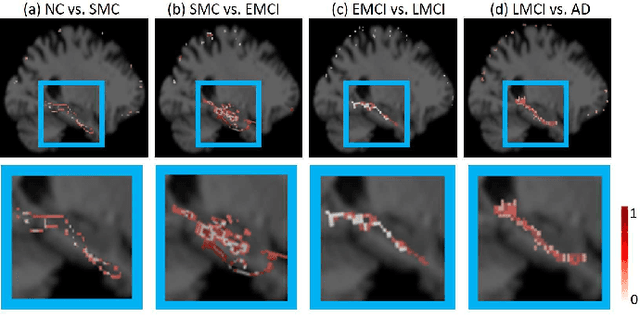

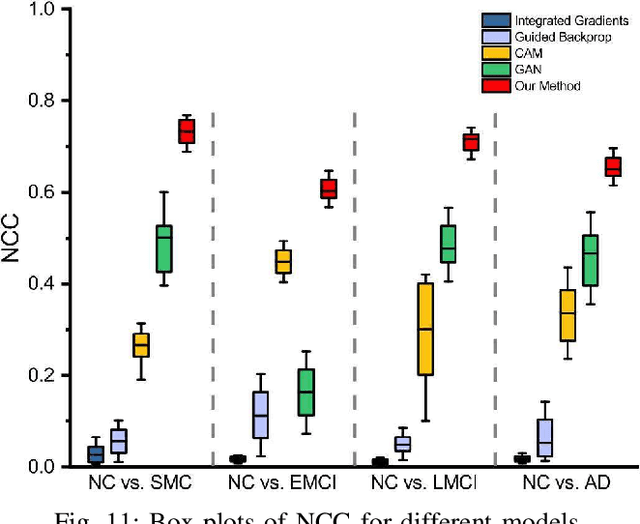

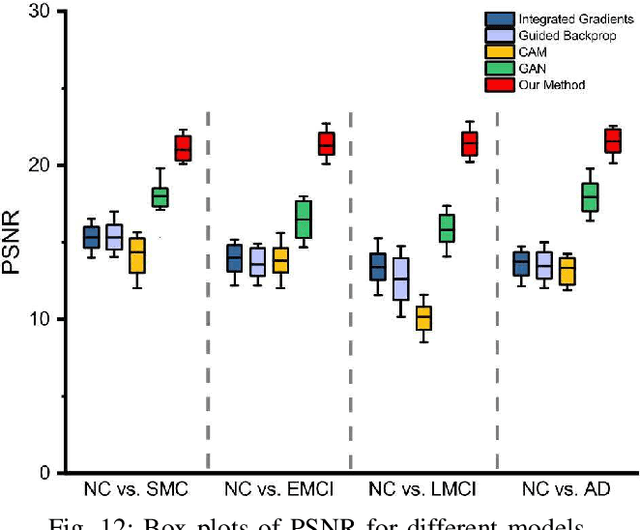

Abstract: The diagnosis of early stages of Alzheimer's disease (AD) is essential for timely treatment to slow further deterioration. Visualizing the morphological features of the early stages of AD is of great clinical value. In this work, a novel Multidirectional Perception Generative Adversarial Network (MP-GAN) is proposed to visualize the morphological features indicating the severity of AD for patients at different stages. Specifically, by introducing a novel multidirectional mapping mechanism into the model, the proposed MP-GAN can capture salient global features efficiently. Thus, by utilizing the class-discriminative map from the generator, the proposed model can clearly delineate subtle lesions via MR image transformations between the source domain and the pre-defined target domain. In addition, by integrating the adversarial loss, classification loss, cycle consistency loss and L1 penalty, a single generator in MP-GAN can learn the class-discriminative maps for multiple classes. Extensive experimental results on the Alzheimer's Disease Neuroimaging Initiative (ADNI) dataset demonstrate that MP-GAN achieves superior performance compared with existing methods. The lesions visualized by MP-GAN are also consistent with what clinicians observe.
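
The abstract names four loss terms but not how they are weighted or computed. As a rough, hypothetical illustration only, the sketch below combines them into one generator objective in plain Python. It assumes that the adversarial and classification losses are already computed as scalars, that images are flattened to lists, and that the class-discriminative map is added to the source image to form the target image. The function names and the `lambda_*` weights are illustrative assumptions, not values or code from the paper.

```python
def l1_mean(a, b):
    """Mean absolute difference between two equal-length flattened images."""
    if len(a) != len(b):
        raise ValueError("images must have the same number of voxels")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def generator_objective(adv_loss, cls_loss, source, reconstructed, cd_map,
                        *, lambda_cls=1.0, lambda_cyc=10.0, lambda_l1=0.1):
    """Hypothetical MP-GAN-style generator objective:
    adversarial + classification + cycle consistency + L1 penalty.

    adv_loss, cls_loss: scalar losses assumed to be computed elsewhere
                        (e.g. by a discriminator with a classification head).
    source:             flattened source-domain MR image x.
    reconstructed:      x after translation to the target domain and back.
    cd_map:             flattened class-discriminative map M from the generator
                        (assumed additive: target image = x + M).
    lambda_*:           illustrative weights, not values reported in the paper.
    """
    cycle = l1_mean(source, reconstructed)
    # L1 penalty on the map: encourages sparse changes, so that only
    # lesion-related voxels are modified during the domain transformation.
    sparsity = sum(abs(m) for m in cd_map) / len(cd_map)
    return adv_loss + lambda_cls * cls_loss + lambda_cyc * cycle + lambda_l1 * sparsity


# Toy usage with two-voxel "images".
total = generator_objective(0.7, 0.2, [0.0, 1.0], [0.1, 0.9], [0.0, 0.5])
print(round(total, 3))
```

Weighting the cycle term most heavily mirrors common CycleGAN/StarGAN-style settings. The abstract does not say whether MP-GAN uses similar weights.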

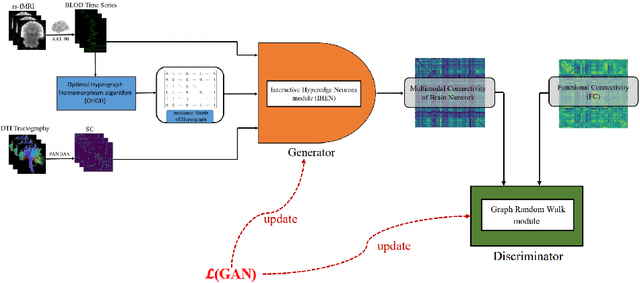

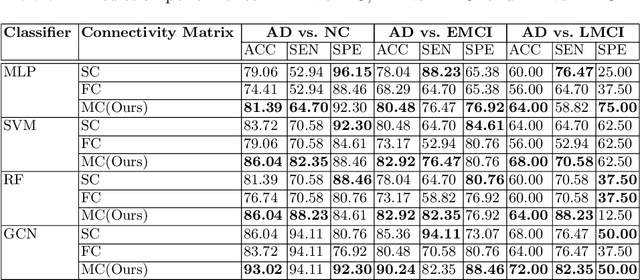

Characterization Multimodal Connectivity of Brain Network by Hypergraph GAN for Alzheimer's Disease Analysis

Jul 21, 2021

Abstract: Using multimodal neuroimaging data to characterize brain networks is currently an advanced technique for Alzheimer's disease (AD) analysis. In recent years, the neuroimaging community has made tremendous progress in the study of resting-state functional magnetic resonance imaging (rs-fMRI), derived from blood-oxygen-level-dependent (BOLD) signals, and Diffusion Tensor Imaging (DTI), derived from white matter fiber tractography. However, due to the heterogeneity and complexity between BOLD signals and fiber tractography, most existing multimodal data fusion algorithms cannot sufficiently take advantage of the complementary information between rs-fMRI and DTI. To overcome this problem, this paper proposes a novel Hypergraph Generative Adversarial Network (HGGAN), which utilizes an Interactive Hyperedge Neurons module (IHEN) and an Optimal Hypergraph Homomorphism algorithm (OHGH) to generate the multimodal connectivity of brain networks from rs-fMRI combined with DTI. To evaluate the performance of this model, we use publicly available data from the ADNI database to demonstrate that the proposed model can not only identify discriminative brain regions of AD but also effectively improve classification performance.

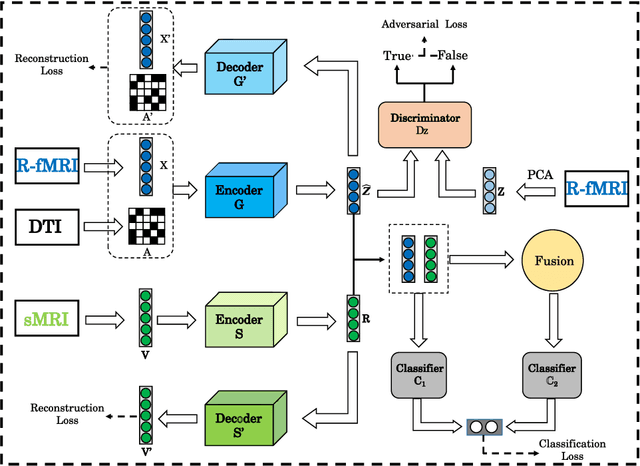

Multimodal Representations Learning and Adversarial Hypergraph Fusion for Early Alzheimer's Disease Prediction

Jul 21, 2021

Abstract: Multimodal neuroimaging can provide complementary information about dementia, but the small size of complete multimodal datasets limits representation learning. Moreover, inconsistent data distributions across modalities may lead to ineffective fusion, which fails to sufficiently explore intra-modal and inter-modal interactions and compromises diagnostic performance. To solve these problems, we propose a novel multimodal representation learning and adversarial hypergraph fusion (MRL-AHF) framework for Alzheimer's disease diagnosis using complete trimodal images. First, an adversarial strategy and a pre-trained model are incorporated into the MRL to extract latent representations from multimodal data. Then, two hypergraphs are constructed from the latent representations, and an adversarial network based on graph convolution is employed to narrow the distribution difference of hyperedge features. Finally, the hyperedge-invariant features are fused for disease prediction by hyperedge convolution. Experiments on the public Alzheimer's Disease Neuroimaging Initiative (ADNI) database demonstrate that our model achieves superior performance in Alzheimer's disease detection compared with other related models and provides a possible way to understand the underlying mechanisms of disease progression by analyzing abnormal brain connections.
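
The abstract does not specify how the hypergraphs are built or how hyperedge convolution is parameterized. As an illustration only, the sketch below assumes a common k-nearest-neighbour hyperedge construction (one hyperedge per vertex, containing the vertex and its k nearest neighbours in latent space). It then applies the widely used HGNN-style propagation rule X' = Dv^-1/2 H W De^-1 H^T Dv^-1/2 X with unit hyperedge weights W, omitting the learnable projection and nonlinearity. The function names, `k`, and the toy data are hypothetical. This is not the authors' MRL-AHF implementation.

```python
import math


def knn_hyperedges(features, k):
    """One hyperedge per vertex: the vertex itself plus its k nearest neighbours
    (Euclidean). Returns the incidence matrix H with H[v][e] = 1 if vertex v
    belongs to hyperedge e."""
    n = len(features)
    H = [[0] * n for _ in range(n)]
    for e, centre in enumerate(features):
        H[e][e] = 1  # every hyperedge contains its centre vertex
        others = sorted((v for v in range(n) if v != e),
                        key=lambda v: math.dist(centre, features[v]))
        for v in others[:k]:
            H[v][e] = 1
    return H


def hyperedge_conv(X, H):
    """One propagation step X' = Dv^-1/2 H De^-1 H^T Dv^-1/2 X with unit hyperedge
    weights. The learnable projection and nonlinearity of a full layer are omitted.
    Assumes every vertex and hyperedge has nonzero degree (true for knn_hyperedges)."""
    n_v, n_e, d = len(H), len(H[0]), len(X[0])
    dv = [sum(H[v]) for v in range(n_v)]                          # vertex degrees
    de = [sum(H[v][e] for v in range(n_v)) for e in range(n_e)]  # hyperedge degrees
    # Vertex -> hyperedge: average the Dv^-1/2-scaled features of member vertices.
    E = [[sum(H[v][e] * X[v][j] / math.sqrt(dv[v]) for v in range(n_v)) / de[e]
          for j in range(d)] for e in range(n_e)]
    # Hyperedge -> vertex: sum incident hyperedge features, then scale by Dv^-1/2.
    return [[sum(H[v][e] * E[e][j] for e in range(n_e)) / math.sqrt(dv[v])
             for j in range(d)] for v in range(n_v)]


# Toy usage: four 1-D "latent representations" forming two clear clusters.
latent = [[0.0], [0.1], [5.0], [5.2]]
H = knn_hyperedges(latent, k=1)
fused = hyperedge_conv([[1.0], [3.0], [10.0], [20.0]], H)
print(fused)
```

In this toy run, each vertex's output becomes the average of its cluster (2.0 for the first two vertices, 15.0 for the last two). This shows the smoothing that hyperedge convolution applies across vertices that share hyperedges.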

Bidirectional Mapping Generative Adversarial Networks for Brain MR to PET Synthesis

Aug 08, 2020

Abstract: Fusing multi-modality medical images, such as MR and PET, can provide various anatomical or functional information about the human body. However, PET data are often unavailable for reasons such as cost, radiation exposure, or other limitations. In this paper, we propose a 3D end-to-end synthesis network, called Bidirectional Mapping Generative Adversarial Networks (BMGAN), in which image contexts and a latent vector are effectively used and jointly optimized for brain MR-to-PET synthesis. Concretely, a bidirectional mapping mechanism is designed to embed the semantic information of PET images into a high-dimensional latent space. A 3D DenseU-Net generator architecture and extensive objective functions are further utilized to improve the visual quality of the synthetic results. Notably, the proposed method can synthesize perceptually realistic PET images while preserving the diverse brain structures of different subjects. Experimental results demonstrate that the proposed method outperforms other competitive cross-modality synthesis methods in terms of quantitative measures, qualitative displays, and classification evaluation.