Zhenjie Zhao

6-DoF Robotic Grasping with Transformer

Jan 29, 2023

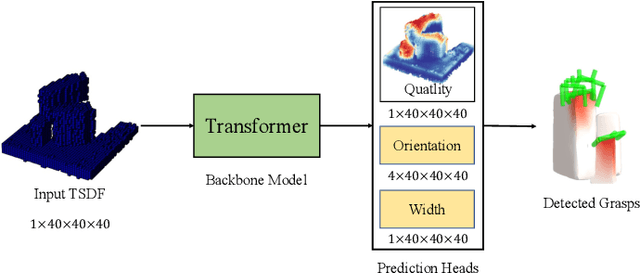

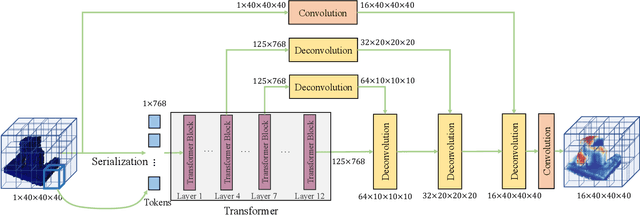

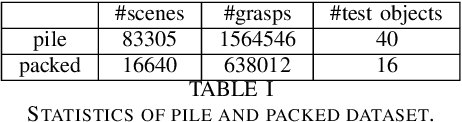

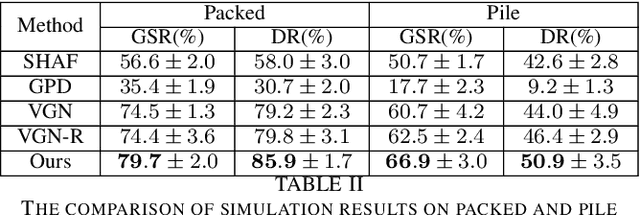

Abstract: Robotic grasping aims to detect graspable points and their corresponding gripper configurations in a particular scene, and is fundamental for robot manipulation. Existing work has demonstrated the potential of transformer models for robotic grasping, since they can efficiently learn both global and local features. However, such methods are still limited to grasp detection on a 2D plane. In this paper, we extend a transformer model to 6-Degree-of-Freedom (6-DoF) robotic grasping, which makes it more flexible and better suited to tasks where safety is a concern. The key designs of our method are a serialization module, which turns a 3D voxelized space into a sequence of feature tokens that a transformer model can consume, and skip-connections, which merge multiscale features effectively. Specifically, our method takes a Truncated Signed Distance Function (TSDF) as input. After the TSDF is serialized, a transformer model encodes the sequence and obtains a set of aggregated hidden feature vectors through multi-head attention. We then decode the hidden features into per-voxel feature vectors through deconvolution and skip-connections. The voxel feature vectors are then used to regress the parameters for executing grasping actions. On a recently proposed pile and packed grasping dataset, we show that our transformer-based method surpasses existing methods by about 5% in terms of both success rate and declutter rate. We further evaluate running time and generalization ability to demonstrate the advantages of the proposed method.
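The serialization step described above can be pictured with a ViT-style patch split. The sketch below is an illustration under stated assumptions, not the paper's implementation: it assumes the TSDF is a cubic NumPy array cut into non-overlapping cubic patches, each flattened into one token, and then runs a single-head scaled dot-product self-attention over those tokens (the paper uses multi-head attention and may apply convolutions before serializing). The function names (`serialize_tsdf`, `deserialize_tokens`, `self_attention`), the 40³ grid, the patch size of 8, and the random projection weights are all hypothetical.

```python
import numpy as np


def serialize_tsdf(tsdf: np.ndarray, patch: int) -> np.ndarray:
    """Cut a cubic (D, D, D) TSDF into non-overlapping patch**3 blocks and
    flatten each block into one token of length patch**3 (assumed scheme).

    Tokens are ordered by block index (x, y, z) with z varying fastest.
    """
    d = tsdf.shape[0]
    if tsdf.shape != (d, d, d) or d % patch != 0:
        raise ValueError("expects a cubic volume whose side is divisible by patch")
    n = d // patch  # blocks per axis
    blocks = tsdf.reshape(n, patch, n, patch, n, patch)  # (nx, px, ny, py, nz, pz)
    blocks = blocks.transpose(0, 2, 4, 1, 3, 5)          # (nx, ny, nz, px, py, pz)
    return blocks.reshape(n ** 3, patch ** 3)


def deserialize_tokens(tokens: np.ndarray, patch: int) -> np.ndarray:
    """Inverse of serialize_tsdf: reassemble (n**3, patch**3) tokens into a volume."""
    n = round(tokens.shape[0] ** (1 / 3))
    if n ** 3 != tokens.shape[0] or tokens.shape[1] != patch ** 3:
        raise ValueError("token array does not describe a cubic grid of blocks")
    blocks = tokens.reshape(n, n, n, patch, patch, patch)
    blocks = blocks.transpose(0, 3, 1, 4, 2, 5)          # back to (nx, px, ny, py, nz, pz)
    return blocks.reshape(n * patch, n * patch, n * patch)


def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ v, weights


# Usage with an illustrative 40^3 grid and 8^3 patches -> 125 tokens of length 512.
rng = np.random.default_rng(0)
tsdf = rng.uniform(-1.0, 1.0, size=(40, 40, 40))
tokens = serialize_tsdf(tsdf, patch=8)
d_model = 64
wq, wk, wv = (rng.normal(scale=0.05, size=(512, d_model)) for _ in range(3))
hidden, attn = self_attention(tokens, wq, wk, wv)
print(tokens.shape, hidden.shape)  # (125, 512) (125, 64)
```

Because `deserialize_tokens` inverts `serialize_tsdf` exactly, the same index bookkeeping is one way a decoder could map token-level features back to voxel positions before upsampling and merging skip-connection features.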

Educational Question Generation of Children Storybooks via Question Type Distribution Learning and Event-Centric Summarization

Mar 27, 2022

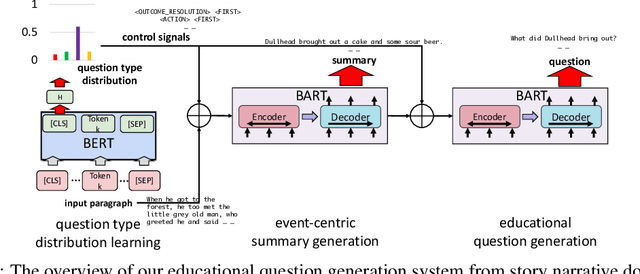

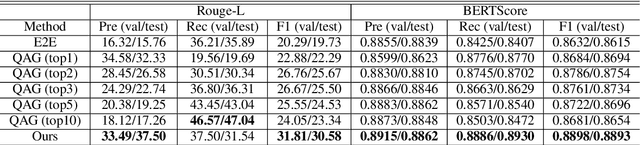

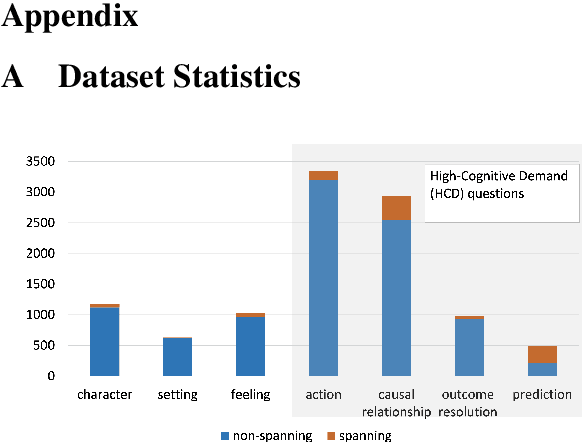

Abstract: Generating educational questions about fairytales or storybooks is vital for improving children's literacy. However, it is challenging to generate questions that capture the interesting aspects of a fairytale story while remaining educationally meaningful. In this paper, we propose a novel question generation method that first learns the question type distribution of an input story paragraph, and then summarizes salient events that can be used to generate high-cognitive-demand questions. To train the event-centric summarizer, we fine-tune a pre-trained transformer-based sequence-to-sequence model using silver samples composed of educational question-answer pairs. On FairytaleQA, a newly proposed educational question answering dataset, we show that our method performs well on both automatic and human evaluation metrics. Our work indicates the necessity of decomposing educational question generation into question type distribution learning and event-centric summary generation.
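The abstract names the first stage, learning a paragraph's question type distribution, without giving details, so the sketch below is only an assumption-laden illustration of that idea. It turns a paragraph's annotated questions into an empirical type distribution, scores a predicted distribution against it with KL divergence (a common choice, not necessarily the paper's loss), and converts a distribution into per-type question counts with the largest-remainder method. The type labels and the helper names (`type_distribution`, `kl_divergence`, `allocate_questions`) are hypothetical.

```python
import math
from collections import Counter

# Illustrative label set (assumption): swap in whatever inventory the data uses.
QUESTION_TYPES = ["character", "setting", "action", "feeling",
                  "causal relationship", "outcome resolution", "prediction"]


def type_distribution(labels, types=QUESTION_TYPES):
    """Empirical distribution over question types for one paragraph."""
    counts = Counter(labels)
    total = sum(counts[t] for t in types)
    if total == 0:
        raise ValueError("no labelled questions of a known type")
    return [counts[t] / total for t in types]


def kl_divergence(p, q):
    """KL(p || q), one possible training target for a predicted distribution q."""
    kl = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue  # 0 * log(0 / q) is taken as 0
        if qi == 0:
            return math.inf
        kl += pi * math.log(pi / qi)
    return kl


def allocate_questions(dist, total):
    """Turn a distribution into integer per-type question counts that sum to
    `total`, using the largest-remainder method."""
    quotas = [p * total for p in dist]
    counts = [math.floor(x) for x in quotas]
    leftover = total - sum(counts)
    # Give the remaining questions to the types with the largest fractional parts.
    order = sorted(range(len(dist)), key=lambda i: quotas[i] - counts[i], reverse=True)
    for i in order[:leftover]:
        counts[i] += 1
    return counts


# Usage: hypothetical annotations for one paragraph and a hypothetical model output.
labels = ["action", "action", "causal relationship", "feeling"]
target = type_distribution(labels)
predicted = [0.05, 0.05, 0.4, 0.2, 0.2, 0.05, 0.05]
loss = kl_divergence(target, predicted)
plan = allocate_questions(predicted, 4)
print(plan)  # [0, 0, 2, 1, 1, 0, 0]
```

Keeping the distribution and the integer allocation separate mirrors the decomposition the abstract argues for: the type plan is decided first, and only then would a generator (not shown) be asked for questions of each type.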
